Microsoft’s Copilot Studio now offers xAI’s Grok 4.1 Fast in preview, opening a new lane in the multi‑model ecosystem for enterprise agent builders. The addition—available today in early access environments for United States‑based makers and off by default until an organization administrator opts in—promises a low‑latency, tool‑oriented text model optimized for very large context windows and deep tool integrations. At the same time, Microsoft and xAI emphasize that Grok runs outside Microsoft‑managed hosting and that customer prompts and responses are not retained or used to train xAI’s models, shifting responsibilities for data protection and contractual terms to xAI’s enterprise agreements. This article unpacks what the integration actually changes for Copilot Studio users, verifies the technical claims that matter to IT teams, highlights the operational benefits, and lays out the security, compliance, and governance questions every administrator should answer before enabling Grok inside their tenant.

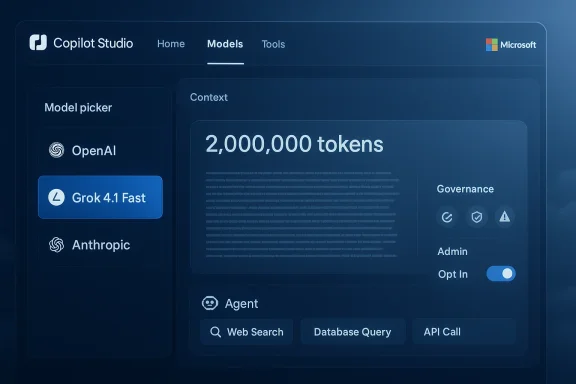

Background / Overview

Microsoft’s Copilot Studio is positioned as an orchestration and agent‑design surface where enterprise makers can compose prompts, attach tools, test interactions, and deploy agents that connect to internal data sources. Over the past year Microsoft has shifted from a single‑provider model toward a deliberate multi‑model approach, adding Anthropic’s Claude models and broader OpenAI model families, so organizations can choose the model that best fits particular workflows, from creative drafting to deep reasoning.

xAI’s Grok lineage entered the enterprise conversation quickly: the Grok 4 family was added to Microsoft’s Azure AI Foundry, and xAI followed with Grok 4.1 variants that include a Fast mode tuned for low latency, agentic tool use, and very large context windows. Microsoft’s Copilot Studio announcement makes one of these variants, Grok 4.1 Fast, available in preview as an additional model option for makers located in the United States, subject to explicit admin opt‑in. The model is text‑generation only (it does not support image or other media generation in this integration) and is presented as complementary to other models in the Copilot Studio model picker.

What Microsoft and xAI are shipping (the essentials)

- Availability model: Grok 4.1 Fast is rolled into Copilot Studio as a preview option in early access environments and is off by default. Organization administrators must opt in before makers can select it. Existing agents remain unchanged unless an admin enables the model and a maker deliberately switches the agent’s model.

- Hosting and contracts: xAI’s models are hosted outside Microsoft‑managed environments. Using Grok through Copilot Studio creates an independent relationship between the customer and xAI that is governed by xAI’s Enterprise Terms of Service and Data Protection Addendum rather than Microsoft’s managed data controls.

- Data use statement: Microsoft’s messaging for the integration explicitly states that when Grok 4.1 Fast is used inside Copilot Studio, customer data is not retained or used to train xAI’s models. That statement aligns with the enterprise assurances customers now expect from large model vendors, but it also means the contractual promise comes from xAI, not Microsoft.

- Model focus and limitations: Grok 4.1 Fast is built for fast reasoning and text generation, with capabilities focused on long context processing and accurate tool calling. The integration does not enable image generation or multimodal outputs through Copilot Studio with this model.

- Regional rollout: Initial availability is limited (U.S. makers in early access). Microsoft states readiness evaluations are underway for other regions, which means global availability may lag and regional privacy boundaries could complicate adoption timelines.

Technical profile: what Grok 4.1 Fast brings to the table

To judge whether Grok 4.1 Fast is a meaningful addition to your Copilot Studio toolkit, you need to understand three technical vectors where the model claims strength.

1) Large context windows and multi‑turn memory

Grok 4.1 Fast is designed for extended context. xAI’s public materials and independent reporting indicate that the Fast variant targets extremely large context windows (reports reference a 2,000,000‑token window for the Fast, tool‑oriented variant). That scale changes what you can build: entire codebases, long contracts, multi‑session customer interactions, or lengthy research documents can be processed with fewer external retrieval round trips.

- Why it matters: fewer context truncations reduce the need for complex retrieval‑and‑stitch orchestration and make it easier to maintain multi‑turn coherence for agents that must reason over long documents.

- Caveat: very large context windows increase input token costs and can expose more sensitive content in a single prompt; design and governance must keep that in mind.
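The cost caveat above can be made concrete with a rough budgeting helper. This is a minimal sketch, not a real tokenizer or price list: the four‑characters‑per‑token ratio is a common rule of thumb for English text, and the window size, output reserve, and price passed in are placeholder assumptions that should be replaced with figures from the current model card and your contract.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Approximate the token count from character length (heuristic only)."""
    return math.ceil(len(text) / chars_per_token)


def estimate_input_cost(tokens: int, price_per_million_usd: float) -> float:
    """Input cost in USD for a given token count at an assumed price."""
    return tokens / 1_000_000 * price_per_million_usd


def fits_window(tokens: int, window_tokens: int, reserve_for_output: int = 8_000) -> bool:
    """True if the prompt plus a reserved output allowance fits the window."""
    return tokens + reserve_for_output <= window_tokens


if __name__ == "__main__":
    document = "lorem ipsum " * 50_000           # stand-in for a long contract
    tokens = estimate_tokens(document)
    # Both numbers below are illustrative assumptions, not vendor figures.
    assumed_window = 2_000_000
    assumed_price = 0.50
    print(tokens, fits_window(tokens, assumed_window),
          round(estimate_input_cost(tokens, assumed_price), 4))
```

Running an estimate like this per agent during a pilot gives an early signal of which prompts would be expensive or would expose large volumes of content in a single call.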

2) Tool‑calling and agentic workflows

Grok 4.1 Fast is explicitly tuned for tool use: calling APIs, invoking connectors, and executing deterministic actions as part of a workflow. Reports and model descriptions point to improved function‑calling accuracy and lower latency for tool invocation compared with prior Grok releases.

- Why it matters: tool correctness is the core differentiator when moving from chat assistants to agents that perform real work, such as placing orders, querying systems of record, or executing remediation steps.

- Caveat: tool calling reduces hallucination risk when integrated with properly validated APIs, but the overall system still needs robust guardrails: rate limits, input sanitization, and circuit breakers.
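One of the guardrails named above, the circuit breaker, can be sketched in a few lines. This is an illustrative pattern only and is not part of Copilot Studio or any xAI SDK; the class name, thresholds, and injectable clock are assumptions made for the example.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised when a tool is temporarily disabled after repeated failures."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after_s:
                raise CircuitOpenError("tool temporarily disabled")
            # Cool-down elapsed: allow one trial call (half-open state).
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()  # trip the breaker
            raise
        self._failures = 0  # any success resets the failure count
        return result
```

Wrapping each model‑triggered tool in its own breaker keeps a misbehaving backend, or a model stuck in a retry loop, from hammering a system of record.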

3) Low latency and fast reasoning

Fast variants trade off depth for latency; Grok 4.1 Fast aims to provide rapid responses with good reasoning for typical agent tasks. Where deeper chain‑of‑thought is required, xAI’s other modes (e.g., higher‑depth “Thinking” variants) remain available outside this Copilot Studio integration.

- Why it matters: latency matters for interactive UIs, real‑time triage, or services embedded in customer experiences. Fast reasoning helps agents remain responsive while executing multi‑step operations.

- Caveat: for very complex, multi‑step logical proofs or expert‑level synthesis, the tradeoff may favor a slower reasoning mode—even within a multi‑model strategy you may want to orchestrate a “fast primary, deep secondary” pattern.
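The “fast primary, deep secondary” pattern mentioned above can be sketched as a simple escalation router. Everything here is hypothetical: the `ModelReply` type, the confidence score (which in practice might come from a validator or heuristic rather than the model itself), and the two callables standing in for model backends.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class ModelReply:
    text: str
    confidence: float  # assumed score in [0, 1], e.g. from a downstream check


ModelFn = Callable[[str], ModelReply]


def route(prompt: str, fast_model: ModelFn, deep_model: ModelFn,
          min_confidence: float = 0.7) -> Tuple[str, ModelReply]:
    """Try the fast model first; escalate to the deep model on low confidence."""
    first = fast_model(prompt)
    if first.confidence >= min_confidence:
        return "fast", first
    return "deep", deep_model(prompt)


if __name__ == "__main__":
    # Stub backends for illustration only.
    fast = lambda p: ModelReply("quick answer", 0.4 if "prove" in p else 0.9)
    deep = lambda p: ModelReply("careful answer", 0.95)
    print(route("summarize this ticket", fast, deep)[0])
    print(route("prove this invariant holds", fast, deep)[0])
```

The design choice is that the fast path pays for itself only when most requests clear the threshold; logging which route each request took makes that measurable during a pilot.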

How Copilot Studio integrates external models: process and controls

Copilot Studio’s model picker is evolving into a central control point for enterprise model governance. The Grok addition follows the pattern Microsoft introduced with Anthropic: models hosted externally are made available through the Copilot Studio interface, but activation requires tenant admin consent.

Key operational mechanics:

- Admin opt‑in: Administrators must explicitly enable Grok 4.1 Fast at the tenant level. This preserves a gate for compliance and procurement workflows.

- Default behavior: If admins do not opt in, nothing changes—makers continue to use only the models currently available to the tenant. Existing agents remain on their configured model versions.

- Responsible AI gating: Microsoft performs pre‑rollout security, safety, and quality evaluations for any model added to the Copilot Studio lineup. Those evaluations are intended to be a minimum bar—not a full substitute for an organization’s own risk assessment.

- Choice in the agent: Once enabled, makers can select Grok 4.1 Fast in the prompt/model drop‑down to power specific agents or tasks, making model choice per‑agent possible.

Enterprise implications: security, compliance, and contractual shifts

Bringing a third‑party model into a production‑grade agent ecosystem is not just a technical integration; it is a contractual, compliance, and security event. Here’s what changes substantively.

Data protection and contractual allocation of risk

- Grok is hosted outside Microsoft’s managed environment. Microsoft’s announcement makes this explicit: when you use Grok through Copilot Studio, the relationship with xAI becomes independent and is governed by xAI’s Enterprise Terms and Data Protection Addendum.

- Microsoft and xAI both state that customer data used through Copilot Studio for Grok is not retained for model training. That assurance is meaningful, but it comes from xAI’s policy commitments—enterprises should obtain contractually binding guarantees and audit rights under xAI’s DPA before using the model for regulated workloads.

Auditability and logging

- Copilot Studio will surface the model choice and traffic, but the ability to perform tenant‑level exportable audits and to correlate which agent interactions invoked an external model will determine whether the organization can meet regulatory transparency needs.

- Administrators should verify:

  - What telemetry stays in Microsoft logs versus what is visible to xAI.

  - Retention windows for logs and the ability to obtain those logs for eDiscovery or incident response.
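To make the auditability questions above testable, a team might keep its own per‑interaction record alongside whatever the platform logs. The sketch below is an assumption‑laden illustration: the field names are invented for this example and do not reflect Copilot Studio’s actual log schema. It stores a hash and length of the prompt rather than the prompt itself, so the audit trail does not become a second copy of sensitive content.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(agent_id: str, model_name: str, prompt: str,
                 hosted_externally: bool,
                 now: Optional[datetime] = None) -> str:
    """Build one JSON line describing which model served an interaction."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    record = {
        "timestamp": timestamp,
        "agent_id": agent_id,                    # hypothetical identifier
        "model": model_name,
        "hosted_externally": hosted_externally,
        # Hash instead of raw text: allows correlation without retention.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    print(audit_record("helpdesk-agent", "grok-4.1-fast", "reset my password", True))
```

Records in this shape can be shipped to a SIEM as JSON lines and joined against platform logs by timestamp and agent.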

Jurisdiction and data residency

- The initial rollout is U.S.‑centric. If your organization operates in the EU/UK/EFTA, regulations such as GDPR and data residency requirements may limit or delay adoption. Microsoft indicated readiness evaluations for other regions are underway; organizations with cross‑border data flows should defer production use until region‑specific compliance assurances are documented.

Supply chain and operational resilience

- Adding another provider diversifies risk (less single‑vendor dependency), but it also expands your supply chain. Vet xAI’s operational SLAs, availability, and redundancy plans. Understand how failover works inside Copilot Studio if Grok endpoints are intermittently unavailable.

Strengths: why this matters for makers and IT leaders

- Greater model choice for specialized tasks. Developers can use different models tailored to particular workloads—fast tool‑oriented Grok for low‑latency agenting, other models for deep reasoning or creative tasks.

- Simplified agent experimentation. Copilot Studio’s model picker reduces friction when testing alternate backends—no need to rewire the entire pipeline to trial a new model.

- Potential cost/performance optimization. Fast variants can be cheaper and faster for high‑throughput, tool‑heavy workloads; teams can allocate expensive, high‑accuracy models for tasks that require them.

- Competitive vendor diversity. Microsoft’s multi‑model approach reduces the risk of lock‑in and encourages innovation among model providers.

- Improved tool‑calling fidelity. If Grok’s tool‑calling accuracy is as advertised, tasks that require reliable actions (ticket updates, DB queries, system orchestration) will be simpler to implement.

Risks and open questions

- Contractual and legal exposure: Because Grok runs outside Microsoft‑managed zones and is governed by xAI’s contracts, make sure the DPA and Enterprise Terms address data processing, subprocessor use, breach notification timelines, and audit rights. Relying on vendor statements in a blog post is not sufficient for regulated data.

- Operational visibility: Confirm what telemetry is captured in Microsoft logs versus what xAI receives. Incident response depends on having accessible logs for all steps of an event.

- Regional compliance and data residency: Availability is currently limited to the U.S., so organizations in the EEA, UK, and other regions may need additional assessments, or may be unable to adopt the model at all, until local guarantees are in place.

- Model updates and versioning: How will updates to Grok (for example, future Grok 4.2 or Fast patches) be managed inside Copilot Studio? Who decides when an agent switches to a new model version?

- Security of third‑party hosting: Verify xAI hosting controls, encryption standards, and access lists. External hosting invites additional attack surface considerations.

- Unverified or evolving specs: Industry reporting cites large token windows and aggressive pricing for Grok 4.1 Fast; treat those numbers as vendor claims that should be validated against current model cards and contractual pricing.

Practical on‑ramp: a step‑by‑step adoption checklist for admins and makers

- Admin policy decision:

  - Convene security, legal, and procurement stakeholders to review xAI’s Enterprise Terms and DPA.

  - Define acceptable use cases for external models and identify prohibited data classes (e.g., PHI, certain PII).

- Contractual due diligence:

  - Obtain contract language for data handling, retention, breach notification, subprocessor lists, and audit rights.

  - Verify model training and retention commitments are explicit and enforceable.

- Pilot and POC:

  - Create a controlled pilot with non‑sensitive data and instrument end‑to‑end telemetry (request/response, tool calls, error rates).

  - Measure latency, tool‑calling accuracy, and hallucination rates against your acceptance criteria.

- Logging and monitoring:

  - Ensure Copilot Studio logs include model selection per interaction and that logs are exportable into your SIEM.

  - Configure alerts for unusual tool calls or spikes in external model usage.

- Data minimization and sanitization:

  - Limit what is sent to the model: obfuscate or redact unnecessary PII and adopt summary‑only approaches when feasible.

  - Where possible, pre‑filter or redact sensitive segments before including documents in prompts.

- Access controls and RBAC:

  - Restrict who can enable external models at the admin level.

  - Use role‑based access to limit which makers can select Grok for production agents.

- Update and lifecycle governance:

  - Define how model updates are approved and how agents are tested prior to model upgrades.

  - Maintain a model‑inventory with version, purpose, and test evidence.

- Compliance sign‑off before production:

  - For regulated workloads, get sign‑off from compliance and legal with documented risk treatment plans.

  - If needed, restrict use to named pilot tenants until legal concerns are cleared.
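The data‑minimization step in the checklist above can be prototyped with a small pre‑prompt redaction pass. This is a deliberately simple sketch: the two regular expressions (email addresses and US Social Security number formatting) are illustrative assumptions, and pattern matching alone will miss many forms of PII, so treat it as a starting point rather than a compliance control.

```python
import re
from typing import Dict, Tuple

# Illustrative patterns only; real deployments need a vetted PII detector.
_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace matches with a bracketed label and count replacements per label."""
    counts: Dict[str, int] = {}
    for label, pattern in _PATTERNS.items():
        text, replaced = pattern.subn(f"[{label}]", text)
        counts[label] = replaced
    return text, counts


if __name__ == "__main__":
    cleaned, stats = redact("Contact jane.doe@example.com, SSN 123-45-6789.")
    print(cleaned)
    print(stats)
```

Returning the counts alongside the cleaned text lets the pilot’s telemetry show how often sensitive fragments were about to leave the tenant.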

Developer guidance: designing agents with Grok 4.1 Fast

- Use a hybrid model design: pick Grok for latency‑sensitive, tool‑heavy sub‑agents and reserve deeper, higher‑accuracy models for complex synthesis steps.

- Implement a verification layer for any tool‑triggering output. Treat the model’s proposed tool calls as requests that must pass deterministic checks before they are executed.

- Test edge cases where long context might introduce stale or contradictory information; implement freshness indicators and explicit "context cut" heuristics.

- Profile token consumption and cost during POC. Large context windows are powerful but can dramatically increase per‑call token usage.

- Add user consent and explainability surfaces in UI flows for external model usage so end users know when content is processed by a third party.
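The verification layer recommended above can be sketched as an allowlist plus per‑argument checks that run before any tool executes. The tool name `update_ticket`, its arguments, and the `TCK-` identifier format are hypothetical, chosen only to illustrate the shape of such a check.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, FrozenSet, List


@dataclass(frozen=True)
class ToolSpec:
    required: FrozenSet[str]
    validators: Dict[str, Callable[[Any], bool]]


def _is_ticket_id(value: Any) -> bool:
    return isinstance(value, str) and value.startswith("TCK-") and value[4:].isdigit()


# Hypothetical allowlist: only tools named here may ever be executed.
ALLOWED_TOOLS: Dict[str, ToolSpec] = {
    "update_ticket": ToolSpec(
        required=frozenset({"ticket_id", "status"}),
        validators={
            "ticket_id": _is_ticket_id,
            "status": lambda v: v in {"open", "pending", "closed"},
        },
    ),
}


def validate_tool_call(name: str, args: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the call may proceed."""
    spec = ALLOWED_TOOLS.get(name)
    if spec is None:
        return [f"tool not allowlisted: {name}"]
    problems = [f"missing argument: {k}" for k in sorted(spec.required - set(args))]
    problems += [f"unexpected argument: {k}" for k in sorted(set(args) - spec.required)]
    for key, check in spec.validators.items():
        if key in args and not check(args[key]):
            problems.append(f"invalid value for {key}")
    return problems
```

Because the checks are deterministic, a rejected call can be logged with its exact reason and fed back to the model or escalated to a human, rather than silently executed.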

Responsible AI: safety evaluations and what they mean in practice

Microsoft states every model added to Copilot Studio undergoes security, safety, and quality evaluations. That is a valuable gate, but organizations must understand the scope:

- Microsoft’s evaluations focus on a baseline risk profile suited for enterprise deployments and are not a substitute for tenant‑level safety policy checks.

- Organizations should demand:

  - Access to model cards and documented safety evaluations.

  - Evidence of adversarial testing and mitigation strategies for prompt injection, data exfiltration, and tool misuse.

  - A remediation plan and SLA obligations for model behavior that violates safety expectations.

Final analysis: is this a seismic change or incremental flexibility?

Adding xAI’s Grok 4.1 Fast to Copilot Studio is significant, but not revolutionary in isolation. It is an expression of Microsoft’s broader multi‑model strategy: a pragmatic move that gives enterprise makers more options and accelerates competition among model vendors. For teams building agentic workflows that depend on rapid tool execution and long‑context reasoning, Grok 4.1 Fast could offer tangible operational advantages.

However, the integration also changes the governance equation: the model is hosted externally and governed by xAI’s terms, so risk and contractual oversight shift. That tradeoff is acceptable for many organizations, provided they perform the contractual due diligence, pilot the model against their acceptance criteria, and apply strict access and data‑minimization controls.

Practical takeaway: treat Grok as another powerful tool in your model toolbox, not a drop‑in replacement for an enterprise model governance program. Use the admin opt‑in as the trigger for a formal, documented pilot that validates tool accuracy, latency, telemetry, and contractual protections. If the pilot checks all critical boxes, Grok 4.1 Fast can be a compelling choice for agentic, low‑latency workloads that demand long context and dependable tool calling.

Conclusion

Microsoft’s addition of xAI Grok 4.1 Fast to Copilot Studio expands the multi‑model landscape and gives makers a new, specialized option for building fast, tool‑oriented agents. The technical promises—large context windows, improved tool calling, and low latency—fit a clear set of enterprise use cases, but adoption brings non‑trivial governance work: contractual signoffs with xAI, auditability requirements, region‑specific data considerations, and operational monitoring. Organizations that proceed methodically—treating the admin opt‑in as the start of a formal risk assessment, piloting with telemetry and verification layers, and keeping sensitive workloads on fully‑managed in‑tenant models until compliance is confirmed—will capture the benefits without accepting unmanaged risk. For Copilot Studio builders, Grok 4.1 Fast is another arrow in the quiver—powerful when used deliberately, risky when treated as a drop‑in convenience.

Source: Microsoft xAI models now available in Microsoft Copilot Studio | Microsoft Copilot Blog