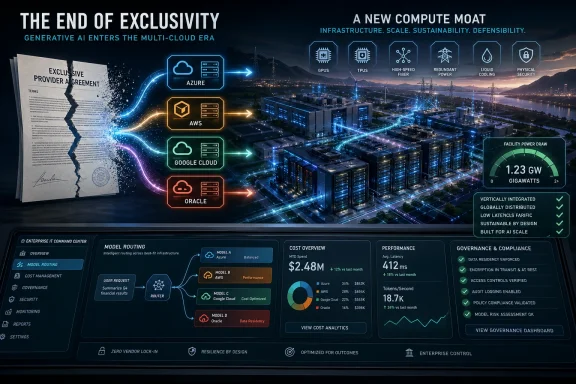

Microsoft and OpenAI have not truly “broken up,” but they have ended the arrangement that made their partnership the symbolic center of the generative AI boom. The amended agreement removes the most important exclusivity barriers: OpenAI can now serve products across any cloud provider, while Microsoft’s license to OpenAI intellectual property becomes non-exclusive through 2032. The result is less a divorce than a regime change, and it signals that the industry’s most valuable scarce asset is no longer simply the best model. In 2026, the strategic center of gravity has shifted toward compute capacity, data center power, chip supply, and enterprise distribution.

Source: TechFlow Post, Microsoft and OpenAI “Part Ways”: The Era of Model Exclusivity Has Ended

Background

Microsoft’s original 2019 investment in OpenAI was one of the most consequential technology bets of the last decade. At the time, OpenAI was still transitioning from research lab to commercial AI platform, and Microsoft needed a way to make Azure more relevant in a cloud market long defined by Amazon Web Services’ lead. The bargain was simple in spirit: Microsoft supplied capital and infrastructure, while OpenAI supplied frontier AI research that could make Azure feel indispensable.

That arrangement looked brilliant after ChatGPT arrived in late 2022. Suddenly, Microsoft had privileged access to the most culturally important software product since the smartphone app store, and Azure became the default enterprise path to OpenAI’s models. The partnership fed directly into Microsoft 365 Copilot, GitHub Copilot, Azure OpenAI Service, and a broader narrative that Microsoft had leapfrogged Google in applied AI.

But exclusivity that looks strategic in a scarcity market can become restrictive in a scale market. OpenAI’s need for compute grew faster than any single cloud provider could comfortably satisfy, while enterprise customers increasingly wanted model choice rather than model lock-in. At the same time, competitors including Anthropic, Google DeepMind, Meta, xAI, Mistral, and others turned the model layer into a rapidly contested market rather than a one-company bottleneck.

The new agreement therefore reflects a deeper industry correction. Microsoft remains OpenAI’s primary cloud partner, OpenAI products are still expected to ship first on Azure unless Microsoft cannot or chooses not to support the required capabilities, and Microsoft remains a major shareholder. Yet the symbolic change is unmistakable: the era when one cloud provider could use exclusive access to one frontier model as a durable moat has ended.

The End of Model Exclusivity

From Crown Jewel to Shared Asset

For years, the market treated GPT access as a strategic crown jewel. If an enterprise wanted OpenAI’s frontier models with contractual support, compliance promises, and managed infrastructure, Azure was the privileged route. That gave Microsoft a powerful sales story: choose Azure, and you get the model that everyone is trying to build around.

The revised agreement weakens that story without destroying Microsoft’s position. OpenAI can now distribute products across other clouds, and Microsoft’s IP license is no longer exclusive. The practical effect is that OpenAI becomes more like an independent platform company and less like an Azure-bound research engine.

This matters because model access is becoming less defensible than model integration. Enterprises rarely want one model forever; they want routing, governance, cost controls, auditability, and fallback options. If a procurement team can buy OpenAI, Anthropic, Gemini, and open-weight models through multiple channels, the cloud vendor’s advantage moves from exclusivity to operational excellence.

Key implications include:

- OpenAI gains distribution flexibility across customers already standardized on AWS, Google Cloud, Oracle, or hybrid environments.

- Microsoft keeps strategic exposure through equity, Azure-first product positioning, and a continuing IP license.

- Enterprise buyers gain leverage because model providers must compete on deployment terms, not merely brand power.

- Cloud vendors must differentiate through performance, reliability, security, and regional availability.

- The AI market becomes more modular, with models, chips, clouds, and applications negotiated separately.

Why Exclusivity Became a Liability

The old model assumed that the best AI system would be scarce enough to justify a single privileged channel. That assumption made sense when GPT-4 stood far ahead of most commercial alternatives. It makes less sense now, when Claude, Gemini, Llama-based systems, and specialized coding or reasoning models can compete credibly across many workloads.

Enterprise AI adoption also punishes rigidity. Large companies operate across multiple clouds for redundancy, regulatory reasons, merger history, pricing leverage, and internal politics. A vendor that says “move everything to our cloud to use this model” is asking customers to reorganize infrastructure around a moving target.

That is why the amended Microsoft-OpenAI deal is not merely a legal update. It acknowledges that model exclusivity has become commercially expensive. In a market where customers expect optionality, the exclusive supplier risks becoming the bottleneck rather than the gatekeeper.

Compute Is the New Strategic Moat

The Scarcity Has Moved Down the Stack

The most important phrase in the new AI economy may not be “large language model.” It may be “gigawatts of data center capacity.” Training and serving frontier AI systems requires chips, land, cooling, networking, electrical interconnection, power purchase agreements, and years of construction discipline.

This is why the largest AI deals increasingly look less like software partnerships and more like industrial infrastructure contracts. OpenAI’s reported commitments with Azure, Oracle, and AWS total hundreds of billions of dollars over multiple years. Anthropic, meanwhile, has deepened ties with Amazon and Google to secure the compute capacity needed to train and serve Claude at global scale.

The apparent paradox is that AI labs are valued like software companies but increasingly behave like energy-intensive industrial customers. Their marginal product may be code, but their limiting input is physical infrastructure. That makes the AI platform business more capital-intensive than the internet platforms that preceded it.

The new scarcity stack looks like this:

- Advanced accelerators from Nvidia, AMD, Google, Amazon, and custom silicon programs.

- High-bandwidth memory and packaging capacity that constrain chip output.

- Data center campuses with enough land, cooling, and grid access.

- Electricity supply measured in gigawatts rather than megawatts.

- Networking fabric capable of linking enormous accelerator clusters.

- Engineering talent that can optimize models across different chip architectures.
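
The gigawatt framing follows from simple arithmetic. The sketch below is a back-of-envelope estimate, not a model of any real campus: the accelerator counts, per-device wattage, host overhead, and PUE (power usage effectiveness, total facility power divided by IT power) are hypothetical placeholders chosen for round numbers.

```python
def facility_power_mw(num_accelerators: int,
                      watts_per_accelerator: float,
                      host_overhead: float = 0.5,
                      pue: float = 1.2) -> float:
    """Estimate total facility draw in megawatts for an accelerator cluster.

    host_overhead: extra IT power for CPUs, memory, storage, and networking,
        expressed as a fraction of accelerator power (0.5 means +50%).
    pue: power usage effectiveness, total facility power / IT power.
    All defaults are illustrative assumptions, not vendor figures.
    """
    it_watts = num_accelerators * watts_per_accelerator * (1 + host_overhead)
    return it_watts * pue / 1e6


if __name__ == "__main__":
    # Hypothetical cluster sizes, each device drawing roughly 1 kW.
    for count in (10_000, 100_000, 500_000):
        print(f"{count:>7,} accelerators -> {facility_power_mw(count, 1_000):,.0f} MW")
```

Under these assumptions, half a million accelerators land at roughly 900 MW, which is why grid interconnection and power contracts now sit in the same stack as chip supply.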

Why Cloud Providers Gain Power

Cloud providers understand this transition better than almost anyone. They know that every frontier AI lab wants flexibility in theory but needs guaranteed capacity in practice. Once a model company commits to a particular training stack, moving becomes expensive, slow, and risky.

This is the hidden meaning of the new Microsoft-OpenAI relationship. OpenAI may be freer contractually, but it remains bound by infrastructure reality. Azure-first distribution, existing commitments, and deep engineering integration ensure that Microsoft is still inside OpenAI’s operating model.

The same logic benefits AWS, Oracle, and Google Cloud. If a lab signs a multi-year compute commitment, the cloud provider receives a long-duration revenue stream and may gain equity upside as well. The shovel sellers do not need to know which prospector finds gold; they need only ensure every prospector keeps buying shovels.

Microsoft’s Real Trade: Exclusivity for Certainty

Losing the Label, Keeping the Economics

At first glance, Microsoft appears to have surrendered a crown jewel. It no longer holds exclusive OpenAI model rights, and OpenAI can court rival cloud platforms more openly. That sounds like a loss for Redmond, especially after years of marketing Azure as the enterprise gateway to OpenAI.

But Microsoft traded legal exclusivity for something arguably more durable: predictable economics. It retains a major equity stake, a continuing non-exclusive license to OpenAI models and products through 2032, ongoing revenue share from OpenAI through 2030 subject to a cap, and a primary cloud role. That is not a clean breakup; it is a restructuring of risk.

For Microsoft, this may be a more mature posture. The company no longer has to carry the entire burden of satisfying OpenAI’s infrastructure appetite, which has become enormous even by hyperscaler standards. It can keep building Copilot, Azure AI services, security products, and internal model research while letting OpenAI diversify some of the capital load.
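
The phrase “subject to a cap” is what makes the revenue share predictable rather than open-ended. The sketch below shows how a cumulative cap bounds payments; the rate, cap, and revenue path are hypothetical placeholders, since the actual terms are not given here.

```python
def capped_revenue_share(annual_revenue: list[float], rate: float, cap: float) -> list[float]:
    """Return each year's payment under a revenue share with a cumulative cap.

    annual_revenue: revenue per year (hypothetical units, e.g. billions).
    rate: share of revenue owed each year (hypothetical).
    cap: maximum total paid across all years (hypothetical).
    """
    payments: list[float] = []
    paid = 0.0
    for revenue in annual_revenue:
        owed = revenue * rate
        remaining = max(cap - paid, 0.0)
        payment = min(owed, remaining)  # stop paying once the cap is reached
        payments.append(payment)
        paid += payment
    return payments


if __name__ == "__main__":
    # Hypothetical revenue doubling each year, 20% share, cap of 20 units.
    print(capped_revenue_share([10, 20, 40, 80], rate=0.2, cap=20))
```

In this toy schedule the fourth-year payment falls from 16 units owed to 6 paid because the cap binds, so further growth at the payer no longer raises the recipient's take.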

Microsoft’s likely wins include:

- Reduced pressure to fund every OpenAI data center need directly.

- Continued access to OpenAI technology for Microsoft products through 2032.

- Equity participation if OpenAI’s valuation continues to rise.

- Azure-first positioning that keeps Microsoft central to launches.

- Freedom to invest in internal AI models and alternative partnerships.

- Improved regulatory optics compared with a tighter exclusive arrangement.

The Suleyman Factor

Microsoft’s hiring of Mustafa Suleyman and its expansion of Microsoft AI were early signs that Redmond did not want to depend forever on a single outside lab. The company still benefits enormously from OpenAI, but it also needs its own model roadmap, consumer AI identity, and research culture. No platform company of Microsoft’s scale wants to be permanently downstream of another company’s product decisions.

That internal push gives Microsoft leverage. If OpenAI’s models remain best-in-class, Microsoft can use them. If another model becomes better for coding, productivity, multimodal reasoning, or edge deployment, Microsoft can adapt.

The amended agreement therefore does not mark Microsoft’s retreat from AI. It marks Microsoft’s transition from exclusive patron to portfolio platform operator. That is a subtle but important difference.

OpenAI’s Freedom Comes With New Constraints

Multi-Cloud Is Not the Same as Independence

OpenAI gains something valuable from the revised deal: the ability to meet customers where they already are. If a bank is standardized on AWS, a manufacturer relies on Google Cloud analytics, or a government contractor has a multi-cloud compliance architecture, OpenAI can now pursue that business more directly. That removes friction from enterprise sales.

But multi-cloud freedom can also create operational complexity. Different clouds use different accelerators, networking assumptions, storage layers, security primitives, observability tools, and procurement models. Running the same AI product consistently across Azure, AWS, Oracle, and Google Cloud is far harder than distributing a conventional SaaS app.

OpenAI’s challenge is that freedom at the sales layer may mean fragmentation at the infrastructure layer. Training pipelines optimized for one chip architecture do not automatically perform well on another. Inference economics can change dramatically depending on accelerator availability, batch sizes, memory bandwidth, and regional energy costs.
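
Why batch size and memory bandwidth move inference economics can be seen with a common first-order approximation: during token-by-token decoding, each step streams the model's weights from memory, so one step takes roughly weight bytes divided by memory bandwidth, and every sequence in the batch gets one token per step. The sketch below assumes that simplification and ignores KV-cache traffic, compute limits at large batch sizes, and utilization losses, so it gives an optimistic upper bound. The model size, bandwidth, and hourly price are hypothetical.

```python
def decode_tokens_per_second(params_billion: float,
                             bytes_per_param: float,
                             bandwidth_tb_per_s: float,
                             batch_size: int) -> float:
    """Upper-bound decode throughput for a memory-bandwidth-bound accelerator.

    Assumes each decode step reads every weight once and yields one token per
    sequence in the batch; ignores KV-cache reads and compute saturation.
    """
    weight_bytes = params_billion * 1e9 * bytes_per_param
    step_seconds = weight_bytes / (bandwidth_tb_per_s * 1e12)
    return batch_size / step_seconds


def cost_per_million_tokens(hourly_price_usd: float, tokens_per_second: float) -> float:
    """Convert an hourly accelerator price into dollars per million output tokens."""
    return hourly_price_usd / (tokens_per_second * 3600) * 1e6


if __name__ == "__main__":
    # Hypothetical: 8B-parameter model in 16-bit weights, 2 TB/s, $2/hour.
    for batch in (1, 8, 32):
        tps = decode_tokens_per_second(8, 2, 2.0, batch)
        cost = cost_per_million_tokens(2.0, tps)
        print(f"batch={batch:>2}: {tps:,.0f} tok/s, ${cost:.2f} per 1M tokens")
```

Under these assumptions, moving from batch 1 to batch 32 cuts cost per token by 32x, and halving bandwidth doubles it, which is why the same model can have very different economics on different clouds.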

A realistic multi-cloud AI strategy requires discipline on several fronts:

- Standardize model-serving interfaces so customers see consistent behavior across clouds.

- Optimize inference separately for GPUs, TPUs, Trainium, and other accelerator families.

- Build governance layers that enforce privacy, residency, and audit controls across providers.

- Negotiate capacity with redundancy rather than treating each cloud deal as isolated.

- Protect margin discipline so growth does not simply convert into larger compute losses.
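
The first item in that list, a standardized serving interface, is commonly built as an adapter layer: callers code against one request/response shape, and each cloud gets its own adapter behind it. The sketch below assumes that design; `ModelBackend`, `Completion`, `EchoBackend`, and `serve` are hypothetical names, and the echo backend stands in for a real adapter that would wrap a specific cloud's SDK. Token counts here are crude whitespace word counts, not tokenizer output.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Completion:
    """Provider-neutral response shape that every adapter must return."""
    text: str
    backend: str
    input_tokens: int
    output_tokens: int


class ModelBackend(Protocol):
    """Interface each cloud-specific adapter implements (hypothetical)."""
    name: str

    def complete(self, prompt: str, max_tokens: int) -> Completion: ...


class EchoBackend:
    """Stand-in adapter; a real one would call a specific cloud's SDK."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str, max_tokens: int) -> Completion:
        words = prompt.split()
        kept = words[:max_tokens]  # whitespace words as a crude token proxy
        return Completion(" ".join(kept), self.name, len(words), len(kept))


def serve(backend: ModelBackend, prompt: str, max_tokens: int = 256) -> Completion:
    """Validate the request once, then delegate; callers never see provider details."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    return backend.complete(prompt, max_tokens)


if __name__ == "__main__":
    for backend in (EchoBackend("cloud-a"), EchoBackend("cloud-b")):
        print(serve(backend, "summarize the quarterly report", max_tokens=2))
```

Because validation (and, in a fuller version, governance and logging) lives in `serve` rather than in each adapter, adding another cloud means writing one adapter instead of re-implementing policy.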

Revenue Must Catch the Infrastructure Curve

The biggest question is whether OpenAI’s revenue can grow fast enough to support its infrastructure commitments. Reported annualized revenue figures suggest astonishing growth, but the compute obligations being discussed are even more astonishing. The gap between software revenue and industrial-scale capital commitments is now the central financial drama of the AI boom.

This is where the “best model wins” thesis breaks down. A model can be popular, technically impressive, and strategically important while still struggling to generate enough gross margin to cover its compute appetite. Consumer subscriptions, API usage, enterprise seats, advertising, agents, and licensing all have to scale together.

OpenAI is not alone in facing this equation. Every frontier lab is being pushed toward a world where model training runs cost more, inference demand grows, and customers expect prices to fall. That is a brutal combination unless productivity gains become measurable enough to justify much larger enterprise contracts.
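
The margin problem can be made concrete with a flat-price subscription. The sketch below uses hypothetical numbers (price, usage, and compute cost per million tokens are placeholders, not OpenAI figures) to show how a few heavy users pull blended gross margin down and how falling prices compress it further.

```python
def gross_margin(price_per_user: float,
                 tokens_per_user: list[float],
                 cost_per_million_tokens: float) -> float:
    """Blended gross margin for flat-price subscribers with varying usage.

    Returns (revenue - compute cost) / revenue; a negative value means the
    plan loses money on compute alone. Ignores all non-compute costs.
    """
    if not tokens_per_user:
        raise ValueError("need at least one subscriber")
    revenue = price_per_user * len(tokens_per_user)
    compute = sum(t / 1e6 * cost_per_million_tokens for t in tokens_per_user)
    return (revenue - compute) / revenue


if __name__ == "__main__":
    light_only = [1e6] * 10            # ten users at 1M tokens/month
    with_heavy = [1e6] * 9 + [15e6]    # one of them replaced by a 15M-token user
    for label, usage in (("light only", light_only), ("with one heavy user", with_heavy)):
        for price in (20.0, 10.0):     # hypothetical monthly price before and after a cut
            print(f"{label:>20} @ ${price:.0f}: {gross_margin(price, usage, 2.0):.0%}")
```

In this toy example the heavy user alone runs at a negative 50% margin, and cutting the price from $20 to $10 drops the blended figure from 76% to 52% with usage unchanged.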

AWS, Oracle, and Google Smell the Opportunity

The New Infrastructure Auction

The end of Microsoft exclusivity opens the door for OpenAI’s rivals and partners to compete more aggressively around infrastructure. AWS gains a chance to put OpenAI workloads in front of customers who already buy most of their cloud services from Amazon. Oracle gains relevance through massive AI infrastructure projects that would have seemed improbable a decade ago.

Google sits in a more complicated position. It has its own frontier model family in Gemini, its own TPU stack, and a cloud business that wants third-party AI workloads. That means Google can compete with OpenAI at the model layer while still benefiting if labs need TPU capacity or enterprise distribution.

The cloud market is therefore becoming less like a winner-take-all race and more like an infrastructure auction. Model companies need capacity, and hyperscalers need anchor tenants to justify vast data center investment. The question is no longer simply “which model is smartest?” but which provider can deliver the cheapest reliable intelligence per watt.

Infrastructure competitors are differentiating through:

- Custom silicon such as TPUs and Trainium.

- GPU cluster scale and availability.

- Energy procurement and grid interconnection speed.

- Enterprise compliance posture for regulated industries.

- Developer tooling that makes model switching less painful.

- Global region coverage for latency and data sovereignty.
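
Region coverage matters because data-sovereignty rules act as a hard filter before latency or price comes into play. A minimal sketch, assuming a hypothetical `Region` record and made-up region names, latencies, and jurisdiction codes, picks the fastest region that is both compliant and has capacity:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    jurisdiction: str     # e.g. "EU", "US"; codes here are illustrative
    latency_ms: float     # measured from the caller's location
    has_capacity: bool


def pick_region(regions: list[Region], allowed: set[str]) -> Region:
    """Return the lowest-latency region that satisfies residency rules and has capacity."""
    eligible = [r for r in regions if r.jurisdiction in allowed and r.has_capacity]
    if not eligible:
        raise LookupError("no compliant region with available capacity")
    return min(eligible, key=lambda r: r.latency_ms)


if __name__ == "__main__":
    regions = [
        Region("us-east-x", "US", 20.0, True),
        Region("eu-west-x", "EU", 40.0, True),
        Region("eu-central-x", "EU", 35.0, False),  # closest EU region, but full
    ]
    print(pick_region(regions, {"EU"}).name)
```

Here the nearest EU region is skipped because it has no capacity, which illustrates the point above: a provider wins a regulated workload only if it has compliant capacity where the customer is allowed to run.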

Amazon’s Hedge

Amazon is especially interesting because it has backed Anthropic deeply while also moving closer to OpenAI. That puts AWS in a powerful hedge position. If Claude wins more enterprise workloads, AWS benefits; if OpenAI expands onto AWS, AWS benefits; if both consume more compute, AWS benefits twice.

This resembles Amazon’s classic marketplace logic. The company does not have to pick the single winning seller if it owns the marketplace, fulfillment network, and payment rails. In AI, the equivalent is cloud infrastructure, chips, agent runtimes, and enterprise procurement channels.

Oracle’s opportunity is different but equally revealing. It is trying to convert a late-cloud position into an AI infrastructure role by building or financing huge capacity blocks. If successful, Oracle becomes proof that the AI boom can reorder cloud relevance around power access and willingness to build.

Enterprise Buyers Are the Real Swing Vote

Model Choice Becomes Procurement Policy

For WindowsForum readers inside IT departments, the Microsoft-OpenAI amendment has a practical message: do not architect your AI strategy around a single model contract. The largest vendors are themselves moving away from exclusive dependency. Enterprises should take the hint.

Model performance changes too quickly for three-year lock-in to feel safe. A model that leads in coding this quarter may lag in document reasoning next quarter, while a cheaper open-weight model may become sufficient for internal support or classification workloads. The smart enterprise posture is controlled optionality, not chaotic experimentation.

That does not mean every company should run five models in production. It means the architecture should allow model substitution where appropriate, with policy, logging, and evaluation frameworks above the model layer. The enterprise moat is not access to one model; it is the ability to deploy AI safely, cheaply, and measurably.
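
One way to make “model substitution where appropriate” concrete is to score candidate models on a fixed set of internal tasks and pick the cheapest one that clears a quality bar. The sketch below assumes models are plain callables, uses a crude substring check as the scoring rule, and relies on hypothetical test cases, model stubs, and costs; a real evaluation would use graded rubrics and far more cases.

```python
from typing import Callable, Optional

Model = Callable[[str], str]


def score(model: Model, cases: list[tuple[str, str]]) -> float:
    """Fraction of cases whose output contains the expected answer (case-insensitive)."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    passed = sum(1 for prompt, expected in cases
                 if expected.lower() in model(prompt).lower())
    return passed / len(cases)


def cheapest_sufficient(models: dict[str, tuple[Model, float]],
                        cases: list[tuple[str, str]],
                        min_score: float) -> Optional[str]:
    """Name of the lowest-cost model meeting min_score, or None if none qualifies."""
    qualifying = [(cost, name) for name, (model, cost) in models.items()
                  if score(model, cases) >= min_score]
    return min(qualifying)[1] if qualifying else None


# Hypothetical internal cases and stub models standing in for real endpoints.
CASES = [
    ("How do I reset my password?", "self-service portal"),
    ("Which queue handles a VPN outage?", "network"),
]


def frontier_stub(prompt: str) -> str:
    return "Use the self-service portal." if "password" in prompt else "Route to the network queue."


def small_stub(prompt: str) -> str:
    return "Use the self-service portal." if "password" in prompt else "Route to the hardware queue."


if __name__ == "__main__":
    models = {"frontier": (frontier_stub, 10.0), "small": (small_stub, 1.0)}
    print(cheapest_sufficient(models, CASES, min_score=1.0))  # strict bar: every case
    print(cheapest_sufficient(models, CASES, min_score=0.5))  # looser bar
```

Rerunning the same cases whenever a provider ships a new model turns the “leader this quarter, laggard next quarter” problem into a routine check rather than a re-architecture.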

Enterprise AI teams should prioritize:

- Model abstraction layers that reduce switching costs.

- Evaluation benchmarks tied to real internal tasks.

- Data governance controls before broad rollout.

- Cost observability at the token, user, and workflow level.

- Fallback routing for outages, latency spikes, or quality regression.

- Procurement clauses that avoid cloud or model lock-in.
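
Fallback routing and cost observability can live in the same thin layer. The sketch below is a minimal illustration, assuming hypothetical provider adapters that return the response text plus a token count and raise a hypothetical `ProviderError` on outage or timeout; provider names and prices are placeholders. It tries providers in preference order and attributes spend to each (user, workflow, provider) combination.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable


class ProviderError(Exception):
    """Raised by a provider adapter on outage or timeout (hypothetical)."""


@dataclass
class Provider:
    name: str
    call: Callable[[str], tuple[str, int]]  # returns (text, tokens_used)
    usd_per_million_tokens: float


@dataclass
class Router:
    providers: list[Provider]  # ordered by preference
    spend: defaultdict[tuple[str, str, str], float] = field(
        default_factory=lambda: defaultdict(float))

    def complete(self, prompt: str, user: str, workflow: str) -> tuple[str, str]:
        """Return (text, provider_name) from the first provider that succeeds."""
        errors = []
        for provider in self.providers:
            try:
                text, tokens = provider.call(prompt)
            except ProviderError as exc:
                errors.append(f"{provider.name}: {exc}")
                continue  # fall back to the next provider in order
            cost = tokens / 1e6 * provider.usd_per_million_tokens
            self.spend[(user, workflow, provider.name)] += cost
            return text, provider.name
        raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stub adapters for illustration only.
def primary_down(prompt: str) -> tuple[str, int]:
    raise ProviderError("timeout")


def backup_ok(prompt: str) -> tuple[str, int]:
    return f"answer to: {prompt}", 500_000


if __name__ == "__main__":
    router = Router([Provider("primary", primary_down, 10.0),
                     Provider("backup", backup_ok, 2.0)])
    print(router.complete("close the ticket", user="alice", workflow="helpdesk"))
    print(dict(router.spend))
```

Summing `spend` by user or by workflow gives the cost view the list calls for; recording which providers were skipped on each request would add the audit trail.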

Copilot, Azure, and the Windows Estate

Microsoft still has a strong enterprise advantage because AI adoption often flows through existing identity, productivity, and device management systems. Microsoft 365, Entra ID, Intune, Defender, Windows, Teams, and SharePoint create a distribution surface that OpenAI alone cannot replicate. For many organizations, Copilot will remain the easiest approved AI path regardless of OpenAI’s multi-cloud freedom.

But Microsoft now has to prove Copilot’s value more concretely. If OpenAI models are available elsewhere, Azure cannot rely solely on privileged model access. Microsoft must win through integration, security, compliance, admin controls, and workflow automation.

That is good news for customers. Competition should pressure Microsoft to make Copilot more transparent, measurable, and interoperable. The worst outcome would be AI licensing that feels like another opaque enterprise software tax.

The Consumer Impact: More Choice, Less Clarity

ChatGPT Outside the Azure Shadow

Consumers may not notice immediate changes from the Microsoft-OpenAI amendment. ChatGPT remains ChatGPT, and most users do not know or care which data center serves a response. Yet the agreement could affect product speed, reliability, and availability over time.

If OpenAI can use more clouds, it may reduce capacity bottlenecks during major launches. That could mean fewer usage caps, faster model rollouts, and more resilient service during traffic spikes. It may also allow OpenAI to tune infrastructure for products such as agents, video, real-time voice, and coding environments.

However, more infrastructure options do not automatically mean simpler products. OpenAI is expanding across consumer subscriptions, enterprise tools, developer APIs, agents, media generation, and potentially advertising-supported experiences. The risk is that product strategy becomes more crowded even as infrastructure becomes more flexible.

Consumer-facing effects may include:

- Better availability during high-demand model launches.

- More differentiated subscription tiers tied to compute-heavy features.

- Faster rollout of agentic tools that require persistent cloud execution.

- Potential advertising experiments if subscription growth slows.

- Greater privacy scrutiny as data flows across more infrastructure partners.

The Trust Problem

Consumers have a different concern than enterprises. They are less focused on procurement leverage and more focused on trust, privacy, price, and product quality. If OpenAI’s infrastructure partnerships become more complex, users may ask where their data goes and which partners touch the stack.

This is especially important as AI tools become more personal. Voice assistants, memory features, browser agents, email agents, and coding copilots can reveal far more about a user than a traditional search query. Infrastructure scale must therefore be matched by clear privacy promises.

Microsoft has its own trust calculation. If OpenAI builds more direct consumer relationships across clouds, Microsoft must ensure Copilot feels like a coherent Microsoft product rather than a wrapper around someone else’s roadmap. That distinction will matter as AI becomes embedded into Windows and Microsoft 365.

The Model Layer Is Not Dead

Commoditized Does Not Mean Worthless

It would be wrong to say models no longer matter. Frontier model quality still drives adoption, pricing power, developer enthusiasm, and enterprise credibility. A dramatic leap in reasoning, coding, multimodal understanding, or agent reliability can still reshape the market.

The better argument is that models are no longer scarce in the same way. There are now multiple credible frontier systems, strong open-weight alternatives, and specialized models that outperform general systems on narrow tasks. That changes bargaining power.

In the earlier ChatGPT era, the question was whether you had access to the model. In the current era, the question is how efficiently you can deploy the right model for the right job. That favors platforms with orchestration, evaluation, observability, and cost controls.

The model layer still matters in several areas:

- Reasoning performance for complex planning and technical work.

- Coding accuracy for enterprise software development.

- Multimodal capability across text, image, audio, and video.

- Tool use and agents for workflow automation.

- Safety behavior in regulated or sensitive domains.

- Inference efficiency that affects margins and user experience.

Open-Weight Pressure

Meta’s Llama ecosystem and other open-weight models add further pressure. They do not need to beat frontier closed models on every benchmark to matter. They only need to be good enough for many workloads and cheaper or easier to control.

For enterprises, open-weight models can be attractive when data control, customization, latency, or cost predictability matter more than absolute frontier performance. For governments, they can support sovereignty goals. For developers, they provide a playground for experimentation without waiting for a commercial API roadmap.

This is another reason exclusivity is fading. If a customer can run a capable model in its own environment, the value of exclusive access to a closed model declines. The frontier still commands attention, but the middle of the market becomes fiercely competitive.

Regulatory and Competitive Pressure

The Antitrust Optics

The Microsoft-OpenAI partnership has attracted regulatory attention because it sits at the intersection of cloud computing, enterprise software, AI infrastructure, and strategic investment. A deeply exclusive arrangement between one of the world’s largest software companies and the most prominent AI lab was always going to invite scrutiny. The revised agreement may reduce some of that pressure.

By making Microsoft’s license non-exclusive and allowing OpenAI to serve products through any cloud provider, the companies can argue that the market remains open. That does not eliminate competition concerns, but it changes their shape. Regulators may focus less on one exclusive channel and more on broader questions of infrastructure concentration.

The next regulatory frontier is likely to involve compute access. If only a few hyperscalers can finance gigawatt-scale AI infrastructure, then model competition may depend on access to those firms. That raises familiar questions about gatekeepers, preferential pricing, self-preferencing, and barriers to entry.

Regulators may examine:

- Cloud concentration in frontier AI training and inference.

- Equity investments that create soft control without acquisition.

- Exclusive or preferential capacity agreements for advanced accelerators.

- Data center power access and local community impact.

- Model licensing terms that disadvantage smaller competitors.

- Bundling practices across productivity software, cloud, and AI services.

Rival Responses

Google will likely use the end of exclusivity to argue that Gemini competes in an open model market while Google Cloud competes on infrastructure. Amazon will emphasize customer choice and AWS’s ability to host multiple leading labs. Oracle will continue positioning itself as a neutral capacity builder for massive AI workloads.

For smaller cloud providers, the picture is harder. Multi-cloud rhetoric sounds open, but frontier AI workloads require enormous scale. If the practical choices are still Azure, AWS, Google, Oracle, and a handful of specialized GPU clouds, the market may be open in theory but concentrated in practice.

That concentration creates openings for governments and regional infrastructure providers. Sovereign AI programs, national compute reserves, and regional data center initiatives may grow as countries decide that AI capacity is too strategic to outsource entirely. The cloud market is becoming geopolitics by other means.

Strengths and Opportunities

The revised Microsoft-OpenAI agreement creates a more flexible AI market, and that flexibility could benefit customers, developers, and even Microsoft itself. The best outcome is an ecosystem where model companies compete on quality, cloud providers compete on infrastructure efficiency, and enterprises can mix capabilities without rebuilding their entire stack every year.

- OpenAI can pursue customers across more clouds, reducing friction for enterprises that do not want to standardize on Azure.

- Microsoft retains deep upside without sole infrastructure responsibility, which may improve capital discipline.

- Azure must compete on operational value, including security, compliance, reliability, and developer experience.

- AWS and Google Cloud gain stronger incentives to support frontier model availability and AI-native tooling.

- Enterprise buyers gain negotiating leverage as model access becomes less tied to one cloud contract.

- Developers benefit from a more modular ecosystem where applications can route across models and providers.

- The industry may become more resilient if AI workloads are distributed across multiple infrastructure partners.

Risks and Concerns

The same shift also introduces serious risks. Multi-cloud AI can reduce dependence on one provider, but it can increase complexity, cost opacity, and accountability gaps. The industry’s appetite for compute may also outrun realistic revenue growth, producing financial stress across the companies that have built their strategies around OpenAI and other frontier labs.

- OpenAI’s compute obligations may become difficult to sustain if revenue growth slows or margins compress.

- Cloud concentration may deepen even as contractual exclusivity declines.

- Enterprise customers may face new complexity in evaluating model behavior across providers.

- Infrastructure lock-in may replace legal lock-in, especially when workloads are optimized for specific chips.

- Energy demand could provoke public backlash around grid strain, water use, and local data center expansion.

- AI pricing may become more confusing as vendors bundle models, clouds, agents, and productivity software.

- Regulators may struggle to track soft control created by equity stakes, cloud credits, and capacity commitments.

What to Watch Next

The next phase of the AI race will be measured less by demo videos and more by capacity delivery, enterprise conversion, and margin discipline. Watch whether OpenAI can turn multi-cloud access into profitable enterprise expansion rather than simply larger infrastructure bills. Also watch whether Microsoft’s Copilot business becomes more independent of OpenAI’s brand as Microsoft develops its own models and agent platforms.

Investors should pay close attention to the companies selling capacity into the boom. Oracle, CoreWeave, Nvidia, AMD, Broadcom, AWS, Microsoft, and Google are no longer peripheral suppliers; they are central characters in the financial structure of generative AI. If model revenue disappoints, the shock will travel through the infrastructure chain.

The most important signals over the next year will be:

- Whether OpenAI’s AWS and Oracle relationships produce broad enterprise adoption or mainly serve as capacity relief.

- Whether Microsoft expands proprietary model work inside Copilot, Windows, and Azure AI services.

- Whether Anthropic continues gaining enterprise share through Claude and developer tools.

- Whether AI infrastructure contracts are revised, delayed, or repriced as revenue realities become clearer.

- Whether regulators focus on cloud capacity as the new AI bottleneck rather than model licensing alone.

Source: TechFlow Post, “Microsoft and OpenAI ‘Part Ways’: The Era of Model Exclusivity Has Ended”