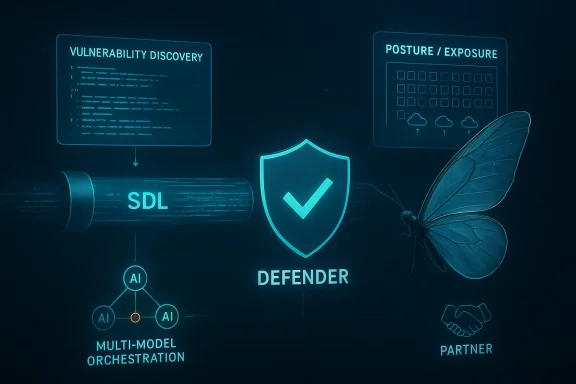

Microsoft is moving from warning about AI’s role in cyberattacks to operationalizing AI as a core part of defense. In an April 22, 2026 post on its security blog, the company said new model capabilities are shrinking the gap between vulnerability discovery and exploitation, while also creating an opportunity to accelerate detection, mitigation, and response at enterprise scale. The headline development is a closer partnership with Anthropic through Project Glasswing, plus a broader push to bake multi-model AI into Microsoft’s security lifecycle, posture management, and defensive tooling.

Source: Microsoft AI-powered defense for an AI-accelerated threat landscape | Microsoft Security Blog

Background

The timing matters. Microsoft has spent the last year steadily reframing cybersecurity around an AI-shaped threat landscape, and this announcement fits directly into that arc. In early 2026, Microsoft warned that threat actors are increasingly operationalizing AI across the attack lifecycle, and that defenders must embed intelligence and defense across the same lifecycle if they want to keep pace.

That broader argument is not just rhetorical. Microsoft has been expanding its Secure Future Initiative, which it says has already pushed the company to use AI internally for vulnerability discovery and remediation while strengthening foundational security practices. The company’s February 2026 update to the Security Development Lifecycle stressed that traditional threat models break down for AI-specific attack vectors such as prompt injection, data poisoning, and malicious tool interactions.

At the same time, Microsoft has been building the evaluation plumbing needed to justify AI use in security work. Its CTI-REALM benchmark, published in March 2026, was designed to measure whether AI agents can convert threat intelligence into working detections, not just answer trivia about threats. That distinction is important because security operations depend on repeatable, validated outputs, not impressive demos.
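To make the distinction between trivia and working detections concrete, the following Python sketch scores a candidate detection against labeled telemetry using precision and recall. It is an illustration under stated assumptions, not CTI-REALM's actual methodology: the `Event` fields, the sample events, the `score_detection` helper, and the encoded-PowerShell rule are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass(frozen=True)
class Event:
    """A simplified, hypothetical process-creation record."""
    process: str
    command_line: str
    malicious: bool  # ground-truth label, e.g. from a replayed attack scenario


Detection = Callable[[Event], bool]


def score_detection(rule: Detection, events: Iterable[Event]) -> Dict[str, float]:
    """Return the precision and recall of a detection rule over labeled events."""
    tp = fp = fn = 0
    for event in events:
        fired = rule(event)
        if fired and event.malicious:
            tp += 1  # caught real attack activity
        elif fired:
            fp += 1  # alerted on benign activity
        elif event.malicious:
            fn += 1  # missed real attack activity
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall}


def encoded_powershell(event: Event) -> bool:
    """Hypothetical rule derived from a report on encoded PowerShell execution."""
    return event.process.lower() == "powershell.exe" and "-enc" in event.command_line.lower()


events = [
    Event("powershell.exe", "powershell -enc SQBFAFgA", True),
    Event("powershell.exe", "powershell -EncodedCommand JABzAD0A", True),
    Event("powershell.exe", "powershell -enc UwB0AGEAcgB0AA==", False),  # benign admin script
    Event("pwsh.exe", "pwsh -enc SQBFAFgA", True),  # PowerShell 7 binary the rule ignores
    Event("cmd.exe", "cmd /c dir", False),
]

print(score_detection(encoded_powershell, events))
```

The benign administrative script triggers a false positive and the `pwsh.exe` variant slips past the rule, which is the kind of gap that validation against realistic telemetry is meant to expose before a detection ships.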

The April 22 post takes those pieces and ties them together into a practical strategy. Microsoft is arguing that if attackers can use AI to move faster, defenders should use AI to discover more, prioritize better, and remediate sooner. The company is also signaling that it does not want to bet on a single model family, but instead on a multi-model operating approach where models are evaluated, compared, and deployed based on task fit and security value.

There is a market reality underneath this, too. Microsoft’s security stack has become increasingly integrated across Defender, Entra, Purview, Sentinel, and Exposure Management, and the company has been adding AI-assisted capabilities across those surfaces for months. The current announcement is less a one-off product launch than a consolidation of that work into a single narrative: AI is now part of the security control plane, not just a feature bolted on top.

Why Microsoft Is Reframing the Threat

Microsoft’s core thesis is that the old asymmetry in cybersecurity is getting worse in the attacker’s favor. AI-assisted discovery can lower the skill threshold for finding weaknesses, chaining issues, and generating proof-of-concept code, which compresses the defender’s reaction window. That is a meaningful shift because many security programs still assume a human-paced vulnerability lifecycle.

The company’s language also suggests a change in how it views exploitability. It is not enough to know that a vulnerability exists; defenders need to know whether it can be chained, scaled, or operationalized quickly. That is why Microsoft repeatedly emphasizes prioritization, context, and validation rather than simply producing more findings.

The shrinking window

The shrinking window between disclosure and exploitation is the real operational problem. If AI can accelerate recon, exploit chaining, and code generation, then defenders cannot rely on monthly rhythms alone. They need telemetry, exposure management, and patching discipline that assume rapid follow-on abuse.

Microsoft’s position is that this is not just about high-end enterprise targets. The problem extends to internet-facing assets, open-source components, and first-party codebases that can be harvested or chained into broader compromise paths. The more sprawling the estate, the more useful AI becomes to both sides.

- AI reduces the cost of offensive experimentation.

- Small defects can become larger exploit chains faster.

- Exposure management becomes as important as patching.

- Response speed matters more when the attacker’s cycle compresses.

- Detection must be closer to the moment of change.

What changed in practice

Microsoft is not claiming the threat changed in kind; it is claiming the economics changed. The techniques are familiar, but the speed and volume are not. That distinction matters because it points to a defensive response centered on automation, telemetry, and model-assisted triage rather than new security theory.

That framing also aligns with Microsoft’s recent warning that the agent ecosystem itself will be heavily attacked. In other words, the challenge is no longer only malware or phishing; it is also the software and AI systems defenders deploy to automate their own work. That makes governance and inventory foundational, not optional.

Project Glasswing and the Anthropic Partnership

One of the most notable elements of the announcement is Microsoft’s close work with Anthropic under Project Glasswing. Microsoft says it is testing Claude Mythos Preview to identify and mitigate vulnerabilities earlier and to coordinate defensive response, using its own benchmark to assess model performance on detection engineering tasks.

This is strategically interesting because it reinforces Microsoft’s insistence that no single model family should define its security strategy. In practical terms, that means Microsoft wants the freedom to swap in different models depending on task quality, safety, and enterprise fit. That is a very different posture from the old “one assistant for everything” AI narrative.

Why model diversity matters

Security work is not one task. It includes code review, telemetry analysis, threat intelligence interpretation, exploit validation, and remediation planning, all of which stress models in different ways. A model that is strong at one stage may be weaker at another, so a portfolio approach can make more sense than a monogamous one.

Microsoft’s approach also gives it a hedge against model-specific blind spots. If one model performs well on detection engineering but poorly on prioritization, another may fill the gap. That is especially important for enterprises that want defensible, repeatable workflows rather than novelty.

- Project Glasswing is about real-world security workflows.

- Anthropic is one partner, not the strategy.

- Model comparison is being treated as a security control.

- Benchmarking is part of procurement and trust.

- Task-specific performance matters more than broad hype.
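A portfolio approach implies some routing logic. The Python sketch below, assuming hypothetical model names, task labels, and benchmark scores (none of which come from Microsoft or Anthropic), sends each security task to the best-scoring model and falls back to human analysts when no model clears a minimum bar.

```python
from typing import Dict, Mapping, Optional

# Hypothetical per-task benchmark scores on a 0-1 scale. Model names and
# numbers are placeholders, not published evaluation results.
SCORES: Dict[str, Dict[str, float]] = {
    "model-a": {"detection_engineering": 0.81, "prioritization": 0.62, "code_review": 0.74},
    "model-b": {"detection_engineering": 0.70, "prioritization": 0.79, "code_review": 0.77},
    "model-c": {"detection_engineering": 0.66, "prioritization": 0.71, "code_review": 0.83},
}


def route(
    task: str,
    scores: Mapping[str, Mapping[str, float]],
    min_score: float = 0.75,
) -> Optional[str]:
    """Return the best-scoring model for a task, or None to route it to human analysts."""
    candidates = {model: by_task[task] for model, by_task in scores.items() if task in by_task}
    if not candidates:
        return None  # no model has been evaluated on this task yet
    best = max(candidates, key=lambda model: candidates[model])
    return best if candidates[best] >= min_score else None


for task in ("detection_engineering", "prioritization", "code_review", "exploit_validation"):
    print(task, "->", route(task, SCORES) or "human analyst")
```

The threshold is the design choice that matters here: it turns benchmark results into a control, so a task with no qualified model stays with people rather than defaulting to whichever model sounds most fluent.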

The enterprise implications

For enterprises, the bigger implication is that Microsoft is normalizing the idea that security AI will be heterogeneous. Buyers should expect model choice to be tied to workflow, data sensitivity, and governance requirements. That will likely favor platforms that can orchestrate models cleanly rather than those that simply expose the most fluent chatbot.

It also hints that Microsoft wants tighter integration between model providers and its security stack. If model output is going to feed vulnerability discovery, detection engineering, and remediation, then the platform matters as much as the model. That is classic Microsoft: elevate the platform, then make the ecosystem come to it.

AI-Led Vulnerability Discovery in the SDL

Microsoft says it plans to incorporate advanced AI models directly into its Security Development Lifecycle to identify vulnerabilities and develop mitigations earlier. That matters because the SDL has long been one of the company’s most consequential security disciplines, and folding AI into it could scale discovery across far more code than traditional methods can realistically cover.

The promise here is not merely faster scanning. It is a broader and more adaptive discovery process, one that can spot patterns humans might miss and prioritize the issues that are most likely to matter in the field. Microsoft is also applying these capabilities to select open-source codebases, with findings handled through coordinated vulnerability disclosure.

From discovery to mitigation

Microsoft is explicit that discovery is only step one. Its internal experience is that a finding is only useful if it can be validated, prioritized, and turned into a fix. That is a critical distinction because vulnerability management often breaks down not from a lack of data, but from a lack of triage capacity.

The company also says AI-assisted discoveries will move through existing MSRC workflows, including normal monthly update releases and, when necessary, out-of-band patches. That helps connect a novel capability to a familiar operational process, which is exactly what mature security programs need.

- Discovery feeds established response pipelines.

- AI does not replace vulnerability governance.

- Validation still matters before release.

- Coordinated disclosure remains the preferred route.

- Out-of-band updates remain part of the playbook.
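The discovery-to-mitigation flow can be sketched as a small triage step. This Python example is a hypothetical illustration, not MSRC's process: the `Finding` fields, the 0.8 exploitability threshold, and the routing rules are assumptions chosen to show how validation gates a finding and how urgency decides between a monthly release and an out-of-band update.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple


class Channel(Enum):
    NEEDS_VALIDATION = "hold: reproduce before release"
    OUT_OF_BAND = "out-of-band update"
    MONTHLY = "monthly update release"


@dataclass(frozen=True)
class Finding:
    finding_id: str
    validated: bool         # reproduced by an analyst or test harness
    exploitability: float   # hypothetical 0-1 estimate of how easily it can be weaponized
    internet_facing: bool
    exploited_in_wild: bool


def channel_for(finding: Finding) -> Channel:
    """Assumed routing rules: unvalidated findings wait; urgent ones go out of band."""
    if not finding.validated:
        return Channel.NEEDS_VALIDATION
    if finding.exploited_in_wild or (finding.internet_facing and finding.exploitability >= 0.8):
        return Channel.OUT_OF_BAND
    return Channel.MONTHLY


def triage(findings: List[Finding]) -> List[Tuple[str, Channel]]:
    """Rank findings by urgency (validated first) and assign each a release channel."""
    ranked = sorted(
        findings,
        key=lambda f: (f.validated, f.exploited_in_wild, f.internet_facing, f.exploitability),
        reverse=True,
    )
    return [(f.finding_id, channel_for(f)) for f in ranked]


findings = [
    Finding("F1", True, 0.9, True, False),
    Finding("F2", True, 0.4, False, False),
    Finding("F3", False, 0.7, True, False),
    Finding("F4", True, 0.3, False, True),
]

for finding_id, channel in triage(findings):
    print(finding_id, "->", channel.value)
```

Note that F3 looks serious but is held back until it is reproduced, which mirrors the article's point that AI output feeds governance rather than bypassing it.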

Why this could matter at scale

If Microsoft successfully integrates AI into SDL workflows, the benefit is not just for Microsoft products. It could influence how large software vendors think about secure development in an era of machine-speed exploitation. A platform vendor proving that AI can improve its own code hygiene sends a strong signal to the rest of the market.

There is also a customer-facing payoff. Microsoft says it can rapidly deploy corresponding Defender detections alongside fixes, which reduces the time between a vulnerability being understood and a mitigation being available. That kind of paired release model is likely to become a key differentiator in enterprise security purchasing.

Exposure Management and the AI-Ready Posture

Microsoft’s second pillar is AI-ready posture, which is really a way of saying that patching alone is no longer enough. The company highlights five dimensions where autonomous AI-driven attacks gain disproportionate advantage: patching, open-source software, customer source code, internet-facing assets, and baseline security hygiene.

This is where Microsoft’s broader exposure-management story becomes important. Security Exposure Management is increasingly positioned as the place where organizations assess current state, understand prioritized actions, simulate what-if scenarios, and automate remediation. That suggests a shift from static compliance toward living risk management.

The Secure Now concept

Microsoft’s new Secure Now blade is designed to combine guidance with action, so customers can move from recommendation to remediation in one flow. That kind of integration matters because many teams know what they should do but struggle with the operational friction of doing it at scale.

The emphasis on continuous discovery is especially relevant for internet-facing assets, where unknown or forgotten resources often create the easiest entry points. Microsoft Defender External Attack Surface Management is built to map those exposures, classify them, and prioritize what matters most.
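Continuous discovery ultimately comes down to reconciling what is observed from the internet with what the organization believes it owns. The Python sketch below uses hypothetical hostnames and takes the discovered set as given; it does not use the Defender EASM API, and a real reconciliation would likely also cover IP ranges, certificates, and cloud resources.

```python
from typing import Iterable, List, Set, Tuple


def normalize(host: str) -> str:
    """Lowercase a hostname and drop any trailing root dot."""
    return host.strip().lower().rstrip(".")


def reconcile(discovered: Iterable[str], inventory: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Compare externally discovered hosts with the asset inventory.

    Returns (unknown, stale): hosts exposed to the internet that nobody
    inventoried, and inventoried hosts that discovery did not observe.
    """
    seen: Set[str] = {normalize(h) for h in discovered}
    known: Set[str] = {normalize(h) for h in inventory}
    unknown = sorted(seen - known)  # likely forgotten or shadow assets: review these first
    stale = sorted(known - seen)    # retired, renamed, mis-recorded, or not reachable externally
    return unknown, stale


discovered = ["www.contoso.com", "VPN.contoso.com.", "staging-old.contoso.com", "api.contoso.com"]
inventory = ["www.contoso.com", "vpn.contoso.com", "api.contoso.com", "ftp.contoso.com"]

unknown, stale = reconcile(discovered, inventory)
print("unknown:", unknown)
print("stale:", stale)
```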

Posture is becoming a security primitive

The strongest idea in this section is that posture is no longer a secondary dashboard metric. It is becoming the front line of AI-era defense because attackers can use automation to harvest misconfigurations, weak hygiene, and forgotten assets much faster than humans can audit them manually. That makes continuous exposure management a strategic control, not just an operational report.

Microsoft is also trying to make posture more understandable for non-specialists. The notion of “what-if” scenarios and impact simulation is important because it reduces the fear that security hardening must come at the cost of usability. If the platform can show the blast radius before enforcing a control, adoption should be easier.

- Continuous inventory beats periodic guesswork.

- Prioritization should be risk-based, not noisy.

- Simulation can reduce change-management fear.

- Exposure management spans cloud and on-prem.

- AI makes basic hygiene more valuable, not less.
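A what-if simulation can be as simple as replaying recent activity against a proposed control before enforcing it. The following Python sketch assumes a hypothetical `SignIn` record and uses blocking legacy authentication as the example control; it is not how Security Exposure Management implements simulation, only an illustration of computing a blast radius before enforcement.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Set


@dataclass(frozen=True)
class SignIn:
    user: str
    app: str
    legacy_auth: bool  # sign-in used a legacy protocol without modern authentication


def what_if_block_legacy_auth(sign_ins: Iterable[SignIn]) -> Dict[str, Set[str]]:
    """Simulate blocking legacy authentication without enforcing anything.

    Returns the users and apps whose recent sign-ins would have been blocked.
    """
    affected = [s for s in sign_ins if s.legacy_auth]
    return {
        "users": {s.user for s in affected},
        "apps": {s.app for s in affected},
    }


recent = [
    SignIn("alice", "Outlook", False),
    SignIn("bob", "Legacy IMAP client", True),
    SignIn("printer-svc", "Scan-to-email", True),
    SignIn("alice", "Teams", False),
]

blast_radius = what_if_block_legacy_auth(recent)
print(sorted(blast_radius["users"]), sorted(blast_radius["apps"]))
```

Learning in advance that a service account such as `printer-svc` would break lets a team fix that dependency first and then enforce the control with confidence.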

Detections, Defender, and the Response Layer

Microsoft says it will deploy detections to Microsoft Defender when updates are released and share details through MAPP partners to help mitigate risk. That is significant because it connects vulnerability discovery to downstream threat protection in a way that can reduce the lag between a fix and the creation of practical defenses.

The company’s messaging also reinforces a long-standing Microsoft pattern: pair product updates with ecosystem coordination. MAPP exists specifically to give defensive security providers early access before Update Tuesday, helping them ship protections in sync with Microsoft’s own release cadence.

Why detection timing matters

Detection timing matters because exploitation windows are shrinking. If a vulnerability is actively being chained or weaponized, then a signature, rule, or behavior detection can sometimes buy defenders the time they need even before patch deployment finishes. That is especially useful in large organizations where patch cycles are never instantaneous.

Microsoft’s plan to “sim-ship” detections, releasing them simultaneously with the corresponding updates, is therefore more than a product detail. It is a recognition that modern defense needs synchronized layers: patch, detect, monitor, and inform. One without the others leaves an avoidable gap.
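The value of sim-shipping can be shown with simple day counting. The Python sketch below measures days from disclosure and treats a deployed detection as protection, which is a deliberate simplification: a detection only helps against exploitation it actually catches. The scenario's day values are hypothetical.

```python
from typing import Optional


def unprotected_days(
    exploit_start: int,
    patch_applied: int,
    detection_available: Optional[int] = None,
) -> int:
    """Days a host faces active exploitation with neither a patch nor a detection.

    All arguments are days after disclosure (day 0). Treating a detection as
    protection is a simplification: it only covers exploitation the rule catches.
    """
    if detection_available is None:
        covered_from = patch_applied
    else:
        covered_from = min(patch_applied, detection_available)
    return max(0, covered_from - exploit_start)


# Hypothetical scenario: exploitation begins on day 2 and the patch
# reaches this host on day 14.
print(unprotected_days(2, 14))     # 12: no detection at all
print(unprotected_days(2, 14, 7))  # 5: detection ships a week after the update
print(unprotected_days(2, 14, 0))  # 0: detection sim-shipped with the update
```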

What customers should notice

Customers should notice that Microsoft is increasingly tying security value to operational choreography. The best defense is no longer just a patch or a rule; it is the timing and sequencing of the entire response package. That will reward organizations that can ingest updates and validate defenses quickly.

The other signal here is that Microsoft wants Defender to be the place where new detections land first. For customers already invested in that ecosystem, the integration story gets stronger. For everyone else, the message is that security tooling without close linkage to vendor remediation may start to feel slower by comparison.

- Detections and fixes should ship together.

- Early warning only helps if it is actionable.

- Defender becomes a key response distribution point.

- MAPP remains an important channel for partners.

- Timing is now a competitive security advantage.

Enterprise Impact Versus Consumer Reality

For enterprises, the announcement is really about process maturity. Microsoft is telling customers that if they run its cloud services, much of the mitigation burden is absorbed for them, but if they self-host or run on-premises infrastructure, staying current is becoming a fundamental requirement rather than a best practice. That is a strong statement, and it reflects how much of the new threat model rests on attackers exploiting delayed patching.

For consumers, the changes are mostly indirect. End users will benefit when Microsoft’s enterprise protections improve the quality of patches, detections, and platform defaults, but they are not the primary audience for this announcement. The real audience is security teams, IT administrators, and CISOs who need to manage large, heterogeneous estates.

Cloud-managed versus self-managed

Microsoft’s distinction between PaaS/SaaS and customer-managed environments is crucial. In cloud services, mitigations can be applied automatically, which reduces the burden on customers. In self-managed environments, however, the organization bears the burden of speed, inventory, and disciplined change management.

That distinction will likely become sharper as AI-assisted exploitation accelerates. The latency of human approval chains, maintenance windows, and validation cycles is now a security variable in its own right. Teams that can shorten those cycles are going to be materially safer.
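Treating latency as a security variable means measuring it. This Python sketch sums hypothetical stage durations for a self-managed patch cycle and compares the total with an assumed exploitation window; the stage names, hours, and 72-hour window are illustrative, not figures from Microsoft.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass(frozen=True)
class Stage:
    name: str
    hours: float


def latency_report(stages: List[Stage], exploit_window_hours: float) -> Dict[str, Union[float, str]]:
    """Compare end-to-end remediation latency with an assumed exploitation window."""
    total = sum(stage.hours for stage in stages)
    slowest = max(stages, key=lambda stage: stage.hours)
    return {
        "total_hours": total,
        "over_budget_hours": max(0.0, total - exploit_window_hours),
        "slowest_stage": slowest.name,  # the first place to look for time savings
    }


# Hypothetical self-managed patch cycle; all durations are illustrative.
self_managed = [
    Stage("change approval", 48),
    Stage("maintenance window wait", 96),
    Stage("validation testing", 24),
    Stage("deployment", 12),
]

print(latency_report(self_managed, exploit_window_hours=72))
```

In this made-up cycle the wait for a maintenance window alone exceeds the assumed exploitation window, which illustrates why scheduling and approval, not just the patch itself, belong in the security conversation.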

Governance is becoming a business issue

The implication for business leaders is straightforward: exposure management is now an operating model question, not just a technical one. If AI can surface more issues faster, organizations need governance to decide which issues deserve immediate remediation and which can wait. Without that discipline, AI just generates more noise.

That is why Microsoft keeps returning to context and actionability. A long list of potential vulnerabilities is not useful unless it comes with prioritization, ownership, and a workflow to close the loop. Enterprises that treat the output as an input to process rather than as a final answer will get the most value.

- Cloud customers get more automatic protection.

- Self-hosted estates need tighter patch discipline.

- Governance decides what gets fixed first.

- Security teams need faster change windows.

- AI output without process can become noise.

The Competitive Landscape

Microsoft’s announcement also has competitive implications. By pairing AI model partnerships with its own security platform, Microsoft is trying to own both the intelligence layer and the remediation layer. That puts pressure on rival security vendors that may offer strong point solutions but lack the same breadth across identity, endpoint, cloud, and collaboration surfaces.

It also raises the bar for model providers. If security benchmarks like CTI-REALM and integrated workflows become important buying signals, then model performance will be judged less by generic capability and more by operational usefulness in security tasks. That is a very different market from consumer chatbots or general coding assistants.

Why Microsoft’s stack matters

Microsoft’s advantage is distribution. It already has a huge installed base across Microsoft 365, Entra, Defender, Sentinel, and Azure, which means it can embed AI-driven security workflows into tools customers already use. That integration story is likely to resonate with enterprises that prefer fewer vendors and tighter control planes.

But integration cuts both ways. The more Microsoft centralizes the security workflow, the more customers will expect consistency, transparency, and predictable outcomes. If model-driven suggestions are not accurate or actionable, trust can erode quickly.

A platform play, not just a feature play

This is fundamentally a platform play. Microsoft is trying to make AI-assisted security one of the reasons to live inside its ecosystem, while also making the ecosystem more defensive by default. The model partnership is interesting, but the real story is the connective tissue between model, benchmark, telemetry, and remediation.

That could be difficult for smaller vendors to match. Niche innovators may outpace Microsoft in specialized areas, but they may struggle to provide the same cross-domain orchestration. In a world where the best defense is coordinated action, that orchestration will matter more than ever.

- Integration is becoming a moat.

- Benchmarks are becoming procurement signals.

- Security AI is moving from demo to workflow.

- Broad platforms can outcompete narrow tools.

- Trust depends on accurate, actionable output.

Strengths and Opportunities

Microsoft’s announcement has real strengths because it aligns model capability with operational security pain points. It is not trying to sell AI as a vague productivity booster; it is positioning AI as a way to discover, prioritize, and mitigate risk faster. That framing is more credible, and it maps well to how security teams actually work. Actionable security beats abstract innovation every time.

- Multi-model flexibility reduces dependence on one provider.

- CTI-REALM gives the industry a more realistic benchmark.

- Secure Now can shorten the path from insight to action.

- Defender EASM strengthens visibility into internet-facing assets.

- Baseline Security Mode can improve default posture across core Microsoft 365 services.

- Sim-shipped detections can reduce the gap between patch and protection.

- Coordinated disclosure helps keep the process disciplined and credible.

Risks and Concerns

There is also real risk in this approach. The first concern is overreliance: if organizations assume AI will compensate for weak hygiene, they may postpone the basics that still matter most, such as patching, asset inventory, and least privilege. Microsoft itself warns that patching alone is not enough, but the reverse is also true: AI alone is not enough either.

- False confidence could replace disciplined security work.

- Model errors may produce noisy or misleading output.

- Operational overload could happen if findings are not well prioritized.

- Opaque decisions can create governance and audit concerns.

- Vendor lock-in may increase if the workflow becomes too integrated.

- Self-managed environments may struggle to keep pace.

- Attackers will also use the same AI tools to improve offense.

There is also a market risk. If every vendor starts promising AI-driven everything, customers may face a new wave of feature inflation disguised as innovation. The real test will be whether these tools measurably reduce exposure, shorten remediation times, and improve detection quality in live environments.

What to Watch Next

The next phase of this story will be about execution, not announcement. Microsoft says the scanning harness it has built internally will be productized and previewed in June 2026, and that will be a key milestone for anyone trying to understand how serious this shift is in practice. The quality of that preview will tell us whether the company can turn AI-powered research into repeatable enterprise workflows.

Watch also for how quickly Microsoft expands model testing beyond Anthropic. The company has said the multi-model approach is intentional, so the market should expect more comparisons, more task-specific evaluations, and more integrations when models prove their worth. That could reshape how customers evaluate AI vendors in security.

Key things to monitor

- The June 2026 preview of the multi-model scanning harness.

- Whether CTI-REALM gains broader industry adoption as a standard benchmark.

- How Microsoft balances automation with human verification in security workflows.

- Whether Secure Now becomes a widely used entry point for posture remediation.

- How customers in self-managed environments adapt to the new patching expectations.
