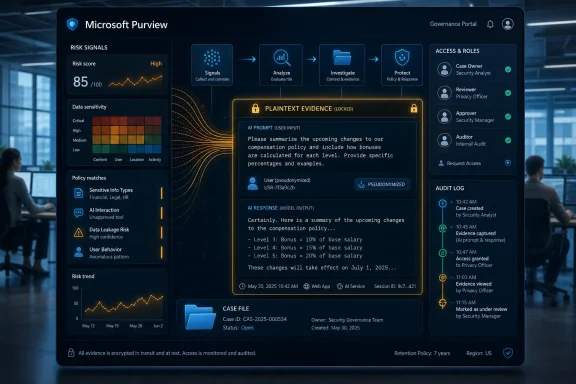

Microsoft is preparing a Microsoft Purview capability that lets authorized enterprise reviewers read flagged AI prompts and responses in plaintext, with preview activity in May 2026 and general availability expected in June 2026 for organizations using the relevant compliance controls. That sounds like a small administrative upgrade until you understand what is being upgraded: the evidence trail around workplace AI use is moving from signals, scores, and policy matches into the actual words employees typed and the answers AI systems returned. The security case is obvious. The workplace-trust problem is just as obvious.

The new Purview path is not, on paper, a universal transcript viewer for every Copilot session in the company. Microsoft is framing it as a case-review capability for risky or policy-matching interactions, surrounded by pseudonymization, role-based access controls, deanonymization permissions, and audit logging. But in practice, the important shift is that a flagged AI exchange can become readable evidence inside an enterprise investigation. For administrators, compliance officers, legal teams, and security leaders, that is powerful. For employees, contractors, and anyone typing into AI under a managed tenant, it is a reminder that prompts are not private thoughts. They are workplace records.

Microsoft Moves AI Governance From Dashboard Abstraction to Human Review

Enterprise AI governance has spent the past two years hiding behind reassuring vocabulary. Vendors talk about visibility, posture management, compliance signals, risky interactions, and data protection. Those phrases are not meaningless, but they are soft around the edges. They imply pattern recognition and policy enforcement without forcing everyone to confront the moment when a human reviewer reads a worker’s actual AI prompt.

Purview’s new plaintext review capability makes that moment explicit. If a Communication Compliance policy flags an AI exchange, the prompt and response can become part of the case material. A reviewer is no longer just looking at the fact that a sensitive data classifier fired, or that a user interacted with an unsanctioned AI app, or that an insider-risk score moved upward. The reviewer may be looking at the sentence that triggered the policy.

That distinction matters because prompts are often more revealing than file activity. A file name might show that a spreadsheet exists; a prompt can reveal what the worker was trying to do with it. A browser event might show that someone visited ChatGPT; a prompt can show whether they pasted customer records, pre-release code, merger language, disciplinary notes, or a regulatory memo. AI usage logs collapse intent, content, and workflow into a single artifact.

Microsoft’s argument is that enterprises need this level of evidence because AI has become part of actual work. Employees are not merely experimenting with chatbots in a sandbox. They are asking AI systems to summarize confidential documents, rewrite customer emails, generate code, compare contracts, draft policies, and troubleshoot production systems. If organizations cannot see the risky subset of those interactions, they cannot investigate leakage, harassment, regulatory violations, or misuse with much precision.

That is the security case, and it is a strong one. The unresolved question is whether enterprises will treat plaintext review as a last-mile investigative tool or as a normalized layer of managerial visibility. Purview can provide the rails, but organizational culture decides how often the train runs.

Pseudonymization Is the Guardrail, Not the Destination

Microsoft’s privacy positioning rests heavily on pseudonymization. Communication Compliance is designed so reviewers begin with masked identities, and user names are not supposed to be casually visible at the start of a review. That model reflects a lesson Microsoft has learned before: administrative visibility into employee activity becomes politically radioactive when it looks like workplace surveillance.

The design is meant to slow down the jump from “something risky happened” to “this named employee did it.” A reviewer can examine the policy match, assess the seriousness of the exchange, and escalate only if the situation warrants it. Deanonymization is supposed to require authorization, with role limits and audit logs recording what happened.

That sequencing is important. In a mature compliance program, it can separate legitimate investigation from curiosity, overreach, or fishing expeditions. A security analyst might see that a pseudonymized user pasted sensitive regulated data into an external AI service. A compliance reviewer might determine that the exchange is harmless or that it requires escalation. Only then should a named identity enter the case.

But pseudonymization is not the same as privacy. It is a procedural delay on identifiability, not a guarantee that content will remain unseen. Once the prompt itself is readable, many exchanges may be identifying even without a displayed name. The subject matter, writing style, project references, customer accounts, team names, or ticket details can point to a small number of people.

That is why the real governance question is not whether Microsoft masks user names by default. The real question is who inside the customer organization can approve the move from masked review to named investigation, how that approval is documented, and whether anyone reviews the reviewers. Audit logs are useful only if someone has the authority and habit to inspect them.
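The masked-review-then-reveal sequence can be illustrated with a minimal Python sketch. It is not how Purview implements pseudonymization; the `IdentityVault` class, the `pseudonymize` helper, the example key, and the rule that the approver must differ from the requester are all assumptions made for illustration. The point it tries to show is that every reveal attempt, granted or denied, leaves an audit entry that someone can later inspect.

```python
import hashlib
import hmac
from datetime import datetime, timezone
from typing import Optional

# Hypothetical secret held by the platform, never by reviewers.
PSEUDONYM_KEY = b"example-key-for-illustration-only"


def pseudonymize(user_id: str) -> str:
    """Derive a stable masked label from a user ID using a keyed hash."""
    digest = hmac.new(PSEUDONYM_KEY, user_id.encode("utf-8"), hashlib.sha256)
    return "User-" + digest.hexdigest()[:8]


class IdentityVault:
    """Maps pseudonyms back to identities and logs every reveal attempt."""

    def __init__(self) -> None:
        self._identities = {}
        self.audit_log = []

    def register(self, user_id: str) -> str:
        pseudonym = pseudonymize(user_id)
        self._identities[pseudonym] = user_id
        return pseudonym

    def reveal(self, pseudonym: str, requester: str,
               approver: Optional[str], justification: str) -> str:
        # Assumed rule: a written justification plus a second person's approval.
        granted = (
            bool(justification.strip())
            and approver is not None
            and approver != requester
        )
        # Log the attempt whether or not it succeeds.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "pseudonym": pseudonym,
            "requester": requester,
            "approver": approver,
            "justification": justification,
            "granted": granted,
        })
        if not granted:
            raise PermissionError("deanonymization requires justification and a separate approver")
        return self._identities[pseudonym]
```

Note that the sketch masks only the user name. As the section argues, the prompt text itself may still identify the author, and no keyed hash addresses that.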

Communication Compliance Becomes the Front Door for AI Evidence

The rollout lands inside an already expanding Purview model for generative AI. Microsoft’s Communication Compliance policies can be scoped to specific users and groups, and AI applications can be included as policy locations. That includes Microsoft Copilot experiences, enterprise AI apps connected through Microsoft Entra or Purview Data Map controls, and other AI apps detected through browser or network activity.

This is not just about Microsoft 365 Copilot. The governance perimeter is broader. Microsoft is building Purview to see AI activity across first-party Copilot experiences, Copilot Studio agents, Security Copilot, Fabric-related Copilot workflows, Foundry-built apps and agents, and connected third-party or external AI services where the tenant has the right controls in place. In some deployments, that can include enterprise AI applications registered in Entra; in others, it can include consumer AI tools accessed through managed browsers, endpoints, or networks.

That breadth is both the point and the problem. AI is not a single application anymore. It is a feature embedded into productivity suites, developer workflows, security consoles, business applications, and browsers. A compliance system that only sees one Copilot entry point would miss much of the behavior enterprises actually worry about.

Purview’s answer is to treat AI interactions as another communication and data-risk surface. Prompts and responses can appear as policy matches when they trigger Communication Compliance conditions. Those matches can then be investigated, remediated, escalated, resolved, exported, or folded into broader case workflows.

The phrase policy match does a lot of work here. It suggests a narrow, rules-driven process rather than indiscriminate monitoring. But customers can define policies broadly or narrowly. A conservative organization might limit review to obvious leakage of sensitive information, threats, harassment, or regulated data. A more aggressive organization might build policies that capture a much wider set of AI interactions in the name of risk discovery.

The Same Prompt Can Mean Different Things to Security, Legal, and HR

Plaintext prompt review will not belong to one department. That is where enterprises should expect friction. Security teams will see AI prompts as possible exfiltration events. Compliance teams will see records-retention, supervision, and regulatory exposure. Legal teams will see discovery, privilege, and investigation material. HR may see employee misconduct, harassment, or policy violations.

The same exchange can plausibly belong to all of those categories. A worker asking an external AI tool to summarize a customer complaint might be creating a data leakage issue, a retention issue, a contractual confidentiality issue, and an HR issue if the prompt includes inappropriate commentary. Once the text is available, different stakeholders will want to interpret it through their own mandates.

That is why access design cannot be an afterthought. A company that gives broad reviewer rights to a generic security group may discover that it has created a new internal surveillance surface. A company that locks access too tightly may leave investigators unable to understand real incidents. The balance is not technical alone; it is institutional.

The cleanest model is likely a tiered one. First-line reviewers see pseudonymized policy matches and limited context. Escalation requires documented justification. Deanonymization requires a separate role or approval path. Legal and HR access is case-based rather than standing. Periodic audits test whether reviewers are opening content in proportion to the risk.

That model is slower than simply granting a powerful admin role. It is also the difference between a compliance tool and a trust crisis.
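The tiered model can be written down as an explicit permission check, which makes it easier to review than a role description in a policy document. The following is a minimal sketch only: the role names, the `Action` values, and the `is_permitted` function are hypothetical and do not correspond to actual Purview role groups or APIs.

```python
from enum import Enum


class Action(Enum):
    VIEW_MASKED_MATCH = "view_masked_match"
    ESCALATE = "escalate"
    DEANONYMIZE = "deanonymize"
    VIEW_CASE = "view_case"


# Hypothetical role-to-action mapping for the tiers described above.
ROLE_ACTIONS = {
    "first_line_reviewer": {Action.VIEW_MASKED_MATCH, Action.ESCALATE},
    "deanonymization_approver": {Action.DEANONYMIZE},
    "legal": {Action.VIEW_CASE},
    "hr": {Action.VIEW_CASE},
}

# Roles whose access is case-based rather than standing.
CASE_SCOPED_ROLES = {"legal", "hr"}

# Actions that require a documented justification.
JUSTIFIED_ACTIONS = {Action.ESCALATE, Action.DEANONYMIZE}


def is_permitted(role, action, justification="", case_id=None,
                 assigned_case_ids=frozenset()):
    """Return True only if the role, justification, and case scope all allow the action."""
    if action not in ROLE_ACTIONS.get(role, set()):
        return False
    if action in JUSTIFIED_ACTIONS and not justification.strip():
        return False
    if role in CASE_SCOPED_ROLES and case_id not in assigned_case_ids:
        return False
    return True
```

For example, `is_permitted("first_line_reviewer", Action.DEANONYMIZE, justification="urgent")` returns `False` in this sketch, because first-line reviewers never hold the deanonymization right regardless of the justification offered.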

AI Prompts Are Not Like Email, Even When Compliance Treats Them That Way

Microsoft’s Purview heritage makes the feature feel familiar. Enterprises already use compliance tools to review email, Teams messages, files, audit logs, DLP events, insider-risk alerts, and legal-hold material. In regulated industries, communications supervision is not exotic. It is mandatory.

AI prompts, however, are a strange new class of workplace record. They are communication, search query, scratchpad, code request, data-processing instruction, and confession booth all at once. Employees often type into AI systems the way they think, not the way they write formal email. They paste fragments, unfinished ideas, confidential material, and blunt summaries they would never send to another person.

That makes prompt review unusually sensitive. A prompt can reveal not only what data an employee handled, but what they intended to infer, automate, summarize, translate, conceal, or send elsewhere. The AI response adds a second layer: it may show generated code, advice, rewritten language, or hallucinated material that shaped the next step in the worker’s process.

For security teams, that richness is exactly why plaintext matters. Metadata may not tell whether a user pasted a harmless public paragraph or an unreleased product plan. It may not reveal whether an AI response encouraged unsafe code, mishandled regulated data, or produced discriminatory language. The actual exchange resolves ambiguity.

For privacy-minded employees, the same richness is the danger. The more useful the evidence, the more intrusive the review.

Microsoft Is Still Managing the Shadow of Productivity Score

Microsoft knows this terrain is dangerous because it has walked near it before. The company’s 2020 Productivity Score controversy became a shorthand for employee-surveillance anxiety in Microsoft 365. Microsoft later adjusted the product and messaging, emphasizing organizational trends rather than individual worker monitoring, but the memory remains.

Purview’s AI review feature is different in purpose. It is aimed at compliance and risk investigation, not productivity ranking. It has pseudonymization and case workflows rather than a manager-friendly scoreboard. It is part of a regulated evidence-handling system, not a workplace performance dashboard.

Still, the trust boundary is related. Microsoft is once again giving enterprise customers deeper visibility into worker activity inside Microsoft 365 and adjacent services. This time the object is not meetings, chats, or document collaboration patterns. It is the employee’s interaction with generative AI.

The distinction will matter to IT pros trying to explain the rollout internally. “This is not productivity monitoring” may be true. It may also be insufficient. Employees will want to know whether their AI prompts can be read, by whom, under what circumstances, and whether they will be told when an interaction becomes part of a case.

A policy that exists only in Purview configuration is not enough. Organizations need a human-readable AI usage notice that says, plainly, that flagged prompts and responses may be reviewed. If enterprises want employees to use approved AI tools instead of hiding in personal accounts, they cannot make the approved path feel like a trap.

The Competitive Market Is Converging on Admin Visibility

Microsoft is not the only vendor selling enterprise AI through the language of control. Google’s Gemini for Workspace pitch leans on existing administrative settings, data protections, and enterprise security boundaries. Anthropic has been expanding business controls around Claude, including administrative oversight and compliance-oriented APIs. OpenAI, too, has increasingly separated enterprise offerings from consumer assumptions around data handling and administration.

This convergence is inevitable. Enterprises do not buy AI at scale merely because the model is clever. They buy it when procurement, legal, security, and compliance believe the deployment can be governed. That means identity integration, auditability, retention, data controls, DLP, eDiscovery, and some way to understand misuse.

Microsoft’s advantage is that Purview already sits where many of those workflows live. If an organization uses Microsoft 365, Entra, Defender, Purview, Teams, SharePoint, Exchange, and Copilot, the gravitational pull is obvious. AI governance becomes another layer in the Microsoft control plane.

The risk is lock-in by investigation. Once AI prompts, policy matches, identities, sensitivity labels, audit logs, endpoint signals, and case workflows all converge in Purview, the compliance story becomes operationally hard to replace. Microsoft is not merely selling Copilot as an assistant. It is selling the oversight layer that makes Copilot and neighboring AI systems acceptable to risk committees.

That may be good architecture for Microsoft customers. It is also a reminder that enterprise AI is becoming less about the chatbot window and more about the administrative substrate underneath it.

Admins Need Rules Before the Feature Arrives, Not After the First Incident

The June 2026 general availability target gives organizations little room for lazy governance. If the feature arrives enabled or easily activated inside existing Purview workflows, many tenants will discover the policy implications only when the first sensitive case appears. That is backwards.

The right time to define review rules is before plaintext access is operational. Security and compliance teams should decide what policy categories justify content review, what categories justify deanonymization, and which roles are allowed to perform each step. They should also decide how long prompt-review evidence is retained, whether employees receive notice in acceptable-use policies, and how legal privilege is handled.

There is also a training problem. Reviewers need to understand that AI prompts may contain sensitive data unrelated to the incident. A prompt flagged for financial information might also contain health data, employee relations details, source code, or customer secrets. Reading the prompt may expose the reviewer to material they were never supposed to see.

That is not a reason to avoid review. It is a reason to design it narrowly. The best internal policy will treat plaintext AI review as a controlled escalation, not as a casual investigative convenience.

Administrators should also test false positives. If a policy floods reviewers with marginal AI matches, the organization will either normalize over-review or ignore the queue. Neither outcome is healthy. The goal should be fewer, better, better-governed cases.
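One way to test false positives is to track, per policy, what fraction of reviewed matches turned out to be real issues. The sketch below assumes reviewer dispositions have already been exported as `(policy_name, outcome)` pairs; that export format, the outcome labels, and the thresholds are hypothetical choices for illustration, not a Purview feature.

```python
from collections import Counter


def policy_precision(dispositions):
    """Return {policy: share of reviewed matches that were confirmed issues}."""
    totals = Counter()
    confirmed = Counter()
    for policy, outcome in dispositions:
        totals[policy] += 1
        if outcome == "confirmed":
            confirmed[policy] += 1
    return {policy: confirmed[policy] / totals[policy] for policy in totals}


def noisy_policies(dispositions, min_precision=0.2, min_samples=5):
    """List policies with enough reviewed matches and a low confirmed share."""
    dispositions = list(dispositions)
    totals = Counter(policy for policy, _ in dispositions)
    precision = policy_precision(dispositions)
    return sorted(
        policy
        for policy, share in precision.items()
        if totals[policy] >= min_samples and share < min_precision
    )
```

A policy that lands in the `noisy_policies` list is a candidate for tighter scoping before its queue teaches reviewers either to over-read or to stop reading.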

The June 2026 Deadline Turns AI Trust Into a Configuration Problem

The practical lesson for Purview customers is that AI governance is no longer a theoretical committee topic. It is a permissions model, a policy scope, an audit trail, and a reviewer workflow. The organizations that handle this well will be the ones that make access rare, justified, logged, and explainable.

- Organizations should assume that flagged AI prompts and responses may become reviewable workplace records inside Microsoft Purview.

- Administrators should separate pseudonymized review rights from deanonymization rights instead of granting both to the same broad group.

- Communication Compliance policies should be scoped tightly enough that reviewers are not buried in low-value AI matches.

- Employee AI usage policies should state clearly that risky or policy-matching prompts and responses can be reviewed under defined conditions.

- Audit logs for prompt review should be actively examined, not merely retained for appearances.

- Legal, HR, security, and compliance teams should agree in advance on when an AI exchange becomes an investigation rather than a security alert.
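Actively examining audit logs can start with a small recurring check. The sketch below assumes audit events are available as dictionaries with `reviewer`, `action`, `case_id`, and `justification` keys; that schema, the action names, and the per-reviewer limit are hypothetical and would need to be mapped onto whatever the real export contains.

```python
from collections import Counter


def review_audit_events(events, max_opens_per_reviewer=20):
    """Return human-readable findings that deserve a second look."""
    findings = []
    opens = Counter()
    for event in events:
        action = event["action"]
        # A deanonymization with no recorded justification is always flagged.
        if action == "deanonymize" and not event.get("justification", "").strip():
            findings.append(
                f"{event['reviewer']}: deanonymized {event['case_id']} without justification"
            )
        if action == "open_plaintext":
            opens[event["reviewer"]] += 1
    # Flag reviewers who opened more plaintext exchanges than the assumed limit.
    for reviewer, count in sorted(opens.items()):
        if count > max_opens_per_reviewer:
            findings.append(
                f"{reviewer}: opened {count} plaintext exchanges (limit {max_opens_per_reviewer})"
            )
    return findings
```

The output is deliberately a short list of findings rather than a dashboard, on the assumption that someone outside the reviewer group reads it on a fixed schedule.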

Source: WinBuzzer https://winbuzzer.com/2026/05/11/mi...s-read-flagged-ai-prompts-in-plaintex-xcxwbn/