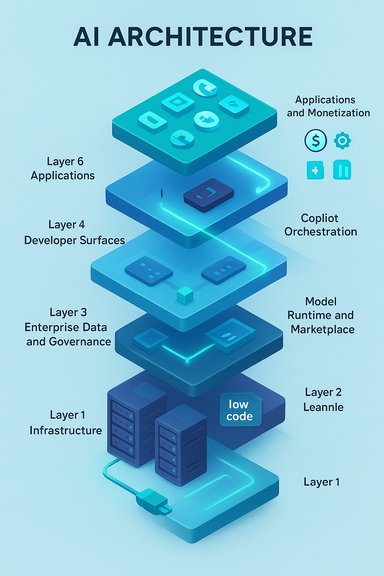

Microsoft’s AI strategy is best understood not as a single product bet but as a deliberate, cross‑stack play: a six‑layer architecture that turns raw scale into recurring revenue, developer lock‑in, and real‑world product adoption—what FourWeekMBA frames as Microsoft’s Six‑Layer Advantage.

Source: FourWeekMBA Microsoft’s Six-Layer Advantage - FourWeekMBA

Background / Overview

Microsoft’s recent investor messaging and industry analyses converge on a single idea: AI monetization requires more than models; it requires ownership (or control) of every layer that sits between silicon and end‑user workflows. The Six‑Layer Advantage is an analytical framework that captures this multi‑pronged strategy, from hyperscale infrastructure to Copilot‑first user experiences and verticalized applications.

This article breaks down each layer, verifies the most important technical and financial claims, and evaluates the strengths and risks in practical terms for enterprise IT teams, Windows administrators, and informed investors. Where possible, claims are cross‑checked with independent reporting and primary company communications; unverifiable or interpretive claims are flagged for caution.

What the Six Layers Are (concise map)

Microsoft’s six layers, as presented in the FourWeekMBA analysis and mirrored in public disclosures and analyst coverage, can be summarized as:

- Layer 1 — Infrastructure (chips, GPUs, data centers): the physical compute and networking footprint for training and inference.

- Layer 2 — Enterprise Data & Governance (Fabric and identity): the secure, governed data plane that provides a single source of truth for retrieval‑augmented workflows.

- Layer 3 — Model Runtime & Marketplace (Foundry / multi‑model orchestration): runtime environments, model catalogs, and routers that choose the right model for the right task.

- Layer 4 — Developer Surfaces (pro‑code & low‑code): tooling for engineers and citizen developers — from SDKs to Copilot Studio — to build, tune, and deploy agents.

- Layer 5 — Copilot Orchestration (frontend as platform): the conversational / agent layer that surfaces models and agents to users inside Office, Windows, Edge, and vertical apps.

- Layer 6 — Applications & Monetization (Microsoft 365, vertical solutions): packaged products, per‑seat and consumption pricing, and device/partner channels that convert usage into predictable revenue.

Layer 1 — Infrastructure: The Capital Foundation

What Microsoft controls

Microsoft’s advantage starts with hyperscale infrastructure: custom data centers, GPU fleets, networking, and power capacity that support both training and inference at scale. The company has publicly signaled multi‑year capital investment to expand AI‑ready capacity, and financial reporting shows material increases in capex tied to data‑center buildout.

Why it matters

- Cost control: Owning the data‑center footprint and negotiating large hardware orders provides leverage on cost per token/inference.

- Latency and locality: In‑region hosting reduces latency, and private link architectures keep traffic off the public internet, which lowers risk and eases compliance for regulated enterprises.
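The cost‑control argument comes down to unit economics: an accelerator's amortized purchase price, power, and operating overhead are spread across the tokens it serves, so scale and high utilization push the cost per token down. The sketch below makes that arithmetic explicit under a simplified straight‑line model; every input (prices, power draw, throughput, utilization) is a hypothetical parameter, not a Microsoft or vendor figure.

```python
def cost_per_million_tokens(
    hardware_capex_usd: float,  # hypothetical purchase price of one accelerator server
    amortization_years: float,  # assumed depreciation period
    power_kw: float,            # assumed average power draw, including cooling
    usd_per_kwh: float,         # assumed electricity price
    ops_usd_per_hour: float,    # assumed staffing, networking, and facility overhead
    tokens_per_second: float,   # assumed serving throughput when busy
    utilization: float,         # fraction of hours serving real traffic, in (0, 1]
) -> float:
    """Estimate the fully loaded cost of serving one million tokens."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    lifetime_hours = amortization_years * 365 * 24
    # Hourly cost: straight-line capex amortization plus power plus operations.
    hourly_cost = (
        hardware_capex_usd / lifetime_hours
        + power_kw * usd_per_kwh
        + ops_usd_per_hour
    )
    # Only utilized hours produce billable tokens, so idle time inflates unit cost.
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_cost / tokens_per_hour * 1_000_000
```

Because utilization sits in the denominator, halving it doubles the unit cost in this model, so keeping fleets busy matters as much as the purchase price.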

Risks and operational challenges

- Capital intensity: Heavy capex reduces short‑term margins and raises breakeven for new infrastructure. Independent reporting notes capex increases into the tens of billions as Microsoft scales GPU capacity.

- Supply chain & power constraints: GPUs, custom accelerators, and grid capacity are real bottlenecks; these are not solved overnight and require multi‑year planning.

Layer 2 — Enterprise Data & Governance: Fabric as the Control Plane

The promise

Microsoft’s Fabric (and adjacent identity and governance services) aims to keep enterprise context close to models without wholesale data movement. It provides governance, semantics, indexing, and retrieval‑augmented generation (RAG) pipelines so that copilots and agents can reason over company artifacts securely.

Why it’s strategic

- Trust & compliance: Enterprises are cautious about data privacy and regulation. A governed data plane reduces barriers to adoption for regulated industries.

- Operational efficiency: Keeping data close and annotated reduces retrieval costs and improves answer accuracy for domain‑specific agents.
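The promise of a governed data plane, letting copilots reason over company artifacts without exposing anything a user could not already read, is commonly realized as permission‑trimmed retrieval: filter by access rights first, then rank, then ground the prompt. The sketch below is a minimal illustration under stated assumptions; the `Document` type, the group‑based ACL, and the keyword‑overlap ranking are hypothetical stand‑ins, not Fabric or Microsoft Graph APIs, and a real system would use an identity provider and a vector or hybrid index.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]  # hypothetical ACL: groups permitted to read the document


def retrieve(query: str, docs: List[Document], user_groups: Set[str], top_k: int = 3) -> List[Document]:
    """Return up to top_k readable documents, ranked by naive keyword overlap."""
    terms = set(query.lower().split())
    # Security trimming happens before ranking, so text the user cannot read
    # never reaches the ranking step or the model prompt.
    visible = [d for d in docs if d.allowed_groups & user_groups]
    scored = [(len(terms & set(d.text.lower().split())), d) for d in visible]
    hits = [(score, d) for score, d in scored if score > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in hits[:top_k]]


def build_prompt(query: str, context: List[Document]) -> str:
    """Ground the answer in retrieved, permission-checked passages, with citations."""
    sources = "\n".join(f"[{d.doc_id}] {d.text}" for d in context)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"
```

With a finance‑only document in the corpus, a user who belongs only to an all‑staff group gets an empty result for a finance query, so the model never sees that text at all.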

Caveats

- Vendor lock‑in: A tightly integrated data layer increases switching friction; companies must weigh that against the productivity gains. This is a design trade‑off inherent in the model.

- Attack surface: Centralized semantic layers are high‑value targets; governance is necessary but not sufficient, and the layer requires continual security hardening.

Layer 3 — Model Runtime & Marketplace: Azure AI Foundry and the Router Idea

What Foundry does

Foundry is Microsoft’s multi‑model runtime and marketplace where enterprises can host, route between, and benchmark different model families (OpenAI, Microsoft in‑house models, and third parties). It’s explicitly designed for orchestration and cost optimization: dispatching small models for routine queries and reserving “thinking” models for hard tasks.

Confirming the architecture

Independent reporting and public Microsoft commentary emphasize the multi‑model, router‑based approach; major vendor model families are accessible via model catalogs and runtime tools in Azure. This reduces total cost of ownership by tailoring model selection to task complexity.

Strengths

- Economic control plane: The router reduces inference cost by matching workload to model capability.

- Interoperability: Hosting third‑party models keeps customers flexible and reduces single‑vendor dependency.
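The router idea can be illustrated with a cost‑aware dispatcher that sends each request to the cheapest model tier judged capable of handling it. This is a minimal sketch, not Foundry's actual routing logic: the tier names, per‑token prices, and the keyword‑and‑length complexity heuristic are all hypothetical, and a production router would more likely rely on a learned classifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                 # hypothetical tier label, not a real deployment name
    usd_per_1k_tokens: float  # illustrative price, not a published rate
    max_complexity: int       # highest complexity score this tier is trusted with


# Ordered cheapest-first; the last tier plays the "thinking" model for hard tasks.
TIERS = [
    ModelTier("small-fast", 0.0005, 2),
    ModelTier("mid-general", 0.003, 6),
    ModelTier("large-reasoning", 0.03, 10),
]

# Crude signals that a request needs multi-step reasoning (assumed, not tuned).
REASONING_HINTS = ("prove", "plan", "analyze", "compare", "debug", "step by step")


def complexity(prompt: str) -> int:
    """Score a prompt 0-10: long prompts and reasoning keywords score higher."""
    text = prompt.lower()
    score = min(len(text.split()) // 50, 4)  # up to 4 points for length
    score += 3 * sum(hint in text for hint in REASONING_HINTS)
    return min(score, 10)


def route(prompt: str) -> ModelTier:
    """Return the cheapest tier whose ceiling covers the prompt's complexity."""
    score = complexity(prompt)
    for tier in TIERS:
        if score <= tier.max_complexity:
            return tier
    return TIERS[-1]  # fallback; unreachable while the top ceiling is 10


def estimated_cost(tier: ModelTier, tokens: int) -> float:
    """Inference cost in USD for a given token count on a tier."""
    return tier.usd_per_1k_tokens * tokens / 1000
```

A short factual question lands on `small-fast`, while a request to analyze data and plan a campaign step by step scores high enough to reach `large-reasoning`; the price gap between tiers is where the savings come from.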

Risks

- Complexity of orchestration: Operationalizing multi‑model routing at scale introduces new failure modes — versioning, latency spikes, and consistency challenges.

- Model governance and provenance: Multi‑model deployments complicate auditing and red‑teaming; enterprises must ensure consistent safety across heterogeneous models.
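One practical way to keep auditing tractable across heterogeneous models is to record, for every request, which model and version produced the answer and which safety checks ran. The sketch below assumes a hypothetical log schema (field names such as `model_version` and `safety_checks` are illustrative, not an Azure format) and stores hashes of the prompt and response so the audit log does not itself become a store of sensitive content.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(user_id: str, model_name: str, model_version: str,
                 prompt: str, response: str, safety_checks: Dict[str, bool]) -> dict:
    """Build one audit entry tying a response to the exact model that produced it.

    Field names are an illustrative schema, not a Microsoft or Azure log format.
    """
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "model": model_name,
        "model_version": model_version,
        # Hashes instead of raw text: the log does not leak content, yet auditors
        # can still match a disputed answer to its entry.
        "prompt_sha256": _sha256(prompt),
        "response_sha256": _sha256(response),
        "safety_checks": dict(safety_checks),
        "all_checks_passed": all(safety_checks.values()),
    }


def to_log_line(record: dict) -> str:
    """Serialize deterministically (sorted keys) for append-only JSON Lines storage."""
    return json.dumps(record, sort_keys=True)
```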

Layer 4 — Developer Surfaces: Building the Builders

Two developer audiences

Microsoft targets both:

- Pro‑code engineers with SDKs, APIs, and DevOps pipelines; and

- Citizen developers with low‑code/no‑code environments like Copilot Studio.

Why it’s defensible

- Distribution through GitHub and VS Code: Microsoft can embed tooling into developer workflows that already exist, increasing the chance of enterprise continuity and reuse.

- Rapid prototyping to production: Built‑in modelOps and governance in Foundry let teams move from experiments to production faster, shortening time‑to‑value.

Risks

- Feedback overload & quality control: Rapid release cycles (e.g., Azure AI Labs’ accelerated experimentation) create a deluge of models and features that teams must triage. Without disciplined governance, productivity can suffer.

Layer 5 — Copilot Orchestration: The Front End That Sells AI

Copilot’s role

Copilot is not a single assistant but a family of touchpoints (embedded in Microsoft 365, Windows, Edge, and device partners) that orchestrate agents and surface AI capabilities to end users. Microsoft’s narrative (and independent reporting) positions Copilot as the UX layer that translates backend capabilities into everyday productivity gains.

Verified scale signals

Microsoft and multiple independent outlets have reported that the Copilot family has surpassed 100 million monthly active users, and that Copilot adoption spans a majority of Fortune 500 firms. These numbers were highlighted in company communications and corroborated by major news outlets.

Why this matters commercially

- Monetization lever: Copilot converts infrastructure consumption into per‑seat and per‑usage revenue, enabling Microsoft to upsell productivity tiers and capture value at the point of work.

- Retention & stickiness: Integrating agents into workflows increases switching costs and makes enterprise migrations more fraught for customers.

Risks

- User experience & trust: If Copilot responses are inconsistent or hallucinate on sensitive matters, enterprise confidence and seat renewals could erode. Continuous trust engineering is essential.

Layer 6 — Applications & Monetization: Converting Usage into Revenue

How Microsoft captures value

- Per‑seat upgrades (Copilot tiers): Microsoft embeds AI features into Microsoft 365 and offers premium Copilot tiers that raise ARPU.

- Consumption billing: For heavy AI workloads, customers consume Azure inference and Foundry runtime, generating cloud usage revenue.

- Vertical solutions: Industry‑specific copilots (sales, finance, customer service) allow Microsoft to charge premium prices for domain expertise.
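From the customer's side, these value‑capture mechanisms show up as a fixed per‑seat line and a variable consumption line. The sketch below separates the two; all prices and usage rates are hypothetical inputs to be replaced with figures from current price lists and observed telemetry.

```python
from dataclasses import dataclass


@dataclass
class AiBudgetInputs:
    seats: int
    seat_price_per_month: float       # assumed per-user licence price
    requests_per_seat_per_day: float  # assumed average usage per licensed user
    tokens_per_request: int           # assumed prompt + completion size
    usd_per_1k_tokens: float          # assumed blended inference rate
    working_days_per_month: int = 21  # assumed


def monthly_cost(b: AiBudgetInputs) -> dict:
    """Split a monthly AI bill into fixed (per-seat) and variable (consumption) parts."""
    seat_cost = b.seats * b.seat_price_per_month
    monthly_tokens = (b.seats * b.requests_per_seat_per_day
                      * b.working_days_per_month * b.tokens_per_request)
    consumption_cost = monthly_tokens / 1000 * b.usd_per_1k_tokens
    return {
        "seat_cost": round(seat_cost, 2),
        "consumption_cost": round(consumption_cost, 2),
        "total": round(seat_cost + consumption_cost, 2),
    }
```

Doubling average requests per seat changes only the consumption line, which is why the two lines deserve separate forecasts.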

Financial validation

Independent reporting of Microsoft’s recent results confirms a meaningful AI‑driven revenue inflection: Azure has crossed a material revenue threshold (reported at more than $75 billion annually), and management highlighted Copilot adoption as a key growth catalyst. These metrics substantiate the claim that Microsoft’s stack is already yielding commercial traction.

The Strategic Flywheel: Why the Layers Reinforce Each Other

Microsoft’s approach creates a positive feedback loop:

- Invest in infrastructure to lower inference and storage costs.

- Provide a governed data layer so enterprises trust the platform.

- Offer a multi‑model runtime to optimize cost & capability.

- Deliver developer tools so customers build and deploy agents quickly.

- Surface AI via Copilot to drive end‑user engagement and upsell.

- Monetize through apps & consumption, which funds more capex and product improvements.

Competitive Landscape and Where Microsoft’s Model Wins

Advantages

- Scale & enterprise footprint: Few companies have Microsoft’s combined reach in productivity, identity, cloud, and developer tools — this reduces customer churn and creates cross‑sell opportunities.

- Financial firepower: Microsoft can fund multi‑year investments in GPUs and data centers without jeopardizing other business lines.

- Ecosystem leverage: GitHub, LinkedIn, Office, Windows, and Xbox all serve as distribution or data sources for AI features, creating unique integration points.

Where competitors press back

- Cloud rivals (AWS, Google Cloud): Each has its own multi‑model solutions and deep infrastructure investments; Microsoft’s success depends on execution, price, and feature parity in core cloud services.

- Model suppliers & neutrality: Reliance on external models (OpenAI, Mistral, others) forces Microsoft to balance partnership benefits against strategic autonomy; Foundry’s multi‑model stance mitigates but does not eliminate this dependence.

Financial & Operational Risks — A Reality Check

- Capital Intensity and Margin Pressure. Large capex increases (reported rises in data‑center spend) compress gross margins in the near term. Microsoft is explicit about continued investment to meet AI demand.

- Monetization Execution. The market prices in high expectations for Copilot and AI monetization; failure to convert usage into durable ARPU increases downside.

- Regulatory & Antitrust Scrutiny. As Microsoft extends AI into platform and application layers, regulators will scrutinize deals, acquisitions, and partner relationships—raising the bar for mergers and new business models.

- Trust, Safety & Quality. Rapid product iteration and multi‑model environments increase the surface area for hallucinations, bias, and data leakage. Continuous investment in red‑teaming and governance is non‑optional.

Practical Takeaways for IT Teams and Windows Administrators

- Design for hybrid trust: Use Fabric‑like patterns (governed lakes/indices, private links) to keep sensitive data local while leveraging cloud inference.

- Adopt multi‑model thinking: Plan for routing and cost optimization — not every request needs a “thinking” model. Foundry‑style orchestration reduces bill shock.

- Govern the agent lifecycle: Treat copilots as software artifacts — version, test, red‑team, and monitor them like code. Rapid experimentation is powerful but requires controls.

- Budget for consumption and capex: Expect both seat‑based licensing and cloud consumption to appear on budgets; costs may shift from headcount to inference and storage.
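Treating copilots as software artifacts implies a regression gate: a versioned agent must pass a golden set of prompts before it ships. The sketch below assumes a hypothetical test‑case format (prompt, phrases that must appear, phrases that must not appear) and a stand‑in `agent` callable; real evaluations would add semantic scoring and red‑team suites, but the gating pattern is similar.

```python
from typing import Callable, List, Tuple

# Hypothetical golden-set format: (prompt, phrases that must appear, phrases that must not).
Case = Tuple[str, List[str], List[str]]


def run_regression(agent: Callable[[str], str], cases: List[Case], version: str) -> dict:
    """Run every golden case against one agent version and collect failures."""
    failures = []
    for prompt, must_include, must_exclude in cases:
        answer = agent(prompt).lower()
        missing = [p for p in must_include if p.lower() not in answer]
        leaked = [p for p in must_exclude if p.lower() in answer]
        if missing or leaked:
            failures.append({"prompt": prompt, "missing": missing, "leaked": leaked})
    return {
        "version": version,
        "passed": len(cases) - len(failures),
        "failed": len(failures),
        "failures": failures,
    }


def gate_release(report: dict, min_pass_rate: float = 1.0) -> bool:
    """Allow a release only if the pass rate meets the threshold (default: all cases)."""
    total = report["passed"] + report["failed"]
    return total > 0 and report["passed"] / total >= min_pass_rate
```

Storing each report alongside the agent's version tag gives the same audit trail teams already expect from CI pipelines for code.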

What’s Verifiable — and What’s Interpretation

- Verifiable facts: Microsoft’s Azure annualized revenue crossing significant thresholds and the Copilot family exceeding 100 million monthly active users are reported in company communications and corroborated by independent outlets.

- Interpretive framing: The label “Six‑Layer Advantage” is an analytical framework used by industry analysts (e.g., FourWeekMBA) to explain Microsoft’s cross‑stack strategy — useful for understanding incentives but not an official Microsoft product name. Treat it as a model, not a patentable architecture.

Conclusion — A Realistic Assessment

Microsoft’s six‑layer framework explains why its AI strategy looks different from startups that focus only on models or UX. By owning or orchestrating infrastructure, data governance, runtime, developer tooling, orchestration, and final‑mile apps, Microsoft creates multiple monetization levers and high switching costs for enterprise customers. That structure is already paying off in user adoption and cloud revenue acceleration.

That said, this advantage is conditional. It depends on disciplined capital allocation, trustworthy AI behavior at scale, effective multi‑model governance, and competitive responses from other hyperscalers. The risk profile is not negligible: the strategy trades margin pressure and regulatory scrutiny for long‑term platform control.

For IT leaders and Windows administrators, the pragmatic course is simple: adopt the best parts of the stack that improve security and productivity today, insist on proven governance for any agent or Copilot rollout, and budget for both seat‑based licensing and cloud consumption. For investors and strategists, Microsoft’s approach is less of a speculative bet and more of a structural play, one that will reward execution over hype.

The Six‑Layer Advantage is a useful, verifiable lens for decoding Microsoft’s current moves — but it is an analytical tool, not a guarantee. The coming quarters will show which parts of the flywheel accelerate and which require further engineering and policy work to stabilize.
