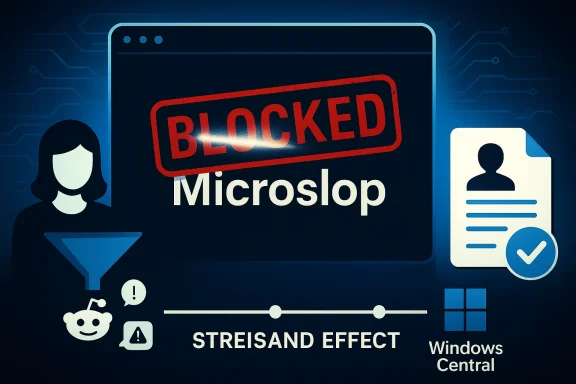

Microsoft's attempt at quiet moderation exploded into a public mess this week after users discovered the derisive nickname "Microslop" was being filtered in Microsoft's official Copilot Discord. An opportunistic Reddit post then claimed the same filter had been applied to Xbox PC app reviews on the Microsoft Store; that claim turned out to be false, according to reporting and direct outreach to the company. ([pcgamer.com](https://www.pcgamer.com/software/ai/microsoft-banned-the-word-microslop-in-its-copilot-discord-server-then-began-restricting-access-after-users-started-posting-microsl0p-and-other-funnies/))

Background

The nickname Microslop, a portmanteau used online to mock Microsoft's aggressive push to fold generative AI into products such as Copilot, Edge, and Windows 11, has been circulating in communities since late 2025 as shorthand for clumsy or intrusive AI features. The phrase became a focal point of community frustration in early March 2026 when moderators activated a keyword filter that blocked direct use of the word inside the official Copilot Discord server. That moderation action was widely reported and then amplified when users evaded the filter with variant spellings such as "Microsl0p" or mass-posted the meme, prompting moderators to lock sections of the server temporarily to regain control.

At the same time, a viral Reddit post claimed Microsoft had extended the ban to Store reviews for the Xbox PC app, a much more damaging accusation because it suggested Microsoft was censoring product feedback. The Reddit thread included a fabricated screenshot of an enforcement notice and attracted thousands of upvotes and hundreds of comments before moderators flagged it as possibly misleading. Windows Latest investigated the claim, tested the Microsoft Store review flow itself, and contacted Microsoft; its reporting found the Store review ban claim was false.

What actually happened: Discord moderation vs. Store policy

The Copilot Discord filter

- Microsoft’s Copilot Discord deployed an automated filter that matched messages containing the literal token "Microslop," deleting or blocking those posts and, in some cases, leading to timeouts or account restrictions for repeat posting.

- The filter appears to have been introduced as a damage-control or anti-spam measure during a surge of posting that quickly escalated into coordinated filter-evasion experiments and meme flooding.

- The moderation move produced a classic Streisand effect: the more the server tried to suppress the term, the more it spread across forums, social platforms, and browser extensions that popularized the nickname. Multiple outlets documented the escalation and the temporary server lockdowns.

The Microsoft Store rumor

- A Reddit user claimed a Microsoft Store review had been removed because it used the word Microslop, posting an image purporting to be a Microsoft policy enforcement email. That screenshot was fabricated (it did not contain verifiable metadata or links back to a legitimate Microsoft policy action) and moderators later labeled the post "Possibly misleading."

- Windows Latest published an independent test: they posted a review of the Xbox PC app that included the word "Microslop" and confirmed the review was accepted and remained visible on the Microsoft Store, indicating there was no global ban of that token in Store reviews. When asked, Microsoft told Windows Latest it had not banned the word "microslop" in Microsoft Store reviews, and that review removals are handled under broader policy enforcement for relevance and guideline compliance rather than the presence of a particular insult. That clarification undercuts claims that Microsoft is actively banning the nickname across its product review systems.

How moderation systems work — and why they misfire

Keyword filters are blunt instruments

Keyword-based filters are simple and fast to deploy, which makes them attractive for moderating large, real-time communities. But they have well-known failure modes:

- False positives: harmless or contextually legitimate comments can be removed because they contain a blocked token.

- False negatives and evasion: users can bypass filters by substituting characters (Microsl0p, Micro‑slop), spacing, or punctuation.

- Context blindness: keywords cannot distinguish criticism from abuse, nor can they account for parody, sarcasm, or educational uses.
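The failure modes above can be illustrated with a small Python sketch. This is not the filter used in the Copilot Discord, whose implementation is not public; the block list, the substitution table, and the function names `naive_filter`, `normalize`, and `normalized_filter` are all assumptions made for illustration.

```python
import re
import unicodedata

# Hypothetical block list; the real server configuration is not public.
BLOCKED_TOKENS = {"microslop"}

# Hypothetical table of look-alike substitutions used to dodge filters.
LOOKALIKES = str.maketrans(
    {"0": "o", "1": "l", "3": "e", "4": "a", "5": "s", "$": "s", "@": "a"}
)


def naive_filter(message: str) -> bool:
    """Return True if a blocked token appears as a literal substring."""
    lowered = message.lower()
    return any(token in lowered for token in BLOCKED_TOKENS)


def normalize(message: str) -> str:
    """Fold case, map look-alike characters, and drop all non-letters."""
    text = unicodedata.normalize("NFKC", message).lower().translate(LOOKALIKES)
    return re.sub(r"[^a-z]", "", text)


def normalized_filter(message: str) -> bool:
    """Return True if a blocked token appears after normalization."""
    collapsed = normalize(message)
    return any(token in collapsed for token in BLOCKED_TOKENS)


samples = [
    "Microslop strikes again",                  # literal match
    "Microsl0p strikes again",                  # character substitution
    "Micro-slop strikes again",                 # punctuation
    "M i c r o s l o p",                        # spacing
    "Why was the word Microslop banned here?",  # a question, not spam
]

for text in samples:
    print(f"{text!r}: naive={naive_filter(text)} "
          f"normalized={normalized_filter(text)}")
```

In this sketch the literal filter misses the substituted, hyphenated, and spaced variants, while the normalized filter catches them. Both versions still flag the last sample, a genuine question about the ban, and normalization adds false positives of its own: a phrase such as "two micros lopsided" collapses into a string that contains the blocked token.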

Scale and speed matter

Large official channels see rapid bursts of activity. Moderators need tools that can act quickly to shut down coordinated spam or harassment, but decisions made under pressure (like adding a single word to a filter list) can have outsized reputational consequences when the community interprets those moves as suppressing legitimate dissent.

This is especially true for consumer-facing brands in the middle of controversial product pivots; Microsoft’s broad, public push to embed Copilot and AI features into Windows and its consumer apps has made the company an easy target for memes and anger. That context magnifies the optics of any moderation decision. ([techspot.com](https://www.techspot.com/news/11153...t-discord-server-melts.html?utm_source=openai))

Verifying the Store-review claim: what reporters and users did

When a claim of censorship like this surfaces, the journalistic steps are straightforward and, in this case, decisive:

- Check primary evidence. The screenshot shared on Reddit lacked verifiable metadata and provenance; subreddit moderators flagged the post as possibly misleading.

- Reproduce the result. Windows Latest posted an actual review containing the contested word and found it was accepted and displayed in the Microsoft Store. That directly contradicted the claim that the word itself was blocked.

- Ask the company. Windows Latest contacted Microsoft and received a statement denying that the term had been banned in Store reviews; Microsoft reiterated that removals are driven by policy violations related to relevance or guideline breaches, not specific nicknames. Because the authoritative public response came from Microsoft to a journalist, that is our best-available verification.

Why the hoax spread so quickly

Several intersecting dynamics turned a relatively small moderation event into a broad belief that Microsoft was censoring Store reviews:

- Pre-existing mistrust. Microsoft’s aggressive AI integration and a long tail of Windows 11 stability complaints have created a skeptical user base predisposed to believe negative claims about the company. Many community members were primed to accept claims of heavy-handedness without demanding verification.

- Engagement incentives. Low-effort claims that dramatize corporate wrongdoing generate clicks, upvotes, and shares. The Reddit post's fabricated screenshot offered just enough drama to fuel engagement, and users amplified it before fact-checking could occur.

- The Streisand effect. The initial attempt to remove or block the term inside a prominent official channel acted as a gasoline-splash on the meme; suppression drew attention and ridicule, ensuring the term spread faster and farther. Multiple outlets documented how the filter backfired.

The reputational calculus for Microsoft and other large platforms

Microsoft’s moderation choices expose a trade-off between immediate community safety and long-term trust:

- Short-term measures like keyword blocks can stop coordinated spam or harmful floods fast, but they invite accusations of censorship when the blocked content is a form of criticism rather than harassment.

- Heavy-handed responses to meme-based criticism risk alienating the most vocal, influential community members who set tone on platforms like Reddit, Discord, and social media.

- Conversely, failing to act quickly against coordinated trolling can degrade the quality of product communities and drown out constructive feedback.

Practices that can help manage this trade-off:

- Transparent moderation policies. Make rules and the rationale for specific interventions public, especially for official brand channels. That reduces the "black box" suspicion that fuels conspiracy-like thinking.

- Graduated responses. Use warnings, temporary silent deletions, and manual review before permanent bans for community-native criticism that is not abusive.

- Proactive communication. When a public-facing moderation action affects a large or high-profile community, a short explanation — with timing and scope — can head off misinterpretation and the kind of viral amplification this event produced.
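The "graduated responses" idea can be sketched as an escalation ladder. The following Python is a minimal illustration under stated assumptions: the `Action` names, the `GraduatedModerator` class, and the three-step ladder are hypothetical, and the sketch only covers filter hits on non-abusive criticism, leaving any permanent ban to a human reviewer.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    WARN = "warn"
    DELETE_MESSAGE = "delete_message"
    MANUAL_REVIEW = "manual_review"


@dataclass
class GraduatedModerator:
    """Escalate per user, one step per filter hit (hypothetical policy)."""

    ladder: tuple = (Action.WARN, Action.DELETE_MESSAGE, Action.MANUAL_REVIEW)
    strikes: dict = field(default_factory=lambda: defaultdict(int))

    def handle(self, user_id: str) -> Action:
        # Pick the next rung, capping at manual review: nothing here
        # issues a ban automatically.
        step = min(self.strikes[user_id], len(self.ladder) - 1)
        self.strikes[user_id] += 1
        return self.ladder[step]


moderator = GraduatedModerator()
for _ in range(4):
    print(moderator.handle("user-1").value)
```

The design choice worth noting is the cap: repeat hits route to a person rather than to an automated restriction, which is the opposite of a filter that times out accounts on its own.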

Technical fixes and governance recommendations

For community moderators and corporate social teams responsible for high-volume channels, the Microslop episode is a practical case study. Recommended steps:

- Use context-aware moderation tools (machine learning classifiers, human-in-the-loop review) instead of token-only filters for non-abusive speech.

- Maintain an accessible moderation FAQ that explains how automated filters work, what kinds of content trigger moderation, and how users can appeal actions.

- Preserve transparent change logs for major filter updates in high-profile channels so that community managers can show when and why terms were blocked or unblocked.

- Test filter rules in staging channels before applying them to main community forums to measure likely collateral impact.

- Train moderators on escalation procedures that prefer de-escalation and public explanation over mass lockdowns except in genuine safety emergencies.
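One way to approach the staging recommendation above is a dry run: apply a candidate rule to a labeled sample of past messages and count how many acceptable posts it would have removed. The sketch below assumes a hypothetical `dry_run` helper and invented sample data; it does not describe Discord's tooling or any Microsoft workflow.

```python
import re
from dataclasses import dataclass


@dataclass
class DryRunReport:
    total: int        # messages evaluated
    matched: int      # messages the rule would act on
    collateral: list  # matched messages that were labeled acceptable


def dry_run(pattern: str, labeled_messages: list) -> DryRunReport:
    """Apply a candidate rule to (text, is_spam) pairs without enforcing it."""
    rule = re.compile(pattern, re.IGNORECASE)
    matched = [(text, is_spam) for text, is_spam in labeled_messages
               if rule.search(text)]
    collateral = [text for text, is_spam in matched if not is_spam]
    return DryRunReport(len(labeled_messages), len(matched), collateral)


# Invented sample; in practice this would come from a staging channel
# or an export of recent messages labeled by moderators.
sample = [
    ("Microslop Microslop Microslop", True),
    ("Why is the word Microslop blocked here?", False),
    ("Copilot keeps crashing on startup", False),
    ("MICROSLOP " * 20, True),
]

report = dry_run(r"microslop", sample)
print(f"matched {report.matched} of {report.total}; "
      f"collateral: {report.collateral}")
```

A non-empty `collateral` list is the signal to narrow the rule or route matches to human review before it reaches the main channel.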

The broader media ecosystem: why verification matters

This episode also underlines the role of responsible reporting and community moderation across platforms:

- Journalists and editorial teams should always attempt to reproduce claims that allege censorship or policy enforcement, and obtain direct company comment before amplifying explosive assertions.

- Community moderators on social platforms should label unverified claims quickly and encourage skeptical engagement (asking for screenshots, logs, or reproduction steps) rather than letting speculation metastasize.

- Readers and community members should treat sensational moderation claims as provisional until a test or company comment verifies them.

Risks that remain even after the clarification

Clearing up the Microsoft Store rumor does not eliminate the underlying threats to Microsoft’s relationship with its communities:

- Trust erosion is persistent. One-off clarifications do not automatically rebuild confidence; repeated intrusive product changes (real or perceived) create a higher baseline of skepticism.

- Policy opacity persists. If moderation remains opaque, future incidents will have similar explosive potential; communities will continue to assume the worst in the absence of clear, shared rules.

- Escalation cycles are fast. Meme culture moves quickly; attempts to suppress ridicule are likely to blow up in the moderator's face unless handled with care and transparency.

What Microsoft should do next — practical short-term moves

- Publish a short public note explaining the Copilot Discord moderation incident: what was blocked, why, when it was introduced, and whether it has been rolled back. Be specific about whether the action targeted spam, coordinated attacks, or the term itself. Transparency reduces speculation.

- Share a community moderation FAQ and an appeal path for users who believe they were wrongly moderated. This demonstrates procedural fairness.

- Audit automated filters applied across interrelated properties (Discord, Xbox official channels, Store moderation workflows) and publish a summary of findings and corrective steps.

- Re-engage directly with power-user communities — top posters, moderator leads, and prominent content creators — to rebuild channels for constructive feedback and to test moderation changes before they go live.

Final analysis: moderation, misinformation and collective responsibility

The Microslop episode is a short lesson in three linked dynamics that shape modern platform governance:

- Technical bluntness: Basic moderation tools like token filters are tempting because they're quick and cheap, but their bluntness can produce outsized social costs when applied to culturally resonant speech.

- Social velocity: Communities and social platforms amplify narratives at internet speed. A single unverified claim, if framed as censorship, will spread far before fact-checking can catch up.

- Institutional optics: Large companies in high-visibility transitions (here, Microsoft’s AI pushes and Windows 11 controversy) face asymmetric scrutiny; small operational decisions are read as evidence of broader intent.

The immediate danger — a rumor that Microsoft was censoring Xbox Store reviews — has been defused by on-the-ground verification and direct company contact. The longer-term danger remains: unless trust is actively rebuilt through candid communication and better moderation practices, the next meme — and the next viral claim — will land on thinner ice and trigger a louder backlash than this one.

In short: Microsoft did ban "Microslop" inside its Copilot Discord as a short-term moderation move, but the company did not (and, by a practical test, does not) have a blanket ban on the word in Microsoft Store reviews for the Xbox PC app. The real story is not a single blocked token; it is the fragile relationship between tech platforms and the communities that judge them, and how quickly misinformation can fill the vacuum left by opaque moderation.

Source: Windows Latest, "Users claim Microsoft removed 'Microslop' reviews from Xbox app for Windows 11, but Microsoft denies it"