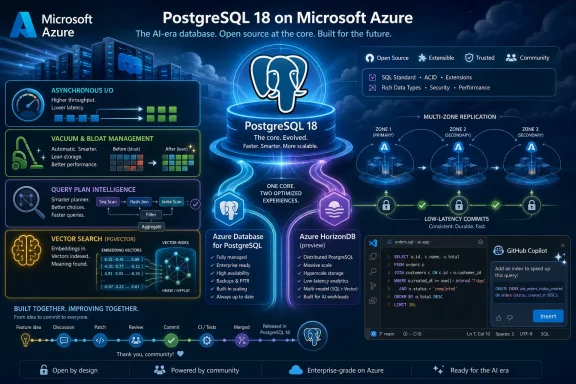

Microsoft is positioning PostgreSQL as a core Azure database for AI-era applications, pairing upstream contributions to PostgreSQL 18 with managed services such as Azure Database for PostgreSQL and preview-era Azure HorizonDB, while promoting developer tooling, vector search, and community programs in May 2026. The pitch is not merely that Postgres runs well on Azure. It is that Microsoft now sees Postgres as one of the main places where cloud infrastructure, AI application design, and open-source credibility meet. That is a significant turn for a company whose database identity was once almost entirely synonymous with SQL Server.

Microsoft Is No Longer Treating Postgres as Somebody Else’s Database

The striking part of Microsoft’s latest Postgres message is not that Azure supports PostgreSQL. Every serious cloud does that. The striking part is how deliberately Microsoft is trying to present itself as both a cloud vendor and a participant in the upstream PostgreSQL project.

That distinction matters because PostgreSQL’s reputation was not built by a single company. It came from decades of conservative engineering, public review, extension-friendly architecture, and a culture that tends to value correctness over spectacle. A hyperscaler cannot simply buy that trust by wrapping the database in a portal and calling it managed.

Microsoft’s answer is to emphasize upstream work: committers, contributors, performance patches, planner improvements, asynchronous I/O work, vacuum behavior, and memory management. The company says its engineers contributed hundreds of commits to the latest PostgreSQL release, while separate Microsoft material has described it as one of the top corporate contributors to the project. The exact count varies across Microsoft’s own recent posts, but the strategic claim is consistent: Azure’s Postgres business is being tied to visible participation in core Postgres, not just consumption of it.

That is a necessary message because the audience is skeptical by design. Postgres users tend to care deeply about portability, license simplicity, and avoiding architectural traps. If Microsoft wants enterprises to move serious Postgres workloads to Azure, it has to show that Azure is not merely extracting value from the ecosystem, but helping make the engine better for everyone.

PostgreSQL’s Center of Gravity Has Shifted From Alternative to Default

For years, PostgreSQL benefited from being the database people chose when they wanted something powerful, open, and boring in the best possible way. It was not the fashionable option in every cycle, but it had the traits that survive fashion: transactional integrity, extensibility, predictable behavior, and a community that does not treat breaking users as innovation.

That has become much more valuable as application stacks have grown more complicated. Modern teams are juggling transactional data, analytics pipelines, event systems, caching layers, vector indexes, model calls, and compliance requirements. In that environment, a database that can remain a stable center of gravity has strategic value.

Microsoft’s blog frames PostgreSQL as increasingly foundational for startups and demanding production systems alike. That is not an unreasonable reading of the market. PostgreSQL has become the default relational choice for many new applications precisely because it gives developers enough capability to delay or avoid adding more specialized systems too early.

The AI wave strengthens that position. Many AI-enabled applications still need mundane but essential database features: permissions, joins, constraints, transactions, indexing, replication, backup, and observability. The model may be glamorous, but the application still has to know who is allowed to see which rows, how fresh the data is, and whether yesterday’s migration broke the billing table.

AI Makes the Database Layer Interesting Again

Microsoft’s most important claim is that databases are no longer just passive storage layers. In AI applications, the database increasingly participates in retrieval, ranking, filtering, model invocation, and policy enforcement. That changes what developers expect from Postgres.

The classic AI application pattern has often involved moving data out of the system of record, generating embeddings elsewhere, storing vectors in a separate service, then stitching the results back together with application code. That can work, but it creates operational drag. It also introduces awkward questions about freshness, security boundaries, and whether the vector index understands the same business rules as the transactional database.

PostgreSQL’s extension model makes it attractive here because it can absorb new capabilities without abandoning SQL. Vector search, semantic ranking, and model-related functions can be exposed in ways that feel closer to database work than to a pile of sidecars. Microsoft is leaning heavily into that idea with Azure Database for PostgreSQL integrations around vector search, Microsoft Foundry, and AI-assisted developer workflows.
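
To make the "vector search as ordinary database work" idea concrete, here is a minimal sketch of a similarity query that applies a business rule (a tenant filter) in the same statement as the ranking. It assumes the open-source pgvector extension, whose `<=>` operator computes cosine distance. The `documents` table, its columns, and the helper names are hypothetical, and the `%(name)s` placeholders assume a psycopg-style driver. It only builds the query and its parameters; it does not represent any Azure-specific API.

```python
from typing import Dict, Sequence, Tuple

# Hypothetical schema: documents(id, tenant_id, body, embedding vector(N)).
# Assumes the pgvector extension; <=> is its cosine distance operator.
FILTERED_SEARCH_SQL = """
SELECT id, body
FROM documents
WHERE tenant_id = %(tenant_id)s
ORDER BY embedding <=> %(query_vec)s::vector
LIMIT %(k)s
"""


def to_vector_literal(values: Sequence[float]) -> str:
    """Format floats as the '[x,y,z]' text form that pgvector casts to vector."""
    return "[" + ",".join(repr(float(v)) for v in values) + "]"


def build_filtered_search(
    tenant_id: int, query_embedding: Sequence[float], k: int = 5
) -> Tuple[str, Dict[str, object]]:
    """Return (sql, params) for a tenant-scoped nearest-neighbour query."""
    if k < 1:
        raise ValueError("k must be at least 1")
    params: Dict[str, object] = {
        "tenant_id": tenant_id,
        "query_vec": to_vector_literal(query_embedding),
        "k": k,
    }
    return FILTERED_SEARCH_SQL, params
```

The point of the design is that the permission filter and the similarity ranking live in one SQL statement, so there is no second system that has to be taught the same tenancy rule.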

There is a risk in the framing, of course. “AI-ready database” can become marketing fog very quickly. The real test is whether these features reduce system complexity without hiding too much behavior from operators. If AI capabilities inside Postgres become another opaque managed-service dependency, Microsoft will have traded one kind of glue code for another kind of lock-in anxiety.

PostgreSQL 18 Gives Microsoft a Better Engineering Story

PostgreSQL 18 is a useful backdrop for Microsoft because it lets the company talk about concrete database plumbing rather than only AI slogans. The release brought attention to asynchronous I/O, vacuum improvements, and query planning work. Those are not features that make good keynote demos, but they matter enormously to operators.

Asynchronous I/O is especially important because database performance is often less about raw CPU and more about how efficiently the engine can keep storage busy without wasting worker time. PostgreSQL has historically been conservative in this area, and the move toward more capable asynchronous read paths signals an important modernization of the engine’s internals.
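
As a rough analogy only, the sketch below shows the general idea of overlapping I/O waits, using Python's `asyncio` rather than anything resembling PostgreSQL's internal implementation. The simulated reads, the 10 ms delay, and all function names are assumptions made for illustration. It counts how many reads are in flight at once, which is the quantity asynchronous I/O tries to raise.

```python
import asyncio


async def read_block(block_id: int, stats: dict) -> int:
    """Simulate one storage read; asyncio.sleep stands in for device latency."""
    stats["in_flight"] += 1
    stats["peak"] = max(stats["peak"], stats["in_flight"])
    await asyncio.sleep(0.01)
    stats["in_flight"] -= 1
    return block_id


async def read_sequential(block_ids):
    """Issue one read at a time, waiting for each before starting the next."""
    stats = {"in_flight": 0, "peak": 0}
    results = [await read_block(b, stats) for b in block_ids]
    return results, stats["peak"]


async def read_overlapped(block_ids):
    """Issue all reads up front so their waits overlap."""
    stats = {"in_flight": 0, "peak": 0}
    results = await asyncio.gather(*(read_block(b, stats) for b in block_ids))
    return list(results), stats["peak"]


def run_demo(block_ids=(1, 2, 3, 4)):
    """Return ((results, peak), (results, peak)) for both strategies."""
    sequential = asyncio.run(read_sequential(block_ids))
    overlapped = asyncio.run(read_overlapped(block_ids))
    return sequential, overlapped
```

Both strategies return the same blocks in the same order. The difference is that the sequential version never has more than one read outstanding, while the overlapped version keeps every requested read in flight at once.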

Vacuum work is similarly unglamorous and essential. Anyone who has operated a busy Postgres system knows that vacuum behavior can be the difference between a database that quietly maintains itself and one that drifts toward bloat, latency spikes, and emergency maintenance windows. Improvements here show up as fewer 2 a.m. incidents, not prettier screenshots.
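
For readers who have not tuned it, the long-documented autovacuum trigger is simple arithmetic: a table becomes a vacuum candidate once its dead tuples exceed a base threshold plus a scale factor times the table's row count. The sketch below uses the stock defaults for `autovacuum_vacuum_threshold` (50) and `autovacuum_vacuum_scale_factor` (0.2). The function names are hypothetical, and it deliberately ignores any per-table overrides or version-specific refinements.

```python
def autovacuum_trigger_point(
    reltuples: float, base_threshold: int = 50, scale_factor: float = 0.2
) -> float:
    """Dead-tuple count above which autovacuum considers vacuuming a table.

    Classic rule: base_threshold + scale_factor * reltuples.
    """
    if reltuples < 0:
        raise ValueError("reltuples must be non-negative")
    return base_threshold + scale_factor * reltuples


def needs_autovacuum(
    dead_tuples: int,
    reltuples: float,
    base_threshold: int = 50,
    scale_factor: float = 0.2,
) -> bool:
    """True when dead tuples exceed the trigger point for this table size."""
    trigger = autovacuum_trigger_point(reltuples, base_threshold, scale_factor)
    return dead_tuples > trigger
```

With the defaults, a one-million-row table waits for roughly 200,050 dead tuples, and a hundred-million-row table waits for about 20 million. That scaling is why large, busy tables are where vacuum behavior tends to hurt first.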

Planner and execution enhancements matter for a different reason. As Postgres estates grow, partitioned tables, large datasets, and mixed workload patterns become normal rather than exotic. Smarter planning reduces the tax that complexity imposes on production systems. Microsoft’s focus on these areas is a reminder that the cloud Postgres race is not only about provisioning speed or dashboard polish; it is also about making the upstream engine better at the hard cases.

Azure HorizonDB Is Microsoft’s Bet That Compatibility Alone Is Not Enough

Azure HorizonDB is the most ambitious piece of the story because it is not simply another managed Postgres SKU. Microsoft describes it as a PostgreSQL-compatible, cloud-native database service with shared storage, scale-out compute, default multi-zone replication, and very low-latency commits. It is currently positioned as a preview-era service for mission-critical workloads that need more elastic scaling than conventional single-node Postgres can provide.

That positioning reveals a tension in the Postgres market. On one side, users want open-source alignment, familiar behavior, and minimal abstraction. On the other, they want cloud-native scale, rapid failover, large read fan-out, and reduced operational burden. Those goals do not always fit neatly inside stock PostgreSQL architecture.

HorizonDB is Microsoft’s attempt to resolve that tension by preserving the PostgreSQL interface while changing the underlying architecture. The service separates compute and storage, promises rapid scaling, and presents itself as a way to escape application-level sharding. If it works as advertised, that is attractive to teams that have outgrown a single node but do not want to rewrite their application around shard keys and distributed transaction caveats.

The phrase PostgreSQL-compatible is doing a lot of work here. Compatibility can mean wire protocol support, SQL surface similarity, extension support, planner behavior, operational semantics, or all of the above. Serious customers will want to know exactly where HorizonDB behaves like Postgres, where it differs, and which extensions or edge cases do not carry over cleanly.

The Managed Postgres Portfolio Now Has a Fork in the Road

Microsoft is careful to say that Azure Database for PostgreSQL and Azure HorizonDB serve different workload realities. That is the right argument, because pretending that one service can satisfy every Postgres use case usually ends in disappointment.

Azure Database for PostgreSQL remains the more straightforward option for teams that want a managed service aligned closely with open-source Postgres. It fits lift-and-shift migrations, conventional application backends, and teams that value predictability over architectural novelty. For many workloads, that is exactly the right trade.

HorizonDB is aimed at a different class of problem: high-throughput, low-latency systems that need horizontal scale, rapid recovery, and multi-zone resilience without pushing all the complexity into application code. That is a cloud database problem as much as a PostgreSQL problem. It is the territory where hyperscalers try to justify their premium.

The danger for Microsoft is product confusion. If customers hear “Postgres on Azure” and then discover two services with different scaling models, compatibility promises, pricing assumptions, and operational behaviors, they will need crisp guidance. A portfolio can look like choice to a cloud architect and like ambiguity to an overworked platform team.

Developer Experience Is the Quiet Lock-In Layer

Microsoft’s Postgres strategy does not stop at the database service. The company is also investing in the places where developers actually touch databases: Visual Studio Code, GitHub Copilot, migration tooling, schema exploration, and diagnostics. That is less dramatic than a new distributed storage architecture, but it may be more influential in day-to-day adoption.

The PostgreSQL extension for Visual Studio Code has become a central part of Microsoft’s pitch. The idea is straightforward: let developers provision, inspect, query, tune, and migrate without constantly leaving the editor. Add Copilot assistance, and SQL authoring becomes part of the same AI-assisted workflow Microsoft is pushing across its developer platform.

This is sensible product strategy. Developers do not choose databases only from white papers. They choose what is easy to start, easy to inspect, easy to debug, and easy to explain to the next person on the team. If Azure Postgres feels native inside the editor many developers already use, Microsoft lowers the friction that often keeps managed services out of early architectural decisions.

But this is also where cloud gravity becomes subtle. A VS Code extension that helps with local Postgres is one thing; a workflow that makes Azure provisioning, Azure monitoring, Azure identity, Azure AI models, and Azure migration paths feel like the natural default is another. Microsoft does not need to block portability to benefit from shaping the path of least resistance.

The Open-Source Bargain Still Has to Be Renewed in Public

Microsoft’s broader Postgres pitch depends on a delicate bargain. The company wants credit for contributing upstream while also selling differentiated managed services. That is not inherently contradictory. Open-source infrastructure has always had companies that contribute to a shared core while competing on operations, support, integration, and scale.

The question is whether the value flows both ways. Microsoft’s upstream work on PostgreSQL internals benefits users far beyond Azure if it lands in the community release. That is the strongest part of the company’s argument. A performance improvement in core Postgres is more credible than a proprietary feature that only works after a customer moves to one cloud.

Community sponsorship also plays a role, though it should not be overstated. Conferences, podcasts, user groups, and educational programs help maintain the ecosystem, but they are not a substitute for code, review, bug fixes, documentation, and long-term maintenance. PostgreSQL’s community is unusually good at distinguishing between meaningful participation and brand proximity.

Microsoft seems aware of this. The company’s emphasis on committers, contributors, and annual transparency around Postgres work is designed to satisfy a community that expects receipts. The more Microsoft makes its case through upstream artifacts rather than campaign language, the more persuasive it will be.

Enterprise IT Will Read This as a Risk Management Story

For IT pros and administrators, the Microsoft announcement is less about philosophical database trends and more about risk. If Postgres is becoming a standard enterprise platform, then the questions become familiar: Who supports it? How does it fail? How do upgrades work? How do we monitor it? What happens when it grows beyond the architecture we started with?

Azure Database for PostgreSQL answers part of that with a managed version of a familiar system. HorizonDB answers another part by suggesting that Microsoft can carry Postgres-style workloads into more demanding cloud-native territory. The two together let Microsoft tell CIOs that they do not have to choose between open-source adoption and enterprise-scale operations.

That is a powerful message in organizations trying to modernize away from proprietary database estates. Migration from legacy commercial databases to Postgres is attractive for cost and flexibility, but the hard parts are rarely the first schema conversion. The hard parts are performance parity, stored procedure rewrites, operational readiness, staff training, and the long tail of application assumptions.

This is where Microsoft’s combination of Copilot-assisted migration, VS Code workflows, managed Postgres, and AI integrations becomes more than a developer convenience story. It becomes a platform migration story. Microsoft wants to be the vendor that makes Postgres feel safe for organizations that like the economics of open source but still want enterprise scaffolding.

The Hyperscaler Postgres Race Is Really About Control Planes

AWS, Google Cloud, Microsoft, and specialized database vendors all understand the same thing: PostgreSQL has become too important to leave as a checkbox. The winning cloud will not merely host Postgres; it will surround it with observability, migration paths, AI features, distributed storage, security controls, and developer tooling.

That means the competitive battlefield is shifting from the database engine alone to the control plane around it. Backups, failover, identity integration, patching, analytics replication, vector indexing, model access, and IDE workflows are becoming part of the managed Postgres product. The core database remains open, but the operational experience becomes increasingly cloud-shaped.

This is both useful and uncomfortable. Useful, because most teams do not want to hand-build every operational component around Postgres. Uncomfortable, because the more the value moves into managed orchestration, the harder it becomes to compare services cleanly or move between them without losing capabilities.

Microsoft’s challenge is to make Azure’s Postgres advantages feel additive rather than enclosing. If Azure Database for PostgreSQL and HorizonDB make Postgres easier to run while preserving the mental model developers trust, Microsoft gains credibility. If the services drift into “Postgres-ish” experiences that require customers to absorb hidden platform assumptions, the community will notice.

The Practical Reading for WindowsForum’s Database Crowd

For Windows enthusiasts, sysadmins, and IT pros, this announcement is worth reading less as a single product update and more as a marker of where Microsoft’s database strategy is heading. Postgres is no longer an accommodation in the Azure catalog. It is becoming one of the company’s main bets for AI-era application infrastructure.

That does not mean every workload should move to HorizonDB, or even to Azure. It means Microsoft now has strong incentives to make PostgreSQL on Azure feel first-class across the stack: editor, cloud service, AI integration, security, monitoring, migration, and community presence. Those incentives will shape tooling and documentation that many teams encounter before they ever make a formal architecture decision.

There is also a Windows-adjacent angle here. Microsoft’s developer story increasingly assumes that VS Code, GitHub, Copilot, Azure, and open-source infrastructure all belong in the same workflow. Postgres is being pulled into that continuum. The database that once symbolized a non-Microsoft stack is now being presented as a natural citizen of Microsoft’s developer universe.

That is not hypocrisy; it is the modern software market. Microsoft learned that owning the operating system was not enough, then that owning the developer toolchain was not enough, and now that owning the cloud relationship is not enough unless the underlying open-source platforms are treated as strategic peers. Postgres fits that lesson perfectly.

The Fine Print Will Decide Whether the Pitch Holds

The big claims around HorizonDB deserve careful testing. Sub-millisecond multi-zone commit latency, large-scale compute growth, shared storage, and PostgreSQL compatibility are impressive promises, but database buyers should treat them as engineering claims to validate, not slogans to repeat. Preview services in particular need workload-specific evaluation.

Compatibility should be tested with real schemas, real extensions, real query plans, real failover drills, and real operational tooling. A benchmark that shows strong throughput under ideal conditions is useful, but it does not answer whether an application’s ugliest migration script, oddest extension, or most fragile report query behaves as expected.
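
One cheap first pass at the extension part of that testing is to diff extension inventories before any migration work starts. The sketch below assumes each inventory is a name-to-version mapping, for example gathered by running `SELECT extname, extversion FROM pg_extension` against the current server and against the candidate service. The function name and the sample data are hypothetical, and nothing here reflects what any particular Azure service actually supports.

```python
from typing import Dict, List, Tuple, TypedDict


class ExtensionReport(TypedDict):
    missing: List[str]
    version_mismatch: Dict[str, Tuple[str, str]]


def compare_extensions(
    source: Dict[str, str], target: Dict[str, str]
) -> ExtensionReport:
    """Report extensions the target lacks or provides at a different version."""
    # Extensions installed on the source that the target does not offer at all.
    missing = sorted(name for name in source if name not in target)
    # Extensions present on both sides, as (source_version, target_version).
    version_mismatch = {
        name: (source[name], target[name])
        for name in sorted(source)
        if name in target and source[name] != target[name]
    }
    return {"missing": missing, "version_mismatch": version_mismatch}


# Hypothetical inventories, for illustration only.
current_server = {"plpgsql": "1.0", "pg_trgm": "1.6", "my_custom_ext": "0.3"}
candidate_service = {"plpgsql": "1.0", "pg_trgm": "1.5"}
report = compare_extensions(current_server, candidate_service)
```

A clean report proves very little on its own, since extension presence says nothing about planner behavior or failover semantics, but a non-empty `missing` list is a fast way to find blockers before the expensive tests begin.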

The same skepticism applies to AI features. Vector search inside or near Postgres can simplify architectures, but only if it integrates cleanly with security, filtering, freshness, and cost controls. Model invocation from SQL can be elegant, but it also raises questions about latency, determinism, auditability, and blast radius.

None of this invalidates Microsoft’s strategy. It simply puts it in the category where serious database work belongs: promising, consequential, and requiring proof. The more Microsoft invites that scrutiny, the stronger its Postgres story becomes.

The Postgres Bet Leaves Six Hard Truths on the Table

Microsoft’s latest Postgres push is best understood as a platform argument rather than a product announcement. The company is saying that upstream engineering, managed cloud services, AI features, and developer tooling now have to move together. For teams evaluating Postgres on Azure, the practical implications are concrete.

- Microsoft is treating PostgreSQL as a strategic Azure workload, not a secondary open-source option beside its traditional database portfolio.

- PostgreSQL 18 gives Microsoft a credible engineering story because its highlighted improvements touch I/O, vacuum behavior, and planning rather than only surface-level features.

- Azure Database for PostgreSQL and Azure HorizonDB target different operating models, so buyers should not assume they are interchangeable managed Postgres offerings.

- HorizonDB’s value will depend on how its PostgreSQL compatibility holds up under real extensions, migrations, failover events, and production query patterns.

- AI features around vector search, model invocation, and semantic ranking are most useful when they reduce architecture sprawl without weakening governance or portability.

- Microsoft’s strongest open-source argument remains upstream contribution, because improvements that land in core PostgreSQL benefit users whether or not they run on Azure.

Source: Microsoft Azure Blog, “From commit to cloud: Powering what’s next for PostgreSQL”