Success in enterprise AI now hinges less on novelty and more on operationalization: the ability to scale models, embed them into everyday workflows, and govern them across hybrid and regulated environments. Recent industry lists and vendor metrics underscore this reality, placing Microsoft, AWS, Google Cloud, and major systems integrators at the center of 2026 AI deployment decisions.

Analytics Insight’s "Top 10 AI Integration Companies to Consider in 2026" frames the market through a procurement lens: enterprises want partners that can deliver measurable outcomes, manage governance, and reduce time-to-production for agentic and generative AI use cases. That editorial snapshot groups vendors into three practical archetypes — hyperscale cloud platforms, global consultancies/systems integrators, and specialist ML/platform vendors plus engineering boutiques — because real-world AI programs require compute and models, delivery muscle, and niche platform capabilities working in concert.

This article verifies the headline vendor figures behind that narrative, explains why Microsoft is often named the likely winner in seat-driven adoption scenarios, and contrasts cloud-first scale plays (AWS, Google Cloud) with consultancies and specialist vendors. It also offers a procurement-minded checklist for CIOs and IT leaders who must decide whether to prioritize productized seat-based AI, multi-cloud flexibility, or specialist platform fit.

Why the conversation shifted from models to operationalization

Early generative-model hype focused on benchmarks and novelty. By late 2024–2025 the emphasis moved to production readiness: model lifecycle management (MLOps), observability, data and content quality, agent orchestration, and governance. These are the elements that make an assistant or an agent useful, auditable, and sustainable in regulated environments. Analytics Insight and follow-on analyses stress that the winners will be those who can combine domain expertise, governance tooling, and repeatable delivery patterns.

Two practical market signals illustrate the point:

- Hyperscalers are investing heavily in capacity and in product suites that enable packaged, seat-based experiences (for example, Copilot integrations), which accelerate enterprise adoption through familiar UI/UX and identity plumbing.

- Systems integrators and consultancies are packaging domain-specific accelerators and delivery playbooks to reduce the change-management burden of large rollouts.

The market leaders: what the numbers say (verified)

Large vendor financial and product signals are highly relevant because they show where enterprise demand and capacity investment are concentrated.

- Amazon Web Services (AWS) reported AWS segment sales of $30.9 billion for Q2 2025, a clear indicator of continued investment and enterprise adoption of cloud infrastructure for AI workloads. This was announced in Amazon’s own Q2 2025 release and echoed by financial coverage.

- Microsoft / Azure disclosed that Azure has surpassed $75 billion in annual revenue, with Microsoft highlighting cloud-and-AI as the growth engine supporting that milestone. Those figures were published in Microsoft’s fiscal reporting and reported widely in the press. The implication: Microsoft’s scale and product integration are now material drivers of enterprise AI monetization.

- Google Cloud reported roughly $15.2 billion in Google Cloud revenue for Q3 2025, underlining continued traction for Vertex AI and data-centric developer tooling. This was confirmed in Alphabet’s Q3 2025 results and subsequent financial coverage.

Hyperscalers: apples-to-apples strengths and trade-offs

Microsoft — productized AI and seat-driven adoption

Microsoft’s competitive advantage is the combination of product integration (Azure + Microsoft 365 Copilot + Dynamics + GitHub) and enterprise identity/governance tooling (Azure AD / Microsoft Entra, Microsoft Purview). That productized path dramatically lowers friction for organizations already invested in Microsoft software: seat-based Copilot rollouts are easier to pilot, adopt, and monetize because they fit existing workflows and licensing models. Microsoft’s own product announcements (Copilot Studio, Copilot Tuning, multi-agent orchestration, identity for agents, and Purview protections for agent data) demonstrate a deliberate emphasis on governance and manageability.

Strengths

- Low adoption friction for Microsoft-centric enterprises.

- Integrated governance (identity, Purview, tenant-level controls) that aligns with compliance needs.

- Tools such as Copilot Tuning let organizations tune Copilot behavior to company data while keeping operations inside Microsoft service boundaries.

Risks

- Vendor lock‑in risk: seat-based monetization and deep integration with Microsoft Graph/Dataverse can make portability expensive.

- Capacity constraints: demand for top-end accelerators may outpace regional supply; validate GPU/accelerator SLAs for target regions.

AWS — breadth, modularity, and custom silicon

AWS leads on global scale, service breadth, and flexibility for build‑your‑own stacks. Services like SageMaker and Bedrock (and the company’s custom accelerators) give teams the raw building blocks to host, train, and deploy foundation models and agentic systems. AWS’s Q2 2025 results reflect the commercial scale that funds continued investment.

Strengths

- Global availability and a very broad catalog of managed services.

- Modularity that lets engineering teams pick best-of-breed components.

- Custom silicon (Trainium, Inferentia) that can improve TCO for specific workloads.

Risks

- Integration burden: AWS sells composable building blocks; significant engineering and integration work is often required to reach productized outcomes.

- Pricing complexity: total cost of ownership can diverge from initial estimates without active governance.
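To make the TCO point concrete, the sketch below breaks a monthly estimate into compute, storage, egress, and a managed-service premium. It is a minimal illustration assuming a simple linear cost model; the `MonthlyUsage` fields, every rate, and the choice to apply the premium to compute only are hypothetical placeholders, not AWS list prices or a published pricing formula.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUsage:
    """Hypothetical monthly usage for one AI workload; all rates are placeholders."""
    gpu_hours: float
    gpu_hour_rate: float          # USD per accelerator-hour
    storage_gb: float
    storage_rate_per_gb: float    # USD per GB-month
    egress_gb: float
    egress_rate_per_gb: float     # USD per GB transferred out
    managed_premium_pct: float    # e.g. 0.15 for a 15% managed-service markup


def monthly_tco(u: MonthlyUsage) -> dict[str, float]:
    """Break a monthly total-cost-of-ownership estimate into its components."""
    compute = u.gpu_hours * u.gpu_hour_rate
    storage = u.storage_gb * u.storage_rate_per_gb
    egress = u.egress_gb * u.egress_rate_per_gb
    # Simplifying assumption: the managed premium applies to compute only.
    premium = compute * u.managed_premium_pct
    return {
        "compute": compute,
        "storage": storage,
        "egress": egress,
        "premium": premium,
        "total": compute + storage + egress + premium,
    }


usage = MonthlyUsage(gpu_hours=1_000, gpu_hour_rate=2.00,
                     storage_gb=5_000, storage_rate_per_gb=0.02,
                     egress_gb=2_000, egress_rate_per_gb=0.09,
                     managed_premium_pct=0.15)
breakdown = monthly_tco(usage)
print(f"compute-only: ${breakdown['compute']:,.2f}  full TCO: ${breakdown['total']:,.2f}")
```

With these placeholder inputs, a compute-only estimate of $2,000 grows to $2,580 once storage ($100), egress ($180), and the 15% premium ($300) are counted, which is the kind of gap active cost governance is meant to catch early.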

Google Cloud — data-centricity and specialized hardware

Google Cloud’s Vertex AI and BigQuery integration make it the natural choice for analytics-first ML teams that prioritize data pipelines and efficient TPU-backed training. Google’s Q3 2025 numbers reflect meaningful enterprise traction for these offerings.

Strengths

- Strong data tooling and developer ergonomics.

- TPU-backed compute and research alignment.

- Competitive cost/performance for certain ML workloads.

Risks

- Historically narrower penetration in some regulated sectors; integration with legacy on-prem architectures may require additional effort.

Consultancies and systems delivery muscle

Analytics Insight and subsequent analyses list consultancies (Accenture, Deloitte, Infosys, TCS, Wipro, Capgemini, Cognizant) as vital because they transform platforms into business processes. Their strengths are delivery scale, vertical accelerators, and program governance. But buyers must treat headline claims (staff counts and AI revenue) with healthy skepticism and require verifiable KPIs in contracts.

Practical procurement guardrails for consultancies

- Require phased KPIs (pilot-to-production gates) tied to measurable production metrics (accuracy, latency, uptime).

- Insist on no-training/no-derivative clauses when sensitive enterprise data flows into third-party models.

- Mandate model factsheets, auditable lineage, and documented bias tests.
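Phased KPI gates are easiest to enforce when the thresholds are written down as executable checks rather than prose. The following is a sketch under stated assumptions: `GateThresholds`, `evaluate_gate`, and the example threshold values are hypothetical, accuracy is treated as a simple correct/total ratio, and p95 latency uses the nearest-rank percentile method.

```python
import math
from dataclasses import dataclass


@dataclass
class GateThresholds:
    """Hypothetical thresholds agreed for promoting a pilot to production."""
    min_accuracy: float        # share of evaluation cases handled correctly, 0..1
    max_p95_latency_ms: float  # 95th-percentile response time ceiling
    min_uptime: float          # share of the measurement window available, 0..1


def p95(values: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def evaluate_gate(correct: int, total: int, latencies_ms: list[float],
                  uptime: float, t: GateThresholds) -> dict[str, bool]:
    """Pass/fail per KPI plus an overall 'promote' verdict that needs every KPI to pass."""
    results = {
        "accuracy": total > 0 and correct / total >= t.min_accuracy,
        "latency": bool(latencies_ms) and p95(latencies_ms) <= t.max_p95_latency_ms,
        "uptime": uptime >= t.min_uptime,
    }
    results["promote"] = all(results.values())
    return results


thresholds = GateThresholds(min_accuracy=0.90, max_p95_latency_ms=500, min_uptime=0.995)
print(evaluate_gate(95, 100, [100.0] * 19 + [900.0], 0.999, thresholds))
```

Requiring every KPI to pass, rather than averaging them, keeps a strong accuracy score from masking a latency or uptime shortfall.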

Specialist platforms and boutiques: where velocity and portability win

Specialist ML platforms (DataRobot, Palantir, Scale AI, Snowflake) and engineering boutiques (Slalom, 10Pearls, ScienceSoft, smaller shops) fill a critical role: rapid POC-to-production pipelines and focused domain products (IDP, labeling, evaluation, vector search). Analytics Insight’s list mixes these vendors because enterprises need both the hyperscaler foundation and focused tools that improve model data quality or deploy specific agents.

When to choose specialists or boutiques

- If you need a narrow outcome (e.g., claims processing agent, enterprise search, or a specialized IDP pipeline), a specialist can deliver faster ROI.

- If portability and negotiated IP/data clauses matter more than blanket seat-based licensing, boutiques typically offer more flexible contracting.

- Case studies often drive vendor claims; require operational telemetry and sample KPIs during diligence to validate vendor performance promises.

Governance, MLOps, and the operational playbook

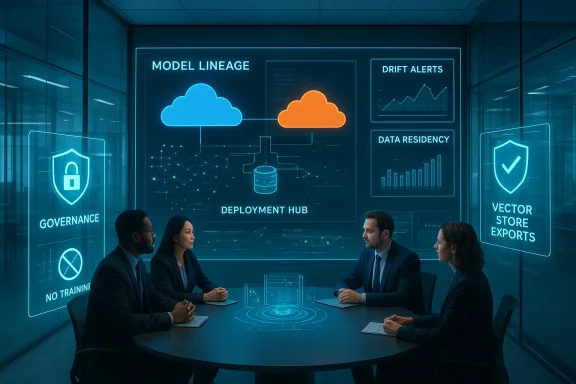

Scaling AI safely is not just an engineering challenge; it is a governance and procurement challenge. Across vendor analyses, the recurring operational requirements include:

- Model lineage and drift detection: continuous monitoring to detect performance regressions and dataset drift.

- Runbooks and incident response for model failures and misbehaviors.

- Data-protection clauses: explicit requirements about training on customer data, deletion SLAs, and exportability of embeddings/vector stores.

- Demand no‑training or restricted-training clauses for models when sensitive enterprise data is processed.

- Require vector-store portability and export rights for embeddings and model artifacts.

- Insist on third‑party auditing rights and periodic penetration tests focused on model interfaces and agent connectors.
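Drift detection can start simpler than a full observability platform. One widely used statistic is the Population Stability Index (PSI), which compares the binned distribution of a live feature or score (a_i) against a baseline (e_i) as the sum over bins of (a_i - e_i) * ln(a_i / e_i). The sketch below is a minimal illustration with several assumptions: equal-width bins taken from the baseline range, a small epsilon for empty bins, and the common 0.1 / 0.25 rule-of-thumb bands, which are conventions rather than a standard and should be tuned per model.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10,
        eps: float = 1e-6) -> float:
    """Population Stability Index of `actual` against the `expected` baseline."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(sample: list[float]) -> list[float]:
        counts = [0] * bins
        for x in sample:
            # Equal-width bins over the baseline range; out-of-range values are clamped.
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(sample), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(score: float) -> str:
    """Rule-of-thumb bands; thresholds are conventions, not a standard."""
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "investigate"
    return "drift"


baseline = [float(i) for i in range(100)]
live = [x + 50 for x in baseline]  # simulated shift in the live distribution
print(drift_status(psi(baseline, baseline)), drift_status(psi(baseline, live)))
```

In practice a check like this would run on a schedule per feature and per model output, with a "drift" result opening an incident under the runbooks described above.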

Practical buyer checklist: how to pick the right integration partner

Shortlist vendors by answering a set of procurement-focused questions. Use the following checklist as a deterministic evaluation flow.

Business fit and outcomes

- What specific business process will the AI change, and how will success be measured?

- Are the KPIs production-ready (latency, throughput, error budgets, user activation rates)?

Data and MLOps readiness

- Is there a governed semantic layer and an agreed data contract?

- Does the vendor provide model lineage, drift detection, and reproducible training artifacts?

Governance & compliance

- Can the vendor commit to no‑training/no‑derivative clauses where necessary?

- Are model factsheets and bias/robustness test results included in delivery artifacts?

Portability & exit terms

- Are vector stores and model artifacts exportable in standard formats?

- Are there tested rollback procedures to restore prior models quickly?

Operational economics

- Validate regional GPU/accelerator SLAs and capacity guarantees before booking major training runs.

- Estimate total cost of ownership, including egress, storage, and managed service premiums.

Delivery & change management

- Can the vendor provide references showing staged production rollouts with measurable adoption?

Codify these checks as clauses in the Statement of Work and include gating milestones tied to explicit production KPIs.
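Because the checklist is meant as a deterministic evaluation flow, it can be encoded so that two reviewers scoring the same diligence answers always get the same shortlist. A minimal sketch, assuming governance and portability are hard gates (any "no" disqualifies) and the remaining questions only rank eligible vendors; the `VendorAnswers` structure, the area keys, and the question keys are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class VendorAnswers:
    """Hypothetical diligence record: checklist area -> question -> answered yes?"""
    name: str
    answers: dict[str, dict[str, bool]] = field(default_factory=dict)


# Assumption for this sketch: these areas are hard gates; any "no" disqualifies.
MUST_PASS = ("governance", "portability")


def evaluate(vendor: VendorAnswers) -> tuple[bool, float]:
    """Return (eligible, share of all answered questions that were 'yes')."""
    for area in MUST_PASS:
        checks = vendor.answers.get(area, {})
        # An area with no recorded answers fails rather than passing by default.
        if not checks or not all(checks.values()):
            return False, 0.0
    flat = [ok for area in vendor.answers.values() for ok in area.values()]
    return True, sum(flat) / len(flat)


def shortlist(vendors: list[VendorAnswers]) -> list[str]:
    """Eligible vendors, highest coverage first; ties broken by name for determinism."""
    eligible = []
    for v in vendors:
        ok, coverage = evaluate(v)
        if ok:
            eligible.append((v.name, coverage))
    eligible.sort(key=lambda item: (-item[1], item[0]))
    return [name for name, _ in eligible]
```

Breaking ties alphabetically means the output order never depends on the order in which vendors were entered.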

Notable strengths and risks from the Analytics Insight list

Strengths highlighted across the vendor set

- Ecosystem depth among hyperscalers: companies like Microsoft and AWS combine compute scale with product features (seat-based Copilot, managed model hosting) that reduce friction for enterprise rollouts.

- Delivery expertise of consultancies: large integrators bring vertical playbooks and governance frameworks that matter in regulated industries.

- Specialist capability for data and MLOps: focused vendors address choke points (labeling, vector search, IDP) that determine real model quality in production.

Risks

- Vendor lock‑in through seat monetization: productized Copilots accelerate adoption but can increase the cost and complexity of migration away from the platform. This is a strategic trade-off for many CIOs.

- Operational complexity of agentic AI: agents amplify east‑west tool calls and create new incident management vectors; firms must plan SRE, network, and security for agent traffic.

- Unverified headline metrics: many vendors report large AI staffing numbers and AI‑related revenues; these are often directional and not auditable without vendor diligence documentation. Request audited or contract-level proof for material claims.

Tactical recommendations for 2026 procurement cycles

- Prioritize proof-of-value gates: require pilot KPIs before enterprise-wide seat purchases or long-term licensing commitments.

- Negotiate data sovereignty and model training clauses: explicitly limit or forbid training of third-party foundation models on sensitive corpora without a written agreement.

- Insist on export rights for vector stores and model artifacts so your organization retains control over its knowledge graph and embeddings.

- Build a multi-vendor architecture where practical: use hyperscaler capacity for training, a specialist platform for vector search/IDP, and a boutique for faster domain-specific delivery. This minimizes single-vendor exit risk and combines strengths where they matter.
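Export rights are only useful if the exported data can be reloaded elsewhere. A minimal sketch, assuming embeddings are exported as JSON Lines with one `{id, embedding, metadata}` record per line; the format choice and the `to_jsonl`/`from_jsonl` helpers are illustrative assumptions, not any vendor's export API.

```python
import json
from typing import Iterable


def to_jsonl(records: Iterable[dict]) -> str:
    """Serialize {id, embedding, metadata} records as JSON Lines text."""
    lines = []
    for rec in records:
        # Keep only portable fields so the export does not depend on a vendor schema.
        row = {"id": rec["id"],
               "embedding": [float(x) for x in rec["embedding"]],
               "metadata": rec.get("metadata", {})}
        lines.append(json.dumps(row))
    return "\n".join(lines) + "\n" if lines else ""


def from_jsonl(text: str) -> list[dict]:
    """Parse JSON Lines text back into records, checking embedding dimensions agree."""
    rows = [json.loads(line) for line in text.splitlines() if line.strip()]
    dims = {len(r["embedding"]) for r in rows}
    if len(dims) > 1:
        raise ValueError(f"inconsistent embedding dimensions: {sorted(dims)}")
    return rows


records = [{"id": "doc-1", "embedding": [0.1, 0.2, 0.3], "metadata": {"source": "kb"}},
           {"id": "doc-2", "embedding": [0.4, 0.5, 0.6]}]
restored = from_jsonl(to_jsonl(records))
print([r["id"] for r in restored])
```

Running a round-trip like this against a vendor's sample export during diligence is a cheap way to check that exported artifacts are complete enough to reload on another platform.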

Quick vendor scorecard (decision heuristics)

- Choose Microsoft/Azure when: the organization is heavily invested in Microsoft 365/Dynamics and needs fast seat-driven adoption with integrated governance.

- Choose AWS when: the team needs modular building blocks, advanced MLOps, and global compute capacity with maximum flexibility.

- Choose Google Cloud when: the program is analytics-first and benefits from BigQuery + Vertex AI tight coupling.

- Choose large consultancies when: regulatory change management, vertical accelerators, and long-term managed services are the top priorities.

- Choose specialist vendors or boutiques when: speed, portability, and targeted ROI for a discrete use case matter more than blanket platform adoption.
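These heuristics read best as independent rules rather than a single winner-takes-all choice, which fits the multi-vendor architecture recommended earlier. A sketch, assuming a yes/no program profile; `ProgramProfile`, its field names, and `suggest_partners` are hypothetical shorthand for the conditions listed, not a scoring model from the source.

```python
from dataclasses import dataclass


@dataclass
class ProgramProfile:
    """Hypothetical yes/no profile of an AI program; field names are illustrative."""
    microsoft_365_centric: bool = False
    needs_modular_building_blocks: bool = False
    analytics_first: bool = False
    regulated_change_program: bool = False
    discrete_use_case: bool = False


def suggest_partners(p: ProgramProfile) -> list[str]:
    """Apply each scorecard heuristic independently; several can match at once."""
    rules = [
        (p.microsoft_365_centric, "Microsoft/Azure"),
        (p.needs_modular_building_blocks, "AWS"),
        (p.analytics_first, "Google Cloud"),
        (p.regulated_change_program, "Large consultancy"),
        (p.discrete_use_case, "Specialist vendor or boutique"),
    ]
    return [name for matched, name in rules if matched]


print(suggest_partners(ProgramProfile(analytics_first=True, discrete_use_case=True)))
```

An empty result signals that none of the heuristics clearly apply and the program needs the fuller checklist evaluation.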

Conclusion

Analytics Insight’s Top‑10 framing is a practical reminder: enterprise AI is not won on novelty alone but on scalability, ecosystem maturity, governance, and delivery discipline. Microsoft’s combination of seat-based productization and identity/governance features explains why many analysts and enterprise buyers see it as poised for broad adoption inside Microsoft-centric enterprises. At the same time, AWS and Google Cloud remain indispensable for organizations that prioritize modular control or data-first analytics, respectively.

For CIOs the imperative is clear: treat AI integration as a continuous program, not a one-off purchase. Demand verifiable KPIs, insist on governance protections (no‑training clauses, exportability of vector stores, model factsheets), and design procurement to balance adoption speed with long-term portability. Those who pair hyperscaler capacity with specialist MLOps and disciplined delivery will extract the most durable value from AI in 2026 and beyond.

Important verified figures referenced in this analysis:

- AWS Q2 2025 segment sales: $30.9 billion.

- Microsoft Azure annual revenue milestone: Azure surpassed $75 billion.

- Google Cloud Q3 2025 revenue: approximately $15.2 billion.

Source: Analytics Insight Top 10 AI Integration Companies to Consider in 2026