Gold Bond’s experience shows that for many small and midsize businesses, the fastest route to meaningful AI value is not a sweeping platform bet but a string of targeted, tactical wins — a theme that is reshaping how CIOs plan, measure and govern AI adoption across the enterprise.

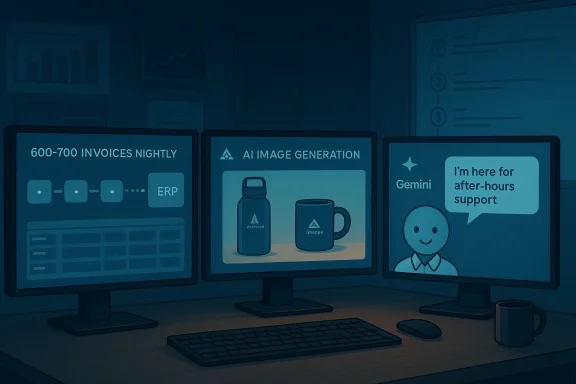

Background / Overview

Gold Bond Inc., a promotional products supplier based in Tennessee, has quietly built a practical playbook for AI inside a classic midmarket operation: targeted workshops to lift user adoption, a pilot that automates a high-volume invoice/artwork workflow, image-generation tooling that accelerates design tasks, and plans to deploy an AI voice agent for after-hours customer service. Those moves, small in scope but high in operational impact, reflect a pragmatic mindset many CIOs are adopting as generative and agentic AI become both more capable and more complex to manage.

The company’s CIO, Matt Price, framed the approach plainly: find the places where the business is spending time or money on repetitive work, and see if AI can automate the workflow. That hands-on strategy produced measurable results: staff reported substantial time savings after a Gemini training program, and the team moved a formerly manual, part-time task into an automated pipeline running on Google Cloud’s Vertex AI and Gemini image models, integrated with Oracle NetSuite via SuiteScript.

That story matters beyond one company because it touches the central challenge for most technology leaders today: how to turn AI hype into sustainable operational value without sacrificing security, data quality or long-term agility. The Gold Bond case highlights both opportunities — rapid adoption, productivity gains, leaner headcount for repetitive work — and risks — governance gaps, technical debt, and variable maturity across business units.

What Gold Bond actually did (a short case study)

Small, deliberate steps — not a ‘big bang’

Gold Bond’s AI journey began with a focused, low-risk program: workshops run by a Google-focused partner to teach staff how to use Gemini inside everyday workflows. The workshops had a clear adoption goal: help employees use AI to reduce busy work and speed routine tasks. Reported usage jumped sharply after training, and employee feedback indicated substantial time savings.

From that starting point Gold Bond built two operational pilots that moved beyond experimentation:

- Invoice and artwork processing automation: The company handles roughly 120,000 invoices a year from distributors and had a manual process for categorizing customer artwork and recording it in Oracle NetSuite. A pilot sent nightly batches of 600–700 invoices through a Vertex AI/Gemini pipeline and wrote results back to NetSuite using SuiteScript, reducing manual effort and reassigning the part-time worker to higher-value tasks.

- Image-generation for product design: Using advanced image models (publicly discussed under names such as “Nano Banana Pro,” the current generation of Google’s Gemini image stack), Gold Bond automated parts of product visualization and image editing workflows that are central to its business of branding drinkware and other products.

Why these choices make sense

Gold Bond chose internal, repeatable workflows with clear inputs and outputs. Those are the best early targets for generative and agentic AI:

- High volume: 600–700 invoices a night is enough volume to justify automating the task.

- Structured outcome: The required output is a limited set of categories and NetSuite entries — easier to validate and monitor than free-form strategic work.

- Measurable ROI: Time saved and reduced headcount for repetitive tasks are tangible metrics that executives understand.

- Contained risk: Scoping the planned voice agent to after-hours support limits customer exposure while the team evaluates how it behaves.
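Because the output is a small, fixed set of categories, accuracy can be checked directly against a human-labeled sample. The sketch below is illustrative, not Gold Bond's method: it assumes human labels are ground truth, uses hypothetical category names, and computes precision (how often a predicted category is right) and recall (how many true items of a category the model catches).

```python
from collections import Counter
from typing import Dict, List


def per_category_metrics(human: List[str], model: List[str]) -> Dict[str, Dict[str, float]]:
    """Precision and recall for each category, treating the human labels as ground truth."""
    if len(human) != len(model):
        raise ValueError("label lists must be the same length")
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(human, model):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # model assigned this category incorrectly
            fn[truth] += 1  # model missed the true category
    metrics = {}
    for cat in sorted(set(human) | set(model)):
        predicted = tp[cat] + fp[cat]
        actual = tp[cat] + fn[cat]
        metrics[cat] = {
            "precision": tp[cat] / predicted if predicted else 0.0,
            "recall": tp[cat] / actual if actual else 0.0,
        }
    return metrics
```

Comparing these numbers with the same metrics for a human reviewer on the same sample gives a concrete bar the model has to clear before its output is trusted.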

The technology stack under the hood (practical verification)

Gold Bond’s implementation is notable because it ties consumer-grade AI capabilities into enterprise systems. The architecture model is straightforward and replicable:

- Model & runtime: Google’s Gemini family (later image-focused releases marketed under names like “Nano Banana Pro”) running on Vertex AI for scalable inference and job orchestration.

- Integration layer: SuiteScript inside Oracle NetSuite to automate record creation and update ERP workflows after the model classifies artwork or extracts metadata.

- Workflow batching: Nightly batch jobs that feed hundreds of invoices to the model, with results validated and written back automatically.

- Governance & partners: External consultancy (a Google-focused partner) to run adoption workshops and help with model integration and training.
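The nightly batch pattern can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Gold Bond's code: `classify` is a hypothetical stand-in for whatever call sends a chunk of invoices to a Gemini model on Vertex AI, `write_back` is a hypothetical stand-in for the SuiteScript-side update in NetSuite, and the category names, chunk size and confidence threshold are invented for the example. The point is the shape: chunk the night's invoices, validate each result, write only what passes, and queue the rest for a person.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, Iterator, List

# Hypothetical artwork categories; a real deployment would use the values its ERP expects.
ALLOWED_CATEGORIES = {"screen_print", "laser_engrave", "full_color"}


@dataclass
class Result:
    invoice_id: str
    category: str
    confidence: float  # assumed to be reported by the classification step


@dataclass
class RunSummary:
    written: List[str] = field(default_factory=list)
    needs_review: List[str] = field(default_factory=list)


def chunks(items: List[str], size: int) -> Iterator[List[str]]:
    """Split the night's invoice IDs into fixed-size request batches."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def run_nightly_batch(
    invoice_ids: Iterable[str],
    classify: Callable[[List[str]], Iterable[Result]],  # hypothetical model call
    write_back: Callable[[Result], None],               # hypothetical ERP update
    chunk_size: int = 100,
    min_confidence: float = 0.9,
) -> RunSummary:
    summary = RunSummary()
    for batch in chunks(list(invoice_ids), chunk_size):
        for result in classify(batch):
            # Validation gate: only known categories with enough confidence reach the ERP.
            if result.category in ALLOWED_CATEGORIES and result.confidence >= min_confidence:
                write_back(result)
                summary.written.append(result.invoice_id)
            else:
                summary.needs_review.append(result.invoice_id)
    return summary
```

Keeping the model call and the ERP write behind plain function parameters also makes the pipeline testable with fakes before it touches production records.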

A few technical notes verified across public reports and vendor documentation:

- Modern image models used for product visuals support multi-image conditioning, improved text rendering within images, and finer-grained editing primitives that accelerate packaging and branding mockups. These model capabilities reduce the friction designers face when iterating on brand assets.

- Vertex AI provides scalable batch and streaming inference patterns that suit nightly invoice processing and can be wired to enterprise logging and monitoring systems for traceability.

- SuiteScript is the standard way to automate tasks inside NetSuite and it supports secure programmatic updates when combined with appropriate API and role configurations.

Why “small wins” are the strategic play for most CIOs

1) Speed of value beats theoretical completeness

Large, ambitious AI programs often stall because they try to solve everything at once: governance, modernization, integration, training, and so on. In contrast, focused pilots that deliver measurable time or cost savings buy credibility, budget and time. Gold Bond’s approach, turning a manual daily task into an automated pipeline, is a classic quick win that changes expectations in the C-suite.

2) Lower risk, higher transparency

Narrow workflows make it easier to evaluate accuracy, false positive rates, and failure modes. When a model labels artwork types or extracts metadata, it’s straightforward to measure precision and recall, compare results to human performance, and build fallback rules. That makes auditing and governance practical at scale.

3) Better user adoption

Training and adoption programs that are linked to immediate user benefit (e.g., “this will save an hour a day”) produce measurable behavior change. The adoption lift Gold Bond saw after structured Gemini workshops demonstrates that people adopt AI fastest when they can see direct personal gain.

4) A foundation for gradual governance and architecture investments

Small wins justify incremental investment in foundational work (data quality initiatives, logging and monitoring, role-based access controls) rather than forcing a large cultural and architectural shift up front. They also reveal which systems require modernization and which can remain unchanged.

Governance, risk and the hard truths

AI governance is not optional once multiple teams begin to create agents or shared automations. Three practical pressure points emerge as organizations scale use cases:

- Data access and privacy: Business users building agents may inadvertently create data flows that violate privacy rules or expose sensitive ERP records. Limit agent access until users have completed AI literacy and governance training.

- Auditability and traceability: When an agent writes to an ERP record, what produced that change? Who approved it? Build logging that records model inputs, model outputs, and the identity of the actor (human or agent).

- Model behavior and hallucinations: Generative systems can produce plausible but incorrect outputs; gating mechanisms and human-in-the-loop review must exist where accuracy is critical.
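One way to make the auditability and human-in-the-loop points concrete is an append-only log entry for every write plus a simple approval gate. The sketch below is an assumption about structure, not a prescribed schema: the field names, the default `critical_fields` and the JSON Lines log file are all hypothetical choices. If prompts can contain personal data, the logged `model_input` needs the same access controls as the ERP itself.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(actor: str, actor_type: str, model_version: str,
                 model_input: str, model_output: dict,
                 approved_by: Optional[str] = None) -> dict:
    """Build one audit entry describing a change an agent or human makes to an ERP record."""
    if actor_type not in ("human", "agent"):
        raise ValueError("actor_type must be 'human' or 'agent'")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "actor_type": actor_type,
        "model_version": model_version,
        "model_input": model_input,
        "model_output": model_output,
        "approved_by": approved_by,
    }


def requires_human_review(record: dict,
                          critical_fields=("amount", "customer_id")) -> bool:
    """Hold back any output that touches a critical field until a named person approves it."""
    touches_critical = any(f in record["model_output"] for f in critical_fields)
    return touches_critical and record["approved_by"] is None


def append_to_log(path: str, record: dict) -> None:
    """Append the entry as one JSON line; existing lines are never rewritten."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")
```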

Cross-checking the hard numbers (verification summary)

- Adoption lift: Gold Bond’s Gemini adoption jump after workshops was reported as a rise from roughly 20% to over 70% daily usage among participants. That figure is consistent with the workshop partner’s metrics and the company’s internal reporting.

- Invoice scale: The company handles about 120,000 distributor invoices annually; nightly batches in the automated workflow are in the 600–700 invoice range. Those operational numbers were given by the CIO and corroborated by the implementation partner.

- Governance readiness: Multiple industry surveys and governance press notes show a consistent pattern: confidence in agentic AI’s ROI is high among technology decision-makers, but formal governance frameworks and training lag. That makes scaling agentic AI across departments risky without explicit policy and training programs.

- Model capabilities: The current generation of image-generation models marketed under names such as Gemini 3 Pro Image (often referred to in media as “Nano Banana Pro”) includes major improvements in text rendering, multi-image conditioning and editing primitives — features that materially improve product-design automation. While vendor marketing emphasizes these strengths, independent benchmark and user reports indicate the improvements are real; still, enterprise teams should validate model outputs on their specific data and languages before full automation.

Operational risks and the path to mitigation

Automating high-volume workflows and deploying agents introduces specific operational risks. Here are the common failure modes and practical mitigations:

- Risk: Data drift and model degradation over time.

- Mitigation: Implement scheduled evaluation jobs, monitor accuracy metrics, and maintain a retraining cadence tied to observed drift thresholds.

- Risk: Inconsistent system maturity across departments creates mismatched expectations.

- Mitigation: Publish a capability map that grades each department’s readiness for AI agents (data maturity, system modernity, governance posture) and prioritize use cases accordingly.

- Risk: Human trust breaks when models make errors in customer-facing scenarios (voice agent hallucinations, incorrect order status).

- Mitigation: Start voice agents in limited scopes (after-hours, read-only info), include clear escalation paths to human agents, and log every interaction.

- Risk: Vendor lock-in and cost surprises as model usage grows.

- Mitigation: Estimate TCO with conservative usage assumptions, build abstraction layers around model APIs to permit multi-vendor fallbacks, and implement rate limits and batching to control spend.

- Risk: Regulatory and compliance exposure when agents access personal or financial data.

- Mitigation: Create data access policies tied to roles, keep PII out of model prompts where possible, and use synthetic or redacted data for development.
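The drift mitigation (scheduled evaluation against a threshold) can start as simply as having a person spot-check a sample of each night's output and tracking rolling accuracy. In this sketch the window size, threshold and minimum sample count are hypothetical values to tune per workflow:

```python
from collections import deque
from typing import Deque, Optional


class DriftMonitor:
    """Rolling accuracy over the most recent human spot-checks of model output."""

    def __init__(self, window: int = 200, threshold: float = 0.95, min_samples: int = 50):
        self.results: Deque[bool] = deque(maxlen=window)  # oldest checks fall off automatically
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, model_label: str, human_label: str) -> None:
        self.results.append(model_label == human_label)

    def accuracy(self) -> Optional[float]:
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def drifted(self) -> bool:
        # Too few checks to draw a conclusion yet, so don't raise an alert.
        if len(self.results) < self.min_samples:
            return False
        return self.accuracy() < self.threshold
```

When `drifted()` returns True, a reasonable response is to pause automatic write-back, widen human review, and re-evaluate or retrain before resuming.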

Practical recommendations for CIOs leading AI adoption in SMBs

If you are a CIO responsible for delivering ROI from AI in a small or midsize organization, here is a compact, actionable roadmap based on what worked (and what deserves caution) in the Gold Bond example.

- Inventory and prioritize workflows
  - Identify repetitive, high-volume tasks that consume human hours and have clear outputs.
  - Score them by potential time saved, risk level, and data availability.

- Start with adoption-first pilots
  - Run structured workshops that teach staff how to use conversational and assistant-style tools, then pair that with a small pilot that saves measurable time.
  - Track adoption metrics (daily active users, minutes saved) and make them visible to the C-suite.

- Architect for safe automation
  - Run models on managed cloud platforms that support monitoring, logging and access controls.
  - Use middleware (scripts, microservices) to isolate model outputs from the core ERP until validation gates pass.

- Measure and publish small-win outcomes
  - Measure time saved, error reduction, operational throughput and employee satisfaction.
  - Use these concrete metrics to justify incremental investment in data quality and governance.

- Build governance as you scale
  - Require AI literacy and governance training for anyone who will build or operate agents that touch shared systems.
  - Define clear policies for data use, model approval, and audit trails.

- Protect critical paths with human oversight
  - For customer-facing automations, define human-in-the-loop escalation and fallback behavior explicitly for the first 6–12 months.

- Invest in data foundations incrementally
  - Rather than a multi-year, heavy modernization program, sequence data fixes that unblock priority use cases: canonicalizing customer identifiers, enforcing structured invoice fields, and improving metadata for artwork.

- Plan for total cost: compute, human and change costs
  - Model both the cloud inference cost and the personnel effort to maintain and monitor agents. Include training and ongoing governance in that TCO.
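The first roadmap step asks for a score based on time saved, risk and data availability. A minimal weighted-scoring sketch follows; the 1-5 scales, the 50/25/25 weights, and any workflows or hour figures you feed it are hypothetical and should be replaced with an organization's own estimates.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Workflow:
    name: str
    hours_saved_per_month: float  # estimated human hours the automation would free up
    risk: int                     # 1 = internal and easily reversed ... 5 = customer-facing or financial
    data_availability: int        # 1 = scattered, unstructured ... 5 = clean, structured fields

    def __post_init__(self) -> None:
        for value in (self.risk, self.data_availability):
            if not 1 <= value <= 5:
                raise ValueError("risk and data_availability use a 1-5 scale")


def score(w: Workflow, max_hours: float) -> float:
    """Weighted 0-1 score: more time saved, better data and lower risk all raise it."""
    time_part = w.hours_saved_per_month / max_hours if max_hours else 0.0
    data_part = (w.data_availability - 1) / 4
    safety_part = (5 - w.risk) / 4
    return round(0.5 * time_part + 0.25 * data_part + 0.25 * safety_part, 3)


def prioritize(workflows: List[Workflow]) -> List[Workflow]:
    """Order candidate workflows from best to worst early target."""
    max_hours = max(w.hours_saved_per_month for w in workflows)
    return sorted(workflows, key=lambda w: score(w, max_hours), reverse=True)
```

A high-volume, structured, internal task (like invoice artwork categorization) naturally rises to the top of such a ranking, while customer-facing or poorly documented work sinks until its data and guardrails improve.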

People, process and culture: the underrated enablers

Gold Bond’s “technology ninjas” (business people in sales, manufacturing, marketing and support who experiment with generative and agentic tools) are a reminder that AI adoption is social as much as technical. When business users lead discovery inside controlled guardrails, innovation is faster and often more relevant.

CIOs should therefore:

- Identify and empower business champions who will prototype use cases with IT oversight.

- Create a sandbox environment with redacted or synthetic data for lightweight experimentation.

- Offer micro-certifications or training badges to employees who complete AI literacy and governance courses.
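For the sandbox, and for keeping PII out of prompts generally, even a crude redaction pass before text leaves the company helps. This regex sketch only catches email addresses and North American-style phone numbers; it is illustrative, not a complete PII filter, and the placeholder tokens are arbitrary.

```python
import re

# Illustrative patterns only: they miss names, street addresses, account numbers and
# non-North-American phone formats, so treat this as a first filter, not a guarantee.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}\b")


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with placeholder tokens before text reaches a model."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```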

The long view: when “small wins” need to graduate

Small wins are necessary but not sufficient. As adoption spreads, CIOs will need to make longer-term investments:

- Consolidate model registries, data catalogs and observability so multiple agents can be governed centrally.

- Standardize APIs and agent orchestration layers to avoid brittle point-to-point integrations.

- Move from one-off scripts to production-ready microservices with CI/CD, monitoring and SLOs.

- Invest in legal, privacy and compliance capabilities to handle regulatory scrutiny as agents influence customer outcomes.
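Standardizing the model-facing API is also what makes the multi-vendor fallback recommended in the risk section practical. A minimal sketch, assuming each vendor is wrapped in a hypothetical adapter function that takes a prompt, returns text, and raises `ModelUnavailable` on outages or quota errors:

```python
from typing import Callable, List, Sequence, Tuple


class ModelUnavailable(Exception):
    """Raised by a provider adapter when its backend cannot serve the request."""


# A provider adapter takes a prompt and returns generated text (hypothetical interface).
Provider = Callable[[str], str]


def complete_with_fallback(prompt: str,
                           providers: Sequence[Tuple[str, Provider]]) -> Tuple[str, str]:
    """Try each provider in priority order; return (provider_name, text) from the first that succeeds."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ModelUnavailable as exc:
            errors.append(f"{name}: {exc}")
    raise ModelUnavailable("all providers failed: " + "; ".join(errors))
```

Only `ModelUnavailable` triggers a fallback; other exceptions propagate, so a bug in an adapter is not silently masked by switching vendors. Rate limits and batching would live inside each adapter.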

Final analysis: strengths, trade-offs and red flags

Gold Bond’s playbook reveals several strengths that should be emulated:

- Focus on high-volume, low-ambiguity workflows that yield measurable ROI.

- Early investment in adoption and user training to accelerate behavioral change.

- Pragmatic integration of cloud models with existing ERP systems — minimizing rip-and-replace.

The same playbook also carries trade-offs and red flags that deserve attention:

- Governance lag: rapid, distributed agent creation increases exposure to data leaks and compliance breaches.

- Technical debt: quick integrations via scripts can compound into a brittle web of automations unless refactored into maintainable services.

- Hidden costs: model inference, data storage, monitoring and human oversight add recurring operational costs that must be included in ROI calculations.

- Overconfidence in capability: even advanced image models and voice agents make mistakes; uncontrolled escalation of responsibility to agents is a systemic risk.
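The hidden-costs point is easiest to see with simple arithmetic. The sketch below nets recurring costs, including the people who monitor the automation, against the value of the hours saved; the function names and every figure used with them are hypothetical.

```python
from typing import Optional


def monthly_net_value(hours_saved: float, saved_hourly_cost: float,
                      inference_cost: float, oversight_hours: float,
                      oversight_hourly_cost: float, other_fees: float = 0.0) -> dict:
    """Net monthly value of an automation after recurring model and human-oversight costs."""
    gross = hours_saved * saved_hourly_cost
    recurring = inference_cost + oversight_hours * oversight_hourly_cost + other_fees
    return {"gross": gross, "recurring": recurring, "net": gross - recurring}


def payback_months(one_time_cost: float, net_per_month: float) -> Optional[float]:
    """Months to recover build and training costs; None if the automation never pays back."""
    if net_per_month <= 0:
        return None
    return one_time_cost / net_per_month
```

With made-up inputs, `monthly_net_value(80, 25, 150, 6, 60, 50)` gives gross savings of 2,000, recurring costs of 560 and a net of 1,440 per month; a hypothetical 8,640 one-time build cost then pays back in 6 months. Leaving out the six oversight hours would overstate the net by 360 a month.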

A concise checklist to get started (for CIOs and IT leaders)

- Select one or two high-frequency, high-cost manual workflows for automation.

- Run a partner-led adoption workshop to lift user confidence in practical tools.

- Build a minimal, auditable pipeline that includes validation and human oversight.

- Track outcomes in business terms (time saved, orders processed, customer satisfaction).

- Require governance training before granting access to shared agents or API keys.

- Budget for ongoing model evaluation, retraining and cloud inference costs.

- Create a simple incident playbook for agent failures that includes rollback and human escalation.
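The training requirement in the checklist can be enforced mechanically at the point where access to a shared agent or API key is granted. A minimal sketch; the agent names, roles and the `AGENT_ROLES` mapping are hypothetical:

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, Optional, Set

# Hypothetical mapping of shared agents (or the API keys behind them) to the roles allowed to use them.
AGENT_ROLES: Dict[str, Set[str]] = {
    "invoice-classifier": {"ap_clerk", "it_admin"},
    "after-hours-voice": {"support_lead", "it_admin"},
}


@dataclass
class User:
    name: str
    role: str
    governance_training_on: Optional[date] = None  # completion date of AI literacy/governance training


def may_access(user: User, agent: str) -> bool:
    """Grant access only if the role is allowed for this agent AND training is complete."""
    allowed = AGENT_ROLES.get(agent, set())  # unknown agents default to no access
    return user.role in allowed and user.governance_training_on is not None
```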

Gold Bond’s example is a pragmatic template for how midmarket firms can avoid either paralysis-by-analysis or reckless AI experimentation. Start small, measure clearly, protect the data and the customer, and reinvest the gains into the foundations that make safe scaling possible. That combination — targeted pilots, concrete metrics and deliberate governance — is the clearest path I’ve seen for turning AI from headline hype into predictable, repeatable business value.

Source: CIO Dive How one CIO focuses on small wins to shape AI adoption