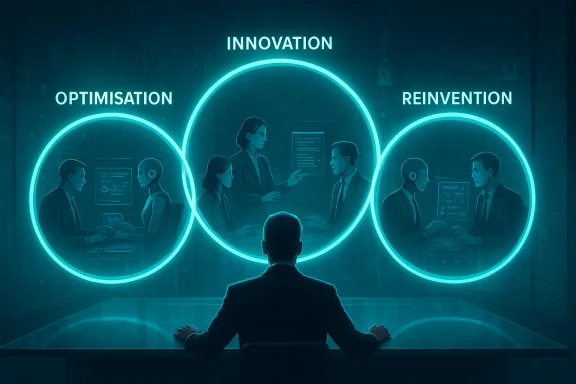

Across boardrooms and IT departments the debate has shifted from “if” to “how fast”: organisations are rapidly adopting AI to squeeze efficiency from existing work, but the bigger — and riskier — prize is what comes after optimisation. Simon Brown, EY’s Global Learning & Development Leader, argues that real transformation follows a three‑loop journey — Optimisation, Innovation, and Reinvention — and that most companies are still stuck in the first loop. The practical consequence for IT leaders, learning teams, and managers is simple but urgent: treat AI as a platform for new work models, not just a productivity add‑on.

Source: TrainingZone The AI-powered workforce: Where are you on the ‘three-loop journey’?

Background / Overview

AI adoption has moved from academic pilots to enterprise‑scale deployments in less than three years. That shift is not just technological; it’s organisational. Modern AI (particularly what the market calls agentic AI, or AI agents) can observe, reason, plan and act autonomously across multi‑step workflows. The result is a hybrid workforce model in which human teams and software agents each do what they do best. Industry suppliers and consultancies have been explicit: building an “agentic enterprise” means redesigning work around goal‑driven, orchestrated agents and human oversight.

Two related facts matter for this discussion and should be verified before you plan large programmes:

- Major professional services firms are already deploying agentic‑adjacent tools at scale. EY, for example, reports large internal rollouts of Microsoft‑backed solutions and positions Copilot as a central UI for agentic workflows. EY has published concrete deployment and usage figures that provide important context when assessing its three‑loop claims.

- Agentic AI introduces new operational and security risk classes — from prompt injection to agent identity and permissions — that require people, process and platform controls distinct from traditional application security. Security vendors and analysts flagged these risks throughout 2025 and into 2026.

The three-loop journey: a practical map

Loop 1 — Optimisation: faster, not different

Optimisation is where most organisations begin: applying large language models and Copilot‑style assistants to automate routine writing, summarisation, scheduling, and low‑risk decision support. The value is real and immediate (faster responses, better search, reduced time on repetitive tasks), but it does not change the underlying business model or what human teams are asked to do.

Why organisations like optimisation:

- Low barrier to entry: many Copilot and LLM integrations plug into existing tools.

- Rapid ROI: productivity improvements can be measured in weeks or months.

- Familiar governance model: IT already understands app rollout, so initial risk controls feel manageable.
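To make the “rapid ROI” point concrete, a team might estimate loop‑one gains by timing a sample of tasks before and after an assistant is introduced. The sketch below is a minimal illustration under that assumption: `weekly_hours_saved` is a hypothetical helper, the sample figures are illustrative only, and it measures time alone, not output quality.

```python
"""Minimal sketch: estimating weekly hours saved from before/after task timings.
weekly_hours_saved is a hypothetical helper, not part of any product."""
from statistics import mean


def weekly_hours_saved(baseline_minutes: list,
                       assisted_minutes: list,
                       tasks_per_week: int) -> float:
    """Average minutes saved per task, scaled to weekly volume, converted to hours."""
    if not baseline_minutes or not assisted_minutes:
        raise ValueError("need at least one timing in each sample")
    saved_per_task = mean(baseline_minutes) - mean(assisted_minutes)
    return saved_per_task * tasks_per_week / 60


if __name__ == "__main__":
    # Illustrative inputs only: three timed tasks before and after, 120 tasks a week.
    print(weekly_hours_saved([30, 34, 26], [18, 22, 20], tasks_per_week=120))  # 20.0
```

A time‑only figure like this is the kind of quick, credible win loop one produces; error rates and revenue effects need their own measures.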

Loop 2 — Innovation: new ways to work

The innovation loop is where organisations start experimenting: not by automating the old work faster, but by using AI to enable tasks or workflows that were previously impossible or uneconomic.

Characteristics of the innovation loop:

- Experimentation at scale: many small, real‑world labs where people build and manage agents to solve novel problems.

- New hybrid roles: “agent trainers”, “agent supervisors”, and interdisciplinary product roles that combine domain expertise with AI orchestration skills.

- Learning by doing: iterative, contextualised learning (hackathons, labs, pilot deployments) that ties training directly to use cases.

Loop 3 — Reinvention: redefining work

Reinvention asks the hardest question: what does the organisation become when most knowledge workers routinely work alongside agents? This loop is not incremental; it’s transformational.

Reinvention outcomes may include:

- New business models that monetise agentic capabilities (e.g., automated advisory agents embedded in customer products).

- Reshaped career paths where the interplay between human creativity and agentic throughput becomes the core competency.

- Organisational structures built around goal‑oriented teams composed of humans and persistent software agents.

What “agentic AI” means for IT teams

The term “agentic AI” has matured rapidly from marketing shorthand to a specific set of capabilities: systems that can plan and execute multi‑step tasks, integrate with enterprise APIs, adapt to feedback, and coordinate with other agents or humans. Material on agentic systems from Workday, Salesforce and several consultancies points to the same practical components: perception, reasoning, planning, execution, and orchestration.

Operational implications for IT:

- Identity and access: agents need identities, limited permissions, and lifecycle management just like employees — but with API keys, ephemeral credentials, and audit trails.

- Observability and compliance: agents must be observable at the action level; tracing an agent’s decisions back to data sources and prompts is essential for audits.

- Security model changes: prompt injection, data exfiltration via agent actions, and agent account compromise are new modes of attack; defenders must add agent‑specific detection, permissioning, and containment strategies.
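The identity and observability points above can be sketched in code. This is a minimal illustration, not a vendor API: it assumes a hypothetical in‑house registry (`AgentIdentity`, `AgentRegistry`) in which each agent has an accountable owner, an explicit set of least‑privilege scopes and a short‑lived token, and every action decision, including denials, is written to an audit log together with the source that triggered it.

```python
"""Minimal sketch: agents as first-class identities with least-privilege scopes,
short-lived credentials and an action-level audit trail.
AgentIdentity and AgentRegistry are hypothetical names, not a vendor API."""
import secrets
import time
from dataclasses import dataclass, field


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str                 # accountable human or team for this agent
    scopes: frozenset          # explicit, least-privilege permissions
    token: str = ""            # current credential (empty until issued)
    expires_at: float = 0.0    # epoch seconds after which the token is invalid


@dataclass
class AgentRegistry:
    ttl_seconds: int = 900                        # ephemeral: 15-minute tokens
    audit_log: list = field(default_factory=list)

    def issue_token(self, agent: AgentIdentity) -> str:
        """Rotate the agent's credential and give it a short expiry."""
        agent.token = secrets.token_urlsafe(16)
        agent.expires_at = time.time() + self.ttl_seconds
        return agent.token

    def authorise(self, agent: AgentIdentity, token: str,
                  action: str, source: str) -> bool:
        """Allow an action only with a valid, unexpired token and a matching scope.
        Every decision, allowed or denied, is appended to the audit log."""
        allowed = (
            bool(agent.token)
            and secrets.compare_digest(token, agent.token)
            and time.time() < agent.expires_at
            and action in agent.scopes
        )
        self.audit_log.append({
            "ts": time.time(),
            "agent": agent.agent_id,
            "owner": agent.owner,
            "action": action,
            "source": source,  # data source or prompt that triggered the action
            "allowed": allowed,
        })
        return allowed


if __name__ == "__main__":
    registry = AgentRegistry()
    bot = AgentIdentity("invoice-agent", owner="finance-ops",
                        scopes=frozenset({"read:invoices"}))
    tok = registry.issue_token(bot)
    print(registry.authorise(bot, tok, "read:invoices", source="ap-mailbox"))   # True
    print(registry.authorise(bot, tok, "write:payments", source="ap-mailbox"))  # False
```

In production these responsibilities would normally sit in an existing identity provider and logging pipeline; the sketch only shows the shape of the controls (scoped, expiring, audited).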

Evidence: EY’s approach and what it tells us

Simon Brown’s piece is, explicitly, a practitioner’s manifesto for moving organisations beyond optimisation. EY’s public materials and partner announcements support the view that some firms are trying to move quickly through the loops:

- EY has publicly documented large‑scale Microsoft deployments and heavy internal Copilot usage as part of broader productivity and sales transformations. EY’s public statements cite tens of thousands of Copilot licences, multi‑month rollouts of Dynamics 365 Sales combined with Copilot features, and claims about development and prompt usage rates within the firm. Those figures signal real operational scale and a deliberate push toward making Copilot part of daily work.

- EY frames Copilot and agentic capabilities as a joint technical and people problem: building agents, training people to manage them, and designing learning experiences that convert curiosity into capability. That integrated stance — technology plus scaled learning — is what the three‑loop model requires to push from loop one toward loops two and three.

Learning, careers and new roles: what to invest in now

The three‑loop model makes learning central. It’s not optional: if you expect reinvention, you must design learning that is continuous, contextualised, and collaborative.

High‑value learning investments:

- Skills in AI engineering and applied AI: the technical foundations for creating and maintaining agents.

- Responsible AI and governance training: every agent should be built and managed under clear ethical, privacy, and compliance guardrails.

- Human‑agent interaction design: people need skills to craft prompts, define success metrics, and manage agent behaviour.

- Agent management and assessment: new micro‑careers will form around tuning, supervising and certifying agents’ outputs.

Emerging roles to plan for:

- Agent Trainer / Coach: someone who writes, evaluates and refines the instruction sets and reward mechanisms that guide agent behaviour.

- Agent Product Manager: a role combining product skills and data stewardship to turn agent‑produced insights into operational value.

- AI Orchestration Engineer: someone who builds the pipelines that connect agents to data sources, services, and monitoring systems.

Leadership and culture: shifting expectations

Leaders who want to reach loop two and loop three must model curiosity and create psychological safety to experiment. The practical actions are straightforward but demanding:

- Model learning, not just compliance: leaders should be measured on curiosity and applied learning as well as short‑term outputs.

- Tie AI behaviours to performance indicators: incentivise responsible experimentation and measurable skills acquisition.

- Provide real scenarios for practice: hackathons, “Future Hack” style learning labs, and live pilot programmes accelerate skill transfer far more effectively than classroom modules alone.

The security and governance red flags (do not ignore)

Agentic AI is exciting, but fragile. As enterprises move beyond optimisation, the attack surface changes in ways many security frameworks are not yet designed to handle. Key risks to mitigate now:

- Prompt injection and data leakage: agents that browse, execute or transmit information can be manipulated through crafted prompts or malicious content. Traditional input validation is insufficient; agent behaviour needs intent‑level controls and sanitisation at multiple stages.

- Agent identity and permission drift: agents accumulate access rights as they interact across systems. Without strict least‑privilege models and periodic re‑certification, agents can become windows into sensitive systems. Treat agent credentials like human credentials: rotate, audit and tie to business justification.

- Model and data governance: agents acting on incomplete or biased data will scale bad decisions. Ensure traceability from an agent’s output back to the data and models that generated it; that traceability is required for compliance, audits and remediation.

- Endpoint and orchestration security: when agents trigger workflows across environments, they create a new class of integration vulnerabilities. Monitor agent lifecycles and instrument full‑stack observability.
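Permission drift is easiest to catch with a scheduled re‑certification pass. The sketch below assumes a hypothetical export of agent grants from your IAM system, each with a recorded business justification, a last‑used date and a last‑certified date; the `Grant` fields, the `grants_to_review` helper and the 30‑ and 90‑day thresholds are illustrative assumptions, not a standard.

```python
"""Minimal sketch: periodic re-certification to catch agent permission drift.
Grant fields and the 30/90-day thresholds are hypothetical assumptions."""
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Grant:
    agent_id: str
    permission: str
    justification: str          # business reason recorded when access was granted
    last_used: Optional[date]   # None if the permission was never exercised
    last_certified: date        # last time an owner confirmed it is still needed


def grants_to_review(grants: List[Grant], today: date,
                     unused_days: int = 30,
                     recert_days: int = 90) -> List[Tuple[Grant, str]]:
    """Return grants that should be revoked or re-certified, each with a reason."""
    flagged = []
    for g in grants:
        if not g.justification.strip():
            flagged.append((g, "no business justification"))
        elif g.last_used is None or today - g.last_used > timedelta(days=unused_days):
            flagged.append((g, "unused: candidate for revocation"))
        elif today - g.last_certified > timedelta(days=recert_days):
            flagged.append((g, "re-certification overdue"))
    return flagged


if __name__ == "__main__":
    today = date(2026, 3, 1)
    grants = [
        Grant("crm-agent", "read:crm", "pipeline reporting",
              date(2026, 2, 25), date(2026, 1, 15)),
        Grant("crm-agent", "write:crm", "", date(2026, 2, 28), date(2026, 2, 1)),
        Grant("hr-agent", "read:hr", "onboarding", None, date(2026, 2, 1)),
    ]
    for grant, reason in grants_to_review(grants, today):
        print(grant.agent_id, grant.permission, "->", reason)
```

Each flagged grant would then go to the agent's accountable owner for an explicit keep‑or‑revoke decision, which also produces audit evidence.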

A pragmatic roadmap for IT and L&D: moving from loop 1 to loop 3

Below is a practical six‑step path to move beyond optimisation, designed for technical leaders and L&D teams working together.

- Map current state and strategic outcomes.
  - Inventory AI tools, Copilot deployments, and agent experiments.
  - Link AI usage to measurable outcomes (time saved, error reduction, new revenue lines).

- Secure the foundation (security + governance).
  - Create agent identity controls, least‑privilege policies, and logging standards.
  - Establish model governance and data lineage practices.

- Build rapid, contextualised learning.
  - Run short, applied learning sprints: real use‑case hackathons and “build and test” labs.
  - Measure skills acquisition, not just course completions.

- Define new roles and career paths.
  - Prototype agent product manager and trainer roles.
  - Launch internal marketplaces for short‑term assignments to build cross‑skills.

- Experiment with human‑agent teams.
  - Run small, measurable pilots that combine human oversight with agent autonomy.
  - Instrument everything for observability and outcome measurement.

- Iterate and scale with guardrails.
  - Use learnings to update policies, leadership expectations, and KPIs.
  - Make reinvention decisions based on hard metrics and societal/ethical impact reviews.
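For the step on experimenting with human‑agent teams, one simple way to combine human oversight with agent autonomy is a gate that lets low‑risk actions run automatically, routes high‑risk ones to a person, and counts outcomes for later review. This is a minimal sketch; `PilotGate`, the `HIGH_RISK` list, the action names and the approver callback are hypothetical placeholders for your own risk tiers and review queue.

```python
"""Minimal sketch: a human-in-the-loop gate for a human-agent pilot.
HIGH_RISK, the action names and the approver callback are hypothetical."""
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable

# Actions that always need a human decision during the pilot (assumed list).
HIGH_RISK = {"send_external_email", "update_payment", "delete_record"}


@dataclass
class PilotGate:
    approver: Callable[[str, str], bool]   # human decision: (agent, action) -> approve?
    metrics: Counter = field(default_factory=Counter)

    def submit(self, agent_id: str, action: str) -> str:
        """Run low-risk actions automatically; route high-risk ones to a human."""
        if action not in HIGH_RISK:
            self.metrics["auto_executed"] += 1
            return "executed"
        if self.approver(agent_id, action):
            self.metrics["human_approved"] += 1
            return "executed"
        self.metrics["human_rejected"] += 1
        return "blocked"


if __name__ == "__main__":
    # Stand-in for a real review queue: this reviewer rejects deletions.
    gate = PilotGate(approver=lambda agent, action: action != "delete_record")
    for act in ["summarise_ticket", "update_payment", "delete_record"]:
        print(act, gate.submit("support-agent", act))
    print(dict(gate.metrics))
```

A rising `human_rejected` count is a hard, pilot‑level signal that agent outputs are being over‑trusted, which can feed directly into the decision to iterate or scale.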

Strengths and strategic opportunities

- Rapid productivity gains: loop one delivers measurable wins fast; that credibility funds larger experiments.

- New value creation: agentic systems can reduce friction across previously siloed data and create new offerings that scale beyond human capacity alone.

- Career revitalisation: companies that treat skill shifts not as a threat but as an opportunity to redeploy talent will outcompete peers on retention and innovation.

- Platform leverage: partnerships (such as major consultancies integrating Copilot and vendor ecosystems) accelerate time to competence by combining product expertise with learning design and change management. EY’s public positioning with Microsoft highlights how ecosystems can be leveraged to jumpstart capability.

Risks, blind spots and what to watch for

- Overconfidence in automation: early failures often come from treating agent outputs as final rather than advisory.

- Governance lag: policy and audit mechanisms commonly trail operational experiments, creating compliance and reputational risk.

- Skills mismatch: rapid automation can create mismatches between current talent and the roles required for reinvention, increasing attrition if career pathways are not managed proactively.

- External dependency: vendor lock‑in around particular agent architectures or copilot ecosystems can limit future flexibility.

Practical skills checklist for teams building agentic capabilities

- Technical
  - Prompt engineering and instruction tuning.
  - API integration and orchestration patterns.
  - Observability and logging for agent actions.
  - Model fine‑tuning and data‑quality assessment.

- Governance
  - Agent identity and access lifecycle management.
  - Data lineage and model accountability.
  - Incident response playbooks for agent misbehaviour.

- People
  - Role design for agent trainers and product managers.
  - Coaching leaders to run experiments and reward learning.
  - Change management to transition static jobs to skills‑based paths.
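The incident‑response item in the governance list often starts with a containment mechanism. One common pattern, sketched here under assumed thresholds, is a circuit breaker that suspends an agent once it records too many anomalies within a sliding time window and keeps it suspended until a human resets it. `AgentCircuitBreaker` is a hypothetical name, and what counts as an anomaly (a denied permission, a flagged prompt‑injection attempt, an unexpected destination) is left to your own detection rules.

```python
"""Minimal sketch: a containment circuit breaker for agent misbehaviour.
The thresholds and what counts as an anomaly are hypothetical assumptions."""
import time
from collections import deque
from typing import Optional


class AgentCircuitBreaker:
    def __init__(self, max_anomalies: int = 3, window_seconds: float = 300.0):
        self.max_anomalies = max_anomalies
        self.window_seconds = window_seconds
        self._events = deque()      # timestamps of recent anomalies
        self.suspended = False

    def record_anomaly(self, now: Optional[float] = None) -> bool:
        """Record one anomaly; trip the breaker if the threshold is reached
        within the sliding window. Returns True if the agent is suspended."""
        now = time.monotonic() if now is None else now
        self._events.append(now)
        # Forget anomalies older than the window.
        while self._events and now - self._events[0] > self.window_seconds:
            self._events.popleft()
        if len(self._events) >= self.max_anomalies:
            self.suspended = True   # containment: the agent must stop acting
        return self.suspended

    def allow(self) -> bool:
        """Check before every agent action; False once the breaker has tripped."""
        return not self.suspended

    def reset(self) -> None:
        """Manual reset, intended only after a human has reviewed the incident."""
        self._events.clear()
        self.suspended = False
```

Keeping the reset manual is a deliberate choice: it writes a human review step into the playbook rather than letting a misbehaving agent resume on its own.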

Conclusion: where to place your bets

The three‑loop framework (Optimisation, Innovation, Reinvention) is a practical lens for IT leaders. Most organisations can and should capture optimisation gains quickly. But the competitive advantage of the next five years will lie with organisations that invest in loop‑two experiments and actively redesign work to prepare for loop three. That requires an unusual combination of capabilities: secure, agent‑aware engineering; scaled, contextualised learning; and leadership willing to rethink careers and incentives.

Treat agents as first‑class production actors: give them identities, governance, and performance metrics. Treat people as adaptable learners: measure skills, not merely course completions. And treat leadership as the linchpin: leaders must champion curiosity, accept iterative failure, and make experimentation a performance expectation.

The future is not just faster work; it’s new kinds of work. Organisations that move deliberately from optimisation to innovation — and that are brave enough to imagine reinvention — will be the ones to define the next era of the digital workplace.
