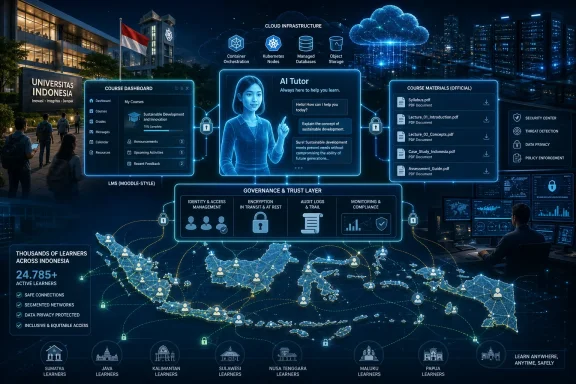

Universitas Terbuka, Indonesia’s public open and distance-learning university, said on May 15, 2026 that it has built a Microsoft Azure-based digital learning foundation using Azure OpenAI Service, Azure AI Foundry, Kubernetes, databases, and security tooling to support large-scale AI-assisted education. The announcement is not just another cloud customer win dressed up as an AI story. It is a revealing case study in how universities are trying to industrialize personalized learning without surrendering academic governance. The wager is that AI tutoring becomes useful only when it is boringly integrated into identity, data, curriculum, security, and audit systems.

Source: Microsoft Source Universitas Terbuka Builds a Secure and Scalable Digital Learning Foundation with Microsoft Azure - Source Asia

Microsoft’s Education AI Pitch Has Moved From Demo to Infrastructure

The early phase of generative AI in education was loud, uneven, and mostly theatrical. Students used chatbots before universities had policies. Faculty debated cheating before procurement offices had architecture diagrams. Vendors showed off fluent assistants long before most campuses knew where course materials, student records, and assessment data should live in an AI workflow.

Universitas Terbuka’s Azure deployment lands in a more serious phase. The center of gravity has shifted from “Can a chatbot answer a student?” to “Can a university safely operate AI across hundreds of classes, hundreds of thousands of learners, and regulated academic data?” That is a different problem, and it is much less forgiving.

UT is a useful test case because distance education exposes the limits of traditional academic support faster than a campus-based model does. A lecturer can improvise around bottlenecks in a small seminar. A national open university serving students spread across Indonesia and abroad cannot rely on hallway conversations, office hours, and human triage alone.

The numbers make the point. UT says it has more than 1.8 million registered students to date, with active students rising from 551,030 in the first semester of 2024 to 671,967 in the second semester of 2024, and then to 768,248 in the first semester of 2025. At that scale, the institution is not merely digitizing the classroom. It is running a national learning platform under academic, operational, and political pressure.
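The growth rates implied by those enrollment figures are easy to check. The short Python sketch below uses only the three active-student counts UT reports; the function and variable names are illustrative.

```python
# Active-student counts as reported by UT (taken from the figures above).
ACTIVE_STUDENTS = [
    ("2024 semester 1", 551_030),
    ("2024 semester 2", 671_967),
    ("2025 semester 1", 768_248),
]


def growth_percent(earlier: int, later: int) -> float:
    """Percentage change from an earlier count to a later one."""
    return (later - earlier) / earlier * 100


def semester_growth(series):
    """Pair each semester with the next and compute the change between them."""
    return [
        (f"{a_label} -> {b_label}", growth_percent(a, b))
        for (a_label, a), (b_label, b) in zip(series, series[1:])
    ]


for label, pct in semester_growth(ACTIVE_STUDENTS):
    print(f"{label}: {pct:.1f}%")

overall = growth_percent(ACTIVE_STUDENTS[0][1], ACTIVE_STUDENTS[-1][1])
print(f"overall: {overall:.1f}%")
```

On these figures, active enrollment grew by roughly 22 percent, then by roughly 14 percent, for a rise of about 39 percent across the two intervals.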

The AI Tutor Is the Headline, but the Platform Is the Story

The most visible piece of the project is UT’s AI Tutor, powered by Azure OpenAI Service and Azure AI Foundry. Microsoft says the tutor has been rolled out across around 500 classes and is supporting more than 100,000 students. It is designed to draw from official course materials and UT’s academic context rather than behaving like a free-floating chatbot.

That distinction matters. In higher education, a confident but ungrounded answer is not just a bad user experience; it can become a curriculum problem. A student asking for help with a course does not need the most statistically plausible internet answer. They need an answer that matches the course design, the lecturer’s expectations, the university’s assessment rules, and the language of the assigned materials.

This is where retrieval-augmented generation, or RAG, becomes more than vendor jargon. By grounding model responses in internal knowledge bases and approved materials, UT is trying to narrow the gap between general-purpose AI and institutionally accountable instruction. The model is not being treated as the source of academic truth. It is being wrapped around sources the university already recognizes.
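The grounding idea can be illustrated with a minimal Python sketch. It is not UT’s implementation, which the announcement does not describe. Simple keyword overlap stands in for a real embedding search, and the course IDs, passages, and function names are invented for illustration. The point is the shape of the flow: retrieve only from approved material for the student’s course, and decline to build a prompt when nothing relevant is found.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    course_id: str  # hypothetical course identifier
    source: str     # where the excerpt comes from, e.g. a module name
    text: str


def tokenize(text: str) -> set:
    """Lowercase words longer than three characters, punctuation stripped."""
    words = (w.strip(".,;:?!()\"'").lower() for w in text.split())
    return {w for w in words if len(w) > 3}


def retrieve(question: str, passages, course_id: str, top_k: int = 2):
    """Rank approved passages from one course by keyword overlap (toy scoring)."""
    q = tokenize(question)
    scored = []
    for p in passages:
        if p.course_id != course_id:
            continue  # never ground an answer in another course's material
        overlap = len(q & tokenize(p.text))
        if overlap > 0:
            scored.append((overlap, p))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def build_prompt(question: str, passages, course_id: str):
    """Return a grounded prompt, or None when no approved material matches."""
    hits = retrieve(question, passages, course_id)
    if not hits:
        return None  # caller should escalate to a human rather than guess
    context = "\n".join(f"[{p.source}] {p.text}" for p in hits)
    return (
        "Answer using only the course excerpts below and cite the source tags.\n"
        f"{context}\n"
        f"Question: {question}"
    )


# Invented sample material, for illustration only.
MATERIALS = [
    Passage("ECON101", "Module 2", "Elasticity measures how demand responds to price changes."),
    Passage("ECON101", "Module 5", "Inflation is a sustained rise in the general price level."),
    Passage("LAW201", "Module 1", "A contract requires offer, acceptance, and consideration."),
]

print(build_prompt("What does elasticity of demand measure?", MATERIALS, "ECON101"))
```

In a production system the prompt would then go to the hosted model. The design choice worth noticing is that the refusal path is explicit rather than left to the model’s judgment.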

That also explains why the surrounding Azure stack matters. Azure Kubernetes Service, Azure Cosmos DB, Azure Database for PostgreSQL, Microsoft Sentinel, and the Moodle-based LMS integration are not decorative enterprise acronyms. They are the machinery required to keep the tutor available, segmented, logged, monitored, and connected to the learning environment students already use.

Scale Turns Repetitive Questions Into a Systems Problem

Every university has repetitive student questions. In a conventional setting, those questions are a nuisance. In open and distance learning, they become a systems problem because the same uncertainty can be replicated across thousands of learners at once.

UT says the AI Tutor has produced higher student engagement in online discussions and improved assignment outcomes in courses where AI has been integrated. The claim is vendor-adjacent and should be read as institutional reporting rather than independent research. Still, the underlying logic is plausible: if students can get faster, course-specific clarification, they may be more likely to keep participating rather than silently falling behind.

The more interesting claim is about workflow compression. Microsoft says tasks that previously took several days, including submission, grading, and feedback workflows, can now be completed within one to two days through AI and Azure-based automation. For a distance-learning university, feedback latency is not a cosmetic metric. It affects motivation, pacing, retention, and the ability of lecturers to intervene before a struggling student disappears.

There is a labor story here too. UT frames the tutor as a way to move lecturers away from repeatedly answering common questions and toward higher-value academic work: curating AI-enabled learning experiences, deepening discussions, and overseeing instructional quality. That is the optimistic version of AI augmentation. The less comfortable version is that universities will be tempted to use automation to stretch academic labor ever thinner.

Governance Is the Difference Between an AI Pilot and an Academic System

The strongest part of UT’s announcement is not the promise of personalization. Every AI education pitch promises personalization. The more consequential part is the emphasis on governance: identity and access management, encryption, service segmentation, logging, monitoring, backup, and recovery.

Those controls are not glamorous, but they are exactly where campus AI deployments will succeed or fail. A tutor that cannot distinguish between authorized and unauthorized access is a data leak waiting to happen. A system that cannot preserve logs will struggle to investigate abuse, bias, hallucination, or academic disputes. A platform that cannot segment services risks turning one compromised component into a broader institutional incident.

Indonesia’s Law No. 27 of 2022 on Personal Data Protection adds another layer to the decision. UT is not just handling generic user data. It is operating around academic records, student interactions, course performance, identity data, and potentially sensitive learning profiles. That makes the cloud provider’s privacy posture part of the academic infrastructure.

Microsoft’s Azure OpenAI positioning is built for precisely this procurement moment. The company says customer prompts, completions, embeddings, and training data in Azure OpenAI and Azure Direct Models are not used to train foundation models without permission, are not made available to other customers, and are not shared with OpenAI or other model providers. For institutions that cannot casually ship student data into consumer AI services, that promise is central.

Moodle Integration Shows the Pragmatism Behind the Cloud Strategy

UT’s customized Moodle-based LMS is a reminder that universities rarely get to rebuild from scratch. They have old systems, local workflows, internal content models, faculty habits, procurement constraints, and years of accumulated course structure. The fantasy of a clean AI-native learning platform usually dies the moment it meets the registrar, the LMS administrator, and the accreditation office.

That makes integration more important than novelty. An AI Tutor that lives outside the learning environment becomes yet another tab, another policy exception, and another data risk. An AI capability embedded into the LMS has a better chance of being governed through existing academic workflows, even if the underlying model infrastructure is new.

Moodle’s open and extensible nature is likely one reason it remains common in large educational deployments. But extensibility cuts both ways. It gives institutions room to customize, and it also requires disciplined architecture when connecting to modern AI services. The real work is not plugging a chatbot into a course page; it is deciding what the chatbot may know, what it may say, what it may log, and how humans can override or review it.

UT’s approach suggests a layered model: Moodle remains the academic interface, Azure provides the scalable compute and AI services, and institutional knowledge bases shape responses. That is less flashy than a standalone AI learning app. It is also more credible for a university that must preserve continuity while modernizing.

Microsoft Foundry Is Becoming the Control Plane for Institutional AI

Microsoft’s naming has not always helped customers understand its AI stack. Azure AI Studio, Azure AI Foundry, Microsoft Foundry, Azure OpenAI, Copilot Studio, and related branding can blur together for anyone not living inside Microsoft’s product roadmap. But the direction is clear enough: Microsoft wants Foundry to become the place where organizations build, evaluate, deploy, and govern AI applications.

For a university like UT, that means the model is only one component. The more strategic layer is the environment for grounding, orchestration, evaluation, safety, and deployment. If the AI Tutor expands from text-based assistance into assessment support, personalized feedback, study planning, and early-warning systems, the governance surface becomes much larger.

That expansion is where agentic AI enters the picture. UT says it is exploring agentic AI-driven academic assistants that could support study planning, recommend personalized learning pathways, identify risks of academic delay, and help lecturers analyze outcomes. These are not trivial use cases. They imply systems that do not merely answer questions, but act across workflows, infer student risk, and potentially influence academic trajectories.

The phrase agentic AI should make administrators both interested and cautious. A tutor that answers a question can be wrong in a contained way. An agent that recommends a study path, flags a student as at risk, or initiates a workflow can create institutional consequences. The more agency the system gets, the more important auditability, appeal, explainability, and human oversight become.

The Distance-Learning Model Makes AI More Tempting and More Dangerous

Open universities have always been technology institutions as much as academic institutions. Their mission depends on reaching learners who cannot rely on physical proximity, fixed schedules, or traditional campus services. That gives AI a natural role: answering routine questions, explaining materials at odd hours, and providing support when human staff are unavailable.

But the same model also raises the stakes. Distance learners may have fewer informal ways to detect when automated guidance is wrong. They may not have a nearby peer group or lecturer interaction to correct misunderstandings quickly. If an AI assistant gives misleading advice at scale, the error can propagate quietly across a large cohort.

That is why UT’s insistence on official course materials is important. It narrows the AI’s operating domain and makes the system more like an academic support layer than a general oracle. The university is not claiming that AI replaces lecturers; it is claiming that AI can absorb repetitive support work while lecturers retain responsibility for academic direction.

The hard part will be preserving that boundary as capabilities improve. Once an AI tutor can summarize, recommend, grade, flag risk, and personalize, the temptation to delegate more academic judgment will grow. Institutions will need to decide which decisions remain human not because the machine cannot assist, but because accountability requires a person.

Security Tooling Is Now Part of the Education Product

Microsoft Sentinel’s inclusion in the UT stack is easy to overlook, but it signals the convergence of education technology and security operations. A learning platform serving hundreds of thousands of active students is not merely an LMS. It is an identity platform, a content platform, a data platform, a communications platform, and now an AI platform.

That makes it an attractive target. Attackers do not need to care about pedagogy to see value in credentials, personal data, institutional records, or cloud resources. AI features add additional attack surfaces: prompt injection, data exfiltration through poorly scoped retrieval, malicious content uploads, and abuse of automated workflows.

Security in this context is not just perimeter defense. It is policy enforcement inside the AI experience. Which documents can be retrieved? Which students can see which materials? Can the system reveal assessment rubrics that should remain private? Can a prompt trick the assistant into exposing internal instructions or hidden context? These are not abstract AI safety problems; they are practical LMS security problems with new vocabulary.
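One way to picture policy enforcement inside the AI experience is a filter that runs before retrieval, so the model never sees documents the requester is not entitled to. The Python sketch below is an assumption-laden illustration, not a description of UT’s or Moodle’s access model. The `audience` field, the role names, and the sample documents are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    course_id: str
    audience: str  # hypothetical label: "student" or "staff"


@dataclass(frozen=True)
class User:
    user_id: str
    role: str            # hypothetical roles: "student" or "staff"
    courses: frozenset   # course IDs the user is enrolled in or teaches


def allowed_documents(user: User, documents):
    """Return only the documents this user may have retrieved on their behalf."""
    visible = []
    for doc in documents:
        if doc.course_id not in user.courses:
            continue  # no cross-course retrieval
        if doc.audience == "staff" and user.role != "staff":
            continue  # e.g. private grading rubrics stay out of student context
        visible.append(doc)
    return visible


# Invented sample data, for illustration only.
DOCS = [
    Document("econ-module-2", "ECON101", "student"),
    Document("econ-rubric", "ECON101", "staff"),
    Document("law-module-1", "LAW201", "student"),
]
student = User("s-001", "student", frozenset({"ECON101"}))
lecturer = User("t-001", "staff", frozenset({"ECON101"}))

print([d.doc_id for d in allowed_documents(student, DOCS)])
print([d.doc_id for d in allowed_documents(lecturer, DOCS)])
```

Filtering before retrieval, rather than asking the model to withhold what it has already been shown, means a successful prompt injection has nothing restricted to leak.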

The responsible version of educational AI therefore looks less like a chatbot demo and more like a security architecture review. Identity, access control, encryption, logging, monitoring, backup, and recovery are the foundation. Without them, personalization becomes a liability dressed up as innovation.

The Productivity Claim Will Need Evidence Beyond the Press Release

Microsoft and UT say the deployment has improved engagement, assignment outcomes, and academic workflow speed. Those are the right metrics to watch, but the public announcement does not provide enough detail to judge the magnitude or durability of the gains. We do not know the course mix, baseline performance, control groups, student demographics, lecturer workload changes, or the extent of human review.

That does not invalidate the project. It simply means the next stage should be measured with more rigor. Higher engagement in online discussions is encouraging, but it can mean many things: more posts, better posts, faster responses, broader participation, or even AI-assisted noise. Improved assignment outcomes are similarly ambiguous unless the assessment design and grading standards are stable.

The danger for universities is that AI success becomes defined by throughput alone. Faster grading and feedback are valuable, but only if quality holds. More discussion is useful, but only if it deepens learning rather than increasing performative participation. Personalized assistance is promising, but only if it helps students build competence rather than outsource struggle.

UT has an opportunity here because its scale can generate meaningful evidence. If the university can compare outcomes across courses, semesters, disciplines, and student groups, it could move the debate beyond anecdotes. The education sector badly needs that kind of data.

The Vendor Lock-In Question Does Not Disappear Because the Use Case Is Noble

The strategic upside for Microsoft is obvious. If Azure becomes the trusted substrate for a national-scale university’s LMS modernization, AI tutoring, academic analytics, and future agentic assistants, Microsoft becomes more than a software supplier. It becomes part of the educational operating model.

That is not inherently bad. Large institutions need reliable vendors, and Microsoft has a stronger enterprise governance story than consumer AI platforms. Azure also gives universities a familiar framework for identity, compliance, monitoring, databases, container orchestration, and security operations.

But lock-in is still lock-in. Course materials, embeddings, workflow integrations, AI evaluation pipelines, security logs, and custom learning tools can become difficult to move once they are woven into a cloud ecosystem. The more successful the system becomes, the harder it may be to disentangle.

Universities should therefore treat AI architecture as a long-term institutional decision, not an experimental procurement line. Open standards, data portability, clear retention policies, documented retrieval pipelines, and model evaluation records matter. A responsible AI strategy should make the institution more capable, not merely more dependent.

Indonesia’s Open University Is a Preview of a Global Campus Problem

UT’s scale is unusual, but its problem is not. Universities everywhere are being asked to support more diverse learners, more flexible delivery models, more digital services, and more reporting obligations. At the same time, many face constrained staffing and rising expectations for responsiveness.

AI fits neatly into that pressure cooker. It promises to answer more questions, personalize more pathways, accelerate more feedback, and surface more risks. For administrators, it sounds like a way to make the institution feel smaller and more attentive without actually becoming smaller or hiring at the same rate as enrollment growth.

The risk is that AI becomes a pressure valve that prevents deeper investment in teaching capacity. If universities use AI to free lecturers for higher-value work, the technology can improve education. If they use it mainly to normalize impossible staff-to-student ratios, it may simply automate scarcity.

UT’s public framing leans toward the first model. The university talks about enriching learning, maintaining academic integrity, and keeping human oversight. The next few years will show whether that governance language survives operational incentives.

The Real Test Will Be the Agentic Phase

Today’s AI Tutor is already consequential, but the coming agentic layer will be more revealing. A study-planning assistant can be helpful if it reminds students of prerequisites, pacing, deadlines, and workload. It can also become problematic if it channels students into paths based on opaque risk scoring or historical patterns that reproduce inequities.

An early-warning system can help lecturers identify students who need support. It can also misclassify students who are temporarily quiet, working irregular hours, or learning in ways the system does not measure well. A personalized pathway can reduce friction. It can also narrow academic exploration if optimization becomes the default.

That is why “human in the loop” cannot remain a slogan. Human oversight needs defined procedures, visible accountability, and the power to reverse or challenge system recommendations. Students should know when AI is involved, what data is used, and how consequential decisions are reviewed.
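What a defined procedure might look like in software can be sketched in a few lines of Python. This is a hypothetical design, not anything UT or Microsoft has described. The action names, statuses, and the set of consequential actions are assumptions chosen for illustration. Low-stakes actions apply automatically, consequential ones wait for a named reviewer, and every step is recorded.

```python
from dataclasses import dataclass, field

# Hypothetical set of actions judged too consequential to automate.
CONSEQUENTIAL = {"flag_at_risk", "change_study_plan"}


@dataclass
class Recommendation:
    student_id: str
    action: str
    rationale: str
    status: str = "pending"
    history: list = field(default_factory=list)  # audit trail of every decision


def submit(rec: Recommendation) -> Recommendation:
    """Apply low-stakes actions; hold consequential ones for human review."""
    rec.status = "needs_review" if rec.action in CONSEQUENTIAL else "applied"
    rec.history.append(("system", rec.status))
    return rec


def review(rec: Recommendation, reviewer: str, approve: bool, note: str) -> Recommendation:
    """Record a named human decision on a held recommendation."""
    if rec.status != "needs_review":
        raise ValueError("only held recommendations can be reviewed")
    rec.status = "applied" if approve else "rejected"
    rec.history.append((reviewer, rec.status, note))
    return rec


reminder = submit(Recommendation("s-001", "send_deadline_reminder", "assignment due soon"))
flag = submit(Recommendation("s-002", "flag_at_risk", "no forum activity for three weeks"))
review(flag, "lecturer-17", approve=False, note="student is on approved leave")

print(reminder.status, flag.status, flag.history)
```

The audit trail is what turns oversight from a slogan into something a student can appeal against: it shows who decided, what they decided, and why.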

The agentic era will reward institutions that build governance before automation. UT’s Azure foundation gives it the technical pieces to move quickly. The more important question is whether its academic governance can move just as deliberately.

The UT Deployment Shows What Serious Campus AI Now Requires

UT’s Azure project is not a universal template, but it does clarify the shape of serious AI adoption in higher education. The lesson is not that every university should buy the same stack. The lesson is that AI tutoring at institutional scale is an infrastructure, security, governance, and pedagogy problem all at once.

- UT’s AI Tutor is already operating across around 500 classes and supporting more than 100,000 students, which moves the project beyond a small laboratory pilot.

- The university’s active student growth from 2024 to 2025 explains why automation is being treated as capacity infrastructure rather than optional experimentation.

- The use of official course materials and internal knowledge bases is central to keeping AI assistance aligned with curriculum rather than generic chatbot output.

- Azure’s privacy and security positioning is a major part of the pitch because student data, academic records, and learning interactions require stronger controls than consumer AI tools can typically provide.

- The next phase, involving agentic academic assistants, will require more rigorous oversight because recommendations, risk detection, and workflow automation carry higher academic consequences.
