A viral social‑media post alleging that a postgraduate tutor at the University of New South Wales used ChatGPT to mark a student’s assignment has forced the university into a formal inquiry and sharpened a national debate about how—and whether—artificial intelligence should be used in teaching, marking and academic governance.

Background / Overview

The claim surfaced when a Master of Applied Finance student posted a screenshot of instructor feedback captured from Turnitin, in which the comment explicitly referred to “ChatGPT” and was accompanied by a grade of 88/100. The post quickly attracted attention on X (formerly Twitter), prompting UNSW to announce it was “aware of the incident” and would manage the matter under existing internal procedures.

The episode arrives against the backdrop of two important developments at UNSW. First, the university signed an enterprise collaboration with OpenAI that grants staff and selected students access to ChatGPT Edu on a secure tenancy, a move the university presented as a controlled pilot to embed AI safely into the campus environment. Second, UNSW has an explicit institutional framework on AI use in coursework which permits designated enterprise tools, notably Microsoft Copilot, for certain feedback and editing tasks while restricting the unapproved use of other generative platforms in formal marking.

The university’s student guidance stresses verification of AI outputs, transparency in permitted AI levels per assessment, and that Turnitin’s detection tools are a preliminary flag rather than definitive proof of misconduct. This combination of wider institutional AI access, an evolving policy framework, and new detection tooling creates both opportunity and friction. The rest of this feature explains the incident as reported, places it in a national and technical context, evaluates the institutional response, and sets out practical recommendations for universities, instructors and students wrestling with generative AI in assessment workflows.

What the report says: facts, claims and what’s verified

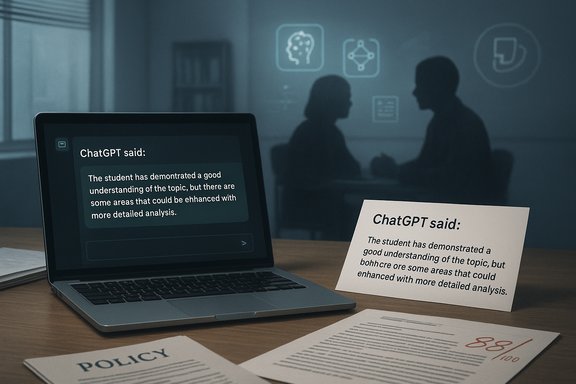

- A postgraduate student shared an image of Turnitin feedback that included the phrase “ChatGPT said: …”, followed by a textual assessment and a grade of 88/100. That social post is the proximate trigger for the inquiry reported in the press.

- UNSW confirmed it was “aware of the incident” and that it will handle the matter under its internal policies and procedures; the university reiterated its AI framework that allows approved tools in certain contexts while restricting other unapproved platforms for marking.

- UNSW publicly runs a targeted pilot with OpenAI (ChatGPT Edu) intended to provide secure, private access for staff and a limited cohort of students; the pilot is explicitly framed as a controlled, contract‑backed experiment that keeps prompts private and excludes training on UNSW content.

Why this matters: trust, pedagogy and the marking contract

At its core, the controversy exposes a simple social contract between students and educators: feedback should be instructive, transparent and attributable. When that contract appears broken, real or perceived, student trust and institutional legitimacy are quickly eroded.

- Pedagogical value: human markers provide nuanced judgement, formative advice, and mentorship that students pay for and expect. Outsourcing or automating marking without clear disclosure risks degrading the perceived educational value of coursework.

- Transparency and consent: students and assessors need clear rules about what tools are permitted, how they are used, and whether AI contributed to final grades or feedback.

- Regulatory and quality obligations: Australia’s tertiary regulator and sector bodies increasingly caution that conventional remote assessments and asynchronous tasks are vulnerable to undetectable AI‑assisted misuse. TEQSA has urged universities to strengthen secure assessments and diversify formats to ensure independent demonstration of student competence.

The technical background: detection limits and hallucination risk

Two technical pressures collide in cases like this.

First, detection tools such as Turnitin’s AI‑writing detector are imperfect. Universities using the tool position it as a triage mechanism: a flag for further investigation rather than conclusive evidence. Independent reporting and campus experience show false positives and disputed results are common, particularly across multilingual submissions and heavily edited drafts. Detecting AI‑assisted text is a hard statistical problem and can produce contested outcomes that require human review.

Second, large language models (LLMs) like ChatGPT are probabilistic generators that can hallucinate (produce plausible but incorrect statements) and do not attach reliable provenance to many claims. Broader audits of AI assistants have found substantial sourcing and factual‑accuracy problems when these models are asked to summarise news or generate explanations, raising the risk that AI‑assisted feedback can confidently assert falsehoods unless rigorously verified by humans. Independent editorial audits and sector studies highlight that nearly half of AI replies to news‑style questions can contain significant errors; while the contexts differ, the underlying failure mode (confident‑sounding but unverified output) is the same.

These two forces—detection uncertainty and model hallucination—create a paradoxical environment where instructors may use AI to scale feedback, yet the outputs require human oversight to be safe and defensible.
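
A rough base‑rate calculation helps show why a detector flag works as triage rather than proof. The sketch below uses purely hypothetical inputs: the cohort size, the share of AI‑written submissions and both detection rates are illustrative assumptions, not published figures for Turnitin or any other product.

```python
def flag_breakdown(cohort: int, ai_share: float,
                   true_positive_rate: float, false_positive_rate: float) -> dict:
    """Split detector flags into correct and mistaken ones for one cohort.

    Every input is a hypothetical illustration value, not a measured
    rate for any real detector.
    """
    ai_written = cohort * ai_share
    human_written = cohort - ai_written
    correct_flags = ai_written * true_positive_rate
    false_flags = human_written * false_positive_rate
    total_flags = correct_flags + false_flags
    return {
        "correct_flags": correct_flags,
        "false_flags": false_flags,
        # Share of flagged submissions that were actually human-written.
        "false_share_of_flags": false_flags / total_flags if total_flags else 0.0,
    }


# Assumed scenario: 1,000 submissions, 10% AI-written, 90% of those caught,
# and 2% of human-written work wrongly flagged.
result = flag_breakdown(cohort=1000, ai_share=0.10,
                        true_positive_rate=0.90, false_positive_rate=0.02)
print(result)
```

Under these assumed inputs, 18 of 108 flags (about one in six) would land on students who wrote their own work, even though the detector is wrong about only 2% of human‑written submissions.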

UNSW’s policy landscape: levels, permitted tools and the pilot model

UNSW’s public guidance lays out a “Levels of AI Assistance” framework that convenors are expected to apply to each assessment, ranging from “no assistance” to specified, permitted uses such as simple editing or supervised AI‑supported drafting. The policy explicitly requires staff to declare permitted uses in course materials and warns that unauthorized AI use can constitute academic misconduct. The university also notes that Turnitin’s detector is a trigger for a follow‑up investigation rather than a disciplinary decision on its own.

At the institutional level, UNSW has pursued a two‑track approach:

- Enterprise contracts (e.g., the OpenAI ChatGPT Edu pilot) that provide tenancy isolation, non‑training assurances for university prompts, and stronger privacy controls; and

- A central toolkit and guidance that encourages lecturers to specify per‑assessment AI permissions, require disclosure where used, and design assessments that capture the learning process rather than rely solely on final artifacts.
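
A per‑assessment declaration of this kind could, in principle, be recorded in a form that software can check. The sketch below is one possible shape only: the level names and their ordering, the `AssessmentRule` fields, the assessment identifier and the `is_use_permitted` helper are all hypothetical and do not reproduce UNSW’s actual scheme.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import FrozenSet


class AssistanceLevel(IntEnum):
    # Hypothetical three-step scale; the real framework may define
    # different names and a different number of levels.
    NO_ASSISTANCE = 0
    SIMPLE_EDITING = 1
    SUPERVISED_DRAFTING = 2


@dataclass(frozen=True)
class AssessmentRule:
    assessment_id: str                 # hypothetical identifier
    permitted_level: AssistanceLevel   # highest level the convenor allows
    approved_tools: FrozenSet[str]     # tools named in the course outline


def is_use_permitted(rule: AssessmentRule,
                     level_used: AssistanceLevel, tool: str) -> bool:
    """Return True when a declared use fits the rule for this assessment."""
    if level_used == AssistanceLevel.NO_ASSISTANCE:
        return True  # no AI involved, so there is nothing to check
    return level_used <= rule.permitted_level and tool in rule.approved_tools


essay = AssessmentRule("ESSAY-1", AssistanceLevel.SIMPLE_EDITING,
                       frozenset({"Microsoft Copilot"}))
print(is_use_permitted(essay, AssistanceLevel.SIMPLE_EDITING, "Microsoft Copilot"))
print(is_use_permitted(essay, AssistanceLevel.SUPERVISED_DRAFTING, "Microsoft Copilot"))
```

Keeping the permitted level and the approved tool list in one record means the same declaration can appear in the course outline and be consulted later if a use is disputed.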

What the university inquiry must establish (and what it cannot prove from a screenshot)

When universities investigate alleged improper AI use by staff, there are discrete evidentiary steps that matter:

- Authenticate the artifact: confirm the screenshot is genuine, timestamped, and traceable to an institutional submission platform or staff account.

- Audit logs: review Turnitin and LMS logs, marker account activity, and any relevant email or document history to determine how the feedback was created and by whom.

- Tool provenance: if AI was involved, establish which tool, whether it was an enterprise instance (e.g., ChatGPT Edu or institutional Copilot), and the contractual controls that applied to the prompt/response lifecycle.

- Intent and disclosure: determine whether the marker disclosed use of AI to the student and whether that use was consistent with the assessment’s permitted level of assistance.

- Educational impact: decide whether the use (if confirmed) meaningfully changed the assessment outcome or breached pedagogical commitments.
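
The first three steps depend on the institution holding a record it can compare a screenshot against. As a minimal sketch, assuming a marking system stored a fingerprint of each feedback comment when it was saved, the check could look like the following. The `FeedbackEvent` fields and helper names are hypothetical and do not describe the log schema of Turnitin or any real LMS.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class FeedbackEvent:
    # Hypothetical audit record written when a marker saves feedback.
    submission_id: str
    marker_account: str
    created_at: datetime
    tool: Optional[str]       # None means no AI tool was recorded
    feedback_sha256: str      # fingerprint of the saved comment text


def fingerprint(text: str) -> str:
    """Hash the text after collapsing whitespace, so line wrapping in a
    screenshot transcription does not change the result."""
    normalised = " ".join(text.split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def matches_record(claimed_text: str, event: FeedbackEvent) -> bool:
    """True when text transcribed from a screenshot equals what was logged."""
    return fingerprint(claimed_text) == event.feedback_sha256


saved = "Clear structure.\nCheck the discount rate in section 2."
event = FeedbackEvent("SUB-001", "marker01",
                      datetime(2025, 1, 1, tzinfo=timezone.utc),
                      None, fingerprint(saved))
print(matches_record("Clear structure. Check the discount rate in section 2.", event))
```

A match shows only that the text was saved under that account at that time; how the text was produced still has to be established from tool logs and the marker’s own account.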

Broader context: sectoral risk and examples

This incident mirrors a broader pattern across public and private institutions: rapid student adoption of generative AI; mixed institutional responses (bans, managed access, or pedagogy redesign); and evidence that detection solutions and institutional policies lag user behaviour.

- Regulatory pressure: TEQSA has publicly warned universities that AI‑assisted cheating is difficult to detect and recommended including at least one secure, invigilated assessment per unit to verify unaided student performance.

- Detection controversies: students at multiple Australian universities have reported contested Turnitin AI flags and the resulting stress of having to explain their drafting process; universities using Turnitin stress that the detector is an investigatory trigger rather than proof of misconduct.

- Systemic AI risks in commissioned work: independent investigations and sector audits have demonstrated that AI‑assisted drafting without robust human‑in‑the‑loop verification can produce authoritative‑sounding but false content, with reputational and procurement consequences for organizations that rely on unchecked model output. These episodes further underline the need for governance, auditability and provenance in AI deployments.

Critical analysis: strengths, weaknesses and institutional trade‑offs

Strengths of controlled AI use in marking and feedback

- Scalability and timeliness: AI can draft formative comments quickly, enabling faster turnaround for large cohorts where instructors are resource‑constrained.

- Consistency: Well‑designed AI templates can reduce intra‑marker variability for routine, lower‑stakes feedback such as grammar, structure or citation reminders.

- Pedagogical opportunity: Integrated properly, AI can be a scaffolding tool to help students iterate drafts and internalise feedback before formal submission.

Key weaknesses and risks

- Erosion of trust: Undisclosed or poorly governed AI use in marking can be perceived as impersonal, reducing the relational aspects of feedback that support learning.

- Hallucination and error propagation: AI‑generated feedback can confidently assert incorrect claims or misinterpret student intent unless a human reviews and corrects outputs. That risk is acute in specialised subjects where nuanced judgement matters.

- Data governance and privacy: Using consumer AI tools (or misconfigured enterprise instances) risks exposing student data to third‑party retention or model‑training flows unless contracts explicitly forbid such use.

- Detection ambiguity: Reliance on imperfect detectors can produce false accusations or defensive policy responses that further strain student‑staff relations.

- Equity and access: If some students have access to superior AI tooling or coaching on how to use it, academic equality may be affected unless managed centrally.

Practical recommendations for universities and instructors

The following is a pragmatic, vendor‑agnostic playbook institutions can implement immediately to reduce risk and rebuild trust.

- Central governance
  - Issue a clear institutional policy on permitted AI tools for marking, with explicit statements about enterprise vs consumer usage and data‑training clauses.
  - Require all staff to follow a documented “AI in Assessment” process when they intend to use AI for any feedback, including a simple disclosure form.

- Assessment design
  - Redesign high‑stakes assessments to include at least one authenticated, in‑person or proctored component that demonstrably assesses unaided student competence.
  - Use staged submissions (drafts, annotated revisions, reflection statements) as part of the grade to capture process evidence.

- Operational controls
  - Provision a central enterprise AI instance (such as Microsoft Copilot or ChatGPT Edu) with tenant controls, telemetry, and contractual assurances that exclude student prompts from vendor training.
  - Maintain comprehensive logs and audit trails for feedback generation and marker activity; preserve those logs for investigations.

- Pedagogy and communication
  - Require convenors to declare permitted AI assistance for every assessment in the syllabus and on the LMS.
  - Institute a mandatory “AI literacy” module for students and a short professional development course for markers on verifying AI outputs and using detectors responsibly.

- Detection and discipline
  - Use detection tools as flags that trigger human review and a structured follow‑up (student meeting, viva voce, revision log request), not as automatic proof.
  - Standardise a disclosure annex students attach to submissions where AI was used (tool, purpose, and extent).

- Student support and equity
  - Provide equitable access to approved AI tools through institutional accounts and lab machines to remove paywall‑driven inequalities.
  - Offer opt‑outs or alternative assessment tracks where students have legitimate concerns about AI usage.
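
The disclosure annex mentioned under detection and discipline is simple enough to standardise as a small structured record. The sketch below is an illustration under assumptions: the three fields follow the tool, purpose and extent wording above, but the `ALLOWED_EXTENT` scale and the validation rules are hypothetical choices, not an existing university form.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical scale for how much of the work AI touched.
ALLOWED_EXTENT = ("none", "editing", "drafting", "substantial")


@dataclass(frozen=True)
class AIDisclosure:
    tool: str      # e.g. the approved institutional assistant used
    purpose: str   # what the tool was used for
    extent: str    # one of ALLOWED_EXTENT

    def problems(self) -> List[str]:
        """List what is missing or invalid; an empty list means complete."""
        issues = []
        if self.extent not in ALLOWED_EXTENT:
            issues.append(f"extent must be one of {ALLOWED_EXTENT}")
        if self.extent != "none":
            # Tool and purpose only matter when AI was actually used.
            if not self.tool.strip():
                issues.append("tool is required when AI was used")
            if not self.purpose.strip():
                issues.append("purpose is required when AI was used")
        return issues


print(AIDisclosure("Microsoft Copilot", "grammar check", "editing").problems())
print(AIDisclosure("", "", "editing").problems())
```

The same record shape could serve markers who use AI when drafting feedback, so that students and staff disclose against one format.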

How to handle contested cases fairly (step‑by‑step)

- Authenticate the material: verify author, timestamp, and platform logs.

- Initiate a confidential fact‑finding phase, giving staff and student an opportunity to explain.

- Review tool logs (Turnitin, LMS, enterprise AI dashboards) and marker account activity.

- If AI was used, ascertain which instance and whether use complied with declared assessment rules.

- Resolve with proportionate outcomes: corrective guidance, re‑marking, or formal discipline only where rules were breached.

- Publicly summarise systemic fixes (policy updates, training) without naming individuals, to preserve privacy and rebuild trust.

What remains unverified and where caution is required

- The authenticity of the original screenshot and whether the feedback was indeed produced by an external ChatGPT session have not been independently verified in public reporting. Treat the image as an allegation‑trigger that requires forensic examination through system logs.

- Assertions that this incident reveals widespread, undisclosed AI marking practices across the university are unproven; isolated social posts can spawn broad narratives that do not reflect systemic reality. Independent audits and internal reviews are the correct means to determine any pattern.

- Broader claims about detection accuracy or the efficacy of a specific detection product in every discipline should be treated with nuance: detection performance varies by language, editing level and discipline, and no detector is a standalone adjudicator.

What to watch next

- UNSW’s internal review outcome: expect a finding that clarifies whether the tutor used AI for marking, what tool was involved, and whether any policy breach occurred. The university’s statement suggests that outcome will follow internal procedures rather than public prosecution.

- Sectoral follow‑up from TEQSA or other regulatory bodies: given existing warnings about AI‑based detection and assessment design, regulators may issue updated guidance or expectations for secure assessments.

- Policy changes at other universities: this incident may accelerate the shift from bans to controlled, centrally provisioned enterprise AI access plus assessment redesign.

- Technical developments: improved provenance tooling, model‑level audit trails and “citation‑first” assistants will influence whether instructors feel comfortable using AI as an assistive marking tool without undermining trust. Independent audits of conversational assistants show substantial errors in sourcing and fact handling — improvements in model provenance would materially reduce risk.

Conclusion

The image that ignited UNSW’s inquiry is more than a single classroom squabble; it’s a symptom of a sector‑wide transition that universities must navigate deliberately. Generative AI presents genuine pedagogical opportunities (scaling feedback, personalising learning, and training workplace‑relevant skills), but those benefits arrive alongside real hazards: hallucination, data governance risks, detection ambiguity and the erosion of trust when human roles are obscured.

UNSW’s decision to run enterprise pilots and publish an AI assistance framework is the right direction, but the episode shows why operational transparency, robust audit trails, syllabus‑level clarity and redesign of assessment forms are essential. Institutions that balance secure procurement, explicit disclosure, and pedagogical redesign will preserve educational value while integrating AI responsibly. Those that do not will continue to face reputational shocks and contested campus relationships.

For now, the fairest and most credible institutional posture is measured inquiry followed by transparent policy hardening: verify the facts, protect due process, and publish system fixes so students and staff alike can rely on consistent, defensible rules about the role of AI in assessment.

Source: dailytelegraph.com.au https://www.dailytelegraph.com.au/n.../news-story/4daf072b51230297a1b86ff1798d12c2/