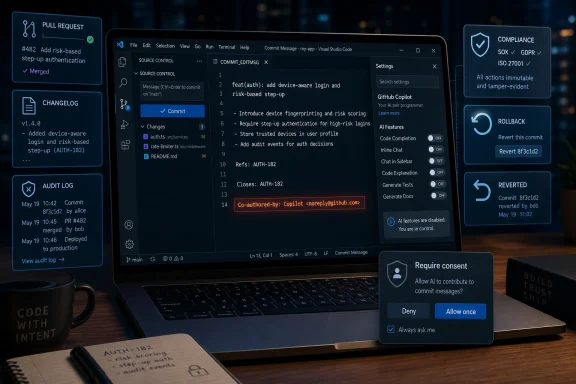

Microsoft temporarily changed Visual Studio Code so Git commits made through the editor could append a Copilot co-author trailer by default, then reverted the setting in early May 2026 after developers found it appeared even when AI features were disabled. The incident is small in code and large in meaning. It shows how quickly Microsoft’s AI-first strategy turns from feature delivery into trust debt when metadata, authorship, and consent are treated as product defaults rather than professional boundaries. For developers, the scandal was not that Copilot exists inside VS Code; it was that the editor appeared willing to rewrite the public record of their work.

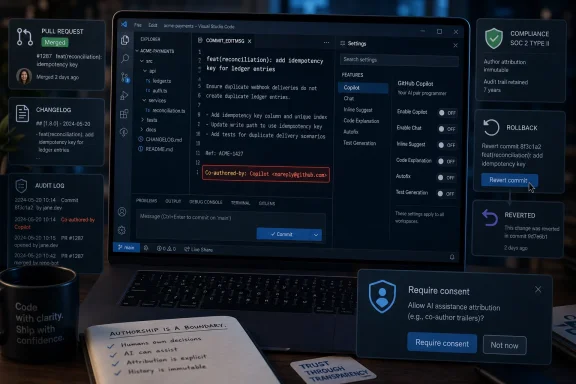

Microsoft Turned Attribution Into a Product Surface

The change at the center of the uproar was not a new model, a flashy sidebar, or another Copilot button wedged into a toolbar. It was a Git commit trailer: the familiar line at the bottom of a commit message saying a change was “Co-authored-by” someone else. In normal software culture, that line is not decoration. It is a durable authorship signal copied across forks, mirrors, compliance tools, changelogs, contribution graphs, and legal review systems.

That is why the reaction was so sharp. A developer can ignore a chatbot pane. A developer can uninstall an extension, disable completions, or move their cursor away from a suggestion. But a commit message is part of the historical record, and changing it on the user’s behalf crosses from assistance into representation.
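For readers less familiar with the mechanics, a trailer is simply a `Key: value` line in the final paragraph of a commit message. The sketch below is a simplified Python illustration of how downstream tooling might read that block. It is not Git's own parser (`git interpret-trailers` handles more cases), and the function name `parse_trailers` and the sample commit are invented for the example.

```python
import re

# A trailer line looks like "Key: value", e.g. "Co-authored-by: Name <email>".
TRAILER_RE = re.compile(r"^([A-Za-z0-9-]+):\s*(.+)$")


def parse_trailers(message: str) -> list[tuple[str, str]]:
    """Return (key, value) pairs from the last paragraph of a commit message.

    Simplified rule (an assumption of this sketch): the last paragraph is a
    trailer block only if every line in it matches "Key: value".
    """
    paragraphs = [p for p in re.split(r"\n\s*\n", message.strip()) if p.strip()]
    if len(paragraphs) < 2:
        return []  # a lone subject line is never treated as a trailer block
    trailers = []
    for line in paragraphs[-1].splitlines():
        match = TRAILER_RE.match(line.strip())
        if match is None:
            return []  # ordinary prose, not a trailer block
        trailers.append((match.group(1), match.group(2)))
    return trailers


# Hypothetical commit message used only for illustration.
example = """Fix race in cache eviction

Hold the lock while updating the LRU list.

Co-authored-by: Jane Doe <jane@example.com>
"""

print(parse_trailers(example))
```

Because the trailer is ordinary text that parsers like this pick up mechanically, whatever an editor appends there is treated by other tools as a statement about who wrote the change.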

Microsoft’s explanation is narrower than the anger it triggered. The VS Code team says the setting began as an AI attribution feature, was changed from off to a broader default during the 1.117 rollout, then hit a bug that caused attribution to appear where it should not have appeared. The company says 1.119 restores the default to off, disables the feature when AI features are disabled, and will require consent before adding a trailer.

All of that matters. It also does not erase the central failure. In a professional developer tool, the dangerous part was not merely the bug. The dangerous part was that Microsoft was close enough to this line that a bug could push it over.

The Commit Message Is Not Microsoft’s Canvas

Git commit metadata has a strange dual identity. It is plain text, easy to edit, and often written hastily at the end of a work session. Yet once pushed, reviewed, signed, audited, rebased, squashed, mirrored, or included in a release process, it becomes part of a record that teams treat as evidence.

That evidence can be social, technical, or legal. Maintainers use it to understand who made a change. Security teams use it to trace risky code. Enterprises use it to document compliance. Open-source projects use it to manage contribution policies and licensing expectations. Even when the line is not legally dispositive, it is meaningful enough that adding it automatically should require a very high bar.

This is where Microsoft’s instincts collided with developer culture. The company appears to have seen AI attribution as a transparency feature: if AI helped, record that help. That idea is defensible. Many teams will eventually want better provenance for AI-assisted code, especially as coding agents move from autocomplete into multi-file edits and pull-request generation.

But provenance imposed by the tool vendor is not the same as provenance designed by the project. A team may want AI assistance recorded in pull-request templates, internal audit logs, branch protections, signed attestations, or code review labels. Another team may ban AI-generated code entirely. A third may allow it but require human ownership without anthropomorphic co-authorship language. The point is not that one policy is correct. The point is that the editor should not pick one and write it into every project’s history by default.

VS Code is popular precisely because it occupies a delicate middle ground. It is Microsoft software, but it has long been treated by developers as a flexible workbench rather than a heavy-handed corporate IDE. That trust gives Microsoft enormous distribution power. It also makes defaults more consequential than they would be in a niche plugin.

A Bug Can Still Reveal the Strategy

Microsoft’s defenders will reasonably argue that the worst behavior was a bug. The VS Code update said attribution should apply when AI-generated code was involved, and the company says non-Copilot code and disabled-AI scenarios were not intended to be labeled. Bugs happen, and software this widely used will always expose edge cases that internal testing misses.

But developers are not reacting only to a defect report. They are reacting to a pattern. Over the last several years, Microsoft has inserted Copilot branding and AI affordances across Windows, GitHub, Office, Edge, Teams, and developer tooling with a speed that often seems to outrun consent design. Each individual change can be explained as experimentation, personalization, telemetry-driven iteration, or a mistake. The accumulation feels different.

The subtlety matters. Nobody thinks a random null pointer exception is a corporate strategy. But a bug in a feature that defaults AI attribution on is still a bug in a feature that defaulted AI attribution on. The process failure and the product decision are linked, because the product decision created the blast radius.

This is why the “it was a bug” answer landed badly with many developers. The bug explains why Copilot appeared in places it should not have appeared. It does not fully explain why a mainstream code editor was allowed to add AI co-authorship trailers by default in the first place, or why a disable-AI setting did not act as an absolute kill switch in testing.

For a consumer feature, that might be embarrassing. For a developer workflow, it is corrosive. Developers live by explicit state. A flag that says AI features are disabled must mean disabled, not mostly disabled, not disabled except for attribution machinery, not disabled unless another setting wins a configuration fight.
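The "absolute kill switch" idea can be stated in a few lines. The following is a minimal Python sketch, not VS Code's actual logic: the `EditorSettings` fields and the `attribution_allowed` function are hypothetical names chosen for illustration. The point is only the ordering of the checks, where the global disable flag is consulted first and nothing else can override it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EditorSettings:
    # Hypothetical setting names; they do not correspond to real VS Code keys.
    ai_features_disabled: bool          # global "turn AI off" switch
    attribution_enabled: bool = False   # attribution is opt-in by default


def attribution_allowed(settings: EditorSettings, commit_used_ai: bool) -> bool:
    """Decide whether an AI attribution trailer may even be considered."""
    # 1. The global switch is checked first and always wins.
    if settings.ai_features_disabled:
        return False
    # 2. Attribution itself must have been switched on explicitly.
    if not settings.attribution_enabled:
        return False
    # 3. Only commits that actually involved AI assistance qualify.
    return commit_used_ai


# AI disabled globally: attribution stays off even if another setting says on.
print(attribution_allowed(EditorSettings(True, attribution_enabled=True), True))
```

In this arrangement there is no "configuration fight" to lose, because no later branch can turn the result back to true once the global flag has said no.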

Copilot’s Real Problem Is Not Capability

The irony is that Copilot does not need this kind of behavior to matter. AI coding tools are already changing software work. Inline completions can save time. Chat can explain unfamiliar APIs. Agents can draft tests, update dependencies, and grind through boilerplate. Many developers who complain about Microsoft’s defaults also use AI tools every day.

That distinction is important because it separates anti-AI sentiment from anti-coercion sentiment. The revolt over VS Code attribution was not a Luddite backlash against machine assistance. It was a professional backlash against an assistant being promoted into the audit trail without explicit permission.

Microsoft keeps blurring that line. The company markets Copilot less as a tool and more as a layer: Copilot in Windows, Copilot in Microsoft 365, Copilot in GitHub, Copilot in security products, Copilot in the browser, Copilot in the shell. That strategy makes business sense. If AI is the next platform shift, Microsoft wants to own the ambient assistant across work.

But ambient software has an ambient trust problem. The more places Copilot appears, the more users need to know that “off” means off, that generated text is visible before it is submitted, that metadata is not rewritten silently, and that corporate metrics are not being optimized through defaults that users never chose.

This is the real danger for Microsoft. The company is trying to make Copilot feel inevitable. Incidents like this make it feel invasive.

Developer Trust Is a Different Market From Office Upsell

Microsoft has spent decades selling software into environments where IT departments expect centralized defaults, bundled experiences, and administrative policy. That muscle memory is useful in enterprise sales. It is dangerous in developer tools.

Developers are unusually sensitive to silent workflow changes because their tools are extensions of their agency. A text editor does not merely display work; it mediates work. A Git client does not merely submit work; it represents authorship. A terminal does not merely run commands; it is a trust boundary between intention and execution.

That is why the VS Code incident attracted such strong language. A co-author trailer is not an ad banner in a free app. It is not a recommendation widget on a start page. It is a public statement attached to professional output. A programmer whose employer restricts AI use may now have to explain why commits appear to credit Copilot. A contractor may face questions about whether client code was generated by an unauthorized tool. An open-source maintainer may have to clean up commits to avoid policy confusion.
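For teams that do need to keep such lines out of new commits, one possible guard is a small filter over the commit message. The Python sketch below is an assumption-laden illustration: the exact identity string the editor wrote is not given here, so the pattern simply matches any `Co-authored-by` line that mentions "Copilot", and the function name `strip_copilot_trailers` is invented.

```python
import re

# Assumption: the unwanted trailer is a "Co-authored-by:" line that mentions
# "Copilot" somewhere in the name or email. Adjust the pattern to the exact
# text observed in your own history.
COPILOT_TRAILER = re.compile(r"^co-authored-by:.*\bcopilot\b", re.IGNORECASE)


def strip_copilot_trailers(message: str) -> str:
    """Return the commit message without Copilot co-author trailer lines."""
    kept = [
        line
        for line in message.splitlines()
        if not COPILOT_TRAILER.match(line.strip())
    ]
    # Normalise to exactly one trailing newline.
    return "\n".join(kept).rstrip("\n") + "\n"


# Hypothetical message with one human and one tool-added co-author line.
sample = (
    "Fix off-by-one in pager\n"
    "\n"
    "Co-authored-by: Jane Doe <jane@example.com>\n"
    "Co-authored-by: Copilot <copilot@example.com>\n"
)
print(strip_copilot_trailers(sample))
```

A function like this could be called from a `commit-msg` hook, which Git invokes with the path of the message file as its first argument. It only affects new commits; cleaning up history that has already been pushed still means rewriting it. The pattern is also deliberately blunt and would drop a human co-author whose name or address contains the word "copilot".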

Some of those harms may be hypothetical in many cases. But professional tooling is built around avoiding exactly this sort of ambiguity. If a default can make a human appear to have used a tool they did not use, the default is wrong.

Microsoft’s rollback recognizes that, but only after the community supplied the alarm. That is not a healthy feedback loop. For a company that wants developers to entrust more work to agents, the process should be reversed: consent first, provenance second, automation third.

“Co-Authored” Was the Wrong Word for the Job

The phrase “Co-authored-by” carries human expectations because it came from human collaboration. In GitHub culture, it commonly means another person contributed to the commit. It is legible to contribution graphs and social workflows. It maps to a world where authorship is tied to accountability, judgment, and intent.

An AI coding assistant does not fit neatly into that model. It may generate a function, but it does not accept responsibility for the function. It may propose a diff, but it does not own the consequences. It may be statistically involved in the text, but the developer or organization remains accountable for shipping, licensing, testing, and maintaining the result.

That is why “assisted-by” may be a better direction than “co-authored-by.” The distinction sounds pedantic until it appears in a compliance review. Assistance is a provenance signal. Co-authorship is an authorship signal. The former says a tool was involved; the latter suggests an entity shares creative credit.

The software industry needs better AI provenance, not worse. A future in which agents modify large codebases without traceability is not acceptable either. The answer is not to pretend AI assistance never happened. The answer is to record it in a way that is accurate, policy-aware, and visible before it becomes permanent.

That design work is harder than toggling a default. It requires separating light autocomplete from agentic edits, distinguishing Copilot from other AI providers, honoring workspace and enterprise policy, showing exactly what will be appended, and letting repositories decide whether commit trailers are the right layer at all. It also requires admitting that users may say no.
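One way to picture "showing exactly what will be appended" is a function that builds the full preview and hands it to the user before anything is written. This is a hedged Python sketch under stated assumptions: `append_trailer_with_consent` and the `confirm` callback are hypothetical, the `Assisted-by` wording is only the alternative discussed above rather than an established convention, and the sketch assumes the message has no existing trailer block.

```python
from typing import Callable


def append_trailer_with_consent(
    message: str,
    trailer: str,
    confirm: Callable[[str], bool],
) -> str:
    """Append `trailer` only if the user approves the exact resulting message.

    `confirm` receives the full preview and returns True to accept it.
    Assumes the message has no trailer block yet (a simplification).
    """
    body = message.rstrip("\n")
    preview = f"{body}\n\n{trailer}\n"
    # The original message is returned untouched when the user declines.
    return preview if confirm(preview) else message


original = "Refactor config loader\n"
trailer = "Assisted-by: Example AI tool"  # hypothetical trailer text

accepted = append_trailer_with_consent(original, trailer, lambda preview: True)
declined = append_trailer_with_consent(original, trailer, lambda preview: False)
print(accepted)
print(declined)
```

In a real editor the `confirm` callback would be a dialog or an inline preview in the commit box. What matters in the sketch is that declining is a first-class outcome and leaves the message exactly as the developer wrote it.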

The Open-Source Optics Made Everything Worse

VS Code’s development process is public enough that the controversy unfolded in the open. The pull request changing the default was visible. The reactions were visible. The complaints about disabled AI settings were visible. The reversal was visible. That transparency is good, but it also gave developers a clear narrative: Microsoft changed a default, Copilot reviewed the change, users objected, and Microsoft backed down.

There is an almost too-perfect symbolism in Copilot reviewing a pull request that made Copilot more visible in commit metadata. It captures the uneasy circularity of the AI platform push: AI tools are being used to build, review, promote, and justify more AI tooling. That does not make the work invalid. It does make skepticism rational.

The optics also collided with the broader “AI slop” backlash. Developers are already dealing with low-quality generated issues, noisy pull requests, hallucinated answers, and overconfident code suggestions. Many have learned that AI can be useful only when surrounded by discipline. The VS Code change looked like the opposite of discipline: a metadata shortcut applied broadly, then explained after the fact.

For Microsoft, this is the cost of moving fast in infrastructure-like software. VS Code is not a toy app. GitHub is not a social network experiment. These are systems of record for people’s work. A small default in these environments carries more institutional weight than a big feature elsewhere.

Enterprises Will Read This as a Policy Failure

The enterprise version of this story is not about embarrassment on GitHub. It is about control. Large organizations are trying to decide where AI coding assistants fit inside secure development lifecycles. They are writing rules for data exposure, generated code review, license risk, model access, retention, audit logging, and human accountability.

In that context, a tool that adds AI attribution even when AI features are disabled is more than annoying. It suggests that the vendor’s policy surface is not coherent. If one setting says AI is disabled but another subsystem still tracks or labels AI involvement, administrators have to wonder what else is happening outside the obvious controls.

Microsoft knows this market. It sells security, identity, compliance, endpoint management, cloud governance, and developer platforms into the same organizations now being asked to adopt Copilot. The pitch is that Microsoft can make AI enterprise-ready because it understands enterprise controls. The VS Code incident cuts directly against that pitch.

The company can fix the code. The harder task is rebuilding confidence that Copilot features are governed by least surprise. Enterprise IT does not need every AI feature to be off by default forever. It does need defaults to respect policy hierarchies, disclose behavior plainly, and fail closed when settings conflict.
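"Fail closed when settings conflict" can be made concrete with a small resolver. The Python sketch below assumes three hypothetical policy layers (enterprise, workspace, user), each of which may opt in, opt out, or stay silent. It is an illustration of the principle, not a description of how VS Code actually merges settings.

```python
from typing import Optional


def resolve_attribution(
    enterprise: Optional[bool],
    workspace: Optional[bool],
    user: Optional[bool],
) -> bool:
    """Resolve an attribution policy across layers, failing closed.

    Each layer is True (opt in), False (opt out), or None (no opinion).
    Any explicit opt-out wins, and silence everywhere means off.
    """
    layers = [enterprise, workspace, user]
    if any(value is False for value in layers):
        return False  # a conflict is resolved toward "off"
    return any(value is True for value in layers)


# The user opted in, but the enterprise policy says no: the result is off.
print(resolve_attribution(enterprise=False, workspace=None, user=True))
```

The design choice is that disagreement between layers never produces a surprise opt-in. An administrator or a user who said no gets no, whichever layer spoke last.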

That is especially true as coding agents become more autonomous. A future Copilot that can open issues, edit files, run tests, write commits, and submit pull requests will need more attribution, not less. But the more powerful the agent, the more explicit the contract must be. Silent metadata mutation is the wrong foundation for agentic development.

Microsoft’s AI Ambition Keeps Running Into the Consent Wall

The broader Copilot strategy is easy to understand. Microsoft paid heavily for early AI advantage, integrated the technology across its product line, and is trying to turn that integration into durable platform gravity. If Copilot becomes the default interface for work, Microsoft strengthens Windows, Microsoft 365, Azure, GitHub, and developer services at once.

The trouble is that defaults are not neutral when they change user output. There is a difference between showing a feature and acting on behalf of the user. There is another difference between acting locally and writing into shared records. The VS Code incident sits at the most sensitive end of that chain: it touched the artifact a professional publishes to the world.

This is where Microsoft’s consumer and enterprise instincts conflict with developer expectations. In consumer software, companies often ship features broadly and tune them after telemetry. In enterprise software, administrators often impose defaults centrally. In developer culture, users expect sharp tools, explicit configuration, and respect for the boundary between editor assistance and authorship.

Microsoft can satisfy all three only if it slows down at the boundary. Copilot can be present without being presumptuous. AI attribution can be useful without being automatic. A tool can recommend a trailer without appending it silently. Consent can be designed as a workflow step rather than a settings-page scavenger hunt.

That is not anti-innovation. It is the difference between adoption and resentment.

The Reversal Fixes the Setting, Not the Suspicion

Microsoft’s announced course correction is the right minimum. Setting the default back to off reduces surprise. Respecting the global AI disable setting closes the most obvious policy hole. Requiring consent before adding a trailer acknowledges that metadata belongs to the user and the project, not the editor vendor.

The company also appears to be reconsidering the language of attribution, including whether “assisted-by” better reflects AI involvement than “co-authored-by.” That is a constructive move. The industry needs vocabulary that neither hides AI use nor exaggerates machine authorship.

But the suspicion will linger because the incentives remain unchanged. Microsoft still wants Copilot usage to grow. GitHub still benefits when AI coding feels native to the development workflow. VS Code still gives Microsoft a distribution channel into millions of projects. Users know all of this, and they interpret ambiguous defaults through that lens.

Trust is not rebuilt by reverting one pull request. It is rebuilt when users see a pattern of restraint. That means opt-in defaults for intrusive features, visible previews for generated metadata, clean administrative controls, and public postmortems that distinguish bugs from product judgment calls.

If Microsoft wants developers to believe Copilot is a colleague-like assistant, it has to stop behaving like an uninvited stakeholder.

The Lesson Microsoft Should Have Learned Before the Backlash

The practical lessons from this incident are blunt because the failure was blunt. Copilot attribution is not inherently wrong, but the ownership model around it must be explicit, narrow, and controlled by the people responsible for the code.

- VS Code changed `git.addAICoAuthor` from an off-by-default attribution setting to a broader default during the 1.117 rollout, then reversed course after reports of unwanted Copilot trailers.

- Microsoft says a bug caused attribution to appear for code that was not generated by Copilot and even when AI features were disabled.

- The 1.119 update is supposed to restore the default to off and ensure the global AI-disable setting prevents the attribution machinery from running.

- Future attribution is expected to require explicit user consent before a commit trailer is added.

- The controversy is less about whether AI-assisted code should be disclosed and more about who gets to decide how that disclosure appears in a project’s permanent history.

- The phrase “assisted-by” would better match the role of an AI tool than “co-authored-by,” but even better wording cannot substitute for consent.

Microsoft’s next Copilot fight will not be about whether AI can write useful code; that question is already being answered in daily work. It will be about whether Microsoft can make AI feel like an accountable instrument rather than a corporate presence insinuating itself into every artifact. If the company learns from this episode, VS Code can still be the place where AI-assisted development becomes boring, governed, and trustworthy. If it does not, every new Copilot integration will arrive with the same suspicion: what did Microsoft just decide to do on my behalf?

Source: TechSpot https://www.techspot.com/news/112321-microsoft-made-copilot-co-author-every-vs-code.html