Microsoft’s framing is simple and urgent: the era of “better answers,” in which large language models serve mainly as search-and-summarize tools, is yielding to something fundamentally different, and that difference matters more for business outcomes than model accuracy alone. The new frontier is agentic systems of work: ensembles of planner and worker agents that can plan, act, verify, revise, and deliver end-to-end outcomes inside the tools people already use every day. This is not merely a product pitch; it is a practical roadmap for why and how AI will change workflows, governance, and the very architecture of enterprise operations in 2026 and beyond.

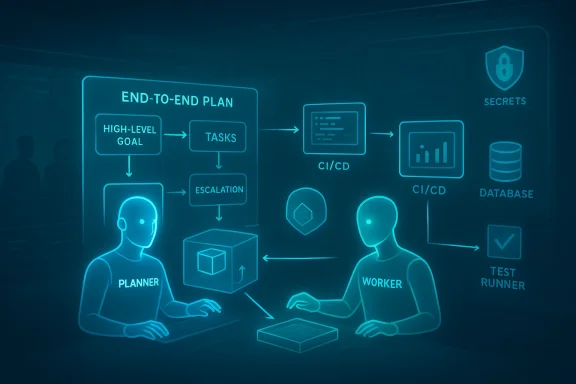

Source: Microsoft AI@Work: From “better answers” to real business outcomes

Background / Overview

The last three years have seen rapid advances in model capabilities and in the tooling that surrounds them. What used to be single-step “generate an answer” interactions are now multi-step, stateful workflows: coding agents that can inspect a repository, propose a multi-file plan, run tests in isolated environments, fix the failures, and produce a pull request; analysis agents that can execute code, test hypotheses, and return verified results; and workplace copilots that coordinate data, people, and systems to drive repeatable outcomes.

Two technical trends underpin this shift. First, models are being embedded with tool use: function calls, runtime sandboxes, terminal access, and structured agents that can call deterministic services (databases, CI systems, identity providers). Second, orchestration layers (the planner/worker abstractions Microsoft highlights) allow long-horizon objectives to be decomposed, routed, and executed with retry, verification, and escalation logic. The combination turns “assistant” into an operational layer of the business. Practical examples are already visible across competing platforms.

How Agentic Systems of Work Actually Work

Planner agents, worker agents — the two-loop architecture

At its core, an agentic system of work follows a two-loop pattern:

- Outer loop (Planner agents): Take a high-level goal and decompose it into discrete, testable tasks. They decide which tool or worker agent should handle each step, plan sequencing, and set success criteria. They also monitor progress and decide on retries, escalations, or human intervention when necessary.

- Inner loop (Worker agents): Execute those tasks deterministically or probabilistically — write code, run a database query, update a CRM record, generate a draft, run tests — and return structured outputs and evidence that the planner can validate.
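The two loops can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's implementation: `Task`, `TaskResult`, `run_plan`, and the two demo workers are hypothetical names invented for the example, and the "planner" here is only a fixed task list with retry and escalation logic.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TaskResult:
    ok: bool            # did the worker meet the success criterion?
    output: str         # structured output would go here in a real system
    evidence: str = ""  # e.g. a test log the planner can validate


@dataclass
class Task:
    name: str
    worker: Callable[[], TaskResult]  # inner loop: executes one task
    max_retries: int = 2


def run_plan(tasks: list[Task]) -> dict[str, str]:
    """Outer loop: run tasks in order, retry failures, escalate when retries run out."""
    status: dict[str, str] = {}
    for task in tasks:
        for _attempt in range(task.max_retries + 1):
            if task.worker().ok:
                status[task.name] = "done"
                break
        else:
            # No attempt succeeded: hand off to a human and stop the plan.
            status[task.name] = "escalated"
            break
    return status


# Hypothetical workers: one succeeds on its second attempt, one never succeeds.
attempts = {"n": 0}


def flaky_tests() -> TaskResult:
    attempts["n"] += 1
    ok = attempts["n"] >= 2
    return TaskResult(ok, "tests passed" if ok else "tests failed", f"run {attempts['n']}")


def denied_deploy() -> TaskResult:
    return TaskResult(False, "deploy denied")


status = run_plan([Task("run_tests", flaky_tests), Task("deploy", denied_deploy, max_retries=1)])
print(status)
```

In this sketch the test task is retried once and completes, while the deploy task exhausts its retries and is escalated instead of being forced through.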

Why this beats the “single-step” model

Single-turn Q&A tools are useful for discovery and drafting, but they flatten a process into a single exchange and place the coordination burden on humans. Agentic systems distribute that burden: they track intermediate state, apply verification logic automatically, and capture learnings to improve subsequent runs. For repeatable business outputs (closing tickets, shipping features, running campaigns), that reliability and observability are more valuable than incremental improvements in model perplexity.

Real-world examples: how leading tools are already pushing agentic work

GitHub Copilot and the IDE-to-Production loop

GitHub’s Copilot has moved beyond line-by-line autocompletion toward workspace-aware behaviors: inspecting a repository, generating a plan for a feature, making multi-file edits, running tests, and iterating on failures within an isolated worktree or ephemeral environment. Examples in official documentation show Copilot generating unit and integration tests, suggesting fixes after running test suites, and assembling implementation plans before executing edits. These capabilities illustrate the planner/worker pattern inside a developer’s workflow.

Key enterprise implications:

- Copilot can act inside a trusted development environment (e.g., ephemeral runners, private worktrees) so that code changes, test runs, and artifacts remain scalable and auditable.

- The agentic loop supports approvals and handoff: the human reviews a plan or final PR rather than micromanaging every edit.

- Copilot’s “workspace mode” signals the shift from a local helper to an autonomous actor that still respects devops controls.

Anthropic’s Claude Code: plan mode and stateful execution

Anthropic’s Claude Code demonstrates a comparable trajectory: it can analyze codebases in a Plan Mode (read-only exploration), propose multi-step changes, run code in sandboxed environments, and iterate based on test outputs. That mixture of planning, stateful execution, and tool access is what separates multi-step agentic workflows from one-off code generation. Anthropic’s public notes and independent reporting emphasize the model’s ability to run code, analyze outputs, and produce iterative fixes, an operational milestone for coding agents.

Security caveat: several community reports and early audits show that tooling that scans entire repos can inadvertently surface secrets or sensitive configuration files unless explicit opt-in guardrails are enforced. In practice, organizations must assume code-running agents will encounter credentials unless the environment and tooling explicitly prevent it.

OpenAI’s GPT‑5.3‑Codex: a model designed to accelerate its own lifecycle

OpenAI’s launch of GPT‑5.3‑Codex marks a milestone in which a coding-focused model is described as having been “instrumental in creating itself.” That claim is literal in OpenAI’s writeup: early versions were used internally to debug training runs, propose fixes, and accelerate evaluation and deployment. The model also showcases stateful, long-horizon development capabilities (building complex apps and iterating across many tokens), which are precisely the behaviors required for agentic execution in software engineering tasks. Independent coverage and the vendor announcement both stress the step from “write code” to “operate a computer and complete work end to end.”

What this means for enterprise leaders: the strategic shift

From model-centric to system-centric thinking

Until recently, executives primarily evaluated AI by model accuracy, benchmark scores, and use-case demos. The hard lesson of 2024–2025 has been that outstanding models do not automatically produce business outcomes. IDC research highlighted the scale of the problem: an estimated 88% of AI proofs-of-concept fail to reach production, typically because organizational readiness, data pipelines, and workflow redesign lag behind experimental prototypes. Agentic systems force a new approach that treats AI as part of an operational stack, with engineering, governance, and process design as first-class concerns.

Practical starting point: map one recurring outcome

You don’t need to redesign the enterprise from day one. Pick a single recurring outcome (shipping a campaign, closing a support ticket, releasing a feature) and trace how it actually gets done: where are delays, where do humans merely move artifacts between systems, where is tribal knowledge preventing scale? This is the operational design problem agentic systems solve: they reveal friction, then encode reliable automation + verification to remove it. Microsoft recommends this workflow-first approach rather than an across-the-board tooling retrofit.

Measurable business KPIs, not model metrics

Shift your KPIs:

- From: model accuracy, token cost, prompt latency.

- To: cycle time for the end-to-end outcome, error/regression rate after automation, fraction of tasks completed without human escalations, and ROI per recurring outcome.
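As a rough illustration of outcome-level measurement, the sketch below computes three of the metrics named above from a list of run records. The `Run` fields, the `outcome_kpis` function, and the sample numbers are all assumptions made up for the example; a real pipeline would pull these from ticketing or CI data.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Run:
    started_h: float   # start time, in hours from an arbitrary reference
    finished_h: float  # finish time, same reference
    escalated: bool    # did a human have to step in?
    regressed: bool    # did the outcome later need rework?


def outcome_kpis(runs: list[Run]) -> dict[str, float]:
    """Summarize end-to-end outcome metrics for one recurring workflow."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    return {
        "median_cycle_time_h": median(r.finished_h - r.started_h for r in runs),
        "unescalated_fraction": sum(not r.escalated for r in runs) / n,
        "regression_rate": sum(r.regressed for r in runs) / n,
    }


# Invented sample data for four runs of the same workflow.
sample = [
    Run(0, 2, escalated=False, regressed=False),
    Run(0, 4, escalated=True, regressed=False),
    Run(1, 4, escalated=False, regressed=True),
    Run(2, 3, escalated=False, regressed=False),
]
kpis = outcome_kpis(sample)
print(kpis)
```

Measuring the same three numbers before and after automation gives the baseline comparison that model-level metrics cannot.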

Critical risks and operational controls

Agentic systems expand automation power but also amplify risk vectors. Leaders must address these explicitly.

1) Data leakage and secrets exposure

Agents with repository or system access can accidentally read and transmit secrets unless the execution environment prevents it. Controls include:

- Environment-level secrets redaction and secret-scanning before any upstream logging or telemetry.

- Ephemeral runner architectures with no outbound telemetry by default.

- Fine-grained tool access restrictions (principle of least privilege).
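The first control above, redaction before logging, might look something like the following. This is a deliberately small sketch: the two patterns are illustrative assumptions (the `AKIA` prefix shape used for AWS access key IDs, plus a generic `name = value` form), and a production setup would rely on a dedicated secret scanner with a much broader rule set.

```python
import re

# Illustrative patterns only; real scanners ship far more rules.
AWS_KEY_ID = re.compile(r"AKIA[0-9A-Z]{16}")
KEY_VALUE = re.compile(
    r"(?i)\b(password|passwd|secret|token|api[_-]?key)\b(\s*[:=]\s*)(\S+)"
)


def redact(text: str) -> str:
    """Mask likely secrets so they never reach logs or telemetry."""
    text = AWS_KEY_ID.sub("[REDACTED]", text)
    # Keep the key name and separator, replace only the value.
    return KEY_VALUE.sub(lambda m: f"{m.group(1)}{m.group(2)}[REDACTED]", text)


print(redact("password = hunter2"))
print(redact("using key AKIAABCDEFGHIJKLMNOP for upload"))
```

Running redaction at the environment boundary, rather than inside each agent, means a misbehaving agent cannot skip it.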

2) Errant or unsafe actions (dual-use and cybersecurity)

Agentic coding models are increasingly capable at security tasks, but that dual-use nature means guardrails are mandatory. OpenAI classifies GPT‑5.3‑Codex as “High capability” for cybersecurity tasks and has layered Trusted Access and monitoring to reduce risk. Enterprises must mirror this thinking with access tiers, logging, and human checkpoints for sensitive actions.

3) Hallucinations that have operational impact

An agent that acts on a hallucinated assumption (e.g., deletes the wrong dataset, files an incorrect tax form, or commits a breaking change) is far more dangerous than a chatbot that merely produces an inaccurate sentence. Verification layers (deterministic checks, test suites, secondary model validators, and conservative “confidence thresholds” before action) must be designed into the inner loop. In practice this means:

- Require test evidence for code changes (unit/integration tests pass in isolated runners).

- Use deterministic tools for numeric or legal computations (calculators, validated rules engines).

- Use human approvals where the cost of error is nontrivial.
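One way to picture these checks is as a gate that every proposed action passes through before execution. The sketch below is an assumption-laden illustration: `ProposedAction`, `gate`, the `confidence` score, and the 0.9 default threshold are hypothetical, and how a real system would derive a confidence value is left open.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    NEEDS_APPROVAL = "needs_approval"
    REJECT = "reject"


@dataclass
class ProposedAction:
    description: str
    tests_passed: bool   # evidence from an isolated test run
    confidence: float    # hypothetical validator score in [0, 1]
    irreversible: bool   # would an error be costly or hard to undo?


def gate(action: ProposedAction, min_confidence: float = 0.9) -> Decision:
    """Decide whether an agent may act, must ask a human, or must stop."""
    if not action.tests_passed:
        return Decision.REJECT  # no test evidence, no action
    if action.irreversible or action.confidence < min_confidence:
        return Decision.NEEDS_APPROVAL  # a human reviews costly or uncertain steps
    return Decision.EXECUTE


print(gate(ProposedAction("fix typo in docs", True, 0.97, False)))
print(gate(ProposedAction("drop staging table", True, 0.99, True)))
print(gate(ProposedAction("refactor billing module", False, 0.99, False)))
```

The ordering is the design choice worth noting: missing test evidence rejects outright, and irreversibility forces approval no matter how confident the agent claims to be.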

4) Observability and auditability

For production-grade agentic work, you must have:

- Immutable logs of agent decisions, prompts, and tool calls.

- End-to-end audit trails linking an outcome to the plan, worker logs, and verification steps.

- Telemetry that surfaces drift, repeated failures, or suspicious patterns.
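A common way to approximate immutable logs in application code is a hash chain, where each entry commits to the one before it. The sketch below assumes that approach; `AuditLog` and its event fields are hypothetical, and the result is tamper-evident rather than truly immutable, so real deployments would still pair it with write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry's hash covers the previous hash."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, event: dict) -> str:
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        entry_hash = _digest(prev, event)
        self._entries.append({"event": event, "prev": prev, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self._entries:
            if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append({"agent": "planner", "decision": "run tests"})
log.append({"agent": "worker-1", "tool": "test_runner", "result": "pass"})
print(log.verify())
```

Because each hash depends on everything before it, an auditor can link an outcome back through the plan and tool calls and detect after-the-fact edits.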

5) Governance, policy, and human-in-the-loop design

Agentic systems require clearer governance constructs than single-use assistants:

- Define which outcomes are automatable.

- Specify escalation policies and human checkpoints.

- Create “do not perform” lists for any agent that can take irreversible actions.

- Embed ethical and privacy constraints in planner logic and tool access layers.
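These governance rules can be expressed as data that the planner consults before dispatching work. The following is a minimal sketch; the outcome and action names, the three sets, and `check_policy` are invented for illustration and would come from the organization's own policy in practice.

```python
# Hypothetical policy tables; real entries come from governance review.
AUTOMATABLE_OUTCOMES = frozenset({"close_support_ticket", "publish_campaign"})
HUMAN_CHECKPOINTS = frozenset({"publish_campaign"})
DO_NOT_PERFORM = frozenset({"delete_dataset", "file_tax_form"})


def check_policy(outcome: str, action: str) -> str:
    """Return 'blocked', 'escalate', 'needs_human', or 'allowed' for a planned step."""
    if action in DO_NOT_PERFORM:
        return "blocked"      # irreversible actions no agent may take
    if outcome not in AUTOMATABLE_OUTCOMES:
        return "escalate"     # outcome was never approved for automation
    if outcome in HUMAN_CHECKPOINTS:
        return "needs_human"  # automatable, but a person signs off
    return "allowed"


print(check_policy("close_support_ticket", "update_crm_record"))
print(check_policy("publish_campaign", "send_email_batch"))
print(check_policy("close_support_ticket", "delete_dataset"))
```

Keeping the lists as plain data, separate from agent prompts, lets compliance staff review and change them without touching agent logic.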

A tactical roadmap for teams: five steps to get started

- Pick one high-value, recurring outcome. Trace it end-to-end. Identify bottlenecks and handoffs. Measure baseline cycle time and error rates.

- Design the planner/worker decomposition. Define which parts require deterministic tools, which parts are agentic, and where human approvals sit.

- Build secure, isolated execution environments. Use ephemeral runners, secrets management, and telemetry pipelines before connecting agents to production systems.

- Instrument verification layers. Add tests, secondary validators, policy checks, and rollback mechanisms so agents can fail safely and recover.

- Run tight pilots, measure real KPIs, then scale. Prioritize pilots that change a measurable metric (time, cost, throughput) and bake governance into the pilot hygiene.

Platform and architectural considerations

Tooling primitives you’ll need

- Ephemeral execution (runners/worktrees): Isolated workspaces for agents to build, run, and test without contaminating production.

- Secure function/tool APIs: Standardized, auditable interfaces agents call to interact with systems (CRMs, CI/CD, billing).

- Replayable decision logs: Structured prompts, decisions, and tool outputs stored so runs can be replayed or audited.

- Policy engines and validators: Deterministic checks for compliance, privacy, and safety that run before any irreversible action.

- Agent orchestration layer: A scheduler/monitor that routes tasks, tracks state, enforces SLAs, and implements retries/escalations.
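Two of these primitives, auditable tool APIs and least-privilege access, fit naturally into a single registry that every agent call goes through. The sketch below is one possible shape under stated assumptions: `ToolRegistry`, the agent IDs, and the `lookup_ticket` tool are hypothetical and do not correspond to any specific platform's API.

```python
from typing import Any, Callable


class ToolRegistry:
    """Single entry point for tool calls: checks permissions and records every call."""

    def __init__(self) -> None:
        self._tools: dict[str, tuple[Callable[..., Any], frozenset[str]]] = {}
        self.calls: list[dict] = []  # replayable record of attempted calls

    def register(self, name: str, fn: Callable[..., Any], allowed_agents: set[str]) -> None:
        self._tools[name] = (fn, frozenset(allowed_agents))

    def call(self, agent_id: str, name: str, **kwargs: Any) -> Any:
        fn, allowed = self._tools[name]
        permitted = agent_id in allowed
        # Log before acting so denied attempts are visible too.
        self.calls.append(
            {"agent": agent_id, "tool": name, "args": kwargs, "permitted": permitted}
        )
        if not permitted:
            raise PermissionError(f"{agent_id} may not call {name}")
        return fn(**kwargs)


registry = ToolRegistry()
registry.register(
    "lookup_ticket",
    lambda ticket_id: {"id": ticket_id, "status": "open"},
    allowed_agents={"support-agent"},
)
ticket = registry.call("support-agent", "lookup_ticket", ticket_id=42)
print(ticket)
```

Routing every call through one choke point is what makes the other primitives practical: policy checks, decision logs, and identity rules all attach at the same place.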

Data and identity controls

Agents should obey the same identity and access rules as humans. Tie agent identities to identities in your IAM, require role-based approvals for extended privileges, and log at the identity level. Data access for agents should be explicit, audited, and limited by policy.

The human factor: roles that matter

Agentic systems don’t eliminate human roles; they change them. New critical roles include:

- Workflow owners: People who own the end-to-end outcome and the metrics that matter.

- Agent engineers / AI integrators: Engineers who design agent behavior, tool access, and recovery paths.

- Verification engineers / SLO owners: People who define verification criteria, tests, and acceptable error envelopes.

- Policy & compliance stewards: Ensure systems meet regulatory and privacy obligations.

- Change managers & trainers: Enable adoption and define new operating rhythms.

Early wins and warning signs: what to expect in the first 6–12 months

Early wins are typically operationally narrow and measurable:

- Faster triage and resolution of routine support tickets with safe escalation to humans.

- Automated generation and verification of release artifacts that reduce developer cycle time.

- Marketing campaign assembly and multichannel publishing reduced from days to hours, with QA gates.

Warning signs to watch for:

- Lack of a clear owner and KPI for the end-to-end outcome.

- Absent or shallow verification steps (e.g., no tests, no policy checks).

- Tooling that exposes secrets or lacks identity integration.

- Observability gaps that prevent debugging and trust building.

Conclusion: what leaders should act on now

The practical shift for 2026 is less about “which model is best” and more about “how do we make models part of a reliable, governed operating system for work.” Agentic systems (planners orchestrating worker agents and deterministic tools) create the conditions for AI to compound value across workflows rather than produce one-off drafts that don’t scale.

Actionable first moves:

- Treat AI adoption as a workflow redesign problem, not a purely technical one.

- Start with one measurable outcome, instrument it, and apply planner/worker design.

- Harden execution environments, secrets handling, and verification layers before widening agent permissions.

- Build governance and observability as intrinsic platform features — not afterthoughts.
