Microsoft’s cloud, developer tooling and creator platforms all made headlines this week as a chain of high‑impact product updates and a major Azure outage underscored both the accelerating pace of AI integration and the structural fragilities of hyperscale services.

Background / Overview

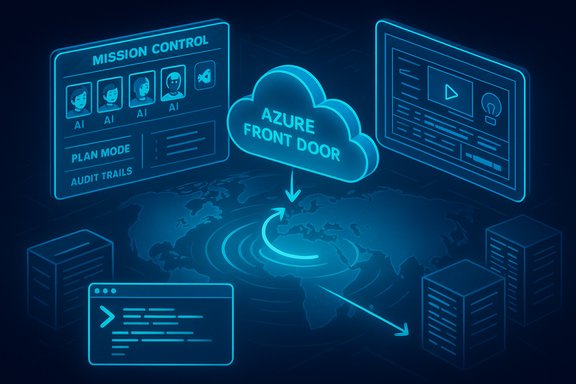

The story cycle began with a widespread Microsoft Azure outage on October 29, 2025 that degraded sign‑in, portal and storefront experiences across Microsoft 365, Xbox, Minecraft and thousands of third‑party sites. Microsoft traced the incident to an inadvertent configuration change that affected Azure Front Door (AFD), its global Layer‑7 edge and application delivery fabric, and implemented a staged rollback and traffic rebalancing to restore service. Early telemetry and public reports place the visible start of the event in the mid‑afternoon UTC window on October 29, with progressive recovery over the next several hours.

At the same time, GitHub used its Universe developer conference to unveil Agent HQ, a unified control plane for coding agents that GitHub describes as “a mission control center” capable of orchestrating third‑party and first‑party agents in the same workflow across Web, VS Code, mobile and CLI. The new product aims to let teams run, steer and audit multiple AI agents (OpenAI Codex, Anthropic’s Claude, Google’s agents and others) from a single pane of glass. Early coverage and GitHub’s own recaps indicate Agent HQ includes a “Mission Control,” an agents registry, and governance primitives for audit logs and policy enforcement.

YouTube’s creator platform also shipped important upgrades: a global rollout of multi‑language audio/dubbing for creators, expanded AI‑assisted Shorts editing tools and new creator‑facing AI utilities that extend localization and production workflows. These enhancements continue YouTube’s multi‑year push to add AI tooling for creators, from Inspiration and Dream Track to automatic dubbing and thumbnail experimentation.

This feature unpacks those three pillars (the Azure outage, GitHub’s Agent HQ launch, and YouTube’s upgrades), offering a close technical read, independent verification of claims, and a candid assessment of what the developments mean for Windows admins, developers and creators.

Microsoft Azure outage: what happened and why it matters

Timeline and verified facts

- Visible start: approximately 16:00 UTC, October 29, 2025; external monitors and customer reports spiked around that time.

- Root trigger (public): Microsoft identified an inadvertent configuration change affecting Azure Front Door and indicated it was rolling back to a “last known good” configuration while blocking further AFD changes.

- Immediate mitigation: engineers froze AFD configuration rollouts, failed the Azure Portal away from AFD where possible, rebalanced traffic to healthy Points‑of‑Presence (PoPs) and restarted orchestration units supporting edge nodes. Recovery progressed over hours; residual, regionally uneven impacts persisted while DNS and routing converged.
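
The mitigation pattern in the timeline above (freeze further changes, then return to a last known good configuration) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Microsoft's implementation: `ConfigStore`, `staged_rollout`, the PoP names and the `is_healthy` callback are all hypothetical, and a real control plane would add validation gates, bake times and fleet-wide telemetry.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Config = Dict[str, str]


@dataclass
class ConfigStore:
    """Hypothetical in-memory stand-in for an edge configuration store."""
    last_known_good: Config
    active: Dict[str, Config] = field(default_factory=dict)  # PoP name -> config
    frozen: bool = False

    def apply(self, pop: str, config: Config) -> None:
        """Push a new config to one PoP; refused while changes are frozen."""
        if self.frozen:
            raise RuntimeError("configuration changes are frozen")
        self.active[pop] = dict(config)

    def restore(self, pop: str) -> None:
        """Put one PoP back on the last known good config (allowed even when frozen)."""
        self.active[pop] = dict(self.last_known_good)


def staged_rollout(
    store: ConfigStore,
    pops: List[str],
    candidate: Config,
    is_healthy: Callable[[str, Config], bool],
) -> bool:
    """Apply `candidate` one PoP at a time.

    On the first failed health check, freeze further changes and restore
    every PoP touched so far. Returns True only if all PoPs stayed healthy.
    """
    touched: List[str] = []
    for pop in pops:
        store.apply(pop, candidate)
        touched.append(pop)
        if not is_healthy(pop, candidate):
            store.frozen = True
            for changed in touched:
                store.restore(changed)
            return False
    # Every PoP accepted the change, so it becomes the new baseline.
    store.last_known_good = dict(candidate)
    return True
```

The point of the design is that a bad change stops at the first unhealthy PoP instead of reaching the whole fleet, and that the rollback path keeps working after the freeze is in place.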

Technical anatomy: why an AFD control‑plane mistake cascades

Azure Front Door is not a simple CDN: it is a globally distributed Layer‑7 ingress fabric that performs TLS termination, global HTTP(S) routing, Web Application Firewall enforcement, DNS‑level mapping and origin failover. When AFD misapplies routing or DNS rules, the symptoms are not limited to one service: they hit token issuance, portals and any service that relies on centralized edge routing and identity (Microsoft Entra ID/Azure AD). In short, a control‑plane misconfiguration at the edge can render otherwise healthy backend services unreachable because requests never reach origin or authentication fails at ingress.

Business and operational impact

The outage amplified two related concerns:

- Concentration risk: hyperscalers host many mission‑critical services; when a central routing or identity plane fails, the customer impact is immediate and broad. The Azure incident followed a high‑profile AWS outage earlier in October, intensifying debate about vendor concentration and single‑provider dependency.

- Control‑plane visibility and change governance: the proximate trigger was a configuration change that passed into production. Until Microsoft publishes its post‑incident report, the deeper causal chain — why validation gates failed or how the config slipped through deployment pipelines — remains provisional. Enterprises should treat vendor post‑mortems as essential reading and reconcile vendor timelines with tenant logs.

Practical resilience checklist for admins (short, actionable)

- Reassess identity dependencies: identify which customer journeys fail if Entra ID or edge routing fail, and document which flows need fallbacks.

- Implement multi‑path ingress: where feasible, design origin‑accessible failover (e.g., Traffic Manager, alternate DNS failovers) that bypasses the central edge for critical endpoints.

- Harden change management: require canaried config rollouts, tighter validation gates, automated rollback tests and shorter maintenance windows for global control‑plane changes.

- Prepare programmatic admin routes: ensure operational playbooks include non‑GUI channels (PowerShell, CLI) and tested scripts to restore service when portals are impacted.

- Contract clarity: renegotiate SLAs for control‑plane incidents and add explicit remediation/compensation clauses for identity/edge failures.
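
As one way to picture the multi‑path ingress item in the checklist above, the following Python sketch probes an ordered list of ingress URLs and returns the first one that answers. It is an assumption-laden illustration: `http_probe`, `pick_ingress` and the `example.com` URLs are hypothetical names, and in practice this decision usually lives in DNS failover or a traffic manager rather than in client code.

```python
import urllib.error
import urllib.request
from typing import Callable, Optional, Sequence


def http_probe(url: str, timeout: float = 3.0) -> bool:
    """Return True if a GET to `url` completes with a 2xx or 3xx status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 400
    except (urllib.error.URLError, OSError, ValueError):
        # Covers HTTP errors, DNS failures, timeouts and malformed URLs.
        return False


def pick_ingress(
    endpoints: Sequence[str],
    probe: Callable[[str], bool] = http_probe,
) -> Optional[str]:
    """Return the first endpoint whose probe succeeds, in priority order.

    `endpoints` lists the preferred path first (for example the edge
    hostname) followed by fallbacks that bypass it. Returns None when
    every path fails.
    """
    for url in endpoints:
        if probe(url):
            return url
    return None


# Hypothetical priority list: edge-fronted hostname first, direct origin second.
INGRESS_PATHS = [
    "https://www.example.com/healthz",
    "https://origin.example.com/healthz",
]
```

Calling `pick_ingress(INGRESS_PATHS)` performs real HTTP requests; passing a custom `probe` makes the selection logic testable without network access.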

GitHub’s Agent HQ: a mission control for AI agents

What GitHub announced (verified)

At Universe 2025, GitHub introduced Agent HQ, described as a single interface where organizations and developers can run, observe and govern multiple coding agents from different vendors. Core features highlighted by GitHub and independent reporters include:

- Mission Control: a centralized dashboard to assign, steer and monitor agents across GitHub Web, VS Code, mobile and the CLI.

- Agent interoperability: GitHub says Agent HQ will support many third‑party coding agents (OpenAI Codex, Anthropic’s Claude Code, Google agents, etc.), enabling them to use the same primitives and repository context.

- Custom agents and AGENTS.md: developers can create and share tailored agents with project‑level instructions, tool access and guardrails.

- Governance primitives: audit logging, policy controls and org‑level access management for agents and models.

- Integration with Copilot subscriptions: advanced agent access and features are tied to paid tiers (Copilot Pro+ and similar).

Why Agent HQ is important

- Developer workflow consolidation: engineers already use multiple coding assistants and models; Agent HQ tries to make them first‑class collaborators inside GitHub rather than fragmented external tools. This can reduce context switching and speed iteration.

- Enterprise governance for agents: by centralizing agent control GitHub can expose audit trails, approval gates and identity controls that enterprises demand for compliance. That’s a necessary step if coding agents are to be used in regulated contexts.

Risks, unanswered questions and governance needs

Agent HQ’s promise raises immediate technical and policy questions that deserve scrutiny:

- Data privacy and memory: agents that learn from repo context and team interactions can accumulate sensitive intellectual property and developer behavior signals. Who owns agent memory, and how is that history protected or purged? Public commentary and early previews indicate GitHub will add audit logs and policy controls, but the mechanics of memory management and retention policies need clear documentation.

- Model choice vs vendor lock‑in: GitHub’s openness to many agents is strategically smart, but commercial realities may still favor certain providers via deeper integrations or exclusive features. Teams must evaluate vendor lock‑in risks even in an ostensibly open agent registry.

- Security of agent tools: agents that can create branches, open pull requests, or commit code introduce a new attack surface. Supply‑chain and access controls must be tightened; automated policy checks and mandatory manual approval gates for high‑risk operations are prudent defaults.

Practical recommendations for dev teams

- Establish an Agent Governance Board: set policy on which agents are permitted, what data they can access, and how memory is retained or scrubbed.

- Audit trails and least privilege: require all agent actions to be auditable and scoped via least‑privilege credentials; block agents from committing directly to protected branches.

- Integrate human‑in‑the‑loop checks: mandate human review for any production‑impacting changes proposed by agents.

- Run agent simulations in sandboxes: test agent behavior on synthetic or mirrored repos to validate toolchains before production rollout.
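
The least‑privilege and human‑in‑the‑loop recommendations above can be expressed as a small policy check. The sketch below is illustrative only and does not use any GitHub or Agent HQ API: `AgentAction`, `Policy`, `Decision`, `evaluate` and the operation names are hypothetical, and in practice such rules would typically be enforced through the platform's own branch protection and review settings.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN_REVIEW = "needs_human_review"
    DENY = "deny"


@dataclass(frozen=True)
class AgentAction:
    agent: str          # identifier of the coding agent
    operation: str      # hypothetical names: "create_branch", "open_pull_request", "push"
    target_branch: str  # branch the operation would affect


@dataclass(frozen=True)
class Policy:
    allowed_agents: FrozenSet[str]
    protected_branches: FrozenSet[str]


def evaluate(action: AgentAction, policy: Policy) -> Decision:
    """Decide what happens to an agent-initiated repository action.

    Rules, in order:
    1. Agents that are not on the allow list are denied.
    2. Direct pushes to a protected branch are denied.
    3. Anything else that targets a protected branch needs human review.
    4. Everything else is allowed.
    """
    if action.agent not in policy.allowed_agents:
        return Decision.DENY
    if action.target_branch in policy.protected_branches:
        if action.operation == "push":
            return Decision.DENY
        return Decision.NEEDS_HUMAN_REVIEW
    return Decision.ALLOW
```

Keeping the decision as a pure function makes it straightforward to log every evaluation for audit purposes and to unit-test the policy before any agent is given real credentials.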

YouTube upgrades: creators, localization and AI tooling

What’s shipping

YouTube is expanding a cluster of creator features focused on localization and AI‑assisted production:

- Multi‑Language Audio (MLA): YouTube began a broad rollout of multi‑language audio/dubbing for creators, enabling single‑video uploads to carry multiple audio tracks and letting viewers select preferred audio. The feature has been piloted with big creators and is moving into general availability, with both manual multi‑track uploads and AI‑assisted dubbing options appearing in different coverage.

- Shorts editor AI: auto‑layout improvements for converting long‑form videos into Shorts, auto‑captions, text‑to‑speech options and additional template and remixing functionality continued to expand during the 2024–2025 release cycles.

- Creator studio enhancements: thumbnail testing, Inspiration tabs, and AI‑assisted thumbnail/title suggestions have been added to the creator workflow over the past year and continue to be refined.

Why these upgrades matter

- Borderless audience growth: multi‑language audio reduces the friction of multiple channel management and can dramatically increase watch time from non‑primary languages (YouTube reported non‑primary watch time improvements during pilots). For creators with global ambitions, that’s a direct revenue lever.

- Faster production: AI‑assisted tools (shorts auto‑layout, Dream Track music, auto‑captions) lower the barrier to frequent publishing — a core advantage in platformized attention economies.

Risks and creator protections

- Quality and authenticity: automated dubbing quality varies. Poor dubs reduce retention; overly synthetic vocal tones may undermine trust. Creators must test outputs and prefer human‑supervised dubbing for premium content.

- Labor displacement concerns: automated dubbing and AI assets could reduce demand for voice actors or editors; creators and studios should plan contracts and disclosures accordingly. Public discourse has flagged this as an industry concern.

- Transparency: YouTube has policies on labeling synthetic content, but continued vigilance is needed to flag AI‑generated voices or altered content so viewers can make informed choices.

Creator playbook (practical)

- Prioritize quality for top videos: choose manual or professionally recorded dubs for flagship content; use AI dubbing to scale evergreen tutorials or explainer content.

- Test regionally: run A/B experiments with multi‑language tracks and multi‑language thumbnails to measure incremental watch time and engagement.

- Preserve brand voice: create or license voice assets that preserve the creator’s vocal identity when automating dubs; track audience sentiment post‑rollout.

- Label synthetic content: when using AI dubbing or generative audio, follow platform rules and add clear disclosure to maintain trust.
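
For the regional testing step in the playbook above, the basic arithmetic is a relative uplift between a control group and a variant group. The sketch below assumes you can export per-video or per-day watch-time figures yourself; `relative_uplift` and the sample numbers in the usage note are hypothetical, and the function reports a raw difference only, without any test of statistical significance.

```python
from statistics import mean
from typing import Sequence


def relative_uplift(control: Sequence[float], variant: Sequence[float]) -> float:
    """Return the relative change in mean watch time from control to variant.

    A result of 0.10 means the variant's mean is 10% higher than the
    control's. Raises ValueError if either sample is empty or the control
    mean is zero, because the ratio is undefined in those cases.
    """
    if not control or not variant:
        raise ValueError("both samples must contain at least one value")
    base = mean(control)
    if base == 0:
        raise ValueError("control mean is zero; relative uplift is undefined")
    return (mean(variant) - base) / base
```

With made-up figures, `relative_uplift([100, 120, 80], [110, 130, 90])` compares a mean of 100 minutes against a mean of 110 minutes. Small samples can swing widely, so treat a single comparison as a signal to keep testing rather than as proof.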

Cross‑cutting analysis: what the week’s headlines reveal

Strengths exposed

- Rapid remediation: Microsoft’s response playbook — freezing control‑plane changes, rolling back to a validated configuration and rebalancing traffic — is a mature operational pattern that limited recovery time for many customers. Public transparency about the suspected trigger was helpful in coordinating tenant responses.

- Platform productivity gains: GitHub Agent HQ and YouTube’s creator AI toolset both deliver tangible productivity improvements: fewer context switches for developers and faster localization/production for creators. These are pragmatic, market‑driving features.

Structural risks

- Control‑plane fragility: the Azure outage is a textbook example of how centralization of ingress, routing and identity creates a single point of failure at scale. Architectures that concentrate those functions must be paired with robust canarying and conservative global change windows.

- New attack and compliance surfaces: Agent HQ’s ability to let agents create commits and branches raises novel security risks and compliance questions; platform vendors and enterprises must close gaps in auditability and access control.

- Rapid AI rollout without mature guardrails: YouTube’s AI dubbing and GitHub’s agent integrations accelerate capability adoption, but they also expand liability vectors — from misattribution and copyright to voice‑cloning misuse and unauthorized data exposure. Policy, labeling and opt‑in controls must keep pace.

Verification notes and caution flags

- Numbers reported on outage trackers (e.g., “tens of thousands” of incidents) vary by tracker methodology; public telemetry spikes are real, but precise tenant counts require Microsoft’s post‑incident report for definitive scope. Treat third‑party aggregates as indicative, not definitive.

- GitHub’s Agent HQ roadmap lists many agents as “coming soon.” Some partner integrations (specific models and editor availability) are being staged; organizations should consult GitHub’s product pages for exact availability dates and billing terms. Claims about universal availability should be validated against GitHub’s official rollout schedule.

- YouTube’s multi‑language audio implementation includes both manual multi‑track uploads and varying levels of AI‑assisted dubbing depending on geography and account status. Don’t assume automatic, high‑quality AI dubbing will replace human voice work for flagship material. Verify how your channel’s creator studio shows the feature and whether auto‑dubbing or manual uploads are available.

Final takeaways: immediate actions for Windows admins, developers and creators

- For Windows/IT admins: run a dependency map that highlights where identity and edge routing are single points of failure; script programmatic workarounds to GUI failures; negotiate specific control‑plane SLAs with cloud vendors.

- For engineering leaders: pilot Agent HQ in non‑production to validate agent behaviors and integrate strict approval gates for any agent that can modify code. Create agent policies that define data access, memory retention and model usage limits.

- For creators: treat multi‑language audio as a scaling lever but protect your brand voice and quality; label AI‑generated or heavily modified content; run experiments to measure uplift before committing to automated dubbing across a channel.

The week’s headlines are a sharp reminder: hyperscale platforms and AI tools are delivering real, measurable capabilities — but they also rewire operational risk and governance responsibilities. The right response is not fear; it is disciplined adaptation: design for control‑plane failures, govern agentic assistants, and adopt AI tools with tested human oversight. The technical wins are real, but the engineering and policy work needed to make them safe and sustainable is now the central task for teams that rely on these platforms.

Source: Analytics Insight Top Tech News Today | Microsoft Azure Outage Fixed, GitHub’s AI Hub Launch, YouTube Upgrades & More!