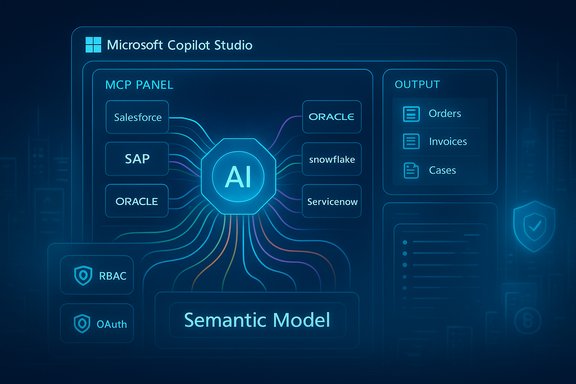

CData and Microsoft have moved from discussion to delivery: CData’s Connect AI platform is now available as a managed Model Context Protocol (MCP) provider inside Microsoft Copilot Studio and Microsoft Agent 365, promising real‑time, semantic access to live enterprise systems and a single integration layer that claims to remove much of the plumbing that has blocked agent rollouts to date.

Source: IT Brief Australia, “CData, Microsoft unlock broad MCP data connectivity”

Background

Enterprises building agentic AI face three consistent production‑grade obstacles: connectivity to dozens or hundreds of systems, context so models can reason about business objects rather than raw JSON, and control so IT and security teams can govern what agents do. CData positions Connect AI as a managed MCP platform that addresses those three gaps with a library of prebuilt connectors, semantic source models, and governance integration with Microsoft’s agent control plane. The vendor and the announcement materials emphasize broad connector coverage (advertised at roughly 300–350+ sources), semantic metadata extraction, and inherited identity controls intended to preserve source permissions.

The timing matters because Microsoft has made MCP a first‑class extensibility mechanism across Copilot Studio and related agent surfaces. Microsoft recently announced general availability for MCP support in Copilot Studio and published detailed onboarding and usage guidance, which lets tenants register external MCP servers (including third‑party managed offerings) for agent tooling and tracing. That platform readiness is the practical enabler for a third‑party MCP provider to appear as a discoverable toolset inside Copilot Studio and Agent 365.

What CData says Connect AI delivers

CData’s public materials and press release describe the Connect AI offering with three core capabilities that map directly to enterprise needs:

- Universal MCP connectivity: a single, hosted MCP endpoint that exposes hundreds of prebuilt connectors to common enterprise systems (CRM, ERP, data warehouses and ITSM platforms) so agents can discover and call source‑native operations without bespoke adapters. CData’s messaging repeatedly cites a 350+ connector footprint across product pages and the press release.

- Semantic intelligence: connectors do more than pass rows; Connect AI claims to expose source schemas, table/field metadata, entity relationships and business logic so an LLM‑driven agent reasons in business concepts (orders, invoices, cases) instead of opaque JSON. This semantic layer is marketed as a guardrail against hallucination and “context overload.”

- Enterprise control and governance: identity‑first security that supports OAuth/SSO, RBAC passthrough, CRUD‑level scoping for agent actions, and audit trails integrated with Microsoft’s Agent 365 governance surfaces, intended to keep IT in the loop and provide provenance for agent activity. Managed workspaces and curated toolsets are also called out as governance primitives.

How the MCP integration actually works (practical anatomy)

MCP as the interoperability fabric

Model Context Protocol (MCP) is an open, JSON‑RPC‑based protocol through which an agent client discovers and calls external tools and data resources in structured form. Copilot Studio supports MCP tools and resources and provides an onboarding wizard for connecting an MCP server to an agent workspace. Once an MCP server is registered, its tools, their inputs and outputs, and their metadata become available in Copilot Studio and are updated dynamically as the server evolves. That pattern replaces brittle, ad‑hoc prompt engineering with schema‑defined tool calls and structured I/O.

CData’s role: managed MCP server + connector runtime
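Whatever sits behind the endpoint, an agent talks to an MCP server such as Connect AI through the same two JSON-RPC 2.0 requests: `tools/list` to discover tools and `tools/call` to invoke one. A minimal sketch of both messages follows. The method names and the `name`, `arguments` and `inputSchema` fields follow the public MCP specification; the `query_orders` tool, its arguments and the sample reply are hypothetical, and the transport (stdio or HTTP) is omitted.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC ids must be unique within a session

def rpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object of the kind MCP clients send."""
    msg = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg

# 1. Discovery: ask the server which tools it exposes.
list_req = rpc_request("tools/list")

# Shape of a server reply; the tool and its schema are hypothetical.
list_resp = {
    "jsonrpc": "2.0",
    "id": list_req["id"],
    "result": {
        "tools": [{
            "name": "query_orders",
            "description": "Query sales orders by status (hypothetical connector tool).",
            "inputSchema": {
                "type": "object",
                "properties": {
                    "status": {"type": "string", "enum": ["open", "shipped", "cancelled"]},
                    "limit": {"type": "integer", "minimum": 1},
                },
                "required": ["status"],
            },
        }],
    },
}

# 2. Invocation: call a discovered tool with structured, schema-shaped arguments.
tool = list_resp["result"]["tools"][0]
arguments = {"status": "open", "limit": 5}
missing = [k for k in tool["inputSchema"]["required"] if k not in arguments]
if missing:
    raise ValueError(f"missing required arguments: {missing}")
call_req = rpc_request("tools/call", {"name": tool["name"], "arguments": arguments})

print(json.dumps(call_req, indent=2))
```

The server answers `tools/call` with a `result` holding a `content` array (for example, text items) and an `isError` flag, which the client passes back to the model as the tool's output.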

Connect AI runs connector instances behind an MCP server manifest. Each connector advertises APIs and schema information to Copilot as MCP tools and resources. When an agent needs data or an action, it issues structured MCP calls; Connect AI executes optimized queries against the target system, performs server‑side pushdown and aggregation, and returns compact, semantically labeled results to the agent. The claimed benefits are smaller LLM context payloads, lower token consumption, and improved response determinism compared with sending raw extractions into an LLM.

Governance integration with Agent 365

Because Copilot Studio and Microsoft’s Agent 365 provide the governance and observability layer for agents, registering a third‑party MCP server like Connect AI exposes each tool invocation to Microsoft’s tracing and governance surfaces. CData says the service preserves source authentication and can map agent requests to tenant identities, enabling audit trails and admin oversight; Microsoft documents both the tracing features and the responsibilities that arise when tenants use external MCP providers. This alignment is fundamental to the trust model that enterprises require before they permit agent writebacks or automated approvals.

Semantic context: why it matters and what to test

One of Connect AI’s headline propositions is that connectors are semantic, not just syntactic. That means the MCP manifest surfaces:

- field names and types,

- entity relationships (foreign keys, parent/child links),

- enumerations and domain constraints,

- business logic hints (status lifecycles, soft deletes),

- and human‑readable labels so agents can map tokens to business concepts.
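To make "semantic, not just syntactic" concrete, the sketch below shows what a semantically enriched tool descriptor could carry and how a client can use those constraints to reject bad calls before they reach the source. The descriptor uses standard JSON Schema keywords (`title`, `description`, `enum`, `default`); the `find_invoices` tool, its fields, and the `x-relationships` and `x-lifecycle` keys are hypothetical and are not taken from MCP or from any CData connector.

```python
# Hypothetical semantically enriched descriptor for an invoice tool.
INVOICE_TOOL = {
    "name": "find_invoices",
    "description": "Find customer invoices. An invoice belongs to exactly one customer.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "customer_id": {
                "type": "string",
                "title": "Customer ID",
                "description": "Foreign key to Customer.id",
            },
            "status": {
                "type": "string",
                "title": "Invoice status",
                "enum": ["draft", "issued", "paid", "void"],
                "description": "Lifecycle: draft -> issued -> paid; void is terminal.",
            },
            "include_deleted": {
                "type": "boolean",
                "title": "Include soft-deleted invoices",
                "default": False,
            },
        },
        "required": ["customer_id"],
    },
    # Hypothetical extension keys: not defined by MCP or JSON Schema.
    "x-relationships": [{"from": "Invoice.customer_id", "to": "Customer.id"}],
    "x-lifecycle": {"draft": ["issued", "void"], "issued": ["paid", "void"]},
}

def check_arguments(tool, arguments):
    """Return problems: missing required fields, unknown fields, out-of-domain enum values."""
    schema = tool["inputSchema"]
    problems = [f"missing required field: {f}"
                for f in schema.get("required", []) if f not in arguments]
    for field, value in arguments.items():
        spec = schema["properties"].get(field)
        if spec is None:
            problems.append(f"unknown field: {field}")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"{field}={value!r} not in {spec['enum']}")
    return problems

print(check_arguments(INVOICE_TOOL, {"customer_id": "C-42", "status": "paid"}))
print(check_arguments(INVOICE_TOOL, {"status": "overdue"}))
```

The first call passes cleanly; the second is caught for a missing customer and an invented status, the kind of error a model without domain constraints would otherwise send straight to the source.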

What to test before relying on that semantic layer:

- Verify per‑source depth, not just breadth: confirm the connector covers the API endpoints and versions you rely on (custom fields, bulk APIs, stored procedures).

- Test schema fidelity: ensure labels, data types, and relationships are exposed correctly and in the locale/format your business uses.

- Measure token savings: compare full‑dump RAG prompts versus MCP pushdown results to quantify cost and latency improvements.

- Simulate joins and cross‑system queries under representative loads to surface edge cases (rate limits, pagination, multi‑tenant nuances).
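The "measure token savings" test can start as simply as comparing payload sizes. In this minimal sketch an in-memory SQLite table stands in for a source system (the `orders` table and its rows are invented), and character counts serve as a rough proxy for tokens. It contrasts a raw extraction with a query whose filtering and aggregation run at the source; it does not describe how Connect AI plans queries, only why returning aggregates instead of rows shrinks what reaches the model.

```python
import json
import sqlite3

# Stand-in source system: an in-memory table of hypothetical orders.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, region TEXT, status TEXT, amount REAL)")
regions = ["APAC", "EMEA", "AMER"]
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?, ?)",
    [(i, regions[i % 3], "open" if i % 2 else "shipped", 100.0 + i) for i in range(1000)],
)

def as_payload(cursor):
    """Serialize query results as the JSON an agent would receive."""
    cols = [d[0] for d in cursor.description]
    return json.dumps([dict(zip(cols, row)) for row in cursor.fetchall()])

# Raw extraction: every row goes to the agent, which must filter and sum in-context.
raw = as_payload(conn.execute("SELECT * FROM orders"))

# Pushdown: filter and aggregate at the source; only the summary is returned.
pushed = as_payload(conn.execute(
    "SELECT region, COUNT(*) AS open_orders, SUM(amount) AS open_value "
    "FROM orders WHERE status = 'open' GROUP BY region ORDER BY region"
))

print(f"raw payload: {len(raw)} chars, pushdown payload: {len(pushed)} chars")
print(pushed)
```

Swapping in real connector queries and your model's own tokenizer turns this into the A/B comparison the checklist calls for.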

Security, governance and the trust boundary

Inherited identity, but expanded responsibility

CData and Microsoft both frame the model as identity‑first: authentication and source RBAC should flow through OAuth/SSO into the MCP layer, and Connect AI says it enforces CRUD scoping and logs actions. That model is stronger than a simplistic service‑account pattern because it preserves least privilege and per‑actor audit trails, assuming the passthrough is implemented correctly and end‑to‑end cryptography and token handling are robust. However, using a third‑party, hosted MCP server expands the organisation’s attack surface. Key security considerations:

- Third‑party egress: any managed MCP provider receives or proxies query results and may see sensitive content. Contracts must specify data handling, encryption in transit and at rest, retention periods, and breach obligations.

- Prompt and tool injection: structured responses from MCP tools must be validated; malicious or compromised manifests can instruct agents to take undesired actions. Runtime sanity checks and human‑in‑the‑loop approvals for high‑risk writebacks are essential.

- Audit and forensic readiness: ensure audit logs are immutable, integrate with SIEM/UEBA, and retain sufficient context to reconstruct agent decisions.

- SLA and incident response: understand the provider’s uptime SLAs, escalation paths, and the impact of connector outages on critical workflows.
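Immutability is usually approximated with tamper-evident logging. A minimal sketch, assuming a simple hash chain in which each record stores the SHA-256 hash of its predecessor, so that editing any earlier entry breaks verification. The record fields (`actor`, `tool`, `action`) and the sample identities are hypothetical, and this is not a description of how CData or Agent 365 store logs. In practice each entry would also carry a timestamp, and the latest hash would be shipped to the SIEM or to WORM storage so an attacker cannot quietly rewrite the whole chain.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(record):
    # Canonical JSON (sorted keys) so the same record always hashes identically.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()

def append(log, actor, tool, action):
    """Append an audit entry that commits to the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"seq": len(log), "actor": actor, "tool": tool, "action": action, "prev": prev}
    log.append({**body, "hash": _digest(body)})

def verify(log):
    """Recompute every hash; return the index of the first bad entry, or None."""
    prev = GENESIS
    for i, entry in enumerate(log):
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev"] != prev or _digest(body) != entry["hash"]:
            return i
        prev = entry["hash"]
    return None

log = []
append(log, "alice@contoso.com", "query_orders", "read")
append(log, "alice@contoso.com", "update_order", "update")
append(log, "bob@contoso.com", "query_orders", "read")
print(verify(log))          # None: chain intact

log[1]["action"] = "read"   # tamper with the middle entry
print(verify(log))          # 1: first entry whose hash no longer matches
```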

Operational efficiency: token economy, query execution and cost

CData markets Connect AI as a performance and cost optimisation layer: by performing heavy retrievals and joins server‑side, returning distilled semantic context, and normalising pagination and schema differences, Connect AI says agents will consume fewer tokens and see lower latency. This is plausible in principle (moving extraction, filtering and aggregation outside the LLM can reduce the model’s input size), but the actual savings depend on query patterns, cardinality, and how much pre‑processing the MCP server performs versus leaving reasoning to the model.

Practical finance and engineering steps before committing:

- Run A/B tests measuring token consumption and latency for representative flows.

- Model the platform TCO: third‑party platform fees + additional API request charges + Copilot credits + model inference costs.

- Include cost governance in AgentOps runbooks and set per‑agent and per‑workspace budgets to prevent runaway consumption.
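The TCO line items above add up simply, and a per-agent budget can be enforced with the same arithmetic. The sketch below uses placeholder prices and volumes, not actual CData, Microsoft or model pricing, and the class and field names are hypothetical. Substitute contracted rates and the volumes measured in your A/B tests before drawing any conclusions.

```python
from dataclasses import dataclass

@dataclass
class MonthlyCostInputs:
    # All values are placeholders; replace with contracted prices and measured volumes.
    platform_fee: float            # third-party MCP platform subscription
    api_requests: int              # source-system API calls driven by agents
    price_per_1k_requests: float   # any per-request charges from source systems
    copilot_credits: int           # credits consumed by agent runs
    price_per_credit: float
    input_tokens: int              # model input tokens across all runs
    price_per_1m_tokens: float

def monthly_tco(c: MonthlyCostInputs) -> float:
    """Sum the four cost components named in the checklist."""
    return (c.platform_fee
            + c.api_requests / 1_000 * c.price_per_1k_requests
            + c.copilot_credits * c.price_per_credit
            + c.input_tokens / 1_000_000 * c.price_per_1m_tokens)

class AgentBudget:
    """Refuse further runs once an agent's spend would exceed its monthly cap."""

    def __init__(self, cap: float):
        self.cap = cap
        self.spent = 0.0

    def charge(self, cost: float) -> bool:
        if self.spent + cost > self.cap:
            return False           # block the run; alert the AgentOps owner instead
        self.spent += cost
        return True

inputs = MonthlyCostInputs(platform_fee=1000.0, api_requests=200_000,
                           price_per_1k_requests=0.5, copilot_credits=10_000,
                           price_per_credit=0.01, input_tokens=50_000_000,
                           price_per_1m_tokens=2.0)
print(f"monthly TCO: {monthly_tco(inputs):.2f}")

budget = AgentBudget(cap=25.0)
print([budget.charge(10.0) for _ in range(3)])   # third run would exceed the cap
```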

Benefits and strengths — where this integration is genuinely useful

- Speed to pilot and production: prebuilt connectors reduce bespoke integration effort, which is the largest early blocker for agent projects spanning many SaaS systems. Copilot Studio’s MCP onboarding makes registration straightforward for administrators.

- More reliable multi‑system reasoning: semantic exposure of schemas and relationships lets agents produce more explainable, auditable outputs across CRM, ERP and data warehouses rather than stitching disparate fragments via brittle prompt tricks.

- Governance alignment: integration with Microsoft Agent 365’s tracing and control plane gives IT a single surface for visibility, approvals and policy enforcement when used correctly.

- Ecosystem fit: MCP is gaining broad vendor traction (Anthropic, which introduced the protocol, plus Microsoft and others), which reduces lock‑in risk at the protocol level and makes vendor ecosystems interoperable in practice. Independent reporting shows MCP is being discussed as a standard integration fabric for agentic apps.

Risks, limits and caveats — the hard realities

- Connector claims vary: CData markets “300–350+” connectors across materials; counts are headline metrics and can vary by product page and marketplace listing. Enterprises must verify connector coverage for their specific API surface and customizations before committing.

- Third‑party trust and egress liability: handing live data access to a hosted provider requires contractual, technical and operational controls — encryption, data residency, access reviews and audit capabilities — and introduces potential compliance and contractual risk.

- Operational dependency: although MCP is a standard protocol, the semantic models, curated workspaces and optimization plans that give Connect AI its value are proprietary. Migrating off a managed provider could require significant rework unless export and portability are explicitly supported.

- Attack surface for agents: agents calling external tools create new vectors for injection and supply‑chain compromise. Validate manifests, monitor anomalous tool behavior, and require human approval for sensitive actions.

- Real cost benefit depends on workload: the token savings and latency improvements are workload dependent. For heavy aggregation and high‑cardinality joins, server‑side pushdown will help; for tasks that require extensive natural language reasoning on long text corpora, savings may be smaller.

Practical rollout recommendations for Windows and Microsoft environments

For Windows‑centric organisations or those using Microsoft 365 and Copilot Studio, the following phased approach reduces risk while proving value:

- Inventory and classify: map the exact systems you intend to expose, including custom fields, API versions and sensitivity levels.

- Start small: pilot with a low‑risk, high‑ROI workflow that spans no more than two or three systems and is limited to read‑only interactions initially.

- Validate connector fidelity: confirm the connector supports your API surface (bulk, filters, transforms) and correctly exposes schema metadata.

- Integrate governance: register the MCP server via Copilot Studio’s onboarding wizard, enable tracing, and route logs into your SIEM and Purview classification where applicable.

- Harden agent writebacks: require explicit human approvals for any automated writes or financial/HR operations; implement least‑privilege CRUD scoping.

- Measure: quantify token and latency improvements, agent error rates, human intervention frequency and business KPIs (time saved, incidents avoided).

- Contract and audit: review SLAs, data handling, retention, right to audit and breach obligations with the provider before expanding to sensitive data.
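The writeback-hardening and read-only-pilot recommendations can be expressed as a small policy gate in front of tool execution. A minimal sketch, assuming each tool is tagged with the CRUD operation it performs and each agent has an allow-list of operations: the tool catalogue, `SENSITIVE_TOOLS` and the approver functions are hypothetical, and a real deployment would route approvals through its own workflow (and log each decision) rather than a plain callback.

```python
from enum import Enum

class Op(Enum):
    CREATE = "create"
    READ = "read"
    UPDATE = "update"
    DELETE = "delete"

# Hypothetical tool catalogue: which CRUD operation each tool performs.
TOOL_OPS = {
    "query_orders": Op.READ,
    "update_order": Op.UPDATE,
    "issue_refund": Op.CREATE,
}
# Finance/HR tools always need a human, even when the agent's scope allows them.
SENSITIVE_TOOLS = {"issue_refund"}

def authorize(tool, agent_scope, request_approval):
    """Return (allowed, reason) for a proposed tool call."""
    op = TOOL_OPS.get(tool)
    if op is None:
        return False, "unknown tool"                      # deny by default
    if op not in agent_scope:
        return False, f"{op.value} not in agent scope"    # least-privilege CRUD scoping
    if op is not Op.READ or tool in SENSITIVE_TOOLS:
        if not request_approval(tool):                    # human-in-the-loop for writes
            return False, "human approval denied"
        return True, "approved by human"
    return True, "read within scope"

def always_deny(tool):
    """Stand-in approver for the sketch; a real one would ask a person."""
    return False

def always_approve(tool):
    return True

pilot_scope = {Op.READ}  # read-only pilot
print(authorize("query_orders", pilot_scope, always_deny))
print(authorize("update_order", pilot_scope, always_deny))
print(authorize("update_order", {Op.READ, Op.UPDATE}, always_approve))
```

Denying unknown tools by default matters here: a compromised or updated server that advertises a new tool gains nothing until someone classifies it.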

The competitive and industry context

This integration is part of a broader industry push to make agents actionable and enterprise‑safe. MCP’s rapid adoption by platform vendors is turning it into a practical integration fabric for agentic workflows, and providers like CData aim to monetize their connector expertise by offering hosted MCP runtimes with semantic modelling and operational features. But the vendor landscape remains early and fragmented: some organisations will prefer to self‑host an MCP server to retain maximum control; others will trade that control for speed and scale via managed services. Independent reporting highlights Microsoft’s strategy of treating MCP as a standard integration point across Copilot and Windows surfaces, which increases the value of broad connector libraries for enterprises seeking rapid agent deployment.

Conclusion

CData’s Connect AI appearing as a managed MCP provider inside Microsoft Copilot Studio and Agent 365 is a meaningful step toward practical, production‑grade agents in enterprises: it bundles connector breadth, semantic modeling, and governance hooks into a discoverable MCP toolset that Copilot authors can easily register and use. For organisations that have struggled with bespoke adapters, brittle RAG patterns and the governance burden of agentic automation, this integration offers a faster on‑ramp to pilot and, if validated, production scenarios. At the same time, the integration transfers significant responsibility to IT and security teams. Connector counts vary across vendor pages, third‑party egress raises compliance questions, and the proprietary semantic models that power the value proposition create migration and supply‑chain considerations. The prudent path is a measured one: pilot with representative data, validate enforcement semantics and logging, quantify cost and token benefits, and harden AgentOps before broadening scope. When those steps are followed, agents with semantic, live access to enterprise systems can finally shift from promising prototypes into controlled, auditable automation that materially reduces operational friction.