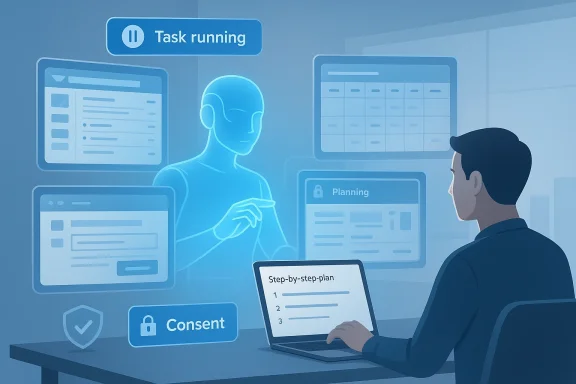

Microsoft’s Copilot has moved decisively from being a conversational assistant to behaving like a personal, background worker: the company’s Copilot Tasks launch — introduced as a research preview on February 26, 2026 — promises AI that not only advises but executes, running its own browser and computer to complete multi‑step tasks on your behalf while keeping you in the loop.

Background / Overview

Microsoft announced Copilot Tasks on February 26, 2026, framing it as the next chapter in Copilot’s evolution: “from answers to actions.” The feature is initially available as a limited research preview for a small number of testers, with a public waitlist open for early access. Microsoft’s own description emphasizes that Tasks will operate across apps and services, from email and calendars to web pages and attachments, and is intended for everyday, personal use as much as work-focused workflows.

Independent technology outlets and product observers corroborated Microsoft’s timeline and core claims in the days following the announcement, describing Copilot Tasks as part of a broader industry shift toward agentic AI: systems that plan and act over time rather than replying to one-off prompts. Several outlets also framed the launch as Microsoft’s strategic bet on leveraging deep Microsoft 365 and Windows integration to offer a consumer-friendly, yet powerful, agent experience.

This article summarizes what Copilot Tasks claims to do, verifies the central technical and policy points Microsoft announced, evaluates the practical benefits, and critically examines the security, privacy, reliability, and legal risks this agentic turn brings. It also offers concrete guidance for consumers, IT leaders, and security teams on how to pilot the technology safely.

What Copilot Tasks says it can do

Microsoft’s Copilot team and public coverage outlined a clear set of everyday scenarios where Copilot Tasks can operate autonomously, including:

- Recurring inbox management: surface urgent emails each evening, draft responses, and unsubscribe from promotional lists you never open.

- Apartment and job search automation: monitor new rental or job listings, compile options that match your criteria, and schedule viewings or interviews.

- Meeting and travel briefings: compile pre‑meeting briefings, summarize travel plans, and analyze how your time allocation aligns with priorities.

- Document generation and conversion: transform a syllabus into a study plan with practice tests; convert emails and attachments into a slide deck.

- Shopping, services, and appointments: organize events (invitations, RSVPs), compare tradespeople and book one, analyze used car listings and schedule test drives.

- Logistics and price monitoring: book rides timed to flights and auto‑adjust when delays occur; watch hotel rates and rebook if prices fall.

- Subscription housekeeping: identify unused subscriptions and cancel them on your behalf.

How Copilot Tasks works — verified technical points

Microsoft’s official blog post (Feb 26, 2026) is the primary source for the technical description. What we can verify from Microsoft’s messaging and independent reporting:

- Copilot Tasks runs as an autonomous agent that can plan and execute multi‑step tasks without continuous prompts from the user. It can be scheduled, recurring, or one‑off.

- It uses a browser‑based execution environment and can interact with web pages, third‑party services, and apps — essentially automating steps a human would perform in a browser.

- The feature is rolling out as a research preview to a small group first; Microsoft has opened a waitlist for broader testing. No general availability date or pricing was announced at the preview launch.

- Microsoft asserts that Copilot will pause and request explicit approval before taking “meaningful” actions like spending money or sending messages on someone’s behalf, and that users can review, pause, or cancel tasks at any time.
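The consent gate in the last point can be pictured with a small sketch. This is not Microsoft's implementation, which has not been published; the `ActionKind` values, the `NEEDS_APPROVAL` set, and the `run_task` function are all hypothetical names chosen for illustration. The idea is only that low-risk steps run unattended while steps that spend money or send messages block on an explicit yes or no.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List


class ActionKind(Enum):
    READ_PAGE = "read_page"
    DRAFT_EMAIL = "draft_email"
    SEND_MESSAGE = "send_message"
    SPEND_MONEY = "spend_money"


# Assumption: which kinds count as "meaningful" is a guess based on the two
# examples Microsoft gave (spending money and sending messages).
NEEDS_APPROVAL = {ActionKind.SEND_MESSAGE, ActionKind.SPEND_MONEY}


@dataclass(frozen=True)
class Step:
    kind: ActionKind
    description: str


def run_task(steps: List[Step], approve: Callable[[Step], bool]) -> List[str]:
    """Run steps in order, pausing for approval before meaningful actions.

    A declined step cancels the rest of the task, so later steps never run
    on top of an action the user rejected.
    """
    log: List[str] = []
    for step in steps:
        if step.kind in NEEDS_APPROVAL and not approve(step):
            log.append(f"cancelled at: {step.description}")
            break
        log.append(f"done: {step.description}")
    return log


plan = [
    Step(ActionKind.READ_PAGE, "read hotel listing"),
    Step(ActionKind.SPEND_MONEY, "book room"),
    Step(ActionKind.DRAFT_EMAIL, "draft confirmation note"),
]
# The user declines the booking, so the task halts before the final step.
print(run_task(plan, approve=lambda step: False))
```

In a real product the `approve` callback would be a notification or dashboard prompt rather than a function argument; the sketch only shows where the pause sits in the control flow.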

Important verification notes and limitations

- Microsoft’s blog and subsequent reporting confirm the preview start date and the core behavioral claims (background execution, consent gates, dashboard controls).

- What remains unverified from public materials is the full technical architecture (for example, whether agent browsing uses ephemeral credentials, how third‑party sites with anti‑bot protections are handled, or the exact sandboxing and credential isolation mechanisms). Those internal implementation details were not disclosed and will need to be assessed once Microsoft publishes technical controls or documentation for enterprise customers.

The practical upside: why Copilot Tasks matters

Copilot Tasks transforms a helper into a worker. That shift yields several concrete advantages for both consumers and organizations.

1) Real time savings for routine, multistep chores

Many useful tasks take many small steps: scouring listings, comparing options, filling forms, and coordinating schedules. Automating those steps yields high leverage. For consumers, this can mean fewer hours spent on shopping, job hunting, or admin; for knowledge workers, Copilot Tasks can triage email, prepare briefing packages, and assemble deliverables that previously required many manual actions.

2) Natural language as a universal interface

Because Copilot Tasks accepts plain‑English goals and orchestrates subtasks, non‑technical users gain access to automation without building flows, macros, or integrations. That lowers the activation energy for automation across a broad user base.

3) Deep Microsoft ecosystem advantage

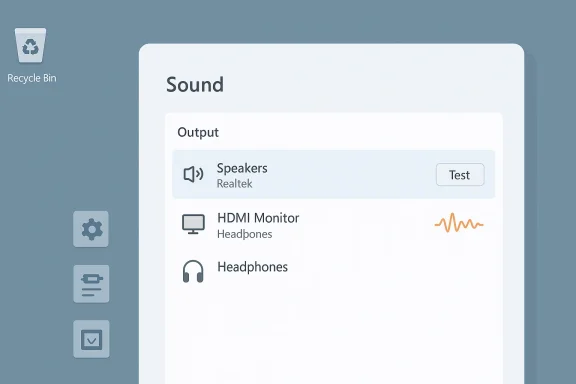

Microsoft’s integration into Outlook, Teams, Windows, and Microsoft 365 gives Copilot a privileged view of calendars, emails, and files that other consumer agents (which lack those enterprise hooks) do not. That enables contextual, workplace‑grade action that can be far more useful than a generic web‑only agent.

4) A clear human‑in‑the‑loop model

The design claim that Copilot will ask for confirmation before sensitive operations preserves human oversight while allowing safe degrees of autonomy. If implemented correctly, this hybrid approach reduces risk while unlocking automation benefits.

The competitive landscape (briefly)

Microsoft’s Copilot Tasks joins a fast‑growing field of agentic AI offerings. Recent months have seen agent features from Anthropic (Claude Cowork), OpenAI (Agent Modes), Google’s Gemini agent work, and several emergent players offering desktop or browser automation. Microsoft’s differentiator is its combination of consumer reach (Windows, Edge) and enterprise data surface area (Microsoft 365), which could make Copilot Tasks the most broadly useful personal agent for users embedded in Microsoft’s ecosystem.

The hard realities: risks, failure modes, and governance gaps

Agentic AI executing actions on behalf of people introduces a new set of risks that are operational and legal, not just “AI hallucination” problems.

1) Security and account abuse

When an automated agent logs into third‑party sites, interacts with forms, or makes bookings, it either uses stored credentials, delegated APIs, or automates a browser session. Each option has tradeoffs:

- Stored credentials present a high‑value target for attackers if improperly protected.

- Browser automation that replays user sessions can trigger anti‑bot defenses and may leak tokens or session cookies if not properly isolated.

- Delegated APIs (OAuth) are safer but require third‑party integrations and explicit developer support.
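To make the contrast with stored credentials concrete, here is a minimal sketch of the two properties that make delegated access safer: a token carries only named scopes, and it expires quickly. It is an illustration of the idea, not an OAuth implementation and not a description of how Copilot Tasks handles credentials. The `DelegatedToken` type, the helper functions, and the scope strings are hypothetical.

```python
import secrets
import time
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class DelegatedToken:
    value: str                # opaque random string, never the user's password
    scopes: FrozenSet[str]    # the only operations this token may perform
    expires_at: float         # seconds since the epoch


def issue_token(scopes: Iterable[str], ttl_seconds: float = 300.0,
                now: Optional[float] = None) -> DelegatedToken:
    """Issue a token limited to the given scopes and a short lifetime."""
    issued = time.time() if now is None else now
    return DelegatedToken(secrets.token_urlsafe(32), frozenset(scopes),
                          issued + ttl_seconds)


def is_allowed(token: DelegatedToken, required_scope: str,
               now: Optional[float] = None) -> bool:
    """Allow an operation only if the token is unexpired and holds the scope."""
    current = time.time() if now is None else now
    return current < token.expires_at and required_scope in token.scopes


# Hypothetical scope names; `now` is passed explicitly to keep the example
# deterministic.
token = issue_token({"calendar.read"}, ttl_seconds=300, now=1000.0)
print(is_allowed(token, "calendar.read", now=1100.0))  # within lifetime, in scope
print(is_allowed(token, "mail.send", now=1100.0))      # scope was never granted
print(is_allowed(token, "calendar.read", now=1300.0))  # lifetime has elapsed
```

A leaked token of this kind is worth far less to an attacker than a stored password: it cannot send mail, and it stops working after five minutes.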

2) Privacy leakage and over‑reach

Agents that browse the web and aggregate personal information may access sensitive data in emails, calendars, or documents. Even with permissions, automated cross‑context searches risk revealing more than intended, for example by surfacing a private negotiation in a compiled briefing or including personal identifiers when contacting third parties.

3) Reliability and brittleness of web automation

Many of the use cases hinge on robust, reliable interactions with non‑API web pages (booking forms, listings, contact forms). Web pages change frequently; small front‑end changes can break an agent’s workflow. That brittleness creates risk for user expectations (e.g., a scheduled booking fails silently or a deposit is not captured correctly).

4) Terms of Service and legal exposure

Automated access to websites can violate terms of service. Booking platforms, classified sites, and service providers often prohibit bots or require explicit API usage. If Copilot Tasks accesses services in ways that contravene provider policies, users or Microsoft could be exposed to liability or blocked service.

5) Fraud, impersonation, and payment errors

Even with a consent step, automating communications that impersonate a user (email drafting and sending) or execute payments introduces potential for fraud, mistakes, or disputes. For example, a merchant may treat agent‑placed bookings differently than human bookings, or a payment reversal could create disputes that are hard to trace.

6) Governance and auditability shortcomings

Enterprises will demand full audit trails, role‑based controls, and the ability to limit agent scope. If Copilot Tasks lacks robust admin controls, conditional access integration, or granular logging, organizations may block its use, even if individuals want it.

Safety and security controls Microsoft should (and in many cases said it would) implement

Microsoft’s announcement emphasizes consent and the ability to pause tasks; however, operationalizing safety requires deeper controls. Below are the essential technical and policy controls that must be present for wide adoption.

- Implement least privilege OAuth flows for third‑party services with short‑lived tokens. Avoid storing raw credentials where possible and use delegated access.

- Enforce ephemeral browsing sandboxes where agent sessions cannot access persistent credentials or unrelated OS resources. Sandboxes should scrub cookies and restrict cross‑origin data leakage.

- Provide strong audit logging that records each automated step, network call, and decision point, with tamper‑evident integrity and exportable logs for compliance reviews.

- Enable admin governance for enterprise tenants: policy templates, allowed/disallowed tasks, sensitivity label awareness, and per‑user whitelisting.

- Require two‑step confirmation for high‑risk actions (payments, contract signings), ideally involving multi‑factor authentication bound to the user rather than a simple in‑agent prompt.

- Offer explainability checkpoints in long workflows so users can review the plan before execution and intervene at clear breakpoints.

- Build rate limits and respectful scraping policies to avoid violating third‑party sites’ terms and overwhelming services. Where possible, prefer API integrations or partnerships.

- Provide clear user education and in‑product indicators showing when Copilot is acting autonomously, what data it used, and how to revoke access.
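Of the controls above, "tamper‑evident" audit logging is the one most easily shown in code. A common way to get that property is a hash chain, where each entry's hash covers the previous entry's hash, so editing any past record breaks every link after it. The sketch below uses only Python's standard `hashlib` and `json` modules; the entry layout and function names are assumptions for illustration, not a format Microsoft has described.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: Dict[str, str]) -> str:
    # sort_keys gives a stable serialization so the same event always
    # produces the same hash.
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append_entry(log: List[dict], event: Dict[str, str]) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _digest(prev_hash, event)})


def verify(log: List[dict]) -> bool:
    """Recompute every link; any edited or reordered entry fails the check."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: List[dict] = []
append_entry(audit_log, {"step": "open listing page", "task": "hotel-watch"})
append_entry(audit_log, {"step": "request approval", "task": "hotel-watch"})
print(verify(audit_log))

audit_log[0]["event"]["step"] = "something else"  # simulate tampering
print(verify(audit_log))
```

A chain like this only detects edits; someone who can rewrite the whole log can also recompute every hash. That is why the latest hash is usually anchored somewhere the agent cannot write to, such as an external log store.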

Practical guidance for consumers and early adopters

If you’re curious and on the waitlist, here’s a safe approach to explore Copilot Tasks without exposing yourself or your organization to undue risk.

- Start with low‑risk automation: use Tasks for activity monitoring, price watching, or compiling non‑sensitive summaries rather than bookings or payments.

- Use separate accounts where possible: create a dedicated consumer email or account for agent interactions (especially for shopping or bookings) so agent activity is isolated from primary work or banking accounts.

- Inspect the task plan before execution: if Copilot presents a multi‑step plan, pause and examine the exact steps it intends to take.

- Use credit cards with strong fraud protection and avoid saving primary financial instruments to automated agents until you’ve validated behavior.

- Regularly review the Tasks Dashboard and audit logs (if available): ensure you can pause, cancel, and see what the agent did.

- Watch for unexpected communications from third parties after agent interactions (booking confirmations, purchase receipts) and reconcile them immediately.

Practical checklist for IT leaders and security teams

For organizations evaluating Copilot Tasks for pilot programs, policy and governance must be proactive. Consider the following checklist:

- Define allowed use cases for the pilot and ban financial transactions or external bookings until controls are validated.

- Require conditional access policies and MFA for any account the agent might touch.

- Ensure sensitivity labels and data loss prevention (DLP) policies apply to agent‑generated artifacts and the agent’s ability to access sensitive sources is limited.

- Demand exportable audit logs and clear procedures for incident response that include agent activity.

- Negotiate vendor commitments for data residency, breach notifications, and support for integration with enterprise SIEM/SOAR tools.

- Train help desks to recognize agent‑initiated anomalies (duplicate bookings, erroneous emails) and to remediate them quickly.

- Conduct red‑team exercises simulating agent misuse (e.g., unauthorized bookings, credential misuse) to prove controls work in practice.
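The first checklist item, defining allowed use cases and banning others, amounts to a deny‑by‑default policy. A minimal sketch of that rule follows. The `PilotPolicy` class and the category names are hypothetical; no admin policy format for Copilot Tasks has been published.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class PilotPolicy:
    allowed: FrozenSet[str]
    banned: FrozenSet[str]

    def permits(self, category: str) -> bool:
        # The ban list always wins, and anything not explicitly allowed
        # is denied, so new task types start out blocked.
        if category in self.banned:
            return False
        return category in self.allowed


# Hypothetical task categories for an initial pilot.
PILOT = PilotPolicy(
    allowed=frozenset({"email_triage", "meeting_briefing", "price_watch"}),
    banned=frozenset({"payment", "external_booking"}),
)

print(PILOT.permits("email_triage"))         # explicitly allowed
print(PILOT.permits("payment"))              # explicitly banned
print(PILOT.permits("subscription_cancel"))  # unlisted, so denied by default
```

Denying unlisted categories matters more than the ban list itself: it means a new agent capability cannot reach pilot users until someone has reviewed it.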

Legal and compliance considerations

Agentic automation touches contract law, consumer protection, and third‑party terms. Legal teams should consider:

- Whether agent actions constitute the user’s binding signature for services and purchases. Establish clear consent evidence, ideally multi‑factor verification for binding commitments.

- Whether automated access to third‑party services violates terms of service or scraping policies. Where possible, favor sanctioned API use or partner agreements.

- Privacy regulation implications (GDPR, CCPA, etc.) if the agent processes or shares personal data on behalf of the user. Ensure data processing agreements and DPIAs (Data Protection Impact Assessments) are updated.

- Product liability and consumer protection risks if the agent makes errors — who bears responsibility for an incorrect booking or financial loss?

UX and human factors: why human‑in‑the‑loop really matters

Copilot’s claim that it “asks for confirmation before meaningful actions” is central to user trust. But how and when that confirmation occurs is everything.

- Instant, detailed checkpoints protect users but add friction. Microsoft must balance autonomy and control by allowing users to set their preferred risk tolerance (e.g., fully automated price rebooking under $X, but manual confirmation for >$X).

- Clear UI indicators when the agent is acting — visible banners, persistent dashboard cards, and contextual logs — prevent confusion and help users spot unauthorized activity quickly.

- Undo and reversal flows must be simple and reliable. When an agent books a hotel or unsubscribes an email, a one‑click undo reduces the fear of automated mistakes.
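The risk‑tolerance idea in the first point (automatic under $X, manual above it) reduces to a per‑category spending limit. The sketch below shows one conservative way to write that rule. The function name, category labels, and limits are assumptions for illustration; Microsoft has not said whether such a setting will exist.

```python
from decimal import Decimal
from typing import Dict


def needs_confirmation(category: str, amount: Decimal,
                       auto_limits: Dict[str, Decimal]) -> bool:
    """Return True when the user must confirm the action by hand.

    Categories with no configured limit always require confirmation, and an
    amount exactly at the limit is treated as needing confirmation, so every
    ambiguous case falls on the cautious side.
    """
    limit = auto_limits.get(category)
    if limit is None:
        return True
    return amount >= limit


# Hypothetical user setting: rebook hotels automatically below 50.
limits = {"hotel_rebooking": Decimal("50")}

print(needs_confirmation("hotel_rebooking", Decimal("49.99"), limits))
print(needs_confirmation("hotel_rebooking", Decimal("50"), limits))
print(needs_confirmation("new_purchase", Decimal("1"), limits))
```

`Decimal` is used instead of `float` so that currency comparisons near the limit are exact.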

What Microsoft and the broader industry must prove next

The preview launch is a necessary proof of concept, but three things will determine whether agentic assistants become mainstream:

- Reliability at scale: agents must robustly handle the chaotic real world of changing websites, CAPTCHAs, and incomplete data. Frequent breakage will kill adoption.

- Safety by design: consent models, tokenization, and enterprise governance must be demonstrably airtight. Organizations will not accept weak controls for staff agents that touch corporate data.

- Commercial clarity: Microsoft must clarify availability, pricing, and supported regions; lack of clarity stalls enterprise procurement and consumer subscriptions.

Final assessment: bold idea, correct direction, guarded optimism

Copilot Tasks is a natural and ambitious next step for digital assistants. Moving from chat to actions addresses a painful human problem, the tedium of boring, repetitive multi‑step tasks, and gives Microsoft an opportunity to embed Copilot more deeply into everyday workflows.

The strengths are obvious: powerful automation for everyday problems, natural language access, and the competitive advantage of Microsoft’s ecosystem. But the risks are equally real: security, privacy, legal exposure, brittle web automation, and the governance implications of allowing software to act on people’s behalf.

If Microsoft follows through on the consent‑first promises, publishes the underlying safety and isolation mechanisms, and provides robust enterprise governance and auditability, Copilot Tasks could become a transformative personal agent. If it does not, the technology risks creating an operational headache and regulatory scrutiny that could delay adoption.

For early adopters: experiment cautiously, prioritize low‑risk scenarios, and insist on transparent logs and revocation mechanisms. For Microsoft: demonstrate in technical detail how credentials, sandboxes, and audits work; partner with key platforms to reduce brittle web automation; and offer simple guardrails so users and organizations can adopt agentic AI with confidence.

The era of agents has clearly begun. Copilot Tasks is Microsoft’s audition to be the agent millions trust on their desktops and phones — but trust is earned by proving safety, reliability, and accountability in the messy reality of everyday digital life.

Conclusion

Copilot Tasks is a pivotal moment for consumer and productivity AI: it makes good on the promise that AI should stop being merely conversational and begin to do. The preview release is the right first step — inviting real users to test the experience — but meaningful adoption will require Microsoft to back headline claims with concrete, auditable technical controls and enterprise governance. If Microsoft can deliver that combination of usefulness and accountability, Copilot Tasks could redefine what people expect from personal computing: not just answers, but trusted execution.

Source: Cloud Wars Microsoft Copilot Tasks: Microsoft Pushes Copilot from Chatbot to Personal AI Agent