Decagon’s pitch is simple but consequential: replace brittle, one-size-fits-all chatbots with an always-on, concierge-grade AI agent that reasons across systems, executes workflows, and learns from every interaction — and do it in a way that business teams can own. The company’s Agent Operating Procedures (AOPs), multi‑model runtime, and Azure‑backed fine‑tuning have helped clients push deflection and resolution metrics that would once have seemed aspirational in contact center operations, while materially lowering support costs. That combination—natural‑language agent programming, enterprise orchestration, and cloud‑grade model management—is what Decagon and its Microsoft partnership now sell as the future of customer experience.

Background

Decagon emerged from stealth with a tight, pragmatic brief: solve the legacy problems of customer support automation that persist even after the chatbot boom, namely slow deployments, fragile rule trees, and poor visibility into why an automated answer was chosen. The company was founded by Jesse Zhang (CEO) and Ashwin Sreenivas (CTO) and traces its public rise through a series of funding rounds and customer case studies that emphasize measurable business outcomes, such as higher deflection, better CSAT, and materially reduced cost-per-conversation. Independent coverage and company filings show an early funding round in 2024 and follow-on capital as Decagon scaled commercial deployments.

The core problem Decagon addresses is well known to enterprise CX leaders: customers expect fast, accurate, and empathetic help across chat, email, and voice, but legacy automation struggles with nuance, and bespoke engineering pipelines are slow and expensive. Decagon’s response is to treat agents as first-class, programmable products: human-readable operating procedures that compile into reliable, auditable agent logic. That shift reframes customer service from a cost center into a delivery channel that can scale with brand expectations.

What Decagon Builds: Agent Operating Procedures and the Agent Stack

AOPs: Natural‑language rules that compile to code

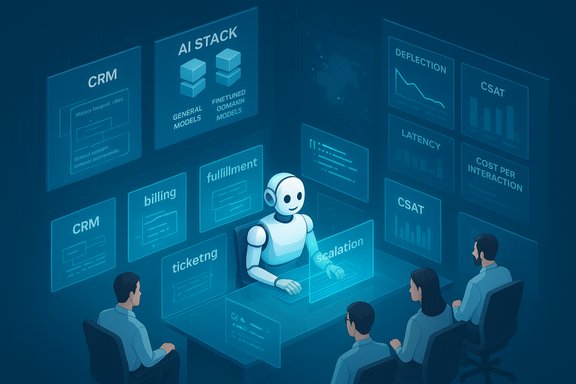

Decagon’s headline innovation is Agent Operating Procedures (AOPs), a hybrid authoring model where CX teams write what the agent should do in plain language while the platform compiles those instructions into structured, executable logic. The idea mirrors human SOPs (standard operating procedures) but is engineered for machine execution with transparent guardrails. AOPs enable non-technical teams to design workflows, while engineers retain the ability to extend and secure behavior through code, integrating with CRM, billing, fulfillment, ticketing, and escalation workflows.

Key practical benefits of AOPs:

- Faster time-to-value: business teams prototype and iterate without waiting for engineering sprints.

- Transparency: human‑readable logic reduces the “black box” problem common to many generative systems.

- Safety: critical operations (refunds, identity verification) remain implemented as enforceable code-level checks.
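The split between plain-language procedures and enforceable code-level checks can be pictured with a minimal sketch. Everything in it is hypothetical: `RefundRequest`, `REFUND_LIMIT_USD`, and `guard_refund` are illustrative names rather than Decagon's API, and the limit value is invented for the example.

```python
from dataclasses import dataclass

# Hypothetical policy limit; a real deployment would load this from configuration.
REFUND_LIMIT_USD = 100.0


@dataclass(frozen=True)
class RefundRequest:
    amount_usd: float
    identity_verified: bool


def guard_refund(request: RefundRequest) -> str:
    """Return 'approve' or 'escalate' using deterministic checks only.

    The conversational layer may propose a refund, but this function,
    not the language model, decides whether it goes through.
    """
    if not request.identity_verified:
        return "escalate"
    if request.amount_usd <= 0 or request.amount_usd > REFUND_LIMIT_USD:
        return "escalate"
    return "approve"
```

The design point is that the check is ordinary code that can be reviewed, tested, and versioned independently of whatever the plain-language procedure says.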

Multi‑model orchestration and hybrid model hosting

Decagon runs a multi-model AI stack to balance cost, latency, and capability. The platform can orchestrate off-the-shelf models for general conversational skills and deploy fine-tuned variants when domain accuracy or policy alignment is required. That orchestration happens at runtime: models are chosen based on task profile, user locale, and latency needs. Importantly, Decagon hosts models across regions to meet performance and data residency requirements, an architecture that favors low latency and high availability for global enterprises.

Azure’s role: fine-tuning, deployment, and observability

Microsoft Azure and Azure AI Foundry play a central operational role in Decagon’s stack. Azure provides:

- Model fine-tuning pipelines used to align general-purpose models with company policy and domain data.

- Regional deployment points to minimize latency and comply with data residency.

- Production controls for rolling updates, monitoring, and safe rollback during model experiments.
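Runtime model selection across regional deployment points might look roughly like the sketch below. It is an assumption-laden illustration, not Decagon's or Azure's routing logic: the `Deployment` fields, the `choose_deployment` function, and the selection rule (stay in the user's region, respect a latency budget, use a fine-tuned variant only when domain accuracy is needed) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Deployment:
    name: str                # hypothetical deployment label
    region: str              # e.g. "us" or "eu"
    fine_tuned: bool         # True for a domain-tuned variant
    typical_latency_ms: int  # assumed to come from monitoring


def choose_deployment(
    deployments: Sequence[Deployment],
    user_region: str,
    needs_domain_accuracy: bool,
    latency_budget_ms: int,
) -> Optional[Deployment]:
    """Pick a deployment, or return None if nothing satisfies the constraints."""
    candidates = [
        d
        for d in deployments
        if d.region == user_region                      # data residency
        and d.typical_latency_ms <= latency_budget_ms   # latency budget
        and (d.fine_tuned or not needs_domain_accuracy)
    ]
    if not candidates:
        return None
    # Prefer the variant type that matches the task, then the lowest latency.
    return min(
        candidates,
        key=lambda d: (d.fine_tuned != needs_domain_accuracy, d.typical_latency_ms),
    )
```

Returning `None` rather than falling back to another region keeps the residency constraint strict; a caller would treat that case as a signal to escalate or queue the request.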

Measuring Success: Offline and Online Metrics

Decagon combines traditional model evaluation metrics with business outcome tracking to ensure agents work in production:

- Offline model checks: token-level evaluation, F1, accuracy, and human-annotated preference labels to screen candidate model versions.

- Online monitoring: resolution rate, deflection rate, latency, escalation frequency, end‑user satisfaction (CSAT), and cost per interaction.

- Closed feedback loop: production signals feed back into the training and AOP refinement processes so agents improve with real usage.
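As a rough illustration of the two metric families above, the sketch below computes one offline metric (F1) and one online metric (deflection rate). The deflection definition used here, the share of conversations that never reach a human, is an assumption; vendors and buyers define it differently, and the source does not give Decagon's formula.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Harmonic mean of precision and recall; 0.0 when there are no true positives."""
    if true_positives == 0:
        return 0.0
    precision = true_positives / (true_positives + false_positives)
    recall = true_positives / (true_positives + false_negatives)
    return 2 * precision * recall / (precision + recall)


def deflection_rate(total_conversations: int, escalated_to_human: int) -> float:
    """Share of conversations handled without a human handoff (assumed definition)."""
    if total_conversations == 0:
        return 0.0
    return (total_conversations - escalated_to_human) / total_conversations
```

Fixing definitions like these in code before a pilot starts makes pre/post comparisons easier to audit.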

Real‑World Outcomes: What Clients Report

Company case studies and independent reporting present striking operational improvements for early Decagon deployments. The most quoted outcomes include:

- Duolingo’s Duolingo English Test team reported ≈80% chat deflection after switching to Decagon’s agents, allowing human teams to focus on high-touch cases. Decagon’s published case study and company materials highlight rapid deployment and hourly knowledge syncs as levers for that performance.

- ClassPass deployed Decagon’s agents and—by ClassPass’s own estimate reported in The Information—reached parity with human CSAT while reducing the cost per support conversation by roughly 95%, driven by high automation and staged human audits. The Information’s coverage confirms Decagon as the vendor behind that deployment and quotes ClassPass leadership on the cost and quality outcomes.

- Oura and other customers have publicly credited Decagon with significant CSAT improvements; company materials claim threefold increases in some engagements. These are presented as customer-reported outcomes in Decagon collateral and press coverage. Independent corroboration of the specific percentages is sparse, so the figures should be treated as company- and customer-reported results rather than independently audited statistics.

- Broader portfolio: Bilt, Chime, Hertz, Eventbrite, Notion and other brands appear across case studies and press coverage as early adopters or pilots that cite cost and resolution gains. Independent reporting and corporate press releases reinforce the pattern of sizable operational benefits tied to agentic automation.

Funding, Growth, and Market Position

Decagon moved quickly from early seed and Series A rounds in 2024 into larger growth capital. Reuters reported an early $35M raise in mid-2024 that positioned the company to scale product and go-to-market operations. Subsequent rounds and press coverage indicate further capital infusion as the business scaled deployments and recruited enterprise customers. Decagon’s own announcements and coverage in technology press describe larger late-stage rounds that reflect investor appetite for the category. This funding trajectory enabled product development (AOPs, testing and analytics) and a regional hosting footprint required for enterprise clients.

Strengths: Why Decagon’s Approach Resonates

- Business‑first authoring: AOPs let CX teams shape behavior directly without an engineering bottleneck. That reduces iteration time and puts product teams in control of the agent’s personality and policy enforcement.

- Hybrid governance: The platform preserves engineering controls for sensitive actions (refunds, billing changes), combining natural‑language development with enforceable code paths—an attractive compromise for risk‑averse enterprises.

- Production focus: Multi‑model orchestration and Azure‑backed fine‑tuning provide a pragmatic path from prototype to production with regional deployment options for latency and compliance. The Azure partnership reduces operational friction for deploying fine‑tuned models at scale.

- Measurable business impact: High deflection rates and cost reductions—when realized—translate directly into measurable OPEX savings and capacity reallocation for higher‑value work. Independent reporting (e.g., The Information, Reuters) validates several high‑profile customer outcomes.

Risks, Limitations, and Operational Caveats

Despite attractive outcomes, the agentic model brings nontrivial operational and governance challenges enterprises must confront.

- Hallucinations & accuracy drift: Generative components can still invent facts. Enterprises must design strong retrieval-based grounding, constrained outputs for critical actions, and human-in-the-loop checks for low-confidence cases. Decagon’s testing and monitoring help, but the risk persists when models generalize beyond training distributions.

- Hidden TCO drivers: Token and model‑usage costs, orchestration overhead, connector maintenance, and the human effort needed for ongoing QA and retraining can produce meaningful operational expense. Consumption‑based agent actions require careful rate limiting and observability. Azure hosting mitigates some infrastructure complexity, but usage economics still matter.

- Governance and compliance: Cross‑border deployments impose data residency, retention, and privacy requirements. Enterprises must define data flows, DLP, and audit trails before delegating actions to agents. Decagon’s enterprise controls and Azure’s regional deployments address many concerns but do not eliminate the need for contractual and technical safeguards.

- Vendor lock‑in and escape hatches: The promise of natural‑language governance is appealing, but tightly coupling organization logic to vendor‑specific compile/execution semantics can hinder portability. Exportable agent definitions, version control, and multi‑cloud strategies are necessary guardrails for risk‑aware buyers.

- Metrics ambiguity: Reported outcomes often originate from vendor case studies. Independent audits are rare, and reported percentages (deflection, CSAT multipliers, cost reductions) depend heavily on baseline definitions and ticket mix. The most robust validated claims will come from longitudinal, independently measured results—enterprises should insist on pre/post pilots with clearly defined KPIs.
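The rate limiting mentioned under hidden TCO drivers is commonly implemented as a token bucket. The sketch below is a generic version of that technique, not anything specific to Decagon or Azure; the class name, parameters, and injectable clock are illustrative choices.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(
        self,
        capacity: float,
        refill_per_second: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock  # injectable so the limiter can be tested deterministically
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available; otherwise reject the action."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the elapsed time, never exceeding capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        if cost <= self._tokens:
            self._tokens -= cost
            return True
        return False
```

Setting `cost` proportional to an action's expected model usage is one way to tie the limiter to consumption rather than to raw request counts.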

Technical Best Practices for Enterprise Adoption

- Start with high-volume, low-risk workflows: prioritize FAQs, billing inquiries, and simple account requests where automation will have outsized impact and limited regulatory exposure.

- Design human-in-the-loop escalation thresholds: implement confidence and policy checks that route ambiguous or high-impact requests to human agents with full context.

- Establish continuous evaluation: use both offline metrics (precision, recall, annotated comparisons) and online business KPIs (CSAT, time-to-resolution, ticket transfers) to validate changes before full rollout.

- Instrument visibility and audit trails: ensure every agent action is logged, versioned, and auditable for compliance, dispute resolution, and model governance.

- Guard against “agent creep”: limit autonomous actions with economic exposure (refunds, billing changes) until a model matures across multiple audited datasets.

- Negotiate contractual protections: secure data portability clauses, exportable agent definitions, and performance SLAs tied to measurable KPIs when contracting with an agent platform vendor.
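Two of the practices above, escalation thresholds and audit trails, can be combined in a few lines. This is a minimal sketch under stated assumptions: the threshold value, the `ProposedAction` fields, and the in-memory list standing in for an audit store are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from typing import List

# Actions with economic exposure, taken from the examples in the list above.
HIGH_IMPACT_ACTIONS = {"refund", "billing_change"}
# Assumed cutoff; a real threshold would be tuned against audited data.
CONFIDENCE_THRESHOLD = 0.85


@dataclass(frozen=True)
class ProposedAction:
    conversation_id: str
    action: str
    confidence: float
    aop_version: str  # which procedure version produced the proposal


def route(proposal: ProposedAction, audit_log: List[str]) -> str:
    """Decide whether an action runs automatically, and record the decision."""
    if proposal.action in HIGH_IMPACT_ACTIONS:
        decision = "human_review"
    elif proposal.confidence < CONFIDENCE_THRESHOLD:
        decision = "human_review"
    else:
        decision = "auto_execute"
    # Every decision is logged with its inputs and the procedure version.
    record = {**asdict(proposal), "decision": decision}
    audit_log.append(json.dumps(record, sort_keys=True))
    return decision
```

Logging the procedure version alongside each decision is what makes a later dispute or audit traceable to a specific set of rules.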

Competitive Landscape and Where Decagon Fits

The market for agentic customer experience is crowded and fast-moving. Large platform vendors (Microsoft, Salesforce, Zendesk) and specialized startups (Decagon, Forethought, PolyAI, others) are all racing to combine reasoning, tool use, and enterprise integration.

Decagon’s differentiator is its AOP authoring model plus a platform designed for production agent orchestration. That focus makes it attractive to enterprises that want to move quickly without surrendering governance. Microsoft’s Agent Framework, Azure AI Foundry, and Copilot Studio provide alternative patterns for customers already committed to the Microsoft stack; Decagon’s close Azure integration can be an advantage or a point of overlap depending on a buyer’s strategic cloud alignment.

Critical Assessment: Where the Narrative Is Strong — and Where Caution Is Warranted

Strengths to lean into:

- Operational pragmatism. Decagon answers the practical issues that stall many AI pilots (speed of iteration, transparency, and multi-channel orchestration) rather than promising purely speculative capabilities.

- Measured outcomes. Several client stories, including the ClassPass report in The Information, provide independent confirmation that agentic systems can hit both cost and quality targets when properly instrumented.

- Enterprise readiness through Azure. Using Azure for fine‑tuning and regional hosting aligns with enterprise expectations for compliance, performance, and controlled deployment.

Where caution is warranted:

- Attribution and variability. Not all client outcomes are equally verifiable. Many performance numbers are vendor-published case studies; buyers should demand transparent pilot metrics with baseline comparisons.

- Operational lift. Achieving and sustaining high deflection and CSAT requires ongoing human governance, iterative training, and tooling investment—automation is a continuous program, not a one‑time project.

- Economic exposure. While per‑conversation cost drops can be dramatic on paper, tokenized model consumption, multi‑model orchestration, and connector maintenance can erode marginal economics unless closely managed.

Practical Checklist for CX Leaders Considering Agentic Platforms

- Define the pilot scope: pick 2–3 high‑volume, low‑risk ticket types and set clear KPIs (deflection, CSAT delta, cost per interaction).

- Require pre/post data audits: ensure vendor commits to independent measurement and baseline export.

- Confirm governance controls: identity verification, refund workflows, and audit logs must be non‑bypassable.

- Validate portability: store agent definitions in versioned repositories and insist on export tools.

- Model economics: simulate TCO across scenarios (growth in interactions, model upgrades, multi‑region hosting).

- Build a reskilling plan: reassign human agents to escalation and quality roles and train them in agent design and auditing.
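The "model economics" item can start as a very small simulation. The cost model below is an assumption made for illustration (every conversation incurs an AI cost, escalated ones also incur a human cost, plus a fixed platform fee), and all figures in the usage note are invented inputs, not reported Decagon numbers.

```python
def monthly_cost(
    conversations: int,
    deflection_rate: float,
    ai_cost_per_conversation: float,
    human_cost_per_conversation: float,
    fixed_platform_cost: float,
) -> float:
    """Estimate monthly support cost under a simple, assumed cost model."""
    escalated = conversations * (1.0 - deflection_rate)
    return (
        fixed_platform_cost
        + conversations * ai_cost_per_conversation  # every conversation hits the agent first
        + escalated * human_cost_per_conversation   # escalations also need a human
    )


def scenario_table(volumes, **cost_inputs):
    """Return (volume, cost) pairs so growth scenarios can be compared side by side."""
    return [(v, monthly_cost(v, **cost_inputs)) for v in volumes]
```

For example, `scenario_table([10_000, 20_000], deflection_rate=0.8, ai_cost_per_conversation=0.5, human_cost_per_conversation=5.0, fixed_platform_cost=2_000.0)` compares two volume scenarios; rerunning it with a lower deflection rate shows how sensitive the savings are to that single assumption.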

Conclusion

Decagon’s offering crystallizes a broader industry shift from static chatbots to agentic AI: systems that plan, act, and integrate across business systems while remaining auditable and manageable. Agent Operating Procedures give business teams a pragmatic way to author agent behavior, and Azure’s fine-tuning and regional capabilities provide the production infrastructure enterprises require. Independent reporting, most notably The Information’s ClassPass coverage and Reuters’ funding coverage, confirms that when implemented with discipline, agentic systems can both improve user satisfaction and cut support costs.

However, the path to durable value is not automatic. Success depends on rigorous evaluation, clear governance, careful economic modeling, and continued human oversight. For organizations that treat agentic AI as a structured program, prioritizing measurable pilots, observability, and human-in-the-loop controls, the promise is tangible: a 24/7 concierge that not only reduces costs but also elevates the customer experience.

For buyers, the immediate questions are pragmatic: which workflows to automate first, how to measure honestly, and how to tie agent actions to business outcomes without ceding control. Decagon’s approach provides a coherent recipe for those decisions; the market will decide whether natural-language agent orchestration becomes the new standard for customer experience or one option among many in a complex, multi-vendor future.

Source: Microsoft Decagon: Building the AI concierge for modern customer experience - Microsoft for Startups Blog