EPAM’s win of Microsoft’s 2025 Innovate with Azure AI Platform Partner of the Year award marks a significant validation of the systems‑integrator model for enterprise generative AI: the company’s entry — built on Azure AI Foundry and realized in a production deployment for Dutch grocer Albert Heijn — highlights a maturing market where platform alignment, repeatable engineering accelerators, and auditable governance are the currency of large‑scale AI adoption.

Microsoft’s Partner of the Year awards recognize partners that deliver measurable outcomes using Microsoft Cloud and AI technologies. The 2025 cycle prioritized partners demonstrating enterprise‑grade lifecycle management, multi‑modal model work, agentic systems and robust safety/observability — the precise competencies the Innovate with Azure AI Platform category is designed to spotlight. EPAM’s press announcement explicitly frames the winning submission around a GenAI platform for Albert Heijn that powers an employee‑facing virtual assistant integrated into the retailer’s staff app to surface product and stock information, automate task flows, and speed onboarding. The win is meaningful on three levels for enterprise IT leaders:

For enterprise buyers the implication is straightforward: winning the shortlisting game requires both the technical foundation (Azure AI Foundry, secure connectors, vector retrieval) and the operational artifacts (telemetry, runbooks, SLAs, portability). EPAM’s win signals capability and co‑sell motion access; it does not obviate the need for careful procurement verification. When awards, platform features and partner delivery discipline converge — and when buyers insist on auditable evidence in contracts — the combination can produce the measurable, scalable value Microsoft’s 2025 Partner of the Year program sought to highlight.

Source: The AI Journal EPAM Wins the 2025 Microsoft Innovate with Azure AI Platform Partner of the Year Award | The AI Journal

Background and overview

Background and overview

Microsoft’s Partner of the Year awards recognize partners that deliver measurable outcomes using Microsoft Cloud and AI technologies. The 2025 cycle prioritized partners demonstrating enterprise‑grade lifecycle management, multi‑modal model work, agentic systems and robust safety/observability — the precise competencies the Innovate with Azure AI Platform category is designed to spotlight. EPAM’s press announcement explicitly frames the winning submission around a GenAI platform for Albert Heijn that powers an employee‑facing virtual assistant integrated into the retailer’s staff app to surface product and stock information, automate task flows, and speed onboarding. The win is meaningful on three levels for enterprise IT leaders:- It signals platform‑native capability: winners are judged on how well they integrate with Azure’s stack (Azure AI Foundry, Copilot tooling, Fabric, Entra/Azure AD).

- It signals operational governance: judges emphasize observability, safety tooling, and model lifecycle practices needed for audits and compliance.

- It signals repeatability: Microsoft looks for partners who can show real customer impact beyond pilots, and EPAM presented an operational retail scenario as the evidence.

What EPAM announced — the essentials

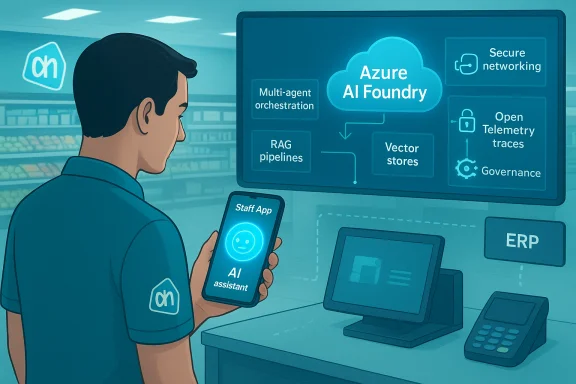

EPAM’s public statement (November 13, 2025) says the company won the Innovate with Azure AI Platform award for a solution created with Microsoft and Albert Heijn. The entry showcased:- A scalable GenAI platform built on Azure AI Foundry and EPAM’s internal accelerators (EPAM AI/RUN™, DIALX Lab).

- An employee virtual assistant inside Albert Heijn’s staff app that answers product and stock queries, simplifies restocking, helps onboarding, and reduces time to find authoritative product information.

- Engineering and SDLC speedups enabled by GitHub Copilot and automation in EPAM’s delivery pipeline.

Azure AI Foundry: the platform facts (verified)

Azure’s product documentation and feature pages make explicit the capabilities EPAM claims to have used:- Azure AI Foundry is described as “the AI application and agent factory” — a unified platform for model cataloguing, agent orchestration, RAG, fine‑tuning, observability and safety tooling intended for enterprise production workloads. It supports prebuilt and third‑party models, multi‑agent orchestration, and project‑level governance.

- Foundry Agent Service offers enterprise features such as BYOS (bring‑your‑own‑storage), private networking to prevent public egress, on‑behalf‑of authentication, OpenTelemetry tracing, and multi‑agent orchestration capabilities distinct from low‑code Copilot Studio. These are the primitives enterprise deployers need to keep sensitive retail and HR data under control.

- Foundry’s model catalog, built‑in evaluations and observability dashboards underpin the “auditability” narrative Microsoft promoted in 2025 for partner awards. The platform encourages CI/CD workflows, prompt and model registries, and automated evaluations that feed into governance workflows.

Reconstructing the likely architecture EPAM built

EPAM’s announcement leaves some technical specifics high‑level, but the combination of vendor copy and typical Foundry patterns allows a reasonable reconstruction of the architecture delivered to Albert Heijn:- Authoritative backend connectors securely expose POS, ERP, inventory and catalog data into a governed ingestion pipeline (Azure Data Factory / Databricks feeding Microsoft Fabric / OneLake).

- Document and metadata ingestion pipelines create indexed vectors (Azure AI Search / vector index) used by RAG patterns to ground model outputs in authoritative sources.

- Model hosting and inference use Azure AI Foundry model catalog with hosted deployments (Azure OpenAI or other Foundry‑catalog models) and controlled orchestration through Copilot Studio or Foundry Agent Service.

- Agent orchestration composes prompts, interprets user intent, and issues API calls for stock checks, product lookups or task creation; observability logs actions, provenance and moderation flags for audits.

Strengths EPAM brings to enterprise Azure AI projects

- Engineering scale and delivery maturity

EPAM’s global delivery footprint and long track record in enterprise engineering make it likely to productize ingestion pipelines, test harnesses, and RBAC patterns needed for multi‑region retail rollouts. This is a practical advantage when scaling assistants across hundreds or thousands of stores. - Platform alignment and co‑sell traction

Deep alignment with Microsoft’s roadmap (Foundry, Copilot Studio, Fabric, Entra) reduces integration friction and unlocks co‑sell and field support channels — a real commercial advantage for large enterprise sourcing decisions. EPAM’s elevation to Microsoft Global Systems Integrator (GSI) status amplifies those channels. - Responsible‑AI, governance focus

The award category itself emphasizes safety, observability and lifecycle controls; EPAM’s submission claims engineering investments in these areas. For regulated or high‑volume retail operations, those controls are a necessity, not an optional extra. - Verticalized IP and accelerators

EPAM’s internal accelerators (EPAM AI/RUN™, DIALX Lab) and SDLC automation via GitHub Copilot accelerate delivery and create repeatable patterns for ingestion, indexing, RBAC and red‑team testing — exactly the type of repeatable IP Microsoft rewards.

Practical risks and open questions buyers must verify

EPAM’s award and the Albert Heijn case provide credible market signals, but several operational facts are not public and must be contractually validated before procurement:- Missing per‑metric evidence

The press materials do not publish exact KPI improvements (for example, percentage reduction in restocking time, query latency percentiles, or hallucination incident rates). Those operational metrics should be treated as vendor claims to be validated with telemetry extracts and named references. - Inference economics and FinOps exposure

Agentic systems have recurring costs: model inference, vector store storage and search, and data egress can compound rapidly at retail scale. Buyers must insist on realistic cost forecasting, throttles, and cost‑per‑query modelling. Independent industry analysis shows fine‑tuning and RAG can be cost‑efficient alternatives to large‑scale fine‑tuning, but only when queries and retrieval architectures are optimized. - Hallucination management, red‑teaming and provenance

RAG reduces hallucinations but does not eliminate them. Buyers should require provenance linking every assistant answer to an authoritative source, automated regression tests for prompt flows, and documented red‑team results with remediation histories. - Data governance, residency and contractual protections

Retailers handle employee and supply‑chain data that may be sensitive; confirm data residency, retention policies, and rights around telemetry and model training. Ask for explicit DPA clauses, export formats, and exit runbooks to avoid lock‑in. - Vendor lock and portability risk from accelerators

Proprietary accelerators speed time‑to‑value but can increase lock‑in. Negotiate rights to exported indexes, ETL scripts, and a documented migration path to another cloud or provider.

A procurement checklist for IT leaders (practical steps)

- Request two named, contactable operational references with deployments of comparable scale and compliance requirements.

- Insist on telemetry extracts showing latency percentiles, hallucination incidents, token usage, and monthly inference costs for a 3–6 month window.

- Pilot in a bounded, high‑value scenario (store assistant or IT helpdesk) with measurable KPIs: task completion time, error rate, and escalation volume.

- Require contractual SLAs for incident response, rollback runbooks, data export timelines, and indemnities for data misuse.

- Verify governance artifacts: model evaluation reports, drift detection thresholds, moderation rules, and audit logs.

- Validate FinOps controls: tagging policies, budget alerts, throttles, and cost‑per‑query forecasts.

Technical guidance for Windows and Azure administrators

Identity, access and endpoints

- Use Microsoft Entra / Azure AD managed identities for agent service accounts and enforce least‑privilege roles. Avoid app credentials in clear text and prefer conditional access policies for tenant flows.

Observability and telemetry

- Centralize logs and traces with Azure Monitor and OpenTelemetry. Capture prompt flow tracing, model call latencies, and provenance metadata for every agent response to enable auditability and root‑cause analysis. Foundry’s observability features are designed for this; require concrete dashboards and alerting as part of runbooks.

Network and data controls

- Use private endpoints, VNet integration and BYOS (bring‑your‑own‑storage) where possible to prevent uncontrolled egress. Confirm that agent connectivity to POS/ERP systems uses managed identities, keyless flows or short‑lived credentials.

Testing and red‑teaming

- Implement a structured red‑team program: adversarial prompts, injection tests, and scenario‑based regression suites. Pair that with continuous A/B testing of model versions and automated evaluation against labeled test sets.

Cost control (FinOps)

- Tag all resources, set billing alerts, and require partners to provide projected cost curves for expected query volumes. Bench test typical user flows to estimate monthly inference and index costs before scaling.

Why the award matters in the broader ecosystem

EPAM’s win is part of a broader market pattern in 2025: hyperscalers increasingly reward partners who can take agentic systems from prototype to production with repeatable governance. That shapes procurement expectations: teams buying GenAI projects will prioritize partners who demonstrate shipping artifacts (exportable indexes, runbooks, telemetry) and enterprise controls rather than glamorous demos alone. EPAM’s elevated GSI status with Microsoft and this award will likely accelerate co‑sell introductions and field engagement, amplifying EPAM’s pipeline for large enterprise retail and consumer deals. At the same time, awards accelerate shortlists — they do not remove the need for robust procurement discipline. The practical truth remains: scale and safety are earned in operational detail, not press copy.What remains unverifiable in the public record (flagged)

- Exact operational KPIs claimed for Albert Heijn (percent reduction in restocking time, resolution latencies, or percentage of queries resolved without escalation) are not published in the press materials. These are customer‑specific telemetry facts that must be verified through references and contract artifacts. Treat such figures as vendor claims until validated.

- Specific SLA terms, incident histories and rollback cadence for the Albert Heijn deployment are not in the public announcement and should be requested during procurement.

Strategic takeaways for enterprises and WindowsForum readers

- Treat Microsoft Partner of the Year awards as an effective shortlisting filter: they identify platform‑aligned vendors with repeatable IP, but they are not a substitute for due diligence.

- Prioritize pilots that are low risk and high value (employee assistants, helpdesk copilots, store operations) to prove economic and safety hypotheses before broad rollouts.

- Require exportable artifacts, runbooks and named references. If portability is important, demand the right to exported indexes, transformation scripts, and documented migration paths.

- Invest in governance and FinOps up front: model lifecycle, provenance, cost‑controllability and red‑team testing are essential operational capabilities, not optional extras.

Conclusion

EPAM’s Innovate with Azure AI Platform Partner of the Year award is a credible market milestone: it reflects Microsoft’s current judging priorities — platform‑native engineering on Azure AI Foundry, auditable governance, and repeatable business outcomes. The Albert Heijn project, as presented, is a textbook retail use case for agentic GenAI: high frequency of deterministic knowledge queries, clear KPIs, and mobile, point‑of‑work utility.For enterprise buyers the implication is straightforward: winning the shortlisting game requires both the technical foundation (Azure AI Foundry, secure connectors, vector retrieval) and the operational artifacts (telemetry, runbooks, SLAs, portability). EPAM’s win signals capability and co‑sell motion access; it does not obviate the need for careful procurement verification. When awards, platform features and partner delivery discipline converge — and when buyers insist on auditable evidence in contracts — the combination can produce the measurable, scalable value Microsoft’s 2025 Partner of the Year program sought to highlight.

Source: The AI Journal EPAM Wins the 2025 Microsoft Innovate with Azure AI Platform Partner of the Year Award | The AI Journal