Microsoft’s demonstration of an on‑device, agentic AI that can literally click, type and navigate your PC marks one of the clearest previews yet of what “assistant as actor” will look like on Windows — and it raises more practical and policy questions than purely technical ones. Fara‑7B, a 7‑billion‑parameter Computer Use Agent (CUA) released as an experimental, open‑weight research model, runs by visually perceiving the screen, predicting pixel‑level mouse and keyboard actions, and pausing at predefined “Critical Points” for human approval. The release promises lower latency and better local privacy than cloud‑dependent assistants, but it also amplifies attack surface, governance and usability concerns that administrators and enthusiasts must treat as first‑class problems.

Source: PCMag Microsoft's New On-Device AI Model Can Control Your PC

Background / Overview

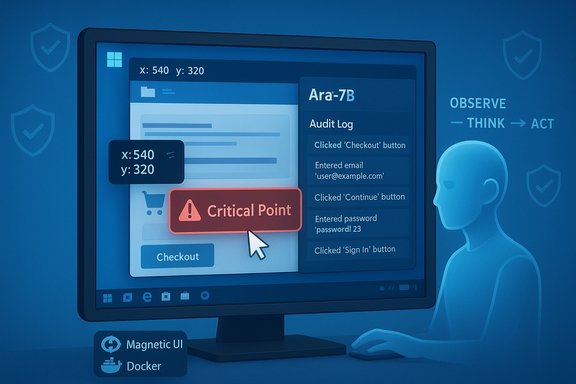

Microsoft Research and Azure AI teams published Fara‑7B and accompanying tooling as an experimental step toward on‑device agentic automation. The model is described as an agentic small language model (SLM) specifically trained to act inside desktop environments: ingesting screenshots plus textual goals, reasoning over long contexts, and emitting "observe → think → act" steps that map to Playwright‑style mouse and keyboard actions. Microsoft frames the design as a pragmatic compromise: compact enough to run locally while trained to handle multi‑step web tasks such as shopping, searching, summarization and basic transaction flows. Key public details established by Microsoft and visible in the published model card and blog:

- Parameters: 7 billion.

- Context window: Support for very long contexts (Microsoft cites up to 128k tokens).

- Mode of perception: Pixel‑level screenshots (no reliance on accessibility trees or DOM parsing).

- Distribution: Open‑weight release on Microsoft Foundry and Hugging Face under permissive terms, with quantized/silicon‑optimized variants for Copilot+ PCs.

What Fara‑7B actually does — technical snapshot

Multimodal, action‑oriented output

Fara‑7B is a multimodal, decoder‑only CUA that accepts:

- A textual user goal (the intent),

- One or more screenshots of the visible desktop or browser window,

- A history of previous agent thoughts and actions.
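The three inputs above, together with the "observe → think → act" loop described earlier, can be sketched as a small data structure and driver. This is a minimal illustration and not Microsoft's implementation: `Step`, `AgentInput`, `run_episode`, the action names and the stub policy are all hypothetical, and the stub stands in for the real model.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    thought: str                      # the agent's reasoning for this step
    action: str                       # hypothetical names: "click", "type", "stop"
    args: dict = field(default_factory=dict)


@dataclass
class AgentInput:
    goal: str                         # textual user goal (the intent)
    screenshots: List[bytes]          # raw image bytes of the visible window
    history: List[Step]               # previous agent thoughts and actions


def run_episode(
    goal: str,
    capture: Callable[[], bytes],
    policy: Callable[[AgentInput], Step],
    execute: Callable[[Step], None],
    max_steps: int = 20,
) -> List[Step]:
    """Observe, think, act until the policy emits "stop" or the step budget runs out."""
    history: List[Step] = []
    for _ in range(max_steps):
        observation = AgentInput(goal, [capture()], list(history))  # observe
        step = policy(observation)                                  # think
        history.append(step)
        if step.action == "stop":
            break
        execute(step)                                               # act
    return history


def stub_policy(obs: AgentInput) -> Step:
    # Stand-in for the model: click twice, then stop.
    if len(obs.history) < 2:
        return Step("click the search box", "click", {"x": 100, "y": 40})
    return Step("goal reached", "stop")


trace = run_episode("find a laptop", lambda: b"", stub_policy, lambda step: None)
print([s.action for s in trace])  # ['click', 'click', 'stop']
```

Passing `capture`, `policy` and `execute` as callables keeps the loop testable without a browser or a model, which is also how a sandboxed harness would swap in real components.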

Training and data pipeline

Microsoft built Fara‑7B on a distilled backbone (Qwen2.5‑VL‑7B) and trained it with a synthetic trajectory generator (FaraGen) which orchestrated multi‑agent interactions to generate large volumes of verified, multi‑step web tasks at scale. The idea: teach the model how humans solve tasks by showing many successful interaction traces and filtering them through verifiers. Microsoft reports impressive throughput and cost efficiencies in the data pipeline, an important reason the company believes small, efficient agentic models can catch up to larger, prompt‑heavy multi‑model systems.

Runtime and sandboxing

Microsoft bundles Magentic‑UI (their human‑centered sandbox) and Playwright‑style tool interfaces so researchers can run, observe and audit agent steps in a reproducible Docker environment. Actions and logs are instrumented; Microsoft points to “Critical Points” where the agent is expected to halt and request human confirmation for sensitive operations such as checkouts or logins. Quantized, hardware‑optimized builds are provided for Copilot+ PCs with dedicated NPUs.

Benchmarks, performance claims and independent context

Microsoft publicizes a set of task benchmarks (WebVoyager, Online‑Mind2Web, DeepShop, and a new WebTailBench) and claims that Fara‑7B achieves state‑of‑the‑art results within its size class and is competitive with larger, multi‑model agent systems, for example outperforming GPT‑4o when the latter is configured as a “Set‑of‑Marks” agent in some benchmarks. Microsoft’s published table reports Fara‑7B with higher task success rates on specific sets, and claims significant reductions in average steps per task (≈16 steps vs ≈41 for some comparators), improving efficiency.

Caveats and verification notes:

- These are vendor‑supplied benchmarks run on Microsoft datasets and evaluation harnesses; metric selection, dataset composition and prompt engineering materially affect outcomes. Independent, community benchmarking is necessary to validate generalization to real‑world, adversarial websites.

- Reports of performance parity or superiority should be interpreted as “promising and qualified” rather than definitive: benchmark choice and task distributions can favor specific design decisions (pixel grounding vs DOM parsing).
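To put the reported step counts in perspective, the relative reduction can be computed directly. The sketch below uses only the approximate figures quoted above (about 41 steps for some comparators versus about 16 for Fara‑7B); the function name is illustrative.

```python
def relative_reduction(baseline: float, improved: float) -> float:
    """Fraction by which `improved` is smaller than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - improved) / baseline


# Approximate averages cited in the vendor-reported results.
print(f"{relative_reduction(41, 16):.0%}")  # 61%
```

A reduction of roughly 61% in steps is a claim about efficiency only; it says nothing about whether the shorter trajectories succeed more often, which is why it should be read alongside the success rates.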

The demos and user experience

Microsoft published three demo scenarios showing Fara‑7B:

- Completing an online purchase (stopping at Critical Points for user confirmation),

- Searching and summarizing web information,

- Using mapping services to compute distances and identify points of interest.

Two practical caveats apply to these demos:

- The agent is fragile on dynamic or unusual layouts; pixel coordinate prediction works best on stable, predictable UIs.

- Critical Point enforcement reduces risk but depends on correct detection and conservative definitions: missed critical points equal real‑world exposure. Microsoft explicitly recommends sandboxed testing and avoiding production credentials during experiments.
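Because a missed Critical Point translates directly into exposure, a harness can add its own conservative check on top of whatever the model decides. The following is a minimal sketch under stated assumptions: the keyword list, `is_critical` and `gated_execute` are hypothetical and are not part of Fara‑7B or Magentic‑UI, and a keyword heuristic is only a second line of defense.

```python
from typing import Callable

# Hypothetical, deliberately broad keyword list; false positives are acceptable here.
SENSITIVE_KEYWORDS = (
    "checkout", "pay", "purchase", "place order",
    "login", "log in", "sign in", "password",
)


def is_critical(action: str, target_text: str) -> bool:
    """Flag click/type actions whose target text looks sensitive."""
    if action not in ("click", "type"):
        return False
    text = target_text.lower()
    return any(keyword in text for keyword in SENSITIVE_KEYWORDS)


def gated_execute(
    action: str,
    target_text: str,
    execute: Callable[[], None],
    approve: Callable[[str, str], bool],
) -> bool:
    """Run `execute` unless the action is critical and the human declines.

    Returns True if the action ran, False if it was blocked.
    """
    if is_critical(action, target_text) and not approve(action, target_text):
        return False
    execute()
    return True


# Example: a human reviewer who declines everything blocks the checkout click.
ran = gated_execute("click", "Proceed to Checkout", lambda: None, lambda a, t: False)
print(ran)  # False
```

The gate fails closed for anything it flags: the action runs only after an explicit approval, which matches the conservative definitions the caveat above calls for.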

How Fara‑7B differs from Copilot cloud agents

Microsoft’s Copilot assistant for Windows has already acted as an “agent” in a sense, via cloud‑backed reasoning that can perform actions when coupled with integrations, but there are key differences:

- Local vs cloud: Copilot’s fuller reasoning typically runs in cloud data centers; Fara‑7B is built to run on‑device or in locally‑provisioned sandboxes for low latency and improved local privacy.

- Data handling: Cloud Copilot variants may require telemetry or selective data collection for features; on‑device inference keeps screenshots and interaction traces local unless explicitly exported. That said, local storage and logs still create governance responsibilities for admins.

- Model architecture: Fara‑7B is a compact, purpose‑built CUA trained for pixel‑grounded actions. Copilot’s cloud variants pair larger models with tool chains and broader web connectivity, so they are often more capable on complex, open‑ended reasoning tasks, at the price of higher cost and latency.

File sizes, packaging and the “16.6GB” claim — verification

Some press reports and early coverage referenced a 16.6GB download package for Fara‑7B intended for Magentic‑UI use. That number appears in some summaries circulated with the initial reporting, but it requires careful qualification:

- Model package size varies by file format (safetensors, PyTorch), quantization method (4‑bit, 8‑bit), packaging of tokenizer/config files, and whether CPU/GPU/NPU optimizations are included. At 16‑bit precision, 7 billion parameters alone occupy about 14GB (2 bytes each), and additional components such as a vision encoder can push a full checkpoint into the mid‑teens (≈15–17GB), so a figure like 16.6GB is plausible for an unquantized download. 8‑bit and 4‑bit quantized variants are correspondingly smaller, roughly half and a quarter of that. The exact bytes depend on the variant you download, so treat any single “X GB” claim as implementation‑specific.
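The dependence of download size on precision is simple arithmetic: parameters times bits per parameter, divided by eight. The sketch below is a rough planning aid only; it counts raw weights and ignores tokenizer files, metadata and any extra components in a real checkpoint, and the function name is illustrative.

```python
def weight_size_gb(n_params: float, bits_per_param: float) -> float:
    """Approximate size of raw weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_size_gb(7e9, bits):.1f} GB")
# 16-bit: 14.0 GB
# 8-bit: 7.0 GB
# 4-bit: 3.5 GB
```

Actual files will be somewhat larger than these figures, which is one more reason to confirm the listed size of the specific variant before provisioning disk or memory.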

Privacy, security and governance — hard tradeoffs

Fara‑7B’s promise of on‑device intelligence reduces the need to ship screenshots or detailed interaction traces to cloud services, but it does not remove governance responsibilities. The arrival of agents that can control UIs introduces an enlarged endpoint attack surface that must be addressed at multiple layers:

- Agent identity and isolation: Microsoft’s preview architecture for agentic features in Windows includes Agent Workspace and per‑agent accounts intended to run agents in isolated sessions with auditable logs. Treat these agent accounts like service principals requiring ACLs, signing and revocation.

- Critical Points and human‑in‑the‑loop controls: These are necessary but not sufficient. Agents must reliably detect all irreversible steps; failures to stop or misclassification can lead to unwanted purchases, data leakage or forgery. Microsoft’s tooling logs every action and includes refusal behavior, but real‑world robustness must be stress‑tested.

- Prompt injection and adversarial UIs: Pixel‑based action prediction avoids DOM‑level defenses but remains vulnerable to visual spoofing, layout manipulation and covert UI elements. Enterprises must plan DLP, MDM and SIEM rules that incorporate agent logs and allow rapid revocation of agent privileges.

- Open‑weight release tradeoffs: Microsoft made Fara‑7B available under an MIT license to lower barriers for research and auditing. That transparency is a strength for defenders and researchers, but also makes it easier for malicious actors to study the model to find jailbreaks or ways to bypass Critical Points. The release choices increase both scrutiny and risk simultaneously.

Enterprise and OEM implications

Copilot+ hardware (devices with NPUs rated by Microsoft for on‑device workloads) and the broader Windows agent platform create a two‑tier ecosystem:

- For IT buyers: There’s now a decision calculus between existing devices and Copilot+ upgrades that materially improve on‑device AI latency and capability. Microsoft’s published guidance suggests Copilot+ NPUs target 40+ TOPS for richer experiences; OEMs and procurement teams must validate vendor claims with real‑world tests.

- For security teams: Agentic capabilities should be treated as new principals in identity and access management, with revocation mechanisms, signed agent binaries, and SIEM integrations. Existing DLP rules may need expansion to include agent‑initiated file movements and UI actions.

- For OEMs and developers: Standardizing performance benchmarks and exposing consistent NPU runtimes and model acceleration stacks (ONNX, DirectML, vendor SDKs) will be critical so developers can target predictable behavior across devices. Microsoft’s Foundry/Hugging Face distribution aims to ease onboarding, but fragmentation in quantization and runtime remains an obstacle.

How to test Fara‑7B safely — a practical checklist

- Run Fara‑7B only in a fully isolated VM or the official Magentic‑UI Docker sandbox; do not use production accounts or personal credentials.

- Start with read‑only tasks (search & summarize) and require explicit confirmations at every Critical Point.

- Instrument and centralize logs; capture full action traces and screenshots for auditing.

- Use Azure AI Content Safety and programmatic filters to screen outputs before any automated action is executed.

- Engage red‑team exercises aimed at adversarial prompts, UI spoofing and Critical Point bypass attempts before any production rollout.
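For the logging item in the checklist above, one way to make action traces tamper‑evident before shipping them to a central store is to chain each entry to the hash of the previous one. This is a generic sketch using only the Python standard library; the record fields and function names are assumptions and do not reflect the log format of Magentic‑UI or any Microsoft tool.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, action: str, detail: dict) -> dict:
    """Append an action record linked to the previous record's hash."""
    body = {
        "seq": len(log),
        "action": action,
        "detail": detail,
        "prev": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Return True only if every entry is intact and correctly chained."""
    prev = GENESIS
    for index, entry in enumerate(log):
        body = {key: entry[key] for key in ("seq", "action", "detail", "prev")}
        if entry["seq"] != index or entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True


log = []
append_entry(log, "click", {"x": 100, "y": 40})
append_entry(log, "type", {"text": "laptop"})
print(verify(log))  # True
log[0]["detail"]["x"] = 999  # simulate tampering with an earlier record
print(verify(log))  # False
```

Hash chaining only detects edits; it does not prevent someone with write access from rebuilding the whole chain, so the latest hash should also be stored somewhere the agent host cannot modify.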

Strengths, risks and the balanced verdict

Strengths:

- Efficient, pragmatic design: Fara‑7B shows a compelling path in which large‑scale synthetic data generation is paired with a compact model to get practical, on‑device agentic behavior. This lowers cost, latency and cloud dependence for many routine workflows.

- Transparency and tooling: Open weights, Magentic‑UI sandboxes and a public model card accelerate external evaluation and ecosystem development.

- Operational safety primitives: Explicit Critical Points, refusal behaviors and auditable logs are built into the design rather than tacked on later.

Risks:

- Expanded attack surface: Agents that can click and type are new privileged principals on endpoints; they require careful IAM, DLP and runtime isolation to mitigate abuse.

- Fragility in the wild: Pixel‑grounded action prediction can fail on dynamic or adversarial UIs, producing incorrect or unsafe outcomes if critical checks fail.

- Open release tradeoffs: Open weights speed research but also make jailbreak paths visible to malicious actors; Microsoft’s red‑teaming is necessary but not sufficient.

What to watch next

- Community benchmarking and open audits of Fara‑7B’s behavior on diverse, adversarial websites. Vendor benchmarks are informative but incomplete; independent A/B testing will determine real‑world robustness.

- Hardening of Critical Point detection and more conservative default policies for enterprise deployments. Tools that allow admins to whitelist or blacklist agent targets will be essential.

- Clarification from Microsoft on packaging variants and final shipping sizes for NPU‑optimized builds; quantization and runtime choices will determine practical deployment cost and disk/VRAM requirements. Verify sizes on Hugging Face or Foundry before provisioning.

- Legal, regulatory and compliance guidance as on‑device agents interact with regulated data domains; expect enterprise customers to demand attestation and third‑party audits before production rollouts.
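The admin control mentioned in the list above, restricting which targets an agent may act on, can be sketched as a host allowlist checked before every navigation. This is an illustrative sketch, not an existing Windows or Magentic‑UI feature; `is_allowed` and the example hosts are hypothetical.

```python
from urllib.parse import urlsplit


def is_allowed(url: str, allowed_hosts: set) -> bool:
    """Permit only HTTPS URLs whose host is an allowed host or a subdomain of one."""
    parts = urlsplit(url)
    if parts.scheme != "https" or not parts.hostname:
        return False
    host = parts.hostname.lower()
    # Match on a dot boundary so "example.com.evil.test" does not pass as "example.com".
    return any(host == allowed or host.endswith("." + allowed) for allowed in allowed_hosts)


ALLOWED = {"example.com"}  # hypothetical policy set by an administrator
print(is_allowed("https://shop.example.com/cart", ALLOWED))   # True
print(is_allowed("https://example.com.evil.test/", ALLOWED))  # False
print(is_allowed("http://example.com/", ALLOWED))             # False
```

An allowlist that denies by default is the more conservative choice for agents, since a denylist cannot anticipate every spoofed or newly registered domain.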

Conclusion

Fara‑7B is a clear proof point that agentic computing can be compact and on‑device. Microsoft’s research demonstrates that careful synthetic trajectory generation, supervised distillation and rigorous sandboxing can produce a 7‑billion‑parameter model capable of perceiving a screen and executing multi‑step workflows. For Windows users and enterprises, that opens real productivity opportunities, and equally real governance responsibilities. The technical results are promising, the tooling is thoughtfully designed, and the open‑weight release invites rapid community scrutiny. The right next steps are disciplined: test in isolation, demand auditable controls, and treat agentic features as new privileged principals that need to be managed with the same rigor as service accounts and system drivers. The capability is arriving; the mandate now is to make sure it’s safe, accountable, and reliably useful before it’s given full control of everyday tasks.