The University of Georgia has launched a campus AI pilot program for students, marking the latest chapter in a nationwide push by colleges to move beyond blanket bans and toward guided, institution‑level adoption of generative AI tools — a shift that promises productivity and new learning pathways while raising urgent questions about privacy, pedagogy, and governance.

Background and overview

Colleges and universities across the United States are experimenting with how to integrate generative AI into everyday teaching, research, and student services. That experimentation ranges from small course‑level pilots to institution‑wide vendor partnerships that place advanced assistants at the center of campus productivity toolchains. One recent, large example saw a major university plan a broad roll‑out of Microsoft 365 Copilot for every student and staff member; that case has been widely discussed as a bellwether for what a full campus deployment looks like in practice, and it highlights the governance and technical work required to make such programs safe and educationally sound.

The announcement from the University of Georgia, reported in a student newsroom article excerpt supplied to this piece, positions UGA’s effort as a pilot, not an unbounded deployment. The Red & Black excerpt highlights student social dynamics and the rise of campus‑focused platforms such as Yik Yak as part of the context for introducing campus AI: colleges are places where digital community and real‑world learning meet, and administrators increasingly see AI literacy as part of the student experience. The description of Yik Yak in the excerpt underscores how campus technology often centers on peer conversation and anonymity; that social context matters when designing AI initiatives because students use digital tools in ways administrators may not anticipate.

Important caveat: the original Red & Black article was provided as an excerpt for this assignment. I was unable to independently fetch the full Red & Black page from the public site at the time of writing; where this article makes broader factual claims about other universities or technical best practices, those claims are verified against independent institutional reporting and operational recommendations from documented campus rollouts. Where UGA‑specific details could not be corroborated beyond the supplied excerpt, I flag those statements clearly.

Why universities are shifting from bans to pilots

The limits of prohibition

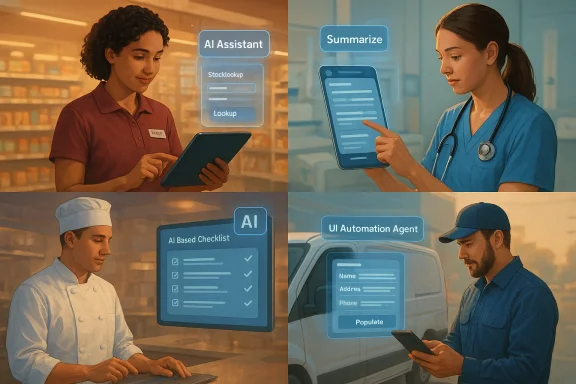

Banning generative AI outright is still a tempting policy for instructors who fear AI will simplify away learning objectives, but bans have proven brittle. Students will continue to access powerful models on personal devices, and instructors who ban tools may miss an opportunity to teach how to use them responsibly. Research from campus policy labs and event summaries shows three dominant approaches emerging nationwide: (1) guided integration that emphasizes AI literacy, (2) course‑level restrictions where mastering raw reasoning or formal methods is the learning goal, and (3) institution‑level governance experiments that pair training, tool access, and enforcement mechanisms. These frameworks aim to preserve pedagogical integrity while preparing students for workplaces where AI is already part of the toolkit.

Equity and access

Universities argue that structured pilots and enterprise engagements can be equity tools: they close an emerging “AI access divide” between students who can afford premium tools and those who cannot. The University of Manchester’s recent campus‑wide Copilot partnership, for instance, was explicitly framed as an equity measure alongside workforce readiness. The Manchester case shows why large, vendor‑backed programs are attractive to administrators: they can rapidly scale access while offering centralized governance and training. Those benefits come with trade‑offs, however.

What an effective AI pilot looks like

A pilot must be bounded, measurable, and tightly governed. Practical recommendations synthesized from campus rollouts and operational guidance include:

- Start small and measure. Bounded pilots should include clear metrics: adoption, accuracy/correction rates, DLP (data loss prevention) events, and user satisfaction. These allow institutions to decide whether broader rollout is justified.

- Co‑design policy with stakeholders. Acceptable‑use policies and guidance should be co‑authored with faculty, students, and academic integrity bodies so they reflect pedagogy rather than top‑down technology mandates.

- Harden technical controls first. Before scaling, ensure DLP, conditional access, and audit logging are in place to limit unauthorized data exfiltration and to preserve student privacy.

- Invest in short, scenario‑based training. Teach prompt design, verification, and citation practices: not just what models can do, but how to evaluate answers and document AI assistance.

- Report outcomes publicly. Publish pilot outcomes (adoption, incidents, energy use, student experience metrics) so others can learn and accountability is built into the process.

The technical and governance checklist — what UGA should consider

Data protection and privacy

- FERPA and consent. Student educational records are governed by privacy rules (e.g., FERPA in the United States). Any AI service that ingests student work must be vetted for how it stores, processes, and shares personally identifiable information. Institutions should demand contractual assurances covering data residency, retention, and deletion.

- DLP and conditional access. Implementing Data Loss Prevention and conditional access policies at the perimeter and application layer helps prevent sensitive student data from being inadvertently transmitted to third‑party models. A hardened stack should precede a campus‑wide license distribution.

- Audit trails and logging. Retain logs of AI interactions where possible so faculty, IT, and compliance teams can investigate suspected misuse or data incidents.
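
To make the DLP and audit-logging points above concrete, here is a minimal sketch of a pre-submission filter that redacts obvious identifiers from a prompt and records an audit entry. Everything in it is an assumption for illustration: the `PATTERNS` table, the `redact_prompt` function, and the in-memory `audit_log` are hypothetical and are not drawn from UGA's pilot or any vendor's product. A real deployment would rely on a dedicated DLP service with much broader detection.

```python
import re
from datetime import datetime, timezone

# Hypothetical patterns for illustration only; a production DLP policy
# would cover far more identifier types than these two.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "ssn_like": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# In-memory stand-in for a real, access-controlled log store.
audit_log = []


def redact_prompt(user_id, prompt):
    """Replace pattern matches with placeholders and record an audit entry."""
    findings = {}
    redacted = prompt
    for label, pattern in PATTERNS.items():
        redacted, count = pattern.subn(f"[{label.upper()} REDACTED]", redacted)
        if count:
            findings[label] = count
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "findings": findings,  # counts only; raw matches are never stored
    })
    return redacted


safe = redact_prompt(
    "student-001",
    "Email jane.doe@example.edu about ID 123-45-6789.",
)
print(safe)
```

The log entry stores only counts per pattern, not the matched text, so the audit trail does not itself become a second copy of the sensitive data.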

Transparency

- Model cards and vendor claims. Insist vendors provide model cards, training data provenance where feasible, and documentation on known limitations and bias assessments. Transparency underpins trust; without it, campus programs expose the institution to reputational and ethical risk.

- Human‑in‑the‑loop (HITL) requirements. For high‑stakes uses (assessment feedback, grading support, mental‑health triage), mandate human review and require systems that flag low‑confidence outputs.

Security and vendor risk

- Vendor dependency. Campus‑level deals with large cloud vendors simplify deployment but risk long‑term vendor lock‑in. Negotiate exit clauses, data export rights, and interoperability guarantees where possible. The Manchester example demonstrates both the scale achievable and the governance burden of such partnerships.

- Cost transparency. Large deployments carry direct licensing fees and hidden costs: IT integration, training, incident response, and environmental footprint. Track and disclose total costs.

Pedagogical implications: how to preserve learning while using AI

Redefine assignments and assessment

AI changes the kinds of assignments that reliably measure learning. Good practice includes:

- Redesign tasks to emphasize process, sources, and critical reasoning rather than single‑deliverable outputs.

- Require students to document AI assistance (what prompts were used, why, and how outputs were edited).

- Use oral exams, in‑class problem solving, and iterative assessments to verify underlying competence.
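
One way to make the documentation requirement above routine is a small structured record that students attach to a submission. The sketch below is a hypothetical schema, not a format any institution in this article is known to use; the field names and the `disclosure_statement` helper are assumptions chosen to mirror the three items named in the list (what prompts were used, why, and how outputs were edited).

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AIAssistanceRecord:
    """One disclosed use of an AI tool on an assignment (hypothetical schema)."""
    tool: str        # name of the assistant used
    prompt: str      # what the student asked
    purpose: str     # why the tool was used
    edits_made: str  # how the output was changed before submission


def disclosure_statement(records):
    """Serialize records as JSON so they can be attached to a submission."""
    return json.dumps([asdict(r) for r in records], indent=2)


records = [
    AIAssistanceRecord(
        tool="campus assistant",
        prompt="Suggest an outline for an essay on water policy.",
        purpose="Brainstorming structure",
        edits_made="Reordered sections and wrote all body text myself.",
    )
]
print(disclosure_statement(records))
```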

Faculty development and incentives

Faculty need both training and incentives. Short, scenario‑driven workshops that demonstrate effective prompt construction, verification techniques, and how to grade AI‑assisted work are high‑leverage investments. Institutions should also recognize the time faculty spend redesigning assessments in tenure and promotion considerations.

Student experience and campus culture

Balancing anonymity, community apps, and AI

The supplied Red & Black excerpt connects the social fabric of campus apps (the example given was Yik Yak) to student life. Anonymous campus platforms shape norms around sharing, feedback, and rumor flow, contexts where AI can both help and harm.

- AI‑powered moderation may reduce harassment and improve safety, but poorly tuned systems can silence marginalized voices or misclassify nuance.

- AI that integrates with community platforms must be transparent about moderation policies, appeals, and data retention — especially when anonymity is a design feature.

Support and accessibility

Pilots that bundle AI access with training and support can advance equity, but only if support is ubiquitous and easy to use. If licensing or technical integration creates friction (complex single‑sign‑on flows, additional authentication steps), the students who most need help may be excluded.

Risks and potential harms: a sober appraisal

- Academic integrity erosion. Left unchecked, AI can facilitate plagiarism and ghostwriting. This is an instructional design problem as much as a technology problem: the response must be assessment redesign, detection and documentation policies, and education on responsible use.

- Data leakage and legal exposure. Unvetted AI tools can leak student or faculty data, triggering regulatory and contractual liabilities.

- Bias and fairness. Models trained on large, heterogeneous corpora may produce outputs that embed societal biases; relying on those outputs in grading, advising, or admissions decisions is perilous.

- Environmental cost. Large model inference at campus scale consumes energy; institutions should measure and disclose energy use in pilot reporting.

- Vendor lock‑in and mission drift. Deeply embedding a single vendor’s assistant into teaching, research, and administration risks vendor dependency that may limit future autonomy and innovation. Transparent contracts and interoperability guarantees can mitigate but not eliminate this risk.

Case lessons from other campuses

The University of Manchester’s broad Copilot partnership shows both what’s possible and what to watch for: universal licences, in‑app Copilot features across productivity apps, and a stated emphasis on training accompanied the announcement. Manchester coupled universal access for tens of thousands of users with structured training and a commitment to partner with student and staff representative bodies. It is a model UGA and others can study, while recognizing contextual differences between institutions.

Other institutions are staging more conservative pilots: short, outcome‑driven trials with published metrics and incremental scaling. These governance‑first pilots typically require DLP, audit logging, and co‑authored policy before wider distribution. The operational recommendations from these rollouts are consistent: start small, measure, govern, and invest in training.

Practical recommendations for University of Georgia (and peer institutions)

If UGA intends to convert its pilot into a durable program, these pragmatic next steps will reduce risk and increase educational value:

- Define pilot scope and success metrics before deployment: adoption, incident rate, student satisfaction, time saved, and incidents per 10,000 interactions.

- Establish a cross‑functional AI steering committee with students, faculty, IT, legal, and privacy officers.

- Require vendor transparency: model documentation, data handling specifics, retention, and breach notification timelines.

- Implement DLP, conditional access, and audit logging as a prerequisite for any broad license distribution.

- Launch a mandatory short training module for pilot participants that covers prompt design, hallucination detection, citation, and ethical use.

- Redesign assessments in pilot courses to focus on process and reasoning rather than reproducible final drafts that AI can generate.

- Publish pilot outcomes publicly to build trust and enable peer learning.

- Plan for accessibility and low‑bandwidth alternatives to ensure equitable access.

- Negotiate exit and data export clauses in vendor contracts to prevent long‑term lock‑in.

- Build a small incident response team dedicated to AI incidents, combining IT, student conduct, and counseling resources.

How to measure success — realistic KPIs

- Adoption metrics: percentage of targeted students using the tool and frequency distribution of use.

- Accuracy / correction rate: how often students or staff must correct or override AI outputs.

- Academic integrity events: incidents per assessment type and remediation outcomes.

- Student satisfaction and learning impact: survey-based measures and performance differences in redesigned assessments.

- Security incidents and DLP events: counts and severity of any data leakage.

- Environmental cost: energy used per 1,000 interactions (where measurable).
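
The rate-based KPIs above reduce to simple ratios, and a short sketch may help show how they could be computed from raw pilot counts. The `PilotCounts` fields, the `pilot_kpis` function, and the sample numbers are all made up for illustration; they do not describe UGA's pilot or any real dataset.

```python
from dataclasses import dataclass


@dataclass
class PilotCounts:
    """Raw counts collected during a pilot (hypothetical field names)."""
    targeted_students: int
    active_students: int
    interactions: int
    corrected_outputs: int
    incidents: int
    energy_kwh: float


def rate_per(count, total, scale):
    """Return `count` per `scale` units of `total`, or None if total is zero."""
    if total == 0:
        return None
    return count * scale / total


def pilot_kpis(c):
    """Compute the ratio-style KPIs from raw counts."""
    return {
        "adoption_pct": rate_per(c.active_students, c.targeted_students, 100),
        "correction_pct": rate_per(c.corrected_outputs, c.interactions, 100),
        "incidents_per_10k": rate_per(c.incidents, c.interactions, 10_000),
        "kwh_per_1k": rate_per(c.energy_kwh, c.interactions, 1_000),
    }


# Made-up numbers purely to show the arithmetic.
example = PilotCounts(
    targeted_students=2_000,
    active_students=500,
    interactions=40_000,
    corrected_outputs=6_000,
    incidents=8,
    energy_kwh=20.0,
)
kpis = pilot_kpis(example)
print(kpis)
```

With these sample counts, 500 of 2,000 targeted students gives 25% adoption, and 8 incidents over 40,000 interactions gives 2 incidents per 10,000. Returning `None` for a zero denominator keeps an empty pilot from being reported as a perfect one.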

Conclusion

UGA’s pilot joins a wider, fast‑moving movement in higher education: institutions are recognizing that generative AI cannot simply be wished away or banned into irrelevance. Well‑designed pilots, those that pair technical controls with co‑created pedagogy, transparency, and public reporting, can turn a disruptive technology into an educational asset. But the path from pilot to campus‑wide adoption is littered with pitfalls: privacy gaps, academic integrity challenges, vendor dependency, and hidden costs.

The most important lesson from campus pilots elsewhere is unglamorous but crucial: governance, training, and measurement must be the foundation of any successful program. If UGA and its peers prioritize those elements, they can preserve the core mission of higher education while equipping students with the skills to use AI responsibly, not merely the capacity to rely on it.

Source: The Red & Black University of Georgia launches AI pilot program for students