Microsoft made Agent 365 generally available for commercial customers on May 1, 2026, positioning it as a Microsoft 365 control plane for discovering, governing, and securing AI agents across Microsoft, SaaS, endpoint, and multicloud environments. That framing sounds tidy, but the announcement is really an admission that agentic AI has already escaped the neat boundaries of the Copilot demo. Microsoft is not merely selling another admin console; it is trying to make itself the registry, policy broker, and security layer for a class of software that is multiplying faster than traditional IT governance can track. The bet is that enterprises will tolerate yet another paid Microsoft 365 layer if the alternative is unmanaged autonomous software with access to code, credentials, files, and business workflows.

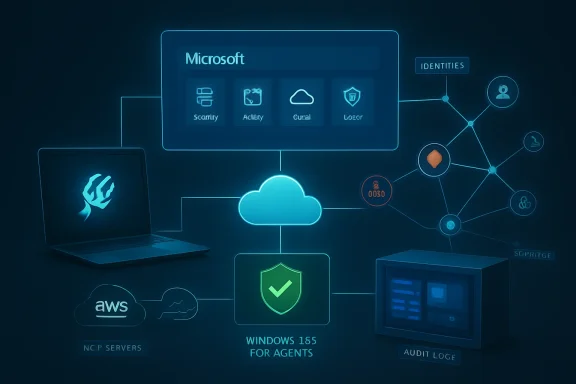

That is the reason Microsoft keeps tying Agent 365 to Defender, Intune, Entra, and Purview rather than presenting it as a standalone AI product. Agent security is not one control. It is a fabric of controls that only becomes persuasive when the signals reinforce each other.

For customers already wrestling with E5, Copilot, Security Copilot capacity, Entra add-ons, Defender components, Purview features, and Intune licensing, the arrival of another SKU may not inspire celebration. Microsoft’s bundling strategy often makes architectural sense and procurement headaches at the same time. The platform becomes more integrated as the invoice becomes harder to explain.

The fairness of the price will depend on adoption density. If an organization has a handful of Copilot Studio agents and a cautious AI program, Agent 365 may feel premature. If it has hundreds of departmental agents, local coding agents, SaaS agents, and cloud-built agents across multiple platforms, a control plane becomes easier to justify. The cost of not knowing what is running may exceed the cost of licensing the people responsible for it.

There is also a subtle organizational effect. By licensing users who manage, sponsor, or benefit from agents, Microsoft nudges companies to assign ownership. That may be useful. One of the worst patterns in enterprise automation is the ownerless workflow: built by someone who left, authenticated with stale credentials, touching systems nobody wants to break. Agent 365’s governance model implicitly says every agent needs a human accountable somewhere.

Still, buyers should watch for scope creep. Agent governance could easily become a dependency for serious Copilot adoption, then a prerequisite for acceptable audit posture, then a practical requirement for using more advanced Microsoft 365 AI features. Microsoft has a long history of turning operational maturity into a premium tier.

The essential issue is delegation. A human asks an agent to do something. The agent interprets the request, gathers context, invokes tools, and acts. Somewhere along that chain, policy must decide whether the action is allowed. Traditional access control answers whether an identity can reach a resource. Agent governance must ask whether this identity, acting through this agent, for this user or business process, using this tool, in this context, should perform this action now.

That is a much richer policy problem than most organizations are ready to manage. Least privilege is easy to endorse and difficult to operationalize even for human users. For agents, least privilege may need to be task-specific, time-bound, context-aware, and continuously reviewed. A support-ticket triage agent may need broad read access but narrow write access. A coding agent may need repository access but not production secrets. A finance agent may need spreadsheet access but not unrestricted email forwarding.
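To make the delegation question concrete, here is a minimal sketch of a task-specific, time-bound permission check. Everything in it is an assumption for illustration: the `Grant` and `ActionRequest` types, the tool names, and the resource paths are hypothetical and do not reflect how Agent 365 or Entra actually model policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    """Hypothetical task-scoped, time-bound permission for one agent."""
    agent_id: str
    on_behalf_of: str      # user or business process the agent acts for
    tool: str              # illustrative tool name, e.g. "repo.read"
    resource_prefix: str   # resources the grant covers
    expires_at: datetime


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str
    on_behalf_of: str
    tool: str
    resource: str
    at: datetime


def is_allowed(request: ActionRequest, grants: list[Grant]) -> bool:
    """Allow only if an unexpired grant matches agent, principal, tool, and resource."""
    return any(
        g.agent_id == request.agent_id
        and g.on_behalf_of == request.on_behalf_of
        and g.tool == request.tool
        and request.resource.startswith(g.resource_prefix)
        and request.at < g.expires_at
        for g in grants
    )


now = datetime(2026, 5, 1, 12, 0, tzinfo=timezone.utc)
grants = [
    # The coding agent may read one repository for one hour, nothing else.
    Grant("coding-agent", "alice", "repo.read", "repos/payments/", now + timedelta(hours=1)),
]

read_code = ActionRequest("coding-agent", "alice", "repo.read", "repos/payments/api.py", now)
read_secret = ActionRequest("coding-agent", "alice", "secrets.read", "vault/prod/db", now)
late_read = ActionRequest("coding-agent", "alice", "repo.read", "repos/payments/api.py",
                          now + timedelta(hours=2))

print(is_allowed(read_code, grants))    # True
print(is_allowed(read_secret, grants))  # False
print(is_allowed(late_read, grants))    # False
```

The check is deny-by-default: the repository read passes, while the secrets request and the expired request both fail because no grant covers them. The hard part in practice is not this function but deciding who issues, reviews, and revokes the grants.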

Microsoft can provide the machinery, but customers must still do the governance work. They must classify agents, assign owners, define acceptable behaviors, review permissions, manage exceptions, and respond when the agent does something unexpected. The uncomfortable truth is that many organizations still struggle with service accounts, app registrations, and SaaS permissions. Agents add more moving parts.

That is why the “control plane” framing is both compelling and incomplete. A control plane can expose the levers. It cannot decide an organization’s risk appetite. It cannot fix messy data permissions. It cannot make every business unit document why an agent exists. It cannot turn vague enthusiasm for AI into durable operating discipline.

Microsoft Agent 365 may not settle the agent governance market, and its first generally available release will almost certainly expose gaps as customers bring messy real-world estates into the registry. But the direction is clear: the next fight in enterprise AI will not be over who can create the most impressive demo agent, but over who can prove that thousands of them can be owned, constrained, audited, and shut down when necessary. If Microsoft is right, the companies that win with agents will not be the ones that deploy them fastest; they will be the ones that make them boring enough to trust.

Source: Microsoft, “Microsoft Agent 365, now generally available, expands capabilities and integrations” | Microsoft Security Blog

Microsoft Is Turning Agent Sprawl Into a Platform Problem

The important word in Microsoft’s announcement is not “agent.” It is “control plane.” Agent 365 is Microsoft’s attempt to define the enterprise AI agent market before that market hardens into a mess of isolated dashboards, custom scripts, SaaS-specific admin pages, endpoint alerts, and governance theater.

That ambition matters because AI agents do not behave like ordinary applications. A chatbot answers. An automation runs a workflow. An agent, at least in the enterprise pitch, can observe context, invoke tools, access data, coordinate with other systems, and make progress against a goal with less direct human steering. That makes it useful, but it also makes it slippery.

Microsoft’s thesis is blunt: companies are already running agents, whether the CIO has approved them or not. They may be embedded in Microsoft 365 Copilot, built in Copilot Studio, created in Microsoft Foundry, deployed through third-party SaaS platforms, running locally on developer workstations, or tied to cloud services such as Amazon Bedrock and Google’s enterprise AI platforms. The agent is not a single product category anymore. It is becoming a behavior pattern across software.

Agent 365 is Microsoft’s answer to the uncomfortable governance question that follows. If an organization cannot say which agents exist, who owns them, what identities they use, what data they can reach, and what tools they can invoke, then “AI transformation” becomes a euphemism for unmanaged delegation. Microsoft is using general availability to argue that the age of casual experimentation is over.

The company also knows the fear it is selling into. Security teams understand shadow IT. They understand over-permissioned service accounts. They understand OAuth consent sprawl, forgotten automation, unmanaged endpoints, and SaaS apps that quietly become critical infrastructure. Agentic AI collapses all those problems into something faster, more persuasive, and potentially more autonomous.

The New GA Product Is Really a Governance Claim

Agent 365 is now generally available for commercial customers, either as part of Microsoft 365 E7 or as a standalone product priced at $15 per user per month. Microsoft describes the license as covering people who manage or sponsor agents, or who use agents to do work on their behalf. That is an interesting choice, because Microsoft is not pricing the product per agent.

That choice reveals the product’s worldview. Microsoft is treating agents less like discrete software assets and more like extensions of organizational labor. The human remains the billing anchor, even when the software acts behind the scenes with its own credentials and permissions. For procurement teams, that may simplify forecasting. For admins, it raises a practical question: if agents multiply by the hundreds or thousands, how cleanly will Microsoft’s user-based licensing map to real operational complexity?

The general-availability package covers agents working on behalf of users with delegated access, as well as agents operating behind the scenes with their own access. Microsoft says support for agents participating in team workflows with their own access is in public preview. That distinction is not bureaucratic; it is the line between “assistant” and “coworker.”

A delegated agent inherits some of the familiar governance model of the user it represents. An agent with its own identity is different. It needs lifecycle management, permission review, ownership, logging, and potentially incident response as a first-class actor inside the enterprise. Once an agent can work independently, it starts to resemble a service principal with initiative.

That is why Agent 365’s general availability lands as more than another Copilot-era SKU. Microsoft is trying to establish that agents need a corporate system of record. In Microsoft’s preferred future, the agent registry sits beside Entra, Defender, Intune, Purview, Teams, and the Microsoft 365 admin center as part of the same management fabric.

Shadow AI Has Found Its Endpoint

The most Windows-relevant part of the announcement is Microsoft’s move to discover and manage local AI agents on Windows devices through Defender and Intune. Microsoft names OpenClaw first, with GitHub Copilot CLI and Claude Code described as coming next. That list is not incidental. It points directly at developers, power users, and the new class of local coding and task agents that can read files, modify code, call tools, and interact with networked services.

For years, endpoint management has been about hardware posture, patch state, malware, app control, data loss, and identity. Agentic tools add a new category: software that may be installed by a user, operate locally, connect outward to AI services, and touch sensitive repositories or documents in ways that look legitimate until they are not. This is shadow AI in its most concrete form.

Microsoft says customers in its Frontier program can see whether OpenClaw agents are being used in the organization, identify the devices where they are running, and apply Intune policies to block common methods of running OpenClaw. The inventory of local agents is intended to flow through the Agent 365 registry into Defender and Intune, giving security and endpoint teams a shared picture.

That phrase “common methods” should make admins pause. Blocking an agent is rarely as simple as blocking one executable forever. Developers rename binaries. Tools ship through package managers. Extensions invoke runtimes. Scripts wrap CLIs. If the local agent ecosystem grows the way Microsoft expects, detection will become a moving target.

Still, Microsoft’s approach is sensible because the endpoint is where many unsanctioned agents become real. A SaaS agent may live behind an admin portal. A cloud-hosted agent may appear in a platform registry. A local coding agent, by contrast, can emerge from a user’s terminal, touch source code, read local secrets, call MCP servers, and push changes into enterprise systems before central IT knows it exists.

Defender Is Being Asked to Map the Agent Blast Radius

The June 2026 preview features are where Agent 365 starts to sound less like inventory and more like attack-path management. Microsoft says Defender will provide asset context mapping for each agent, including the devices it runs on, the MCP servers configured for it, the identities associated with it, and the cloud resources those identities can reach. That is the right mental model.

Security teams do not only need to know that an agent exists. They need to know what happens if it is tricked, compromised, misconfigured, or over-permissioned. An agent that can read a local repository is one risk. An agent that can read a local repository, use an MCP server, authenticate as a privileged identity, and reach production cloud resources is a very different one.

This is where the agent security conversation stops being futuristic and becomes ordinary incident response. A compromised endpoint already matters. A compromised developer identity already matters. A misconfigured automation token already matters. Agents combine those familiar elements and add a reasoning loop that may accelerate misuse.

Microsoft is promising relationship maps that show where an agent runs, which MCP servers are configured, which identities are associated with it, and which cloud resources those identities can reach. If implemented well, that could help security teams prioritize the agents that actually matter instead of drowning in novelty alerts. If implemented poorly, it could become another graph that looks impressive in a keynote and sits ignored during real investigations.
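One way to picture such a relationship map is as a directed graph in which "blast radius" means everything reachable from an agent. The sketch below is illustrative only: the node names and edges are invented, and nothing here describes Defender's actual data model or API.

```python
from collections import deque

# Hypothetical relationship edges: "A can use or reach B".
edges = {
    "agent:local-coder": ["device:dev-laptop-17", "mcp:deploy-tools", "identity:svc-deploy"],
    "mcp:deploy-tools": ["identity:svc-deploy"],
    "identity:svc-deploy": ["cloud:prod-storage", "cloud:staging-db"],
    "agent:ticket-triage": ["identity:svc-helpdesk"],
    "identity:svc-helpdesk": ["cloud:ticket-archive"],
}


def blast_radius(start: str, graph: dict[str, list[str]]) -> set[str]:
    """Breadth-first search for every node reachable from one agent."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    seen.discard(start)  # the agent itself is not part of its own blast radius
    return seen


reachable = blast_radius("agent:local-coder", edges)
cloud_resources = sorted(n for n in reachable if n.startswith("cloud:"))
print(cloud_resources)  # ['cloud:prod-storage', 'cloud:staging-db']
```

Ranking agents by what their identities can ultimately reach, rather than by how many alerts they generate, is the kind of prioritization such a map would make possible.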

The runtime blocking claim is similarly important. Microsoft says Defender will be able to block coding agents at runtime when a managed agent exhibits malicious behavior patterns, such as attempting to access or exfiltrate sensitive data, and generate alerts with incident context. That is the point where Agent 365 crosses from governance into active control.

It also raises the hard question Microsoft cannot answer with marketing language: how confidently can a security product distinguish a dangerous agent action from an ambitious but legitimate developer task? A coding agent reading many files may be refactoring. A coding agent sending context to a remote endpoint may be using an approved AI service. A coding agent attempting to touch credentials may be either compromised or trying to fix a deployment pipeline. The future of agent security will be full of these gray zones.

Microsoft Wants the Registry Even When the Agent Lives Elsewhere

Agent 365’s multicloud preview is just as strategically important as the Windows endpoint story. Microsoft says admins can connect and sync the Agent 365 registry with Amazon Bedrock and Google Cloud connections, enabling discovery, inventory, and eventually basic lifecycle governance such as starting, stopping, and deleting agents across those platforms.

That is Microsoft speaking directly to enterprise reality. Large customers are not going to build every agent in Copilot Studio or Microsoft Foundry. Developers will experiment where models, APIs, frameworks, and business units take them. Some teams will use AWS. Others will use Google. Others will buy SaaS tools with embedded agents and never think of themselves as “building AI” at all.

The risk for Microsoft is that agent governance becomes fragmented before Microsoft can define the category. If AWS, Google, ServiceNow, Salesforce, Atlassian, GitHub, Anthropic, OpenAI, and every vertical SaaS vendor each own their own slice of agent administration, Microsoft 365 loses the privileged position it has enjoyed as the default workplace control surface. Agent 365 is a bid to prevent that.

The company’s strongest argument is that identity, endpoint, email, documents, Teams, compliance, and security operations already live heavily inside Microsoft estates. If agents touch those systems, Microsoft can argue that agent governance belongs close to them. The weaker part of the argument is that “single control plane” has been promised across many generations of enterprise technology and rarely arrives without caveats.

Even so, registry sync is the right first move. Organizations need a place to ask basic questions before they can enforce sophisticated policy. Which agents exist? Which platform created them? Which model are they based on? Which resources can they access? Who owns them? Are they still needed? In agent governance, boring inventory is not a footnote. It is the foundation.
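Those inventory questions map naturally onto a simple record per agent. The following is a rough sketch under stated assumptions: the `AgentRecord` fields, the sample agents, and the 90-day idle threshold are all hypothetical, not the Agent 365 registry schema.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AgentRecord:
    """Hypothetical inventory entry; field names are illustrative only."""
    name: str
    platform: str                  # which platform created it
    model: str                     # which model it is based on
    owner: Optional[str]           # accountable human, if any
    resources: list[str] = field(default_factory=list)
    last_active: Optional[date] = None


def needs_review(agent: AgentRecord, today: date, max_idle_days: int = 90) -> list[str]:
    """Return the reasons, if any, that an agent should be reviewed."""
    reasons = []
    if not agent.owner:
        reasons.append("no owner")
    if agent.last_active is None or (today - agent.last_active).days > max_idle_days:
        reasons.append("possibly no longer needed")
    return reasons


inventory = [
    AgentRecord("invoice-bot", "Copilot Studio", "unknown", "dana",
                ["sharepoint:finance"], date(2026, 4, 28)),
    AgentRecord("old-scraper", "Amazon Bedrock", "unknown", None,
                ["s3:exports"], date(2025, 11, 2)),
]

today = date(2026, 5, 1)
for agent in inventory:
    print(agent.name, needs_review(agent, today))
```

Even a record this plain surfaces the ownerless, idle agent immediately, which is the argument for getting inventory right before attempting richer policy.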

SaaS Agents Are the Real Governance Stress Test

Microsoft’s ecosystem announcement widens the aperture beyond Microsoft-built agents and cloud-builder platforms. Agent 365 now covers partner agents from companies including Genspark, Zensai, Egnyte, and Zendesk, along with agents built on agent factories such as Kasisto, Kore, and n8n. Microsoft says these agents can be observed, governed, and secured in Agent 365 without integration work by IT or security teams.

That “without integration work” promise is doing a lot of work. If Microsoft can make third-party agents show up in a consistent admin experience, it has a meaningful advantage. IT teams are exhausted by bespoke integrations. Security teams are tired of asking vendors whether logs are available, whether identity is sane, whether policy is enforceable, and whether audit evidence can be produced without a week of screenshots.

But SaaS agents are also where governance gets politically harder. A customer-support agent in Zendesk, a content agent in a productivity platform, a workflow agent in n8n, and a vertical banking agent do not share the same risk model. They touch different data, serve different owners, and may be procured by different departments. Central visibility is useful, but central control can quickly become a fight over who is allowed to slow down business teams.

That is why Microsoft’s partner-services push matters. The announcement names launch partners including Accenture, Bechtle, Capgemini, Insight, KPMG, Protiviti, and Slalom, with services for planning, onboarding, governance, managed operations, and security integration. This is not just product packaging. It is consulting-channel formation.

Microsoft is effectively saying that agent governance is complicated enough to need an ecosystem of advisors. That may be true. It also means customers should expect Agent 365 adoption to look less like flipping on a feature and more like an identity, endpoint, data governance, and operating-model project.

Windows 365 for Agents Moves the Workload Into a Managed Box

Agent 365 is the control plane; Windows 365 for Agents is the execution environment. Microsoft says Windows 365 for Agents is now in public preview in the United States only and provides a new class of Cloud PCs purpose-built for agentic workloads. These Cloud PCs are managed in Intune and designed to let agents interact with applications inside policy-controlled environments.

This is one of the more pragmatic parts of Microsoft’s strategy. If agents are going to operate applications, access files, browse web destinations, and perform tasks that look like user work, then putting them inside managed Windows environments gives enterprises something familiar to hold onto. The Cloud PC becomes a containment boundary, an administrative object, and a policy target.

It also aligns with Microsoft’s larger Windows 365 agenda. For years, Microsoft has sold Cloud PCs as a way to centralize, secure, and simplify user computing. Agentic workloads give that platform a new role. Instead of only streaming a desktop to a human, Windows 365 can become a managed workspace for non-human actors.

There is a certain irony here. The industry spent years trying to move beyond desktop automation, only to rediscover that many business processes still live in applications with user interfaces, local dependencies, browser sessions, and awkward legacy workflows. Agents may be “AI-native” in rhetoric, but many will still need a place to click, read, type, download, upload, and wait.

For admins, the key question will be isolation. If Windows 365 for Agents becomes a standard place to run enterprise agents, organizations will need clear boundaries between agent workloads, human user environments, data classes, and privileged systems. A managed box is better than an unmanaged laptop. It is not magic.

Network Controls Bring the Old Security Stack Back Into the AI Story

Microsoft is also extending Entra network controls to Microsoft Copilot Studio agents and agents running on user endpoint devices, including local agents such as OpenClaw. In plain English, Microsoft wants agent traffic to become inspectable and governable at the network layer. That is a predictable but necessary move.

Agents can browse, retrieve, upload, call APIs, and interact with web services faster than humans. They can also be manipulated by malicious instructions embedded in content, tricked into using unsafe destinations, or induced to overshare sensitive files. The prompt-injection problem is not solved by telling users to be careful when the “user” is an agent processing content at machine speed.

Network controls are therefore a blunt but useful instrument. Organizations can restrict connections to approved destinations, identify unsanctioned AI services, filter risky file movement, and attempt to block malicious prompt-based attacks before they cascade into harmful actions. This is the security industry’s familiar pattern: when the application layer becomes too dynamic, enforce more at identity, endpoint, network, and data boundaries.
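To make the "approved destinations" idea concrete, here is a minimal Python sketch of a destination allowlist check. It is not Entra's API or Microsoft's implementation; the host names, the `APPROVED_HOSTS` and `APPROVED_SUFFIXES` values, and the `is_destination_allowed` function are all hypothetical, and a real deployment would enforce this in the network layer rather than in agent code.

```python
from urllib.parse import urlsplit

# Hypothetical policy data for illustration only; real values would come
# from the organization's own network policy.
APPROVED_HOSTS = {"api.example.com", "files.example.com"}
APPROVED_SUFFIXES = (".corp.example",)


def is_destination_allowed(url: str) -> bool:
    """Return True only for HTTPS URLs whose host is explicitly approved."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    # Deny by default: anything that is not HTTPS or has no host is rejected.
    if parts.scheme != "https" or not host:
        return False
    # Match the full host name, so look-alikes such as
    # "api.example.com.evil.test" do not slip through.
    return host in APPROVED_HOSTS or host.endswith(APPROVED_SUFFIXES)
```

The sketch denies by default and matches whole host names, which is the property that makes an allowlist useful. It also shows the limit discussed next: the check knows where traffic is going, not whether the request makes business sense.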

The danger is overconfidence. Network controls can reduce exposure, but they do not understand business intent on their own. A sanctioned agent can still make a bad decision inside an approved service. An approved destination can still host malicious content. A permitted file transfer can still be the wrong file transfer. Agent 365’s value will depend on how well Microsoft correlates network behavior with identity, endpoint context, agent registry data, and data sensitivity.

That is the reason Microsoft keeps tying Agent 365 to Defender, Intune, Entra, and Purview rather than presenting it as a standalone AI product. Agent security is not one control. It is a fabric of controls that only becomes persuasive when the signals reinforce each other.

The Pricing Tells Enterprises This Is Now a Tier

The $15-per-user standalone price puts Agent 365 in a familiar Microsoft category: important enough to monetize separately, strategic enough to bundle upward. Microsoft 365 E7, launched as the broader Frontier Suite, packages E5, Microsoft 365 Copilot, and Agent 365 into a premium bundle. That is Microsoft’s clearest signal that agent governance is becoming part of the upsell path for AI-first enterprises.

For customers already wrestling with E5, Copilot, Security Copilot capacity, Entra add-ons, Defender components, Purview features, and Intune licensing, the arrival of another SKU may not inspire celebration. Microsoft’s bundling strategy often makes architectural sense and procurement headaches at the same time. The platform becomes more integrated as the invoice becomes harder to explain.

The fairness of the price will depend on adoption density. If an organization has a handful of Copilot Studio agents and a cautious AI program, Agent 365 may feel premature. If it has hundreds of departmental agents, local coding agents, SaaS agents, and cloud-built agents across multiple platforms, a control plane becomes easier to justify. The cost of not knowing what is running may exceed the cost of licensing the people responsible for it.

There is also a subtle organizational effect. By licensing users who manage, sponsor, or benefit from agents, Microsoft nudges companies to assign ownership. That may be useful. One of the worst patterns in enterprise automation is the ownerless workflow: built by someone who left, authenticated with stale credentials, touching systems nobody wants to break. Agent 365’s governance model implicitly says every agent needs a human accountable somewhere.

Still, buyers should watch for scope creep. Agent governance could easily become a dependency for serious Copilot adoption, then a prerequisite for acceptable audit posture, then a practical requirement for using more advanced Microsoft 365 AI features. Microsoft has a long history of turning operational maturity into a premium tier.

The Weak Point Is Not Discovery, It Is Delegation

Microsoft’s announcement is strongest when it talks about visibility. It is more speculative when it implies that visibility will become control. Discovering agents is hard, but enterprises have solved versions of that problem before. Governing what agents are allowed to do is harder because agents sit at the intersection of identity, data, tooling, and intent.

The essential issue is delegation. A human asks an agent to do something. The agent interprets the request, gathers context, invokes tools, and acts. Somewhere along that chain, policy must decide whether the action is allowed. Traditional access control answers whether an identity can reach a resource. Agent governance must ask whether this identity, acting through this agent, for this user or business process, using this tool, in this context, should perform this action now.

That is a much richer policy problem than most organizations are ready to manage. Least privilege is easy to endorse and difficult to operationalize even for human users. For agents, least privilege may need to be task-specific, time-bound, context-aware, and continuously reviewed. A support-ticket triage agent may need broad read access but narrow write access. A coding agent may need repository access but not production secrets. A finance agent may need spreadsheet access but not unrestricted email forwarding.
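The delegation question and the task-specific, time-bound flavor of least privilege can be sketched as a small policy check. This is an illustrative Python model, not anything Microsoft has described for Agent 365: the `Grant` and `Request` types, their fields, and `is_permitted` are assumptions made up for the example.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Grant:
    """Hypothetical delegation: one agent, one principal, one tool, a few actions."""
    agent_id: str
    on_behalf_of: str
    tool: str
    actions: frozenset
    expires_at: datetime  # time-bound: the grant lapses without manual cleanup


@dataclass(frozen=True)
class Request:
    """What the agent is trying to do right now."""
    agent_id: str
    on_behalf_of: str
    tool: str
    action: str
    at: datetime


def is_permitted(request: Request, grants: list) -> bool:
    """Allow only if some unexpired grant matches every dimension of the request."""
    return any(
        g.agent_id == request.agent_id
        and g.on_behalf_of == request.on_behalf_of
        and g.tool == request.tool
        and request.action in g.actions
        and request.at < g.expires_at
        for g in grants
    )
```

A support-ticket triage agent might hold a grant for `{"read", "comment"}` on a ticketing tool that expires after a shift. A `delete` request, a request on behalf of a different user, or any request after expiry would then be denied. The hard part in practice is not this check but deciding what the grants should be.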

Microsoft can provide the machinery, but customers must still do the governance work. They must classify agents, assign owners, define acceptable behaviors, review permissions, manage exceptions, and respond when the agent does something unexpected. The uncomfortable truth is that many organizations still struggle with service accounts, app registrations, and SaaS permissions. Agents add more moving parts.
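Some of that governance work reduces to routine hygiene checks over an agent inventory. The Python sketch below flags agents with no accountable owner or an overdue permission review. The `AgentRecord` fields, the `audit` function, and the 90-day default are hypothetical choices for illustration, not fields or thresholds from the Agent 365 registry.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class AgentRecord:
    """Hypothetical inventory row; a real registry would carry far more detail."""
    name: str
    owner: Optional[str]
    last_permission_review: Optional[date]


def audit(agents, today: date, max_review_age: timedelta = timedelta(days=90)) -> dict:
    """Map each problematic agent name to the list of issues found for it."""
    findings = {}
    for agent in agents:
        issues = []
        if not agent.owner:
            issues.append("no accountable owner")
        if agent.last_permission_review is None:
            issues.append("permissions never reviewed")
        elif today - agent.last_permission_review > max_review_age:
            issues.append("permission review overdue")
        if issues:
            findings[agent.name] = issues
    return findings
```

A report like this does not decide anything by itself. It only makes the ownerless and unreviewed agents visible so that someone can be made responsible for them.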

That is why the “control plane” framing is both compelling and incomplete. A control plane can expose the levers. It cannot decide an organization’s risk appetite. It cannot fix messy data permissions. It cannot make every business unit document why an agent exists. It cannot turn vague enthusiasm for AI into durable operating discipline.

The First Agent 365 Wave Is a Warning Shot for IT

The concrete message for Windows and Microsoft 365 administrators is that agentic AI is becoming an estate-management problem, not a lab curiosity. The announcement gives IT teams a preview of the work that will define the next phase of enterprise AI adoption.

- Agent 365 is now generally available for commercial customers and is being positioned as the central Microsoft control plane for observing, governing, and securing agents.

- Microsoft is extending Agent 365 beyond Copilot by tying it to Defender, Intune, Entra network controls, Windows 365 for Agents, SaaS partners, and multicloud registries.

- Local AI agents on Windows devices are becoming a first-class security concern, starting with OpenClaw discovery and policy controls and expanding toward tools such as GitHub Copilot CLI and Claude Code.

- The June 2026 preview features will matter because blast-radius mapping, MCP server visibility, identity context, and runtime blocking are where agent inventory becomes security operations.

- The standalone $15-per-user price and the Microsoft 365 E7 bundle make clear that Microsoft sees agent governance as a premium enterprise capability, not a free Copilot accessory.

- Customers should treat Agent 365 adoption as an operating-model project involving ownership, least privilege, lifecycle management, data protection, and incident response rather than as a simple admin-center toggle.

Microsoft Agent 365 may not settle the agent governance market, and its first generally available release will almost certainly expose gaps as customers bring messy real-world estates into the registry. But the direction is clear: the next fight in enterprise AI will not be over who can create the most impressive demo agent, but over who can prove that thousands of them can be owned, constrained, audited, and shut down when necessary. If Microsoft is right, the companies that win with agents will not be the ones that deploy them fastest; they will be the ones that make them boring enough to trust.

Source: Microsoft, “Microsoft Agent 365, now generally available, expands capabilities and integrations,” Microsoft Security Blog