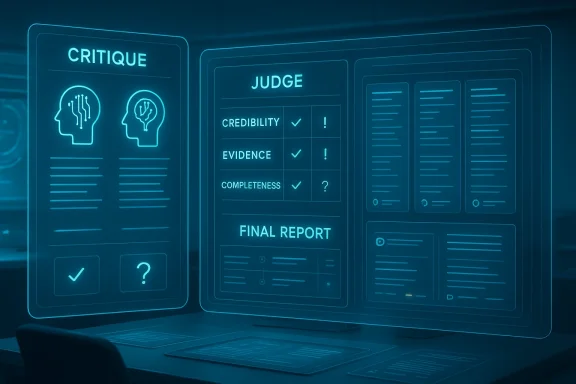

Microsoft is pushing AI research beyond simple answer generation and into something closer to an internal review process, and that is the real significance of Critique and Council. In the Copilot Researcher experience, the company is experimenting with two multi-model workflows: Critique, in which one model drafts an answer and another evaluates it against a rubric, and Council, which compares multiple model outputs side by side. The result is not just a smarter chatbot, but a system designed to question itself before presenting a final report. Microsoft’s own documentation on Researcher emphasizes that the experience is built for deep, multi-step research, with citations and structured reports as core design goals.

Overview

For years, the dominant AI product pattern has been remarkably simple: one model takes a prompt, retrieves some context, writes a response, and hands it back to the user. That approach works well for quick Q&A, but it creates a familiar problem in longer research tasks: the system can sound polished while still missing evidence, nuance, or even basic factual grounding. Microsoft’s new Critique approach attacks that weakness directly by separating generation from evaluation, which is a notable shift in how enterprise AI is being built. Microsoft’s Researcher agent is already positioned as a deeper, multi-step tool than standard Copilot chat, and Critique appears to formalize that depth with a second layer of judgment.

The broader context matters here. Microsoft has been steadily expanding Copilot into a family of tools that do not just answer questions, but assemble and verify information from multiple sources, including the web and enterprise data in Microsoft Graph. At the same time, Microsoft has openly described Copilot as a system that uses a variety of AI models, including OpenAI and Anthropic models, depending on the task. That multi-model posture is important because Critique and Council are not just product features; they are an expression of Microsoft’s strategy to route different stages of work to different models rather than forcing one model to do everything.

This is also part of a larger industry move toward evaluation-heavy AI. A few years ago, the biggest question was whether a model could produce a coherent answer at all. Now the question is whether the model can verify, compare, and defend that answer under scrutiny. Microsoft’s own documentation on Copilot Studio and deep research techniques shows that the company is investing in rubric-based evaluation, LLM judges, and repeatable quality checks as part of the AI stack. In other words, Critique is not an isolated gimmick; it fits a much wider design philosophy.

That shift has real implications for enterprise buyers. A finance team, legal department, consulting group, or policy analyst does not just want a fluent answer; it wants a defensible answer. A system that can surface disagreement, cite sources, and indicate where reasoning is weak is much closer to how human teams actually work. Microsoft seems to understand that the future of enterprise AI is not merely speed, but auditability and accountability.

What Critique Actually Changes

Critique changes the architecture of AI research by splitting one big task into two distinct jobs. The first model acts as the generator, handling planning, retrieval, and drafting. The second acts as the reviewer, checking whether the response is complete, grounded, and properly supported by evidence. That separation is more than an implementation detail; it changes the incentives of the workflow, because the drafting model no longer has to be the same one that judges the final output.

A cleaner division of labor

This division of labor resembles a newsroom editor reading a reporter’s draft before publication. The reporter may have the facts, but the editor checks the logic, structure, and omissions. Microsoft’s rubric-based reviewer performs a similar function, and its focus on credibility, relevance, completeness, and evidence suggests a deliberate attempt to build a more disciplined research workflow. That is especially useful in long-form AI answers, where fluent prose can conceal shallow reasoning.

Critique also reflects a practical reality that AI systems often perform better when they are forced into distinct phases. Planning is not the same as writing, and writing is not the same as verification. By making those phases explicit, Microsoft is trying to reduce the odds that one model’s early mistake cascades all the way to the final answer. It is not a guarantee of correctness, but it is a meaningful improvement over the classic single-pass pipeline.

- Generator model: plans, retrieves, and drafts.

- Reviewer model: checks grounding, completeness, and source quality.

- Rubric-driven evaluation: focuses attention on evidence rather than style.

- Feedback loop: aims to improve structure and reliability before delivery.

- Enterprise relevance: better suited to decisions that need justification.
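The generator and reviewer split described above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not Microsoft’s implementation: `critique_loop`, `Review`, `stub_generator`, and `stub_reviewer` are hypothetical names, and the two stubs stand in for real model calls.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Review:
    passed: bool
    issues: List[str]


def critique_loop(
    query: str,
    generate: Callable[[str, List[str]], str],
    review: Callable[[str, str], Review],
    max_rounds: int = 2,
) -> str:
    """Draft with one model, review with another, and revise on failed reviews."""
    feedback: List[str] = []
    draft = generate(query, feedback)
    for _ in range(max_rounds):
        result = review(query, draft)
        if result.passed:
            break
        # Feed the reviewer's issues back into the next draft.
        feedback = result.issues
        draft = generate(query, feedback)
    # If every round fails, the last revision is returned without a final review.
    return draft


# Hypothetical stand-ins for real model calls.
def stub_generator(query: str, feedback: List[str]) -> str:
    draft = f"Answer to: {query}"
    if "missing citation" in feedback:
        draft += " [source: example.org]"
    return draft


def stub_reviewer(query: str, draft: str) -> Review:
    if "[source:" not in draft:
        return Review(passed=False, issues=["missing citation"])
    return Review(passed=True, issues=[])


final = critique_loop("What changed in Q3?", stub_generator, stub_reviewer)
print(final)
```

Keeping the reviewer as a separate callable is the point of the sketch: the component that judges the draft does not have to be the one that wrote it.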

Why Council Matters Too

If Critique is about improving a single answer, Council is about comparing multiple answers before trusting any of them. In practice, that means the system can run several models in parallel, then use a separate judge to summarize where they agree, where they differ, and what each model contributes. This is a very different philosophy from the traditional “best answer wins” approach, because it exposes uncertainty instead of hiding it.

Comparison as a feature, not a failure

The value of Council lies in the fact that disagreement is often informative. If three models produce nearly identical conclusions, that may be reassuring. If they diverge sharply, that can reveal weak assumptions, missing data, or differences in reasoning style. For research workflows, those differences are often as valuable as the final answer itself, because they help users identify where deeper human judgment is needed.

That is especially useful in high-stakes domains. A legal team may want to know not only the recommended interpretation, but also the alternate interpretation that another model considered plausible. A financial analyst may care less about a single polished summary and more about the range of plausible scenarios. Council is, in effect, a built-in mechanism for showing the shape of disagreement before a decision is made.

The design also helps users avoid over-trusting a single model’s voice. AI systems can be persuasive even when they are wrong, and the human tendency is to read confidence as competence. Council deliberately interrupts that pattern by showing multiple independent outputs, which makes the user part of the judgment process rather than a passive consumer of one synthetic narrative. That is a subtle but important shift.

- Multiple outputs can reveal hidden blind spots.

- Model agreement can increase confidence.

- Model disagreement can highlight uncertainty.

- Judge summaries can compress complexity for decision-makers.

- The feature is especially useful in analytical, not casual, tasks.
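The Council idea of surfacing agreement and disagreement can be sketched roughly as follows. This is an illustration only: the `council` function is hypothetical, each stub model returns a set of short claims rather than a full report, and the judge is reduced to simple set arithmetic where the described system would use a separate model.

```python
from typing import Callable, Dict, List, Set


def council(
    query: str, models: Dict[str, Callable[[str], Set[str]]]
) -> Dict[str, object]:
    """Run every model on the same query and summarize agreement and divergence."""
    if not models:
        raise ValueError("at least one model is required")
    claims = {name: model(query) for name, model in models.items()}
    # Claims made by every model are treated as agreed.
    shared = set.intersection(*claims.values())
    # Everything else is reported per model as divergent.
    divergent: Dict[str, List[str]] = {
        name: sorted(c - shared) for name, c in claims.items()
    }
    return {"agreed": sorted(shared), "divergent": divergent}


# Hypothetical stand-ins for three independent models.
stub_models = {
    "model_a": lambda q: {"revenue grew", "margins fell"},
    "model_b": lambda q: {"revenue grew", "margins flat"},
    "model_c": lambda q: {"revenue grew", "margins fell"},
}

summary = council("Summarize the quarter", stub_models)
print(summary["agreed"])
print(summary["divergent"])
```

In this toy run all three stubs agree that revenue grew, while the margin claim splits two to one, which is the kind of gap a reader would want flagged before acting on any single summary.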

The Rubric Problem

At the heart of Critique is the idea of a rubric, and that is where the technical challenge gets interesting. A rubric can make evaluation more consistent, but it can also flatten judgment if it is too rigid or too narrow. Microsoft says the reviewer checks whether sources are credible and relevant, whether the response fully answers the query, and whether claims are supported by evidence. Those are sensible criteria, but any rubric is only as good as the assumptions built into it.

What good evaluation looks like

Good evaluation in AI research should do more than mark content as “right” or “wrong.” It should assess whether a claim is traceable, whether the answer covers the actual question, and whether the evidence matches the conclusion. That mirrors how human analysts work in disciplined environments, where the quality of the reasoning matters as much as the final statement. Microsoft’s approach is notable because it tries to encode those expectations into the system itself.

But rubrics also create risk. If the reviewer is overly focused on visible citations, for example, it may reward sources that look legitimate without deeply checking whether the sources are truly authoritative. If it overweights completeness, it may penalize concise answers that are actually correct. And if the reviewer inherits the same bias as the generator, the system can end up merely confirming its own mistakes in a more polished form. That is the classic failure mode of self-evaluation.

Microsoft’s broader documentation suggests it is aware of this problem. The company describes evaluation systems in Copilot Studio as relying on quality and safety scoring, including relevance, groundedness, and abstention behavior. That points to a broader internal culture of model assessment rather than blind trust in model output. Still, an evaluation layer is not the same thing as true verification, and users should treat it as a useful filter rather than an oracle.

- Credibility checks can improve trust.

- Relevance checks help avoid off-topic digressions.

- Evidence checks reduce hallucination risk.

- Overly strict rubrics can suppress nuance.

- A judge model can still inherit bias from the system it reviews.
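The completeness risk can be made concrete with a toy weighted rubric. The four criteria come from the description above; the weights, the per-criterion scores, and the `rubric_score` helper are all hypothetical and chosen only to show how a skewed weighting can flip a ranking.

```python
from typing import Dict


def rubric_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-criterion scores, each assumed to lie in [0, 1]."""
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Hypothetical scores: a short, well-supported answer and a long, weakly supported one.
concise_correct = {"credibility": 0.9, "relevance": 0.9, "completeness": 0.5, "evidence": 0.9}
long_shaky = {"credibility": 0.6, "relevance": 0.7, "completeness": 1.0, "evidence": 0.5}

# Hypothetical weightings: one balanced, one that overweights completeness.
balanced = {"credibility": 1.0, "relevance": 1.0, "completeness": 1.0, "evidence": 1.0}
completeness_heavy = {"credibility": 1.0, "relevance": 1.0, "completeness": 5.0, "evidence": 1.0}

for label, weights in [("balanced", balanced), ("completeness-heavy", completeness_heavy)]:
    a = rubric_score(concise_correct, weights)
    b = rubric_score(long_shaky, weights)
    winner = "concise" if a > b else "long"
    print(f"{label}: concise={a:.2f} long={b:.2f} winner={winner}")
```

With equal weights the concise answer scores higher; once completeness dominates, the longer and less well-supported answer wins, even though nothing about either answer changed.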

What the Benchmark Claims Mean

Microsoft says it tested Critique on a benchmark called DRACO, which evaluates systems across 100 complex research tasks. According to the company, the Critique-based system showed a seven-point improvement over its single-model setup and a 13.88% advantage over other systems referenced in the benchmark, especially in depth and breadth of analysis. Those are eye-catching numbers, but they should be treated carefully because they are company-reported results.

Benchmark wins are not the same as product truth

Benchmark performance matters, but it can also mislead. A system that wins on a tightly defined test may behave very differently once real users ask messy, ambiguous, or incomplete questions. Research tasks in the wild often involve contradictory sources, incomplete context, and shifting user intent, none of which are easy to capture in a controlled benchmark. That is why independent validation is so important.

The phrase “depth and breadth of analysis” sounds impressive, but it also needs unpacking. Depth usually means the system can handle nuance, evidence, and argumentation. Breadth can mean it covers more relevant angles, but it can also mean it produces longer output without necessarily improving the underlying reasoning. Users should therefore be cautious about equating longer or more elaborate reports with better analysis. More words are not the same as more truth.

There is also the issue of benchmark selection. If a benchmark is designed to reward multi-step research and comparative reasoning, then multi-model systems will naturally look stronger there than on simpler prompt-response tasks. That does not invalidate the result, but it does mean the result reflects a particular product ambition. Microsoft is optimizing for structured research, not for every possible AI use case.

- Benchmark gains should be viewed as directional, not final.

- Company-reported results need external validation.

- Real-world tasks are messier than benchmark tasks.

- Better analysis can still be hard to distinguish from longer analysis.

- The benchmark appears aligned with Microsoft’s research-oriented product strategy.

Multi-Model AI as Microsoft’s Broader Strategy

Critique and Council make much more sense when viewed alongside Microsoft’s broader multi-model strategy. Microsoft’s own documentation states that Microsoft 365 Copilot uses a variety of models, including OpenAI and Anthropic models, to match the right model to the right task. That is a clear sign that the company sees AI less as a single model platform and more as a system of specialized model choices.

Why one model is no longer enough

Different models excel at different things. Some are better at speed, some at reasoning, some at summarization, and some at following instructions under constrained contexts. Once a product must do planning, retrieval, synthesis, comparison, and judgment, the argument for a single monolithic model becomes weaker. Microsoft’s approach suggests the future of AI products may look more like orchestration than like one giant brain.

That orchestration mindset is already visible in Microsoft’s documentation for deep research and Researcher. The company explicitly frames Researcher as a deep, multi-step tool that delivers source-cited reports, and it notes that model selection can be controlled in some Researcher contexts. That means Microsoft is not just swapping models behind the scenes; it is making the model layer a meaningful product dimension.

For rivals, this is a competitive challenge. If Microsoft can make multi-model research feel seamless, then users may stop thinking in terms of “which chatbot is smartest” and start thinking in terms of “which workflow is most trustworthy.” That is a harder market to win with a simple chat interface. It also raises the bar for competitors that still center their products around a single assistant experience.

- Model diversity can improve specialization.

- Orchestration may matter more than raw model size.

- Product differentiation may shift from chat quality to workflow quality.

- Enterprise buyers may value robustness over novelty.

- Competitors may need to add similar comparison and evaluation layers.
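Orchestration, in the sense used above, amounts to routing each stage of the work to whichever model is assigned to it. The sketch below is a simplified illustration: the stage names, the `run_pipeline` helper, and the three stub functions are hypothetical and do not reflect how Microsoft wires models together.

```python
from typing import Callable, Dict, List

Stage = Callable[[str], str]


def run_pipeline(query: str, stages: List[str], routes: Dict[str, Stage]) -> str:
    """Pass the working text through each stage, using the model routed to that stage."""
    text = query
    for stage in stages:
        text = routes[stage](text)
    return text


# Hypothetical stand-ins: each stage could be served by a different model.
routes: Dict[str, Stage] = {
    "plan": lambda text: f"plan({text})",
    "draft": lambda text: f"draft({text})",
    "review": lambda text: f"review({text})",
}

result = run_pipeline("compare vendors", ["plan", "draft", "review"], routes)
print(result)
```

Because the routing table is just data, swapping the model behind one stage does not require touching the others, which is one reason an orchestration layer can matter more than any single model.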

Enterprise Impact

For enterprise users, Critique and Council could be genuinely valuable. Businesses often operate in environments where the cost of a wrong answer is far higher than the cost of a slow answer. A system that can compare multiple models, surface disagreements, and show evidence-based evaluation is much more aligned with that reality than a fast but opaque assistant.

Why auditors and analysts will care

In compliance-heavy environments, explainability is not a luxury. Analysts need to know where claims came from, managers need to know what assumptions were made, and legal or finance teams need to know what is still uncertain. Researcher’s emphasis on citations and source-grounded reports already points in this direction, and Critique adds another layer by asking whether the answer is actually well supported before it reaches the user.

That does not mean the system replaces expert review. Quite the opposite: it may make expert review more productive by surfacing the cases that deserve attention. If a judge model flags a weak claim or model disagreement, an analyst can focus time where it matters most. That is a classic enterprise efficiency win, and one that could justify the added compute costs.

It also fits Microsoft’s long-standing enterprise positioning. The company consistently frames Copilot as a tool that respects permissions, policies, and compliance boundaries inside Microsoft 365. That is important because enterprise adoption is not just about intelligence; it is about fitting into established governance models. A multi-model research workflow is easier to sell when it lives inside a familiar security and data-control envelope.

- Better support for research-heavy roles.

- More defensible outputs for compliance reviews.

- Faster triage of weak or conflicting claims.

- Potentially better collaboration across teams.

- Stronger fit for regulated industries.

Consumer Impact

For consumers, the story is more nuanced. Most casual users do not want to see multiple model reports or a judge summary when they are asking about a travel plan, a quick explanation, or a simple task. For them, the value of AI is still speed and convenience. That means multi-model comparison is likely to feel most useful in premium, research-heavy contexts rather than everyday chat.

The usability trade-off

A system like Council could be overwhelming if it is exposed too aggressively. Too many parallel answers can create confusion, especially for users who simply want a direct recommendation. Microsoft’s challenge is to keep the experience accessible while still making uncertainty visible. That is a difficult UX balancing act, and not every user will want the same depth.

Still, there is consumer value in transparency. People increasingly know that AI can be wrong, but they do not always know how wrong, or why. A multi-model view can help users develop better instincts about confidence, trade-offs, and source quality. Over time, that could make consumers less susceptible to overly polished but unreliable outputs.

Microsoft may also be laying groundwork for a broader normalization of AI comparison tools. If users get used to seeing competing model outputs in one Microsoft experience, they may come to expect that feature elsewhere. That could push the whole market toward more interpretable AI rather than more assertive AI, which would be a healthy shift.

- Casual users may prefer simplified defaults.

- Power users may welcome deeper comparison.

- Transparency can improve AI literacy.

- Too much output may reduce usability.

- Better explanations may improve trust over time.

Strengths and Opportunities

Microsoft’s biggest strength here is that it is attacking a real, persistent weakness in AI systems: the gap between fluent output and reliable output. By building a separate critique layer and a comparison layer, the company is acknowledging that the quality of an answer depends on process, not just prose. That is a promising direction for the next generation of AI research tools. Microsoft’s own Researcher and deep research materials show a consistent emphasis on citations, structure, and evaluation, which suggests the company is building toward a coherent product philosophy.

- Better grounding through evidence checks.

- Improved transparency through comparison and judge summaries.

- Stronger enterprise fit for audit-sensitive workflows.

- Multi-model flexibility that reduces dependence on a single system.

- More disciplined research output for long-form tasks.

- Potential benchmark advantages in depth-oriented evaluations.

- A clearer path to trustworthy AI in regulated environments.

Risks and Concerns

The biggest risk is that the reviewer model may give users a false sense of security. A system that critiques its own output can feel more authoritative, but it is still only as strong as the models beneath it. If both the generator and reviewer share similar blind spots, the process may simply produce a more polished error rather than a better one. That is why company-reported benchmark gains should be treated as encouraging, not conclusive.

- False confidence if review is mistaken for verification.

- Higher latency from running multiple models.

- Increased compute cost that may limit scale.

- Judge bias if the evaluator inherits model weaknesses.

- UX complexity if comparison becomes too visible.

- Benchmark overfitting if results do not generalize.

- Operational drift as models and sources change over time.

A final concern is that comparing multiple AI outputs may not always produce clearer answers. Sometimes the models will disagree in ways that reflect style rather than substance, which can waste time instead of saving it. The art will be in surfacing meaningful disagreement while suppressing noise, and that will require careful tuning over time. That is easier said than done.

What to Watch Next

The most important thing to watch is whether Microsoft can prove that these ideas work beyond controlled benchmarks. If Critique and Council consistently improve real customer workflows, they could become a template for how research-oriented AI products are designed. If they only look good in demos and limited tests, the market will treat them as an interesting experiment rather than a category shift.

Another key question is how Microsoft balances visibility with simplicity. A deep research tool should expose uncertainty, but not drown users in it. The best version of this product will probably be one that makes comparison and critique available when needed, while keeping the default experience readable and fast. That balance will likely determine adoption more than any single benchmark score.

Finally, the competitive response will matter. If other major AI platforms add similar multi-model comparison and internal critique systems, the market may quickly normalize this pattern. If they do not, Microsoft could establish a meaningful lead in enterprise research workflows. Either way, the broader direction is clear: AI products are being pushed to become less like answer engines and more like reasoning environments.

- Independent validation of DRACO-style results.

- Enterprise feedback on latency and usefulness.

- UI choices that keep comparison understandable.

- Expansion of model choices inside Researcher.

- Competitive features from rival platforms.

Source: YourStory.com https://yourstory.com/ai-story/microsoft-critique-ai-multi-model-deep-research-explained/