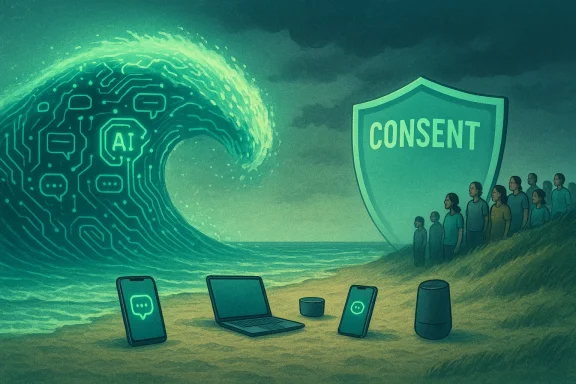

A large, noisy wave of AI announcements has crashed into the consumer device market — but a surprising shoreline remains untouched: more than a third of U.S. adults say they don’t want AI on their devices at all. That gap between vendor enthusiasm and buyer appetite isn’t just a footnote; it poses a strategic and ethical problem for device makers, platform owners, carriers, and the software ecosystem that’s betting on AI as a new revenue engine.

Background

The consumer AI story since late 2022 has been one of rapid product launches and aggressive positioning. From branded assistants and “AI modes” baked into flagship phones to enterprise-first Copilot add-ons, vendors have moved quickly to embed generative models into everything from cameras and keyboards to productivity suites and browsers. The result: near-universal awareness of AI features, but mixed, and often lukewarm, levels of consumer interest.

A recent Connected Intelligence study from Circana captured this tension bluntly: while awareness of device AI is high, roughly 35% of U.S. adults say they are not interested in having AI built into their devices. Reasons cluster around privacy, cost, perceived usefulness, and a simple “I don’t want it” stance. Younger adults are far more receptive than older ones, and voice control remains one of the most commonly used forms of AI today.

This article breaks down the numbers, explains what consumers are actually saying, evaluates the risks for vendors and users, and lays out practical steps companies should take if they want to close the gap between AI hype and consumer demand.

What the numbers actually say

The headline stat is simple: about one in three adults who know about on-device AI say they don’t want it. But the underlying slices matter.

- Awareness: Very high. The vast majority of adults know that AI features are available on phones, laptops, and other devices.

- Opposition (no interest): ~35% of adults.

- Top reasons among those opposed:

  - Privacy concerns (a clear majority among detractors cite this).

  - Don’t want to pay more for AI-enabled features.

  - Device already does what I need; many people see AI as redundant.

  - Perceived complexity: a minority say AI sounds complicated.

- Generational split: Interest peaks among 18–24-year-olds (strong majority), and declines with age.

- Use case ranking: Voice control and voice assistants continue to top the list of available and widely adopted AI capabilities.

Why so many people opt out: five core drivers

1) Privacy and data control are front and center

Privacy isn’t a catch-all complaint; it’s the primary barrier cited by those who reject device AI. People worry about audio or text inputs being recorded, model queries being logged, and personal data being used to train models they never consented to. These concerns are amplified by high-profile product missteps and investigative reporting that show data flow and telemetry are often opaque.

- For many users, the question isn’t “Can AI improve my life?” but “Who owns the record of my interactions, and how will it be used later?”

- Device vendors and cloud providers frequently default to broad data-usage terms that confuse or alarm consumers.

2) Price sensitivity and subscription fatigue

The era of every-feature-for-a-fee is colliding with consumer resistance. Many users explicitly refuse to pay extra for AI features, and there’s measurable reluctance to add yet another subscription to a household budget already crowded with streaming, cloud storage, and app services.

- Even when vendors include “AI” as part of premium tiers, consumers may vote with their wallets and stick to basic functionality.

- Attempts to monetize AI through separate per-user Copilot fees or subscription gates risk backlash if the perceived value isn’t immediate and clear.

3) Perceived utility: many users don’t see a clear need

A substantial share of those who say “I don’t want AI” are not anti-technology; they simply think their device already does what they need. For routine tasks such as calls, photos, banking, and maps, today’s phones already deliver, and users don’t perceive AI as a step-change in practical utility.

- This is a classic product-market fit problem: AI is being pitched as transformational, but for many consumers it’s marginal or gimmicky.

- The onus is on product teams to demonstrate everyday value, not just impressive demos.

4) Complexity and confidence deficits

A smaller but meaningful segment perceives AI as complicated or brittle. Even when features exist, setup friction and unclear interfaces deter adoption.

- When features require new mental models (prompts, agent flows, or prompt-tuning), many users won’t climb the learning curve unless the payoff is obvious.

- Poor UX — buried toggles, ambiguous permission dialogs, or inconsistent behavior — reinforces the perception that AI is “hard.”

5) Environmental and ethical concerns (less frequently cited, but real)

Interestingly, in some polls environmental impact didn’t top the list of reasons for refusal. That doesn’t mean sustainability and ethical sourcing aren’t relevant; rather, they rank behind immediate personal concerns like privacy and cost. Still, long-term adoption will be affected by concerns about compute energy, model sourcing, and fairness.

A generational and behavioral map: who wants AI and why

- Younger users (18–24): Most receptive. They’re more comfortable experimenting with new features and tolerating changes to workflows. They also tend to be heavier users of social, creative, and conversational AI tools.

- Middle-aged users (25–54): Mixed — interested when the feature demonstrates clear productivity or entertainment value.

- Older adults (55+): More likely to be skeptical, especially when privacy and complexity concerns are present.

The vendor perspective: why companies keep pushing AI anyway

From a device-maker and platform-owner standpoint, adding AI to products is often logical and defensible.

- Differentiation: In a commoditized hardware market, AI features offer a way to stand out in marketing and product briefs.

- New revenue streams: AI can be monetized via premium tiers, enterprise bundling, or per-seat Copilot charges.

- Ecosystem lock-in: AI features that connect docs, mail, calendar, and search increase switching costs and deepen platform stickiness.

- Competitive pressure: When major rivals advertise “AI-powered” features, others feel compelled to respond lest they appear behind.

Business risks: how a misstep can cost more than it makes

- Backlash and trust erosion: Forcing AI on users via bundled features or opaque defaults can trigger consumer anger and damage long-term brand trust.

- Regulatory exposure: Poorly managed data flows and insufficient consent risk violating privacy laws or inviting investigations.

- Monetization failure: Charging for AI before utility is proven leads to low conversion and churn. If only a tiny fraction of users pay for an AI add-on, the pricing strategy fails.

- Security and safety liabilities: Embedded AI that produces wrong advice, biased outputs, or reveals sensitive information exposes firms to legal and reputational harms.

- Environmental critique: Heavy compute demands without sustainability commitments may mobilize public criticism and activist scrutiny.

Design and policy fixes that actually move the needle

If vendors want to close the adoption gap, they must address the concrete reasons people opt out. Below are practical, prioritized actions.

Clearer privacy-first defaults

- Make explicit, granular choices available at setup: local-only processing, on-device models, opt-in telemetry, and data retention windows.

- Use plain-language prompts that explain what data is used, how it’s stored, and whether it leaves the device.

- Offer privacy-safe modes that allow functionality without long-term logging or cloud uploads.
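
As a rough illustration of the bullets above, the sketch below models privacy-first defaults as a small settings object whose safest values are the defaults, plus a helper that turns each setting into a plain-language sentence. The class name `AIPrivacySettings`, its fields, and the `describe` helper are all hypothetical and do not correspond to any vendor's actual API; this is one possible shape under those assumptions, not a reference design.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AIPrivacySettings:
    """Hypothetical settings object; every field name here is illustrative."""

    local_only_processing: bool = True   # inference stays on the device by default
    telemetry_opt_in: bool = False       # no telemetry until the user opts in
    cloud_upload_allowed: bool = False   # inputs never leave the device by default
    retention_days: int = 0              # 0 means interactions are not logged

    def __post_init__(self) -> None:
        # Reject combinations that would contradict what the user was told.
        if self.retention_days < 0:
            raise ValueError("retention_days must be zero or positive")
        if self.local_only_processing and self.cloud_upload_allowed:
            raise ValueError("local-only processing cannot allow cloud uploads")


def describe(settings: AIPrivacySettings) -> list[str]:
    """Return one plain-language sentence per setting for a setup screen."""
    return [
        "AI requests are processed on this device only."
        if settings.local_only_processing
        else "AI requests may be processed on remote servers.",
        "Usage statistics are shared with the vendor."
        if settings.telemetry_opt_in
        else "No usage statistics are shared.",
        "Your inputs may be uploaded to the cloud."
        if settings.cloud_upload_allowed
        else "Your inputs never leave this device.",
        f"Interactions are kept for {settings.retention_days} days."
        if settings.retention_days > 0
        else "Interactions are not stored.",
    ]


# A user who explicitly opts in to telemetry with a 30-day retention window.
opted_in = replace(AIPrivacySettings(), telemetry_opt_in=True, retention_days=30)
for sentence in describe(opted_in):
    print(sentence)
```

The design choice worth noting is that anything less private than the default requires an explicit change by the user, and contradictory combinations fail loudly instead of being silently accepted.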

Value-first feature rollouts

- Ship AI features that solve everyday friction (smart replies, accessible search, battery-preserving optimization) rather than flashy generative demos.

- Test feature impact with A/B experiments focused on measurable productivity gains, not just engagement metrics.

- Provide free trial periods for premium AI features so users can experience concrete value before paying.
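
One way to evaluate the A/B experiments mentioned above is a two-proportion z-test on a task-level outcome, such as the share of users who complete a task, instead of raw engagement. The sketch below is a minimal standard-library version; the function name and the sample counts are made up for illustration and are not figures from the Circana study.

```python
import math


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Compare completion rates of control (A) and AI-assisted (B) groups.

    Returns (z, two_sided_p_value) using the pooled-variance z-test.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one user")
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / std_err
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: users who finished a task within a time limit.
z, p = two_proportion_z_test(success_a=400, n_a=1000, success_b=460, n_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value here only suggests the difference in completion rates is unlikely to be noise; whether the gain is large enough to matter to users is a separate product judgment.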

Transparent monetization

- Publish clear lists of what’s included in base tiers vs. AI-paid tiers.

- Avoid surprise fee increases at renewal; give customers the option to refuse AI-upgrade paths.

- Bundle AI with value — security, compliance, or storage — rather than selling it as a standalone tie-in that feels extractive.

UX that lowers cognitive cost

- Reduce friction with one-click examples, canned prompts, and templates that demonstrate value immediately.

- Provide undo, explainability, and provenance for generated outputs (e.g., “this summary used these emails”).

- Make onboarding contextual: teach by doing, not long manuals.

Strong security and safety guardrails

- Implement prompt-sanitization and rate-limiting to reduce leakage.

- Provide opt-in traceability for enterprise customers requiring audit logs.

- Employ human-in-the-loop for high-stakes outputs and propagate disclaimers where accuracy matters.
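
The first guardrail above can be sketched with two small pieces: a sanitizer that redacts obvious personal identifiers before a prompt is logged or sent anywhere, and a token-bucket rate limiter that caps how often a client can query the model. Both are simplified assumptions for illustration: the regular expressions catch only common email and phone formats, and the names `sanitize_prompt` and `TokenBucket` are hypothetical, not part of any real product.

```python
import re
import time

# Deliberately simple patterns; real deployments need broader PII detection.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def sanitize_prompt(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


class TokenBucket:
    """Allow up to `capacity` requests in a burst, refilled at a steady rate."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock              # injectable so tests can control time
        self._tokens = float(capacity)   # start with a full bucket
        self._last = clock()

    def allow(self) -> bool:
        """Return True and spend a token if a request may proceed right now."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Add tokens for the time that passed, never exceeding capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False


bucket = TokenBucket(capacity=2, refill_per_second=1.0)
prompt = "Mail bob@example.com or call +1 555-123-4567 today"
if bucket.allow():
    print(sanitize_prompt(prompt))
```

Redaction of this kind reduces what can leak through logs or training data, while the rate limit bounds how quickly any single client can probe the model; neither is sufficient on its own.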

Regulatory and ethical context: what companies must watch

Regulators are moving faster than many expected. Laws and frameworks vary by region, but the pattern is clear: transparency, risk assessment, and accountability are becoming mandatory.

- Disclosure obligations: Some jurisdictions require labeling of synthetic content or AI-generated media.

- Risk-based restrictions: High-risk uses (hiring, credit scoring, healthcare) may face strict compliance obligations and auditing.

- Data governance: Rules around consent, cross-border data flows, and training-set provenance are gaining traction.

The product playbook: a concise roadmap for device makers

- Prioritize two concrete, high-value AI features that solve daily tasks.

- Ship opt-in privacy defaults and a visible, understandable permissions dashboard.

- Offer a free or low-cost starter tier that demonstrates AI value before asking for a premium commitment.

- Provide robust explainability tools: show why a suggestion was made and what data fed it.

- Monitor and measure: track adoption, retention, complaints, and privacy opt-outs to iterate quickly.

- Audit all AI telemetry and categorize data flows (local, telemetry, cloud).

- Design privacy-first defaults and test them with representative users.

- Prototype two high-impact features and measure time-to-value in real-world usage.

- Price responsibly: trial → low-cost subscription → premium tier with added security or enterprise features.

- Publish transparency materials and invite independent audits.

The green and ethical angle: why environmental costs can’t be ignored

While environmental impact may not top consumer concerns today, it will increasingly shape brand reputation and regulatory scrutiny. Training and serving large models consumes electricity and generates carbon emissions; device makers should:

- Invest in efficient on-device models when feasible to reduce cloud inference demand.

- Purchase renewable energy or carbon offsets tied to AI workloads.

- Publish emissions estimates tied to heavy AI features and commit to reductions year-over-year.

Where vendors trip up: common mistakes to avoid

- Hiding AI in opaque defaults and hoping consumers won’t notice.

- Charging premium prices for features that lack a clear, repeatable value proposition.

- Using fuzzy marketing language that promises too much and underdelivers, creating disappointment and churn.

- Ignoring accessibility: voice-first features must handle diverse accents, languages, and disabilities.

- Treating AI as a checkbox rather than an ongoing investment in safety, UX, and explainability.

Looking ahead: will the AI wave crest or become infrastructure?

There are two plausible futures.

- In one, AI becomes background infrastructure, just like GPS or email, quietly improving functionality while running under the hood, mostly invisible to users. Adoption is gradual, with privacy-safe defaults and incremental monetization.

- In the other, AI remains a set of bold, showy features monetized aggressively. That path risks consumer pushback, regulatory heat, and a slow-burn backlash if benefits don’t match promises.

Conclusion: build with consent, not momentum

The headline that “many users don’t want AI on their devices” should be a wake-up call, not a speed bump. Device vendors and platform companies still have an opportunity to make AI genuinely useful: by addressing privacy concerns, proving tangible value, simplifying interfaces, and pricing responsibly.

If companies treat AI as a partnership with users rather than a feature to be forced into product bundles, adoption will follow. If they double down on obfuscation, surprise billing, and raw marketing hype, the outcome will be lower trust and slower adoption, and that’s a costly mistake in an era where consumer sentiment spreads fast.

AI’s future on consumer devices hinges on a simple principle: consent + clarity + utility. Deliver those three and the technology will move from “nice-to-have” to indispensable — but only if users believe they’re in control and getting real value for their money.

Source: AOL.com Many Users Don't Want AI On Their Devices For One Simple Reason