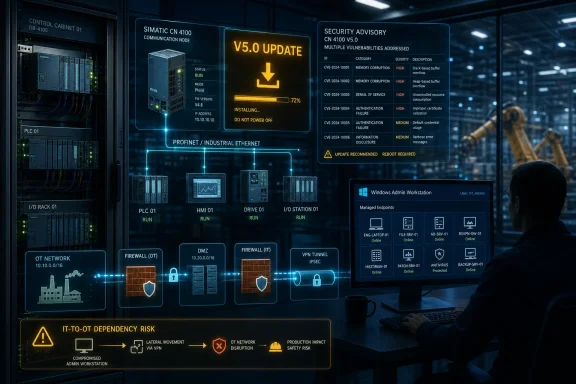

Siemens and CISA warned on May 12 and May 14, 2026, that SIMATIC CN 4100 communication nodes running versions before V5.0 contain multiple vulnerabilities, with Siemens releasing V5.0 and urging industrial operators worldwide to update affected deployments in critical manufacturing environments. The advisory is not a tidy single-bug story; it is a supply-chain maintenance bill arriving inside an operational technology product. The uncomfortable lesson is that modern industrial equipment now inherits the same sprawling dependency risk as servers, browsers, and cloud platforms, but with far less tolerance for downtime. For WindowsForum readers who live between endpoint patching, network segmentation, and production reality, this is exactly the sort of alert that separates paper security from plant-floor resilience.

Siemens Ships a Fix, but the Real Story Is the Stack Beneath the Box

SIMATIC CN 4100 is not a household name, even among many IT administrators, but that is precisely why the advisory deserves attention. It sits in the world of industrial communication, where devices often function as connective tissue rather than headline assets. When that connective tissue carries critical vulnerabilities, the blast radius can be hard to explain to executives and harder to schedule around production windows.

The affected range is simple enough: all SIMATIC CN 4100 versions before V5.0 are listed as affected. Siemens says it has released a new version and recommends updating to the latest release. CISA’s republication frames the issue for critical infrastructure defenders, with critical manufacturing listed as the relevant sector and worldwide deployment noted.

The severity number is what will get dashboards blinking: CVSS v3.1 at 9.6, with a CVSS v4.0 score reported lower but still serious. Scores alone can be misleading in industrial environments, but a 9.6 sits in the v3.1 Critical band (9.0 to 10.0), and that is not noise. It is a signal that the update belongs in a formal risk conversation, not a backlogged maintenance spreadsheet.
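For readers who want to see where 9.6 lands, here is a minimal sketch of the qualitative severity bands defined in the CVSS v3.1 specification. The function name is our own, and the mapping applies to v3.1 base scores only; it says nothing about how the advisory's v4.0 score was derived.

```python
def cvss_v31_rating(score: float) -> str:
    """Map a CVSS v3.1 base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS scores range from 0.0 to 10.0, got {score}")
    if score == 0.0:
        return "None"
    if score <= 3.9:
        return "Low"
    if score <= 6.9:
        return "Medium"
    if score <= 8.9:
        return "High"
    return "Critical"


if __name__ == "__main__":
    # The advisory's reported v3.1 score.
    print(cvss_v31_rating(9.6))
```

The band is a label, not a deployment decision. It tells a change board how the score is classified, not how reachable a given device is.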

What makes this advisory unusual is not merely that there are many vulnerabilities. It is the breadth of vulnerability classes named: memory corruption, resource exhaustion, race conditions, path traversal, access control failures, cross-site scripting, missing authentication, and cryptographic or timing-related weaknesses. That reads less like a single defective component and more like a reminder that an industrial appliance is often a bundle of operating systems, libraries, runtimes, services, and web surfaces.

The CVE Flood Is Becoming an Industrial Maintenance Pattern

Industrial security advisories increasingly resemble enterprise software advisories because the products increasingly resemble enterprise software systems. A communication node may look like hardware in a cabinet, but underneath it are familiar ingredients: Java, OpenSSL, web interfaces, protocol parsers, logging paths, update mechanisms, and service daemons. The industrial badge does not exempt it from the dependency treadmill.

That matters because many OT teams still operate with a mental model shaped by equipment lifecycles rather than software lifecycles. A PLC, gateway, or communication node may be expected to run for years with conservative change control. But the third-party components inside it may now need attention on a cadence that looks closer to server fleet management than traditional plant engineering.

The SIMATIC CN 4100 advisory makes that tension visible. Several listed issues appear tied to third-party software components rather than a single Siemens-authored function. That does not reduce the risk to operators; attackers do not care whether a bug originated in a vendor’s code or in an embedded library. But it changes how defenders should read the alert.

A vulnerability list this wide is not a moral failure by one manufacturer. It is an architectural fact of modern infrastructure. The winning organizations will be the ones that treat OT asset inventories, firmware baselines, dependency exposure, and maintenance windows as living operational data rather than compliance artifacts created once a year.

Availability Is the First Casualty, but Not the Only One

CISA’s summary says the vulnerabilities could compromise availability, integrity, and confidentiality. In IT security writing, that triad can sound boilerplate. In industrial environments, it is anything but boilerplate, because availability often carries immediate business and safety implications.

A denial-of-service condition against a communications node may not look as cinematic as remote code execution, but it can still be operationally serious. If a device that brokers communication between control components becomes unstable, slow, or unavailable, the plant may lose visibility, coordination, or reliable command paths. Even when safety systems remain separate and intact, production disruptions can cascade quickly.

Integrity risk is subtler but arguably more unnerving. A compromised communication path can undermine trust in the data moving across a system. Operators may see delayed, malformed, or manipulated information; automation layers may receive unexpected input; monitoring systems may generate either false alarms or dangerous silence.

Confidentiality is often treated as the least urgent of the three in OT, but that is an outdated view. Configuration data, network layout, equipment identity, version information, and operational rhythms can all be valuable to an attacker preparing a more targeted intrusion. In industrial attacks, information disclosure is frequently not the finale; it is reconnaissance.

The Advisory’s Long Vulnerability Menu Is a Warning About Attack Surface

The vulnerability classes named in the advisory are almost comically broad, but that breadth should not cause defenders to tune out. NULL pointer dereferences and reachable assertions can crash services. Use-after-free flaws, out-of-bounds writes, integer overflows, stack-based buffer overflows, and improper buffer restrictions point toward the possibility of memory corruption. Resource exhaustion, uncontrolled recursion, inefficient algorithmic complexity, and infinite loops point toward denial-of-service conditions.

The web-facing and management-plane issues matter just as much. Cross-site scripting, missing authentication for critical functions, improper access control, path traversal, and improper input validation are the kinds of weaknesses that become dangerous when administrative interfaces are reachable from the wrong network segment. They are also the kinds of bugs that become easier to exploit when operational shortcuts place engineering workstations, remote access gateways, and industrial devices too close together.

Concurrency problems add another layer. Race conditions, missing synchronization, improper locking, deadlocks, and signal handler race conditions are notoriously difficult to reason about in live systems. They can produce intermittent failures that are hard to reproduce in a lab and tempting to dismiss as “network weirdness” or “one-off device behavior.”

That is why a patch-only reading is insufficient. Updating to V5.0 is the immediate mitigation, but the advisory is also a map of where architecture matters. Segmentation, authenticated management access, logging, backup configurations, and restricted reachability are not decorative controls; they are what prevent a vulnerability menu from becoming an incident menu.

CISA’s Republication Is a Visibility Move, Not a New Discovery

CISA’s notice is a republication of Siemens ProductCERT’s advisory, not an independent technical teardown. That distinction matters. CISA is amplifying vendor information through its industrial control systems advisory channel, increasing visibility for U.S. and global defenders who track ICS alerts through government feeds.

This is useful, but it also creates a trap for readers. A CISA page can feel like the start of a new vulnerability event, when in this case the vendor advisory was the primary source and the government publication followed. The relevant chronology is that Siemens published the advisory on May 12, 2026, and CISA republished it on May 14, 2026.

For asset owners, the two-day gap is less important than the workflow it implies. If an organization waits only for government republication, it may be behind the vendor’s own disclosure cadence. Conversely, if it tracks only vendor portals, it may miss the broader risk framing CISA provides for sector-specific defenders.

The mature approach is to monitor both. ProductCERT advisories tell you what the manufacturer believes is affected and remediated. CISA advisories tell you how the issue fits into the wider critical infrastructure ecosystem and reinforce baseline defensive practices that are too often treated as obvious until an incident proves otherwise.

The Windows Angle Is the Admin Workstation, Not the PLC Cabinet

WindowsForum readers may wonder why a Siemens industrial advisory belongs in a Windows-centric publication. The answer is that Windows remains deeply embedded in the operational workflow around industrial systems, even when the vulnerable device itself is not a Windows endpoint. Engineering workstations, historian servers, HMI stations, remote access jump boxes, patch staging systems, and vendor support laptops often run Windows.

That makes Windows administration part of the OT security boundary. A vulnerable industrial communication node may be reachable only from an engineering VLAN, but the machines in that VLAN often authenticate users, browse management interfaces, stage firmware, retrieve logs, and run vendor software. If those Windows systems are poorly hardened, the segmentation story collapses.

The advisory’s mention of web and input-handling vulnerabilities should sharpen that point. Management access through a browser is common, convenient, and dangerous when trust boundaries are fuzzy. A compromised workstation can become the bridge that turns a theoretically isolated industrial device into a reachable target.

This is where traditional IT hygiene earns its place in OT risk management. Credential isolation, least privilege, application control, endpoint detection, browser hardening, PowerShell logging, patching of VPN clients, and disciplined remote access are not generic best practices. They are the controls that keep an industrial advisory from becoming a Windows-assisted intrusion path.

The Patch Is Straightforward; the Deployment Is Not

“Update to V5.0” is clean advice on paper. In a production environment, it becomes a negotiation among reliability, safety, vendor support, downtime, change boards, validation testing, and the calendar. That does not make the advice wrong. It makes planning essential.

Industrial operators should begin by identifying every SIMATIC CN 4100 in inventory and confirming its installed version. That sounds basic, but asset certainty is one of the hardest problems in OT security. Many organizations know what equipment they purchased but not what firmware is currently running, where it is reachable, which dependencies it supports, or what business process breaks if it is rebooted at the wrong time.
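As a rough illustration of that first step, the sketch below compares recorded firmware versions against the advisory's V5.0 boundary. The `Asset` record, its field names, and the assumption that versions are stored as dotted strings such as `V4.0.1` are ours, not Siemens'; how a given inventory tool actually reports CN 4100 firmware may differ. Records with a missing or unparseable version are flagged as unknown rather than silently treated as safe.

```python
import re
from typing import NamedTuple, Optional, Tuple

# Per the advisory: all versions before V5.0 are listed as affected.
FIXED_VERSION: Tuple[int, ...] = (5, 0)


class Asset(NamedTuple):
    name: str                 # hypothetical inventory label
    firmware: Optional[str]   # version string as recorded, or None if unknown


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn a string such as 'V4.0.1' or '5.0' into a tuple like (4, 0, 1)."""
    match = re.fullmatch(r"\s*[Vv]?(\d+(?:\.\d+)*)\s*", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def classify(asset: Asset) -> str:
    """Return 'affected', 'fixed', or 'unknown' for one inventory record."""
    if asset.firmware is None:
        return "unknown"
    try:
        version = parse_version(asset.firmware)
    except ValueError:
        return "unknown"
    # Pad short versions so that 'V5' compares equal to (5, 0), not below it.
    padding = (0,) * max(0, len(FIXED_VERSION) - len(version))
    return "affected" if version + padding < FIXED_VERSION else "fixed"


if __name__ == "__main__":
    inventory = [
        Asset("line-1-cn", "V4.0.1"),
        Asset("line-2-cn", "V5.0"),
        Asset("warehouse-cn", None),
    ]
    for asset in inventory:
        print(asset.name, classify(asset))
```

The "unknown" bucket is the one worth watching. It is the list of devices someone has to walk up to or log in to before the organization can say what it is actually running.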

Next comes exposure analysis. A device on an isolated, tightly controlled segment with no internet reachability and limited management access presents a different immediate risk than the same device reachable through a flat plant network, a poorly governed remote access path, or a shared engineering workstation. CVSS gives a severity baseline; network architecture determines urgency.

Then comes testing. Firmware updates in OT should not be treated like routine desktop cumulative updates, but neither should that caution become paralysis. A representative test environment, known-good backups, documented rollback procedures, and vendor-supported upgrade steps are what allow teams to move decisively without gambling with production.

Network Exposure Is Still the Control That Buys Time

CISA’s defensive guidance will sound familiar: minimize network exposure, keep control system devices off the internet, place control system networks behind firewalls, isolate them from business networks, and use secure remote access methods such as updated VPNs when remote access is required. The repetition is intentional. These are the controls that keep showing up because they keep failing in real incidents.

No internet exposure should be the easy part. The harder part is preventing accidental exposure through vendor support tunnels, temporary firewall rules, remote desktop convenience, dual-homed workstations, or “just for commissioning” exceptions that become permanent. OT networks often become porous not through grand architectural decisions, but through years of small operational compromises.

Firewalls are necessary but not sufficient. Segmentation must reflect function, not merely geography. Engineering workstations, HMIs, historians, domain controllers, jump hosts, vendor access systems, and industrial devices should not all share trust simply because they are “plant network” assets.

VPN guidance also deserves nuance. VPNs are not magic safety wrappers; they are software products with vulnerabilities, credentials, configuration debt, and endpoint dependencies. A VPN that lands a third-party technician directly into a sensitive network segment without strong authentication, device posture checks, session recording, and just-in-time access is not a mitigation. It is a controlled failure waiting to become uncontrolled.

Third-Party Components Are Now Part of OT Risk Ownership

One of the quiet shifts in industrial cybersecurity is that vendors and operators increasingly share risk around embedded third-party components. Siemens can package and support an update, but the underlying bug may originate in Java, OpenSSL, or another widely used library. Operators cannot patch those components independently in most appliances, yet they still inherit the exposure.

This creates a governance challenge. IT teams are used to asking whether a server runs a vulnerable version of a library. OT teams often must ask whether a product contains that library at all, whether the vulnerable code path is reachable, whether the vendor has released a tested image, and whether applying it affects certification or support.

The SIMATIC CN 4100 advisory illustrates why software bills of materials, while imperfect, have become a serious topic. Knowing what is inside an industrial product does not automatically fix vulnerabilities. But without that visibility, defenders are reduced to waiting for vendor advisories and manually mapping them against asset inventories.

The practical answer is not to demand that plant teams become open-source dependency analysts. It is to build procurement, maintenance, and vendor management processes that require timely vulnerability disclosure, supported update paths, clear affected-version statements, and realistic lifecycle commitments. In 2026, “it is an appliance” is no longer a security answer.

The CVSS Number Should Start the Conversation, Not End It

A 9.6 CVSS v3.1 score is serious, but industrial defenders should resist both extremes: panic and dismissal. Panic produces rushed changes without operational analysis. Dismissal produces a comforting fiction that because a device is “inside the plant,” the vulnerability is irrelevant.

The right response is structured triage. Confirm affected assets. Determine reachability. Identify compensating controls. Review logs and remote access paths. Schedule the update. Validate the system afterward. None of that is glamorous, but it is how organizations avoid turning advisories into either theater or emergencies.

CVSS v4.0 scoring can sometimes communicate nuance differently from v3.1, and this advisory’s lower v4.0 score should not be read as a downgrade in practical urgency. Scoring systems are models. They help prioritize, but they do not know the cost of stopping a production line, the maturity of a plant network, or the difficulty of coordinating a firmware window across a global manufacturing footprint.

For security teams reporting upward, the most honest message is not “we have a 9.6, everything is on fire.” It is “we have an affected industrial communication product with vendor-provided remediation, broad vulnerability classes, and potential impact to availability, integrity, and confidentiality; here is our asset count, exposure assessment, and update plan.”

Where the Plant Floor Meets the Patch Calendar

The friction between IT patch culture and OT maintenance culture is real, but it is often overstated as an excuse for inaction. Industrial operators do not need to patch like consumer laptops. They do need to patch like organizations that understand their own risk.

That means separating emergency actions from scheduled remediation. If a vulnerable device is reachable from a business network or remote access path, immediate segmentation changes may be more urgent than the firmware update itself. If the device is already tightly isolated and monitored, the update may be scheduled through the next validated maintenance window without pretending the issue does not exist.
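That split between emergency action and scheduled remediation can be written down as a small decision rule. What follows is a sketch under our own assumptions: the `Exposure` fields and `Action` labels are hypothetical, and a real plant would weigh more inputs (monitoring coverage, safety dependencies, vendor guidance) than three booleans.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NO_ACTION = "already at a fixed version"
    SCHEDULE_UPDATE = "update in the next validated maintenance window"
    RESTRICT_THEN_UPDATE = "restrict reachability now, then schedule the update"


@dataclass(frozen=True)
class Exposure:
    affected: bool                         # firmware is before V5.0
    reachable_from_business_network: bool  # hypothetical field names
    reachable_via_remote_access: bool


def triage(exposure: Exposure) -> Action:
    """Pick an action: reachability, not the score alone, sets urgency."""
    if not exposure.affected:
        return Action.NO_ACTION
    if (exposure.reachable_from_business_network
            or exposure.reachable_via_remote_access):
        # Segmentation changes come first; the firmware update still follows.
        return Action.RESTRICT_THEN_UPDATE
    return Action.SCHEDULE_UPDATE


if __name__ == "__main__":
    flat_network_device = Exposure(True, True, False)
    isolated_device = Exposure(True, False, False)
    print(triage(flat_network_device).value)
    print(triage(isolated_device).value)
```

Note that neither branch for an affected device ends without an update. Isolation changes the schedule, not the obligation.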

It also means rehearsing the boring parts. Backups of configurations, firmware repositories, out-of-band management contacts, vendor escalation channels, spare hardware, and rollback instructions should exist before a critical advisory lands. If the first time a team checks firmware recovery steps is during a vulnerability response, the security program is already paying interest on operational debt.

Windows administrators can help here more than they may think. They often own the tooling used for inventory, ticketing, remote access, authentication, endpoint control, and logging. If those systems can reliably show which workstations can reach industrial networks, which accounts have vendor access, and which devices have been touched during an upgrade, the OT team gains more than paperwork. It gains leverage.

Siemens’ Advisory Is a Test of Industrial Security Maturity

The most important thing about this advisory is not that it exists. Industrial vendors publish vulnerability advisories every month. The important thing is whether organizations can absorb the information and act without chaos.

A mature response begins with acknowledging the vendor’s fix and the affected version boundary. It continues by mapping that boundary against real assets, not assumed architecture diagrams. It ends only after remediation or compensating controls have been verified and documented.

Less mature organizations will forward the advisory to a distribution list, ask whether “we have any of these,” and wait. The delay may not matter every time. But the process failure will matter eventually, because industrial advisories are not rare anomalies anymore; they are the normal weather pattern.

The hard truth is that OT security now depends on the same disciplines that enterprise IT has spent decades building, plus a higher burden of operational caution. Inventory, patch management, network segmentation, identity governance, vulnerability triage, and incident response are not IT concepts awkwardly imposed on the plant. They are the price of running software-defined infrastructure in physical environments.

What This Siemens Alert Should Change Before the Next Maintenance Window

This advisory should not send teams into indiscriminate shutdown mode, but it should force a concrete review of SIMATIC CN 4100 exposure and update readiness. The organizations best positioned to handle it will be those that can answer asset, version, network, and ownership questions without starting a scavenger hunt.

- Siemens SIMATIC CN 4100 systems running versions before V5.0 should be treated as affected and prioritized for vendor-supported update planning.

- The advisory’s CVSS v3.1 score of 9.6 reflects potentially serious impact, but local exposure and segmentation should determine immediate operational urgency.

- CISA’s May 14 republication amplifies Siemens’ May 12 ProductCERT advisory rather than replacing the vendor as the primary technical source.

- Windows-based engineering workstations, jump servers, vendor laptops, and remote access systems should be reviewed because they often form the practical path to industrial devices.

- Network isolation, firewall policy, and tightly governed remote access can reduce risk while firmware updates are tested and scheduled.

- Asset inventory quality will determine whether this advisory becomes a controlled maintenance task or an expensive emergency discovery exercise.

Source: CISA Siemens SIMATIC | CISA