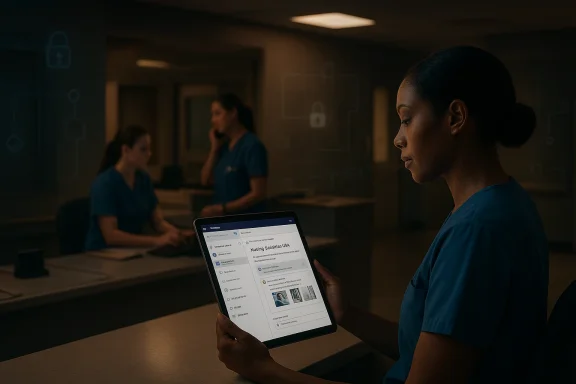

Late-evening nurses at Yonsei University Health System are now getting something that hospital staff everywhere have long wanted but rarely receive: fast, contextual answers without leaving the workflow they already use. In a move that blends hospital administration, low-code automation, and secure AI deployment, Yonsei is using Copilot agents to reduce repetitive work, surface guidelines faster, and improve the professionalism of support processes across the institution. The result is more than a productivity upgrade; it is a signal that hospital AI adoption is moving from experimentation to an operating model. Microsoft’s own reporting says Yonsei is building this around Copilot Studio, the Power Platform, and Microsoft Teams, with a strong emphasis on security, governance, and department-level ownership.

Hospitals are among the most demanding environments for any AI rollout because they combine rigid compliance requirements, high-stakes decision-making, and workflows that never stop. In that context, Yonsei University Health System’s approach is notable not because it is flashy, but because it is practical. Microsoft’s Source story describes a system where staff members had already been experimenting with AI individually, yet the organization recognized that broad adoption inside a hospital would only work if it fit security policies, network separation, and internal governance rules.

That distinction matters because many AI programs begin with enthusiasm and then stall when they collide with access control, document hygiene, and operational reality. Yonsei’s Digital Health Strategy Center appears to have treated those constraints as design inputs rather than obstacles, building a framework that could be safely used by frontline departments instead of a centralized lab team. Microsoft frames this as a citizen developer ecosystem, where departments build and refine their own agents around their own work.

The broader industry backdrop also helps explain why this matters. Microsoft has spent the last year pushing healthcare AI from broad ambition into specific products and partnerships. In October 2024, the company announced healthcare AI models, healthcare data solutions in Fabric, and the public preview of healthcare agent service in Copilot Studio, explicitly positioning agents for appointment scheduling, clinical trial matching, and patient triage. That same announcement also pointed to AI-driven nursing workflow tools as part of a wider healthcare push. (news.microsoft.com)

What Yonsei is doing sits naturally inside that arc, but with an important twist. Rather than focusing first on clinical automation, the hospital is targeting administrative and support tasks where AI can make an immediate difference without touching the highest-risk clinical decisions. Microsoft’s healthcare AI guidance has also been pushing in that direction, arguing that healthcare organizations can use agentic AI to automate repetitive processes, coordinate across systems, and reduce manual toil. (techcommunity.microsoft.com)

There is another reason this matters now: the enterprise AI market is rapidly shifting from “assistant” language toward “agent” language. Microsoft’s broader Copilot strategy now describes agents as the new apps in an AI-powered world, capable of executing workflows for teams and functions rather than simply drafting text. That makes Yonsei less of an isolated case study and more of a preview of how regulated institutions may operationalize AI in the next phase. (blogs.microsoft.com)

The Yonsei Model

Yonsei’s model is built around a simple idea: don’t force every user to become an AI expert, and don’t force every department to rebuild its process from scratch. Instead, give frontline teams tools that live inside familiar collaboration software and let them shape the agents around their own documents and recurring questions. Microsoft says this is being done in Teams, with the hospital using existing familiarity with Microsoft tools to make adoption feel natural rather than disruptive.

A familiar interface, not a new system

This is one of the most important design choices in the whole story. Hospital employees are already overloaded with alerts, handoffs, messages, and documents, so introducing a separate AI portal would almost guarantee friction. By embedding the agent into Teams, Yonsei lowers the adoption barrier and increases the odds that AI becomes a daily habit rather than a special-purpose tool.

The Microsoft narrative also suggests that this is a SaaS-based architecture rather than a custom-built internal AI stack. That is significant because hospitals can then evolve through configuration changes instead of recurring rebuilds, which is much easier to sustain operationally. In a field where IT teams are already stretched, the difference between maintenance and reinvention is often the difference between success and abandonment.

A second key choice is the use of Copilot Studio and the Power Platform, which makes the system accessible to staff who already know low-code tools like Power Automate. That means the hospital can encourage a broader population of internal builders without requiring every department to hire specialized AI engineers. Microsoft says the Center is training and guiding frontline teams to help them design responses and validate quality.

Why citizen development matters

The citizen developer concept is not just a buzzword here. It reflects a realistic division of labor: IT can set policy, security, and infrastructure guardrails, while departments close to the work can define what useful answers look like. That is especially important in hospitals, where a guideline that seems obvious to IT might be confusing to nurses or administrative staff in practice.

Microsoft’s broader platform messaging supports this model. The company says Copilot Studio and Copilot are meant to connect work data, systems of record, and business processes, allowing agents to support functions ranging from help desks to onboarding. That is exactly the kind of enterprise plumbing a hospital needs if it wants to scale AI without creating a chaotic patchwork of one-off bots. (blogs.microsoft.com)

The opportunity is not only efficiency. It is also standardization. When departments build their own Q&A agents from approved source documents, the organization can gradually converge on a shared knowledge layer while still preserving department-specific nuance. That is a subtle but powerful distinction, because hospitals often struggle with fragmented knowledge even when they have the same policies on paper.
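The division of labor described above can be made concrete with a small sketch: IT owns a fixed set of guardrails, and each department's agent definition is checked against them before it goes live. Everything here is hypothetical (the `AgentSpec` fields, the `internal://` source prefixes, the approved-channel set); it illustrates the idea and is not how Copilot Studio or the Power Platform actually express governance settings.

```python
from __future__ import annotations

from dataclasses import dataclass

# Guardrails owned by IT (hypothetical values, for illustration only).
APPROVED_SOURCE_PREFIXES = ("internal://guidelines/", "internal://policies/")
APPROVED_CHANNELS = {"teams"}


@dataclass
class AgentSpec:
    """What a department defines for its own agent (hypothetical schema)."""

    name: str
    department: str
    owner: str
    channel: str
    knowledge_sources: list[str]


def policy_violations(spec: AgentSpec) -> list[str]:
    """Return every way a department's agent falls outside IT's guardrails."""
    problems = []
    if not spec.owner:
        problems.append("agent has no accountable owner")
    if spec.channel not in APPROVED_CHANNELS:
        problems.append(f"channel not approved: {spec.channel}")
    for src in spec.knowledge_sources:
        # str.startswith accepts a tuple, so any approved prefix will match.
        if not src.startswith(APPROVED_SOURCE_PREFIXES):
            problems.append(f"unapproved knowledge source: {src}")
    return problems


spec = AgentSpec(
    name="Nursing Guidelines Q&A",
    department="Nursing",
    owner="nursing-education",
    channel="teams",
    knowledge_sources=["internal://guidelines/nursing/", "https://example.com/blog"],
)
print(policy_violations(spec))
```

The department keeps full control of what its agent says; IT only decides where content may come from and where the agent may run.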

Nursing Teams as the First Proof Point

Yonsei’s nursing teams seem to be the first group to see visible benefits, and that makes sense. Nurses are often the institution’s highest-volume knowledge workers: they answer questions, navigate procedures, manage handoffs, and communicate across departments all day long. Microsoft’s story emphasizes that they need timely access to accurate information because even small delays can affect service quality and operational flow.

The Q&A agent in daily practice

The Nursing Guidelines Q&A Copilot Agent is designed to surface the right guidance, reference images, and procedural details in one place. That matters because hospital documents are usually fragmented across formats, from text and tables to departmental references, and staff often have to search multiple places to piece together a complete answer. In a 24-hour operation, that fragmentation becomes more painful on nights and weekends.

In the example Microsoft describes, nurse Juhui Jung opens Teams and gets the test purpose, preparation steps, consent forms, and reference images in a single view. The practical value is obvious: fewer searches, fewer handoffs, and fewer chances to miss a detail. That is exactly what hospitals mean when they talk about improving professionalism: not just being faster, but being more consistent and more confident in the answer given.

The story also hints at a broader operational benefit: nurses can start relying on the agent first for repetitive questions. That changes the rhythm of work. Instead of interrupting colleagues or chasing down the same answer, staff can move more quickly from question to action, which is a small change that compounds across shifts.
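To make the "single view" idea concrete, here is a minimal sketch, assuming each guideline is stored as one structured record rather than scattered across files. The `Guideline` fields, the word-overlap matching, and the sample content are hypothetical placeholders; this is not Copilot Studio's retrieval mechanism, only an illustration of why structured source material makes a consolidated answer easy to assemble.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Guideline:
    """One procedure guideline stored as structured fields (hypothetical schema)."""

    title: str
    purpose: str
    preparation: list[str]
    consent_forms: list[str]
    reference_images: list[str] = field(default_factory=list)


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens with surrounding punctuation removed."""
    words = (w.strip(".,?!:;()").lower() for w in text.split())
    return {w for w in words if w}


def best_match(question: str, guidelines: list[Guideline]) -> Guideline | None:
    """Pick the guideline whose title shares the most words with the question.

    Simple word overlap stands in for the retrieval a real agent would use.
    """
    q = tokenize(question)
    best, best_score = None, 0
    for g in guidelines:
        score = len(q & tokenize(g.title))
        if score > best_score:
            best, best_score = g, score
    return best


def render(g: Guideline) -> str:
    """Assemble purpose, preparation, consent, and images into one answer."""
    lines = [g.title, f"Purpose: {g.purpose}", "Preparation:"]
    lines += [f"  {i}. {step}" for i, step in enumerate(g.preparation, start=1)]
    lines.append("Consent forms: " + (", ".join(g.consent_forms) or "none"))
    lines.append("Reference images: " + (", ".join(g.reference_images) or "none"))
    return "\n".join(lines)


# Placeholder content only; real text would come from approved guideline documents.
guidelines = [
    Guideline(
        title="Example imaging test",
        purpose="(purpose text from the approved guideline)",
        preparation=["Confirm patient identity", "Review preparation checklist"],
        consent_forms=["Consent form (placeholder name)"],
        reference_images=["positioning-example.png"],
    ),
]

match = best_match("What do I need before the imaging test?", guidelines)
print(render(match) if match else "No approved guideline found; ask a colleague.")
```

Because the answer is built from fixed fields, a missing consent form shows up as "none" instead of silently disappearing, and a question with no matching guideline falls back to a human rather than a guess.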

Real-world feedback loop

One of the strongest details in the Microsoft account is that nurses actively suggested improvements during testing. That tells us the deployment is not a static product drop but a feedback-driven process. In healthcare, that matters because the best workflow tools are the ones that evolve with frontline use rather than freezing the process in place.

The article also makes clear that document structure affects agent quality. That is a critical lesson for any hospital considering similar tools: AI does not magically fix poorly structured information. If the underlying documentation is inconsistent, even a strong model will struggle to produce reliable responses.

A few likely benefits are already visible:

- Faster access to procedure guidance.

- Reduced reliance on repeated manual searches.

- Better support for night and weekend shifts.

- Lower interruption burden on senior staff.

- More consistent execution of routine tasks.

Document Quality Becomes an AI Discipline

Yonsei’s story makes a point that many AI rollouts discover only after launch: the hardest part is often not the model, but the documents. Hospital guidelines are living artifacts, constantly revised, restructured, and redistributed, which makes a one-time static AI setup unsuitable. Microsoft says Yonsei’s teams are adjusting the agents based on recurring questions and refining the source documents over time.

Why “good enough for humans” is not enough for AI

A human can often interpret a messy guideline because humans infer missing context. AI systems, especially those grounded in internal documents, are less forgiving. Jaerin Jung from the IT team notes that even intuitive documents can be difficult for AI to interpret, which is why structure and ongoing testing are so important.

That observation is widely applicable beyond Yonsei. In many organizations, the first instinct is to ask whether the model is accurate enough. The better question may be whether the organization’s knowledge base is organized enough to support reliable answers in the first place. If the answer is no, the AI project is really a documentation project in disguise.

This is one of the deeper institutional changes AI creates: it forces organizations to treat knowledge management as a strategic function rather than a cleanup task. In a hospital, that can improve not only AI performance but also training, onboarding, and cross-department coordination. That is a much broader return than a narrow time-savings calculation.
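One way to act on that lesson is to check guidelines for structure before an agent is pointed at them. The sketch below assumes a hypothetical house template (`REQUIRED_SECTIONS`), a dictionary-shaped document, and a one-year review cadence; all three are illustrative choices, not Yonsei's actual rules.

```python
from __future__ import annotations

from datetime import date, timedelta

# Hypothetical house template: sections every guideline is expected to contain.
REQUIRED_SECTIONS = ("purpose", "preparation", "consent", "owner")
# Hypothetical review cadence; each hospital would set its own policy.
MAX_REVIEW_AGE = timedelta(days=365)


def readiness_issues(doc: dict, today: date) -> list[str]:
    """List structural problems that could weaken answers grounded in this document."""
    issues = []
    for section in REQUIRED_SECTIONS:
        if not doc.get(section):
            issues.append(f"missing section: {section}")
    # An image with no text description gives a text-based retriever nothing to match.
    for image in doc.get("images", []):
        if not image.get("alt_text"):
            issues.append(f"image without alt text: {image.get('file', '?')}")
    reviewed = doc.get("last_reviewed")
    if reviewed is None:
        issues.append("no review date recorded")
    elif today - reviewed > MAX_REVIEW_AGE:
        issues.append(f"review overdue since {reviewed + MAX_REVIEW_AGE}")
    return issues


doc = {
    "title": "Example imaging test",
    "purpose": "(purpose text)",
    "preparation": ["Confirm patient identity"],
    "consent": "",  # empty: the consent section was never filled in
    "owner": "(owning team)",
    "images": [{"file": "positioning-example.png", "alt_text": ""}],
    "last_reviewed": date(2024, 1, 15),
}

for issue in readiness_issues(doc, today=date(2025, 6, 1)):
    print("-", issue)
```

A document a human reads without trouble can still fail every one of these checks, which is the gap between "good enough for humans" and good enough for AI.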

Continuous refinement as the real innovation

The most promising part of the Yonsei model is probably its iterative nature. Staff are not just consuming AI outputs; they are reshaping source material based on what the agent gets wrong or fails to answer cleanly. That feedback loop turns the deployment into an organizational learning process.

Microsoft’s larger healthcare messaging also points in this direction. The company has repeatedly framed healthcare AI as a way to improve workflows, data integration, and operational insight, not as a plug-and-play magic trick. That is a more credible framing for hospitals, which need technology to fit reality rather than force a fantasy. (news.microsoft.com)

The practical implications are straightforward:

- Guidelines must be standardized.

- Images and tables need to be machine-readable where possible.

- Teams need ownership of updates.

- Testing must happen in real work conditions.

- Feedback must drive document revisions.
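The last point, feedback driving revisions, needs surprisingly little machinery. This sketch assumes a hypothetical log in which each agent interaction records the source document it drew on and whether the staff member found the answer useful; it ranks documents by failed or flagged answers so their owners know what to revise first. The `FeedbackEvent` schema and the outcome labels are illustrative assumptions.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedbackEvent:
    """One staff interaction with the agent (hypothetical logging schema)."""

    question: str
    source_doc: str | None  # guideline the answer was grounded in, if any
    outcome: str  # "ok", "no_answer", or "flagged" by the staff member


def revision_queue(events: list[FeedbackEvent], min_failures: int = 2) -> list[tuple[str, int]]:
    """Rank documents by how often answers drawn from them failed or were flagged.

    Questions with no grounding document are counted under "(coverage gap)",
    since they point at guidance that may not exist yet.
    """
    failures = Counter(
        event.source_doc or "(coverage gap)"
        for event in events
        if event.outcome in ("no_answer", "flagged")
    )
    return [(doc, n) for doc, n in failures.most_common() if n >= min_failures]


events = [
    FeedbackEvent("Which consent form for the imaging test?", "imaging-test", "flagged"),
    FeedbackEvent("Prep steps for the imaging test?", "imaging-test", "flagged"),
    FeedbackEvent("Where is the transfer checklist?", None, "no_answer"),
    FeedbackEvent("Night-shift contact for pharmacy?", None, "no_answer"),
    FeedbackEvent("Purpose of the imaging test?", "imaging-test", "ok"),
]

for doc, count in revision_queue(events):
    print(f"{doc}: {count} problem answers")
```

Separating a coverage gap from a flagged document matters because the fixes differ: one means rewriting an existing guideline, the other means writing a guideline that does not exist yet.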

Security and Governance First

If the Yonsei story has a center of gravity, it is security. Microsoft says the hospital had to find a form of AI that could be deployed while maintaining network separation and security policies, which is a very different brief from rolling out a generic consumer chatbot. That constraint shaped every other architectural decision in the project.

A hospital cannot afford casual AI

The hospital environment is uniquely unforgiving. Internal guidelines may contain operationally sensitive information, and any AI that touches those materials has to respect access controls, data boundaries, and policy frameworks. Yonsei’s choice of a SaaS model with external AI endpoints reflects a decision to prioritize controlled flexibility over building and maintaining a bespoke internal model stack.

This is where Microsoft’s wider healthcare platform strategy matters. The company has been emphasizing safe and responsible healthcare agents, healthcare data solutions, and governance layers that make AI more usable in regulated settings. In October 2024, Microsoft explicitly positioned healthcare agent service in Copilot Studio as a way to build patient-facing and clinical workflow tools while helping organizations meet industry expectations. (news.microsoft.com)

That is not accidental language. Healthcare buyers want innovation, but they also want evidence that the vendor understands the compliance burden. Yonsei’s architecture suggests the hospital is looking for a platform that can evolve without repeatedly reopening its security review process. That is a major reason hospitals often prefer configurable cloud services over internal AI infrastructure.

The operational logic of separation

The decision to keep network separation intact is more than a technical checkbox. It is what makes adoption politically and operationally possible in the first place. Without that guarantee, departmental enthusiasm would likely collide with IT caution, and the project would bog down in exceptions.

Microsoft’s agent platform messaging reinforces this broader enterprise posture. The company says agents draw on authorized work data and can support specific workflows, but that also implies enterprises must carefully control what those agents can see. In a hospital, “authorized” is a very precise word. It defines what AI can safely know, not just what users happen to be curious about. (blogs.microsoft.com)

The strongest security lesson from Yonsei is therefore cultural as much as technical:

- Build within policy, not around it.

- Keep governance close to the business.

- Treat documents as protected assets.

- Design for auditability.

- Assume workflows will change.
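To illustrate what "authorized" and "design for auditability" can mean in practice, here is a minimal sketch that filters documents by role before anything is used to ground an answer, and records which document versions were eligible. The per-document `allowed_roles` sets, the in-memory `AUDIT_LOG`, and the function names are hypothetical; they are not Microsoft APIs, and a real deployment would typically rely on the platform's own permission and logging controls.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Document:
    doc_id: str
    version: int
    allowed_roles: frozenset[str]  # hypothetical per-document access list
    text: str


# In-memory stand-in for a durable, append-only audit store.
AUDIT_LOG: list[dict] = []


def authorized_sources(user_role: str, docs: list[Document]) -> list[Document]:
    """Keep only documents this role may see, before any answer is generated."""
    return [d for d in docs if user_role in d.allowed_roles]


def ground_and_log(user: str, user_role: str, question: str, docs: list[Document]) -> list[str]:
    """Return the IDs of documents eligible to ground an answer, and audit the request.

    A real agent would pass these documents to the model; this sketch stops there.
    """
    sources = authorized_sources(user_role, docs)
    # Record who asked what, and which document versions could ground the reply.
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": user_role,
        "question": question,
        "sources": [f"{d.doc_id}@v{d.version}" for d in sources],
    })
    return [d.doc_id for d in sources]


docs = [
    Document("nursing-guidelines", 3, frozenset({"nurse", "nurse_manager"}), "(text)"),
    Document("hr-salary-bands", 1, frozenset({"hr"}), "(text)"),
]

print(ground_and_log("user-001", "nurse", "Prep steps for the imaging test?", docs))
print(AUDIT_LOG[-1]["sources"])
```

Filtering before generation, rather than after, means a document the user may not see never reaches the model at all; logging document versions lets a reviewer reconstruct which guidance was available when a given answer was produced.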

Administrative Efficiency Becomes Clinical Capacity

The most immediate value of Yonsei’s agents is in administrative and support processes, but that should not be mistaken for a limited use case. In healthcare, administrative time saved often becomes clinical time recovered. When nurses and support staff no longer have to spend time hunting for documents or clarifying routine questions, they can redirect attention toward patients, coordination, and judgment-intensive work.

Why admin is the smart first step

Microsoft has been explicit that healthcare organizations are also complex enterprises with scheduling, compliance, billing, and supply chain processes behind the scenes. Its healthcare agentic AI guidance says those repetitive multi-step tasks are exactly where agents can streamline work and reduce burden. Yonsei’s focus on support processes fits that logic neatly. (techcommunity.microsoft.com)

That sequence is also strategically sound. If an organization starts with clinical decision-making, the stakes are immediately higher and the path to trust is much longer. Starting with administrative and support use cases lets the hospital prove the value of the platform in lower-risk settings before expanding into more sensitive territory.

Microsoft’s broader healthcare announcements point in the same direction. The company has already highlighted AI tools for nursing documentation, patient triage, and connected care experiences. Yonsei’s stepwise approach feels like an institutional version of that same progression: learn, validate, then expand. (news.microsoft.com)

Clinical spillover without clinical risk

This matters because the line between administrative and clinical work is not always clean in a hospital. Administrative accuracy affects clinical flow, and poor documentation support can create delays that ripple into patient care. By improving support processes first, Yonsei may be quietly creating the conditions for safer and smoother clinical work later.

There is also an enterprise professionalism angle. When staff can answer questions promptly and with fewer handoffs, the institution appears more organized, more reliable, and more responsive. In competitive healthcare markets, those qualities are not cosmetic; they shape patient trust, staff morale, and referral confidence. Professionalism, in this sense, is operational trust made visible.

The likely payoff includes:

- Less repetitive clarification work.

- Better support across shifts.

- Faster response to procedural questions.

- More time for patient-facing tasks.

- Reduced strain on subject-matter experts.

The Microsoft Platform Strategy Behind the Story

Yonsei’s deployment is not just a local IT story; it is also a case study in Microsoft’s broader platform strategy. The company is positioning Copilot Studio and the Power Platform as the foundation for a broad agent ecosystem, and Yonsei shows how that strategy can land in a regulated institution with real operational constraints. (blogs.microsoft.com)

From assistant to agent to workflow layer

Microsoft’s own language has evolved quickly. In late 2024, the company said Copilot Studio enables organizations to create, manage, and connect agents, and described agents as the new apps for an AI-powered world. That is an important framing shift because it moves the conversation away from chat and prompts and toward operational systems. (blogs.microsoft.com)

Yonsei is effectively validating that thesis in a hospital context. The agent is not functioning as a novelty chatbot; it is acting as a workflow layer inside Teams, grounded in approved documents and department knowledge. That is exactly the sort of embedded AI Microsoft wants enterprises to adopt.

It also helps Microsoft make a broader claim about extensibility. If a hospital can build useful agents for nursing guidelines and support tasks, then similar patterns can be extended to other departments. The platform story becomes more persuasive when customers can see a path from one concrete win to a portfolio of use cases.

Competitive implications

For rivals, the message is clear: vertical AI must now prove it can live inside the systems people already use. Standalone tools have to fight for attention, while platform-embedded agents inherit distribution, permissions, and familiarity. That gives Microsoft a strong hand in enterprise healthcare, especially where organizations already depend on Teams and the broader Microsoft stack. (blogs.microsoft.com)

The flip side is that Microsoft must keep proving governance and reliability. Hospitals will not tolerate flashy AI that cannot be audited or controlled. So the competitive battleground is not just model quality; it is operational trust, configurable security, and the ability to keep pace with change without breaking the workflow. That is a harder contest to win, but also a more durable one. (blogs.microsoft.com)

Key platform takeaways:

- Microsoft is making Copilot a workflow layer, not a side tool.

- Copilot Studio is the bridge between generic AI and regulated enterprise use.

- Power Platform lowers the barrier to local customization.

- Teams provides an adoption surface hospitals already trust.

- Governance is becoming a product feature, not an afterthought.

Enterprise vs Consumer Impact

Although Yonsei’s use case is firmly enterprise-focused, it has broader significance for how people think about AI in healthcare. Consumers tend to see AI as a personal assistant, but hospitals see it as a coordination engine. Those are related ideas, yet they solve different problems and require different safeguards. (news.microsoft.com)

Why the enterprise case is harder

In consumer settings, a user may accept some ambiguity if the assistant is convenient. In a hospital, ambiguity can create operational risk. That is why enterprise AI needs document control, access governance, validation loops, and role-specific design. Yonsei’s approach is more demanding, but it is also more credible because it begins where accountability already exists.

Microsoft’s healthcare work reflects this tension. The company has both consumer-facing health ambitions and enterprise healthcare agent services, but the hospital story depends on the enterprise side being trustworthy enough to support real work. Yonsei’s implementation shows what that trust layer looks like in practice. (news.microsoft.com) Handled well, the consumer and enterprise sides can reinforce each other. As more healthcare organizations normalize AI-assisted workflows, public comfort with AI in medicine may increase. The danger is if consumer expectations outpace hospital governance. Then the conversation shifts from productivity to liability very quickly. (news.microsoft.com)

What consumers may not see

What a patient sees as “the hospital answered my question faster” may actually be the result of a whole hidden system: better guidelines, cleaner documents, a low-code agent, trained staff, and a secure architecture. That invisible stack is where the real work happens. In that sense, enterprise AI is doing the unglamorous groundwork for any future patient-facing AI experience.

The broader implication is that hospitals will likely adopt AI in layers:

- Internal admin and support agents.

- Department-specific knowledge agents.

- Clinical documentation and workflow assistance.

- Carefully validated decision support.

- Potentially, patient-facing interactions.

Strengths and Opportunities

Yonsei’s implementation stands out because it is rooted in actual hospital operations rather than speculative AI theater. It combines a familiar interface, secure architecture, and department-level ownership in a way that could scale across a complex healthcare institution. The opportunity is not just faster answers; it is a more disciplined way to manage institutional knowledge.

- Lower friction adoption by using Teams, which staff already know.

- Security-first design that respects hospital network separation.

- Citizen developer model that lets departments own their own agents.

- Faster access to guidelines for nurses.

- Better document discipline through iterative cleanup and validation.

- Scalability across administrative, research, and clinical support workflows.

- Strategic alignment with Microsoft’s healthcare platform and Copilot Studio roadmap. (blogs.microsoft.com)

Risks and Concerns

The same qualities that make this approach promising also create real risks. AI in a hospital cannot be treated as a simple productivity hack, and the more deeply it becomes embedded in daily operations, the more important governance, testing, and documentation quality become.

- Document quality problems can produce weak or misleading answers.

- Overreliance risk may cause staff to trust the agent too quickly.

- Security gaps may occur if updates outpace policy review.

- Workflow fragmentation may appear if departments build inconsistent agents.

- Change management burden could slow adoption if training is uneven.

- Scalability limits may emerge if support processes are automated faster than governance matures. (techcommunity.microsoft.com)

- Clinical boundary creep may tempt organizations to move too quickly from admin support to higher-risk use cases. (news.microsoft.com)

Looking Ahead

Yonsei’s road map suggests a staged expansion: start with administrative and support processes, then gradually extend the same knowledge-and-validation model into more complex hospital functions. That is likely the right move because it gives the organization a way to prove value while improving its governance muscle at the same time. Microsoft’s framing of AI transformation, or AX, reinforces that this is a multi-step journey rather than a one-off deployment.

The next interesting question is not whether the hospital can build more agents, but whether it can build a durable institutional habit around maintaining them. That means ongoing document stewardship, structured feedback from frontline staff, and clear ownership for every knowledge domain. If Yonsei gets that part right, the system can become a foundational layer for hospital-wide operations rather than a pilot that fades after the first wave of enthusiasm.

What to watch next:

- Expansion from nursing guidelines into other department knowledge bases.

- More standardized internal document formats for AI readiness.

- Additional Teams-native agents for support workflows.

- Staged movement into clinical documentation support.

- Evidence of measurable time savings or reduced inquiry volume.

- New governance practices for validating AI outputs over time.

Source: Microsoft Source The Future of Medical Innovation with AI: Yonsei University Health System Uses AI Agents to Enhance Efficiency and Professionalism in Administrative & Support Processes - Source Asia
