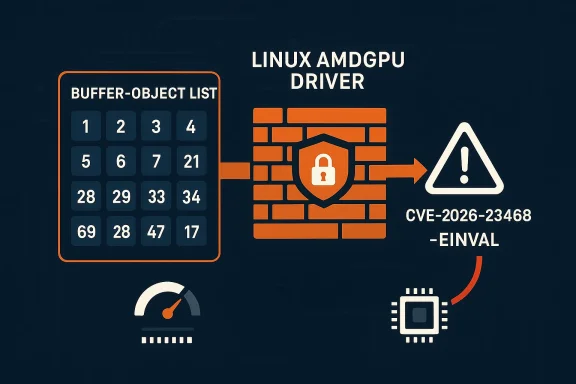

A newly published Linux kernel CVE is drawing attention for a reason that is easy to miss at first glance: it is not a flashy code-execution bug, but a resource-exhaustion flaw in the AMDGPU driver that can let userspace request an absurd number of buffer-object list entries and consume far more memory and CPU time than intended. The fix, now tracked as CVE-2026-23468, adds a hard ceiling of 128k BO list entries and rejects larger requests with -EINVAL, closing off a path to memory exhaustion and pathological processing delays. Microsoft’s security guidance has already surfaced the CVE entry, while the kernel-side description makes clear that the goal is predictable performance as much as security hardening.

The issue is straightforward but important: the AMDGPU driver accepted a bo_number field from userspace and used it to size and process a buffer-object list. Even though previous checks prevented classic multiplication overflow, they did not stop an attacker or stress test from asking for a vastly oversized list, which could still drive memory allocation into the gigabytes and make list processing take an unreasonably long time. The upstream fix introduces a hard limit instead of relying on arithmetic validation alone, a pattern that kernel maintainers often use when “technically valid” inputs are still operationally dangerous.

That distinction matters because people hear “overflow check passed” and assume the code is safe. It was safe from one narrow class of bug, but not from the broader reality that large, attacker-controlled allocations can be just as disruptive as malformed ones. In a driver path that may run often on graphics-heavy systems, even a benign-looking scalability issue can become a practical denial-of-service vector when the input is untrusted.

This is also a good example of how Linux kernel security increasingly blends with reliability engineering. The patch description explicitly says the new cap is “more than sufficient” for realistic workloads, including large scenes with many buffers, which tells you the maintainers were balancing usability against abuse resistance rather than simply slamming the door on legitimate workloads. That kind of measured constraint is typically where high-quality kernel fixes end up: narrow enough to preserve functionality, strict enough to force predictable behavior.

For WindowsForum readers, the Microsoft angle is not that Windows is affected by an AMDGPU Linux kernel bug, but that Microsoft’s vulnerability catalog now tracks the CVE and can surface it through security tooling and advisories. In mixed estates, especially cloud, virtualization, and Linux-hosted developer environments, that visibility matters, because a Linux kernel issue can still become an enterprise issue when it lives inside infrastructure an organization depends on.

Background

AMDGPU is one of the Linux kernel’s most complex and frequently exercised drivers. It sits at the intersection of memory management, scheduling, command submission, and display handling, so small input-handling mistakes can have outsized effects on system stability. The BO list path involved here is a classic example: it is meant to help userspace describe a set of buffer objects, but once the driver accepts that description, it must trust the count, allocate for it, and walk it.

The kernel has long treated malformed inputs and oversized inputs as different problems. A multiplication overflow check prevents a dangerous wraparound when calculating total allocation size, but it does not answer the question, “Should the kernel honor a request this large at all?” That is the key insight behind this CVE. The patch moves the code from mathematically safe to operationally bounded.

That shift is not academic. GPU drivers tend to be exposed to high-volume, high-frequency workloads, and they often sit in environments where userspace can be partially trusted but not fully controlled. Containers, desktop sessions, CI jobs, remote graphics workloads, and multi-tenant compute nodes all give userspace a way to stress kernel paths repeatedly. A limit of 128k entries is therefore less about avoiding a single crash and more about preventing a class of “death by giant request” problems.

The wording of the fix also tells a story about maintainership. The kernel comment says the limit is sufficient for any realistic use case, including a single list containing all buffers in a large scene, which is the kind of domain-specific sanity check that usually emerges from people who know how the subsystem is actually used. In other words, this is not a random cap; it is an informed boundary meant to preserve real workloads while rejecting pathological ones.

Why this matters more than a simple overflow bug

A lot of security reporting focuses on memory corruption, but resource exhaustion bugs are often just as useful to an attacker, especially on shared hosts or systems with tight memory budgets. If a userspace process can make the kernel allocate much more memory than intended, it can degrade the machine, stall critical services, or trigger OOM behavior that spreads pain beyond the offending process.

In the GPU space, that matters because graphics stacks are already juggling large buffers, large scenes, and expensive synchronization. A bug that adds another layer of unbounded processing creates a situation where memory pressure, scheduling delay, and user-visible sluggishness can all stack on top of each other. That is why the fix is framed not just as a security boundary but as a way to keep performance predictable.

What the Vulnerability Actually Does

The vulnerable path begins when userspace supplies the bo_number field. In the pre-fix code, the driver could accept an arbitrary count and proceed to allocate or process the list as long as the arithmetic around the allocation did not overflow. That left the door open to extremely large but still “valid” input sizes.

The practical effect is twofold. First, the kernel may allocate a huge amount of memory for the list bookkeeping itself. Second, it may spend a long time iterating over that list, which increases CPU consumption and can make the system feel unresponsive even if it does not immediately crash. That combination is why this bug is best understood as predictable denial of service rather than a one-shot crash primitive.
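To make the gap concrete, here is a minimal userspace sketch of an overflow-only check. It is not the driver's code: the helper name `checked_list_size` and the 24-byte per-entry size used in the usage note are assumptions chosen for illustration. The point is that the multiplication can be perfectly safe while the resulting size is still enormous.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/*
 * Hypothetical helper, not the driver's function.
 * Computes count * entry_size with a wraparound guard.
 * Returns 0 and stores the byte count on success, -1 if the product
 * would not fit in size_t.
 */
static int checked_list_size(uint32_t count, size_t entry_size, size_t *out)
{
    assert(out != NULL);

    /* Arithmetic check only: says nothing about whether the size is sane. */
    if (entry_size != 0 && count > SIZE_MAX / entry_size)
        return -1;

    *out = (size_t)count * entry_size;
    return 0;
}
```

With an assumed 24 bytes of bookkeeping per entry, a count of 100,000,000 passes this check and yields 2,400,000,000 bytes, roughly 2.4 GB. The arithmetic is correct, yet honoring the request would still be a problem, which is the distinction the fix addresses.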

The logic flaw in plain English

The driver asked, “Is this allocation arithmetic safe?” but not, “Is this request sane?” That is a subtle but important distinction. A request can be safe to compute and still be disastrous to honor.

By adding a hard cutoff, the maintainers converted the issue into a normal bounds-check problem: if you ask for more, you get -EINVAL. That is a clean failure mode, and in kernel work that is usually preferable to trying to gracefully process arbitrary input sizes on demand.

- The bug is triggered through a userspace-controlled count field.

- The old check prevented wraparound, not scale abuse.

- Oversized requests could still consume excessive memory.

- Large inputs could also increase kernel processing time dramatically.

- The fix rejects requests above the new hard limit.

Why the Limit Makes Sense

This is also a defense-in-depth move. If one validation layer can be bypassed in a way that still permits pathological input, a second, higher-level policy check closes the gap. The old code was trying to prove “this integer math is correct”; the new code adds “this request is not too large to be reasonable.” Those are different jobs, and both are necessary.

The choice of 128k entries is notable because it is large enough to be effectively invisible to normal users, yet small enough to stop memory blowups before they become serious. That is the sweet spot kernel engineers aim for when they set a ceiling in a hot path: high enough to avoid false positives, low enough to bound resource usage.

It reinforces a lesson for driver authors. When a structure is ultimately fed by userspace, the question is not whether the kernel can technically handle a number, but whether it should. A sane upper bound is often the most robust and maintainable answer. That is especially true in subsystems where state grows proportionally with attacker-controlled input.

- Hard limits are often simpler than layered arithmetic guards.

- Large-but-valid inputs can still be dangerous.

- User-controlled counts should be treated as policy decisions, not just numbers.

- Predictable failure is better than unpredictable strain.

- Kernel hot paths benefit from explicit ceilings.
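The layered approach described in this section can be sketched in a few lines of C. This is an illustration under stated assumptions, not the upstream patch: the macro `BO_LIST_MAX_ENTRIES`, the function `validate_bo_count`, and the reading of “128k” as 128 * 1024 are all hypothetical choices made for the example.

```c
#include <assert.h>
#include <errno.h>
#include <stddef.h>
#include <stdint.h>

/* Assumption: "128k" interpreted as 128 * 1024; the macro name is hypothetical. */
#define BO_LIST_MAX_ENTRIES (128u * 1024u)

/*
 * Hypothetical validator for a userspace-supplied entry count.
 * Returns 0 and stores the byte size on success, -EINVAL otherwise.
 */
static int validate_bo_count(uint32_t bo_number, size_t entry_size, size_t *bytes)
{
    assert(bytes != NULL);

    /* Policy check: refuse counts beyond what a realistic workload needs. */
    if (bo_number > BO_LIST_MAX_ENTRIES)
        return -EINVAL;

    /* Arithmetic check: still guard the multiplication against wraparound. */
    if (entry_size != 0 && bo_number > SIZE_MAX / entry_size)
        return -EINVAL;

    *bytes = (size_t)bo_number * entry_size;
    return 0;
}
```

Placing the policy check first means an oversized request fails before any size computation or allocation happens, so the worst-case cost of a rejected call stays constant regardless of the count supplied.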

Security and Exploitability

This CVE does not read like a classic privilege-escalation path, and that is worth stating plainly. The primary danger described in the fix is resource exhaustion: too much memory, too much processing time, too much opportunity for a local actor to make the machine behave badly. That makes it a denial-of-service issue first and foremost.

But DoS bugs are not low-value just because they are less glamorous. On managed hosts, build servers, thin-client systems, and desktop environments used for GPU-intensive workflows, a local kernel resource exhaustion path can be enough to disrupt service for other workloads or users. If the affected machine is part of a larger platform, the blast radius may exceed the single process that triggered it.

Local versus remote risk

From the text available, the trigger comes from userspace, which implies local reach rather than a network-facing attack surface. That still leaves plenty of room for abuse in environments where untrusted local code is common, such as shared workstations, container hosts, and CI/CD systems that process third-party workloads. Local does not mean harmless.

It also means defenders should think in terms of trust boundaries rather than just IP addresses. If a container host, a developer workstation, or a GPU compute node accepts code from multiple tenants or projects, the kernel path can become part of a larger multi-tenant resilience problem. That is why enterprise teams often care about these “mere” resource limits more than casual observers expect.

- Most likely impact: local denial of service.

- Higher risk in multi-user or multi-tenant Linux systems.

- More relevant where GPU workloads are frequent and large.

- Less about data theft than about availability and stability.

- Still security-relevant because untrusted userspace can drive it.

Relationship to Prior AMDGPU Fixes

AMDGPU has seen its share of stability fixes over the years, and that is exactly why this CVE feels familiar to kernel watchers. The driver handles complex state transitions and enormous buffers, so maintainers repeatedly tighten the same broad areas: validation, allocation sizing, buffer lifetime, and cleanup logic. This new fix fits squarely into that pattern.

What is different here is the nature of the bug. It is not an exotic memory-corruption flaw, and it is not a race in the usual sense. It is a policy failure: the driver trusted a count that should have been bounded before any deep processing began. That makes the fix conceptually simpler, but not less important.

Why these bugs keep surfacing

GPU drivers are deeply stateful. Every buffer list, queue submission, and command stream is a little contract between userspace and the kernel, and the kernel must enforce the rules without becoming so restrictive that it breaks legitimate workloads. That balancing act guarantees recurring edge cases, because the pressure to support larger, more complex scenes never really stops.

The history of graphics-driver hardening suggests a recurring pattern: maintainers first eliminate catastrophic corruption, then tighten scale limits, and then refine performance so the system fails gracefully under stress. CVE-2026-23468 looks very much like part of that second wave. It is about making the driver behave predictably when inputs are extreme.

- AMDGPU has a long history of robustness fixes.

- Modern graphics workloads increase pressure on driver limits.

- Policy checks often follow correctness checks.

- Large-user-input bugs can emerge even when overflow is already handled.

- The trend is toward stricter, more predictable boundaries.

Enterprise Impact

For enterprise administrators, the biggest takeaway is that this bug is about operational control, not just security patching. If a system lets users create giant BO lists, the result could be memory pressure, delayed rendering, degraded desktop responsiveness, or outright process and system instability. In shared infrastructure, that can translate into ticket noise, reduced uptime, and frustratingly hard-to-reproduce failures.

This is particularly relevant for organizations running Linux on AMD hardware in workstation fleets, VDI-like setups, or GPU-enabled compute nodes. In those environments, the GPU driver is not a niche component; it is part of the platform’s core user experience and sometimes its revenue engine. A bug that slows or starves the GPU stack is therefore an availability problem, not a footnote.

Operational fallout

Even when a vulnerability does not become an exploit in the wild, it can still drive costly operational behavior. Teams may need to validate kernel versions, confirm distro backports, test workloads under load, and watch for regression risk after updates. That work is good for security, but it consumes time and attention that most organizations would rather spend elsewhere.

The challenge is that graphics issues often sit at the awkward intersection of security and performance. Security teams want the patch installed, while workstation and platform teams want assurance that a new limit will not disrupt legitimate rendering or compute jobs. In that sense, the 128k limit is high enough to avoid obvious functional breakage, but it still deserves validation in very large or unusual pipelines.

- Expect this to be handled as an availability-hardening update.

- Validate on real workloads before broad rollout in graphics-heavy environments.

- Pay attention to distro backports, not just upstream commits.

- Watch for any user-facing regressions in extreme-scene rendering.

- Treat this as part of normal Linux kernel hygiene on AMD systems.

Consumer Impact

For consumers, the most likely symptom would not be a dramatic security alert but a frustrating slowdown, application hang, or crash in a graphics-heavy session. That is exactly why bugs like this are easy to underestimate: they often feel like “just another driver problem” until they pile up into recurring instability.

Gamers, creators, and Linux desktop users running AMD graphics hardware are the audience most likely to notice if a malformed or pathological workload can stress the BO-list path. In ordinary usage, most people will never get anywhere near 128k entries, which is why the patch should be effectively invisible for day-to-day users. That is the ideal outcome for a fix of this kind.

What users should expect

The practical expectation is that updated kernels should behave the same for normal workloads and reject absurd ones cleanly. If anything changes visibly, it would more likely be in edge-case behavior around pathological stress tests or unusual graphics applications. For the vast majority of users, this should land as a quiet stability improvement.

That said, users who run bleeding-edge kernels, custom graphics stacks, or experimental workloads may encounter the new limit sooner than mainstream desktop users. In those settings, a tighter ceiling is not a flaw; it is a safety rail. The right response is to confirm that the workload remains within sane operating assumptions.

- Most consumers will never notice the change.

- Stability should improve under extreme or malformed inputs.

- Graphics-heavy workloads are the most relevant test case.

- Experimental users may be the first to encounter the new limit.

- The patch is designed to be invisible in normal desktop use.

The Microsoft Angle

Microsoft’s inclusion of the CVE in its security ecosystem is a reminder that Linux kernel vulnerabilities now live in a much broader enterprise visibility layer than they once did. Even though the flaw is entirely in upstream Linux, security teams using Microsoft tooling may still see it in their dashboards, inventories, or vulnerability workflows. That makes the advisory relevant beyond the AMDGPU community.

The broader significance is that cross-platform vulnerability management has become the default for many organizations. Linux hosts, containers, and cloud images are no longer managed in separate mental silos, and a kernel bug can affect patch cadence, compliance reporting, and risk scoring across the entire estate. In that environment, a small driver fix can have surprisingly broad administrative consequences.

Why visibility matters

One of the easiest mistakes in modern IT is assuming that a Linux CVE is only for Linux specialists. In practice, many enterprise defenders care less about the technical origin and more about whether a VM image, host kernel, or appliance build includes the affected code. That is why public CVE tracking, even for seemingly niche issues, has become operationally valuable.

And because Microsoft often maps Linux-related issues into product-specific guidance, the same CVE can become relevant to cloud teams, endpoint teams, and security operations teams at once. That is not duplication; it is the reality of heterogeneous fleets. The more integrated the environment, the less isolated a kernel bug becomes.

- Microsoft visibility increases the reach of the advisory.

- Mixed estates need Linux kernel awareness in security workflows.

- CVE tracking can influence compliance and reporting.

- Different teams may see the same issue differently.

- Cross-platform estates make kernel bugs operationally visible.

Strengths and Opportunities

The good news is that this is the kind of vulnerability Linux kernel maintainers know how to fix well: the remedy is narrow, understandable, and unlikely to create major architectural churn. It also reflects a maturing approach to defensive coding, where the kernel says “no” to obviously unreasonable requests instead of trying to be infinitely accommodating.

There is also an opportunity here for organizations to improve their patch discipline around graphics stacks, which are often treated as less urgent than network-facing components. In reality, drivers can become availability bottlenecks just as easily, especially when they mediate heavily used local workflows. A clean input cap is a good reminder that kernel hardening is not only about exploits; it is about resilience.

- The patch is conceptually simple and easy to reason about.

- It preserves realistic workloads while blocking abuse.

- It improves predictability in a kernel path.

- It reinforces defense-in-depth in driver validation.

- It may prompt better workload testing in enterprise GPU environments.

- It showcases maintainers applying domain knowledge to set a sane ceiling.

- It should reduce stress on memory and processing in extreme cases.

Risks and Concerns

The main concern is that resource exhaustion bugs often get dismissed because they sound less severe than memory corruption. That is a mistake. In infrastructure, especially on multi-user Linux hosts, the ability to drive the kernel into excessive allocation or prolonged processing can be enough to disrupt business-critical workloads.

There is also the usual backporting risk. A fix that is clean in mainline can be trickier in older distro kernels, and organizations running long-term support branches may not receive identical code even when they receive the fix. That means administrators should verify the actual vendor backport, not just the CVE name.

Where caution is warranted

Another concern is regression risk in unusually large graphics pipelines. Even though 128k is a generous limit, some specialized workloads may depend on edge-case behavior that developers did not anticipate. If a vendor or distribution backport changes surrounding logic, the impact could show up as new failures that are hard to diagnose at first.

Finally, the existence of this bug is a reminder that bound checks are only as strong as the policy behind them. If other driver paths still accept oversized structures without a comparable ceiling, the same class of issue can recur elsewhere. In that sense, this fix is valuable, but it is not the last word on GPU-side input hygiene.

- Resource exhaustion should be taken seriously, not downgraded.

- Backport differences can make vendor verification essential.

- Large specialized workflows may warrant regression testing.

- Similar issues could exist in adjacent driver paths.

- Security tooling may overstate or understate practical exposure depending on inventory.

- Local-only bugs still matter on shared or tenant-facing systems.

- Kernel fixes can improve safety while still creating edge-case compatibility questions.

Looking Ahead

The most likely next step is broad distro and vendor backporting, followed by routine incorporation into enterprise kernel baselines. Because the fix is simple and the limit is generous, this should be a relatively low-drama remediation cycle compared with more invasive fixes. Still, security and platform teams should confirm that the exact kernel build they run includes the limit, not just a generic AMDGPU update.

Longer term, this CVE may reinforce a familiar lesson in kernel development: if a user-controlled count can grow linearly into memory, time, or state explosion, the code needs both arithmetic checks and explicit bounding. That principle applies well beyond AMDGPU. It is the kind of defensive rule that keeps mature systems stable when the inputs stop being polite.

What to watch next

- Vendor backports into long-term-support kernels.

- Confirmation that downstream packages preserve the 128k cap.

- Any reports of regressions in unusually large GPU workloads.

- Whether adjacent AMDGPU paths receive similar sanity limits.

- Security tooling updates that map the CVE into enterprise dashboards.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center