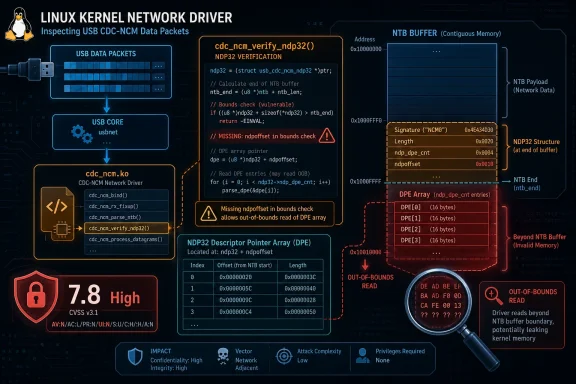

CVE-2026-23447 is a narrow Linux kernel bug with broader implications for anyone running USB networking stacks on affected systems. The flaw sits in the cdc_ncm driver’s NDP32 verification path, where the kernel failed to account for ndpoffset when checking the bounds of the descriptor pointer entry (DPE) array. That omission can produce out-of-bounds reads when the NDP32 structure is positioned near the end of an NTB, which is why the issue is now tracked as a security vulnerability with a high CVSS 3.1 score in the Microsoft Security Update Guide. The published description makes clear that this is a follow-on to the earlier NDP16 fix, not a wholly separate design failure, and that matters because it shows how one validation mistake can echo across closely related code paths.

Overview

The underlying problem is a classic kernel hardening story: a parser or verifier trusted a length relationship that was true only if a structure started at offset zero. In cdc_ncm_rx_verify_ndp32(), the code validated the size of the DPE array against the total skb length, but it did so without adding the starting offset of the NDP32 itself. That means the code could believe there was enough room for the descriptor array when, in reality, the NDP32 had been placed so late in the NTB that the tail end of the structure extended past the end of the buffer. The result is not necessarily dramatic corruption, but it is enough to let the kernel read memory it should not touch, and that is enough to justify a security fix.

The wording of the fix is revealing. The patch adds ndpoffset to the nframes bounds check and uses struct_size_t() to express the combined NDP-plus-DPE size more clearly. That is not just a style change; it is a sign that the maintainers wanted the code to reflect the actual layout of the on-wire or in-memory object rather than an abstract assumption about where that object begins. Kernel vulnerabilities often appear where code reasons about structure size without also reasoning about structure location, and this CVE is a textbook example of that pattern.

The record also shows that the issue is considered relevant across multiple Linux kernel branches and long-lived stable lines. That is a strong signal that this is not an isolated edge-case bug in a dead branch, but a defect that maintainers believe may exist in real deployed kernels. Because Linux networking and USB gadget code show up in embedded devices, mobile infrastructure, and specialized appliances as well as general-purpose systems, the practical exposure can be wider than the modest size of the code change suggests.

Perhaps most importantly, this is the kind of bug that is easy to dismiss if you only look at the headline. An out-of-bounds read sounds less alarming than a write, and the upstream announcement notes that the patch was compile-tested only, not runtime-tested. But in kernel space, reads still matter. They can leak memory contents, trigger crashes, and expose internal state that other bugs can combine with later. The fact that the flaw sat in a verification function also means the kernel was very close to the trust boundary when it made the mistake.

What the Bug Changes

At a technical level, the fix tightens validation in the NDP32 path so that the kernel no longer assumes the descriptor array starts at the beginning of the buffer. That distinction is critical because NTBs can place data structures at different offsets, and the logic has to account for both size and placement. By adding ndpoffset to the bounds check, the patched code makes sure the structure’s effective footprint fits inside the actual skb before the kernel walks the DPE entries.

The use of struct_size_t() is equally important. Kernel code is easier to reason about when it calculates the size of a structure-plus-array combination in one expression rather than assembling the logic through multiple implicit assumptions. That makes it harder for a future change to introduce a mismatch between the base structure and its trailing entries. In other words, the patch is not just closing a hole; it is trying to reduce the chances of reopening that hole later.
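
The idea of a single structure-plus-array size expression can be sketched in userspace C. This is an illustrative analog, not the kernel macro: `flex_size` and `footprint_fits` are hypothetical names, and the saturate-on-overflow behavior is modeled on how the kernel's size helpers are documented to behave.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/*
 * Hypothetical userspace analog of a struct_size-style helper:
 * header bytes plus count trailing elements, saturating at SIZE_MAX
 * if the arithmetic would overflow.
 */
static size_t flex_size(size_t hdr, size_t elem, size_t count)
{
    if (elem != 0 && count > (SIZE_MAX - hdr) / elem)
        return SIZE_MAX;
    return hdr + count * elem;
}

/*
 * True only if an object of `size` bytes starting at `off` lies fully
 * inside a buffer of `len` bytes. Written with a subtraction so that
 * off + size can never wrap around.
 */
static bool footprint_fits(size_t len, size_t off, size_t size)
{
    return size <= len && off <= len - size;
}
```

With a 16-byte header and 8-byte entries, two entries give a 32-byte footprint. In a 64-byte buffer that fits at offset 32 but not at offset 40, and a saturated size never fits, so an overflowing count is rejected rather than silently wrapped.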

Why the offset matters

The bug exists because the verifier looked at the array size in isolation. If the NDP32 starts late in the NTB, the array may technically be “large enough” by total length but still extend beyond the buffer after accounting for the starting point. That is why the fix adds ndpoffset to the check rather than merely tightening the array size limit.

- The flaw is in a bounds check, not in protocol parsing itself.

- The effective object size depends on where the structure begins.

- The bug can be triggered when the NDP32 sits near the end of the NTB.

- The correction aligns the code with the actual memory layout.

- The risk is out-of-bounds read, not necessarily immediate corruption.
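
The points above can be made concrete with a simplified model of the two checks. This is a sketch, not the driver source: the struct and function names are hypothetical, the field layout follows the 32-bit NCM format (16-byte NDP header, 8-byte datagram pointer entries), and small input values are assumed so integer overflow is not a concern here.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Model of one 32-bit datagram pointer entry (names are illustrative). */
struct dpe32_model {
    uint32_t index;
    uint32_t length;
};

/* Model of the NDP32 header followed by its trailing entry array. */
struct ndp32_model {
    uint32_t signature;
    uint16_t length;
    uint16_t reserved6;
    uint32_t next_ndp_index;
    uint32_t reserved12;
    struct dpe32_model dpe[];
};

static_assert(sizeof(struct dpe32_model) == 8, "DPE32 entries are 8 bytes");
static_assert(sizeof(struct ndp32_model) == 16, "NDP32 header is 16 bytes");

/* Pre-fix shape of the check: ignores where the NDP32 starts. */
static bool old_check_passes(size_t skb_len, size_t nframes)
{
    return sizeof(struct ndp32_model) +
           nframes * sizeof(struct dpe32_model) <= skb_len;
}

/* Post-fix shape of the check: the offset is part of the footprint. */
static bool new_check_passes(size_t skb_len, size_t ndpoffset, size_t nframes)
{
    return ndpoffset + sizeof(struct ndp32_model) +
           nframes * sizeof(struct dpe32_model) <= skb_len;
}

/* Bytes past the end of the buffer that an entry walk could touch. */
static size_t overread_bytes(size_t skb_len, size_t ndpoffset, size_t nframes)
{
    size_t end = ndpoffset + sizeof(struct ndp32_model) +
                 nframes * sizeof(struct dpe32_model);
    return end > skb_len ? end - skb_len : 0;
}
```

Take a 128-byte buffer with the NDP32 at offset 96 and four entries. The footprint is 16 + 4 × 8 = 48 bytes, which is less than 128, so the offset-blind check passes. The structure actually ends at byte 144, so 16 bytes of the entry array lie outside the buffer, and only the offset-aware check rejects it.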

Why NDP32 is not just “NDP16 with bigger numbers”

The fact that this bug mirrors an earlier NDP16 issue is a clue that the driver has two closely related verification paths that do not share perfectly identical assumptions. The previous patch fixed the same class of mistake for NDP16, and now the NDP32 path has been corrected as well. That pattern is common in mature subsystems: one half of a design gets tightened, and the sibling path turns out to have a slightly different but equally risky version of the same bug.

- NDP16 and NDP32 are not interchangeable implementations.

- Parallel code paths can accumulate parallel validation bugs.

- Fixing one branch does not guarantee the sibling branch is safe.

- Security maintenance often arrives as a sequence of near-duplicate patches.

- The real lesson is to audit families of helpers, not just individual functions.
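
One way to audit a family of helpers is to express the shared rule once and parameterize it by layout. The sketch below is an assumption-laden illustration, not how the driver is structured: `ndp_layout`, `NDP16_LAYOUT`, `NDP32_LAYOUT`, and `ndp_fits` are hypothetical names, and the sizes come from the NCM formats (8-byte header with 4-byte entries for the 16-bit variant, 16-byte header with 8-byte entries for the 32-bit variant).

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Header and per-entry sizes for one NDP variant (illustrative model). */
struct ndp_layout {
    size_t hdr;
    size_t entry;
};

static const struct ndp_layout NDP16_LAYOUT = { 8, 4 };
static const struct ndp_layout NDP32_LAYOUT = { 16, 8 };

/*
 * True if a header plus nframes entries, starting at ndpoffset, lies
 * inside skb_len bytes. Uses subtraction and division instead of
 * addition and multiplication so no intermediate value can overflow.
 */
static bool ndp_fits(const struct ndp_layout *l, size_t skb_len,
                     size_t ndpoffset, size_t nframes)
{
    assert(l->entry != 0);
    if (ndpoffset > skb_len || l->hdr > skb_len - ndpoffset)
        return false;
    return nframes <= (skb_len - ndpoffset - l->hdr) / l->entry;
}
```

For the same 128-byte buffer, offset 96, and four entries, the 16-bit layout fits (8 + 16 = 24 bytes, ending at 120) while the 32-bit layout does not (16 + 32 = 48 bytes, ending at 144). The two variants share a rule but not a boundary, which is why each path needs its own verification.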

How the Vulnerability Could Be Reached

This CVE is not about a remote attacker casually sending malformed HTTP requests to a server. It lives in the Linux USB networking stack, which means the attack surface depends on whether the affected host processes USB CDC NCM traffic. That makes the issue more contextual than some higher-profile kernel bugs, but it does not make it trivial to ignore. In the right environment, especially embedded systems, tethered devices, or systems handling USB networking adapters, the vulnerable path may be reachable through crafted input.

The Microsoft entry describes the impact in standard kernel terms: a local attacker with low privileges could exploit the flaw to achieve high confidentiality, integrity, and availability impact under the CVSS 3.1 vector provided. That should be read carefully. “Local” in kernel vulnerability scoring often means the attacker must already be able to present the malicious input to the host through a local interface or device path, not necessarily that they must log into the machine in the traditional sense.

The relevant trust boundary

USB networking drivers sit on a trust boundary between the kernel and a peripheral or gadget presenting structured data. If that structured data is malformed in just the wrong way, the driver may walk past the end of the buffer while trying to verify it. The vulnerability described here is exactly that sort of bug: the code validates a nested structure as though its base offset were irrelevant.

That creates a few realistic exposure scenarios:

- A system using USB CDC NCM for tethering or network transport.

- An embedded device that exposes or consumes NTBs from a controlled peripheral.

- A lab or enterprise environment where malicious or compromised USB devices are present.

- A host using vendor kernels that backported the driver but not the full verification fix.

Why this still matters if the bug is “only” an out-of-bounds read

Out-of-bounds reads are sometimes treated as lesser bugs because they do not directly overwrite memory. That is a dangerous shortcut. A read outside bounds can still crash a kernel, disclose adjacent memory, or create a reliable primitive for chaining with other weaknesses. In kernel space, a leak of nearby state can be just as strategically valuable as a write if it helps an attacker bypass mitigations or map internal layout.

That is especially true when the code is inspecting a structure near the end of a buffer. The tail of a packet often contains the most interesting metadata and the least slack space, which means the exact read location can produce inconsistent but meaningful results. Even when the immediate symptom is a warning or a malformed parse, the security consequences can be real.
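
A defensive pattern for reads near the tail of a buffer is to make every access prove it is in range before touching memory. The helper below is a hypothetical illustration of that pattern in plain C; `read_le32_at` is not a kernel function and the driver does not necessarily structure its reads this way.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/*
 * Read a little-endian 32-bit value at `off`, but only if all four
 * bytes lie inside buf[0..len). Returns false and leaves *out
 * untouched when the read would cross the end of the buffer.
 */
static bool read_le32_at(const uint8_t *buf, size_t len, size_t off,
                         uint32_t *out)
{
    if (len < 4 || off > len - 4)
        return false;
    *out = (uint32_t)buf[off] |
           (uint32_t)buf[off + 1] << 8 |
           (uint32_t)buf[off + 2] << 16 |
           (uint32_t)buf[off + 3] << 24;
    return true;
}
```

The design point is that a field one byte too close to the end fails cleanly instead of returning whatever happens to sit in adjacent memory.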

Why This Fix Is a Good Kernel Hardening Example

The best thing about this patch is that it corrects the logic without widening the surface area of the code. The change is surgical. It does not redesign the driver, rewrite the protocol parser, or add a broad new abstraction. Instead, it makes the existing validation actually match the object layout the kernel is inspecting. That is the kind of change maintainers prefer in stable kernels because it is easier to reason about and less likely to introduce collateral regressions.

It also reflects a broader kernel development trend: explicit arithmetic is better than implied arithmetic. When the code uses struct_size_t(), it is spelling out the relationship between the base structure and the array that follows it. That reduces the chance that a future maintainer will accidentally break the check by changing one piece and forgetting the other.

Why compile-tested-only still matters

The patch note says the change is compile-tested only. That does not mean the fix is weak; it means the maintainers were transparent about the verification stage at the time of publication. In kernel development, especially for stable backports, compile-tested changes are common when the code path is well understood and the risk of changing the logic is low. The real confidence comes from the narrowness of the patch and the fact that it aligns with a previously fixed sibling bug.

A few reasons this is still a strong fix:

- It follows the same logic as the earlier NDP16 correction.

- It corrects an obvious mismatch between offset and length accounting.

- It uses a clearer helper for structure sizing.

- It does not alter packet semantics beyond the bounds check.

- It is the kind of patch that tends to backport cleanly.

Why clarity in size checks is a security feature

Kernel bugs often survive because the code is almost right. A helper checks the array length, another routine checks the total packet length, and the relationship between the two is assumed rather than encoded. That works until an attacker or malformed device puts the object in an unusual place. Then the “almost” becomes a vulnerability.

Using a combined size expression reduces ambiguity. It also helps reviewers spot whether a later change has drifted from the actual layout. In a subsystem like cdc_ncm, where the transport format has multiple nested descriptors, clarity is a defense mechanism in itself.

Enterprise and Consumer Impact

For most consumer desktops, this CVE is probably low in practical day-to-day relevance unless they use affected USB networking hardware or a distribution kernel that has the vulnerable code path active. Many users will never encounter cdc_ncm at all, and those who do may only use it indirectly through USB tethering or specialized adapters. That said, low-frequency code paths are not the same as safe code paths, and modern consumer systems still interact with USB devices far more often than administrators sometimes assume.

For enterprises, the situation is more nuanced. USB networking may be irrelevant on hardened servers, but it can absolutely matter on laptops, field systems, kiosks, test rigs, and embedded Linux appliances. These are exactly the kinds of environments where a bad peripheral, an abused development device, or a maliciously crafted gadget can create exposure. The patch therefore belongs in a normal kernel maintenance cycle, not in a “we’ll get to it eventually” bucket.

Where the risk is highest

The likely highest-risk environments are the ones where USB networking is part of the routine operating model rather than an edge case.

- Field laptops that tether over USB.

- Embedded Linux devices with CDC NCM support enabled.

- Development systems used with custom USB gadgets.

- Industrial or kiosk systems that accept removable peripherals.

- Vendor kernels that lag upstream stable fixes.

Why mixed estates complicate the picture

One of the more subtle challenges here is that vulnerability management tools often emphasize package versions, while this bug is really about code paths and device behavior. A machine can report a kernel version that looks fixed while vendor-specific changes alter its effective patch state. Conversely, a machine may appear old but already include the necessary backport from a distribution kernel.

That means the real questions are:

- Does the kernel include the cdc_ncm fix?

- Is USB CDC NCM actually used in the environment?

- Was the correction backported by the vendor?

- Are there attached or trusted devices that can reach the path?

- Is the kernel build one of the affected stable branches listed in the advisory data?
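
The second question, whether the driver is actually in use, can be partly answered on a running system by looking for the module in /proc/modules. The sketch below is a minimal triage aid under stated assumptions: `module_listed` and `cdc_ncm_loaded` are hypothetical helper names, the check only sees loadable modules (a driver built into the kernel image does not appear in /proc/modules), and a loaded module says nothing about whether the fix has been applied.

```c
#include <stdbool.h>
#include <stdio.h>
#include <string.h>

/*
 * True if a /proc/modules-style listing contains a line whose first
 * whitespace-delimited field is exactly `name`.
 */
static bool module_listed(const char *listing, const char *name)
{
    size_t n = strlen(name);
    const char *line = listing;

    while (line && *line) {
        if (strncmp(line, name, n) == 0 &&
            (line[n] == ' ' || line[n] == '\n' || line[n] == '\0'))
            return true;
        line = strchr(line, '\n');
        if (line)
            line++;
    }
    return false;
}

/* Returns 1 if cdc_ncm is loaded, 0 if not, -1 if /proc/modules is unreadable. */
static int cdc_ncm_loaded(void)
{
    FILE *f = fopen("/proc/modules", "r");
    char buf[512];
    int at_line_start = 1;
    int found = 0;

    if (!f)
        return -1;
    while (fgets(buf, sizeof buf, f)) {
        /* Only match at the start of a real line, not a continuation chunk. */
        if (at_line_start && module_listed(buf, "cdc_ncm")) {
            found = 1;
            break;
        }
        at_line_start = strchr(buf, '\n') != NULL;
    }
    fclose(f);
    return found;
}
```

A result of 0 narrows the question but does not close it: the module may load on demand when a matching device is plugged in, so the kernel configuration and the vendor's patch notes still need to be checked.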

Why the CVSS Score Looks Serious

The CVSS 3.1 score of 7.8 High indicates that the vulnerability is considered material even though it is local and depends on device-triggered code paths. The vector in the advisory data is AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:H, which is an unusually heavy combination for a bug that lives in a protocol verifier. The reason is simple: kernel bugs often sit close enough to privileged code and sensitive memory that even a narrow flaw can have broad consequences if it is reachable.

That score should not be interpreted as a claim that exploitation is trivial. It means the potential impact is severe if the vulnerable path can be exercised. In kernel security, the gap between “hard to reach” and “easy to reach” often depends on deployment specifics, which is why some organizations may see this as a medium-priority patch while others should treat it as urgent.
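
The 7.8 figure follows mechanically from the vector. The sketch below applies the CVSS 3.1 base-score equations for an unchanged scope; the function names are hypothetical, the numeric weights are the ones the CVSS 3.1 specification assigns to each metric value, and scores are returned in tenths to avoid floating-point comparisons.

```c
/*
 * CVSS 3.1 Roundup: the smallest value with one decimal place that is
 * greater than or equal to x, returned in tenths (78 means 7.8).
 */
static int roundup_tenths(double x)
{
    long v = (long)(x * 100000.0 + 0.5);

    return (v % 10000 == 0) ? (int)(v / 10000) : (int)(v / 10000) + 1;
}

/* Base score in tenths for Scope: Unchanged, given the metric weights. */
static int cvss31_base_unchanged_tenths(double av, double ac, double pr,
                                        double ui, double c, double i,
                                        double a)
{
    double iss = 1.0 - (1.0 - c) * (1.0 - i) * (1.0 - a);
    double impact = 6.42 * iss;
    double exploitability = 8.22 * av * ac * pr * ui;
    double sum;

    if (impact <= 0.0)
        return 0;
    sum = impact + exploitability;
    if (sum > 10.0)
        sum = 10.0;
    return roundup_tenths(sum);
}
```

For AV:L (0.55), AC:L (0.77), PR:L (0.62), UI:N (0.85), and C/I/A:H (0.56 each), the impact term is about 5.87 and the exploitability term about 1.83. Their sum of roughly 7.71 rounds up to 7.8. Most of the score comes from the impact side, which is consistent with reading it as "severe if reachable" rather than "easy to reach".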

How to read the severity correctly

A high score here says the bug is not merely cosmetic. It deserves action because it can affect confidentiality, integrity, and availability. But it does not automatically mean every Linux machine in the world is at immediate risk. The severity must be paired with environmental exposure.

- High severity does not always mean universal exploitability.

- Kernel bugs can be severe but still situational.

- The practical risk is highest where the affected subsystem is active.

- Vendor backports can reduce or eliminate exposure before version numbers change.

- Administrators should treat this as a patch validation item, not just a threat bulletin.

Why Microsoft’s listing matters for defenders

Because the vulnerability appears in Microsoft’s Security Update Guide, it becomes visible in a workflow many enterprise teams already use for cross-platform monitoring. That does not mean Microsoft products are directly affected; it means defenders can track Linux kernel issues in the same operational pipeline as other enterprise vulnerabilities. For mixed Windows-Linux environments, that is a real practical convenience, not a minor detail.

The appearance of the issue there also suggests the vulnerability has crossed the threshold from upstream kernel maintenance into enterprise-facing security tracking. That usually means organizations should expect vendor advisories, backports, and patch windows to follow.

Strengths and Opportunities

This patch is a good example of how a small kernel fix can deliver outsized value. It corrects a validation flaw without changing the overall architecture of the driver, and it improves the clarity of the code at the same time. That combination makes it a low-friction backport candidate and a useful reminder that security often comes from precision rather than redesign.

- The fix is narrow and surgical.

- It aligns the bounds check with the actual structure offset.

- It uses struct_size_t() for clearer expression of object size.

- It mirrors an earlier correction in the sibling NDP16 path.

- It should be relatively easy for vendors to backport.

- It reduces the chance of future review mistakes in the same code path.

- It strengthens a trust boundary in a USB networking parser.

Risks and Concerns

The main concern is that this bug could be underestimated because it is “only” a read and because the affected subsystem feels niche to many users. That kind of thinking is how kernel bugs linger in production longer than they should. The other concern is that exposure can hide behind device usage patterns, meaning inventory tools may not tell you whether the vulnerable path is actually live.

- The bug may be dismissed as a low-visibility USB issue.

- A read past bounds can still enable information disclosure.

- Exposure depends on actual device behavior, not just package version.

- Vendor backports can make version checks misleading.

- Long-lived embedded systems may carry the affected code longer.

- Misconfigured or malicious peripherals can make the path reachable.

- Similar logic bugs may exist in nearby descriptor validation code.

What to Watch Next

The first thing to watch is whether downstream distributions and vendor kernels quickly absorb the fix across the affected branches listed in the advisory data. Because the bug has already been tied to stable reference commits, the patch should be straightforward for maintainers to propagate. The practical question is not whether the fix exists upstream, but how quickly it becomes available in the kernels that real systems actually run.

The second thing to watch is whether adjacent cdc_ncm validation paths are audited for similar offset mistakes. Once one member of a code family has a bounds-check omission, the neighboring logic is rarely far behind. That is especially true in code that has evolved through incremental patches rather than a complete rewrite.

The third thing to watch is whether enterprise teams classify this based on exposure rather than headline severity. A host with no USB networking use may be low priority, while a fleet of tethered devices or embedded endpoints may need rapid action. The right response depends on how close the vulnerable path is to real input.

- Track vendor advisories for backported fixes.

- Confirm whether cdc_ncm is enabled in your kernel builds.

- Identify systems that actually use USB networking.

- Watch for follow-up fixes in related descriptor verification helpers.

- Validate whether your patch management process distinguishes upstream version from vendor patch state.

CVE-2026-23447 is not the kind of vulnerability that makes headlines outside the kernel community, but it is exactly the kind that rewards disciplined patching. The fix is small, the reasoning is solid, and the implications are larger than the surface area suggests. In modern kernel maintenance, small correctness bugs are often the earliest warning that a subsystem has drifted just far enough from its assumptions to become dangerous, and this one deserved the prompt attention it received.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center