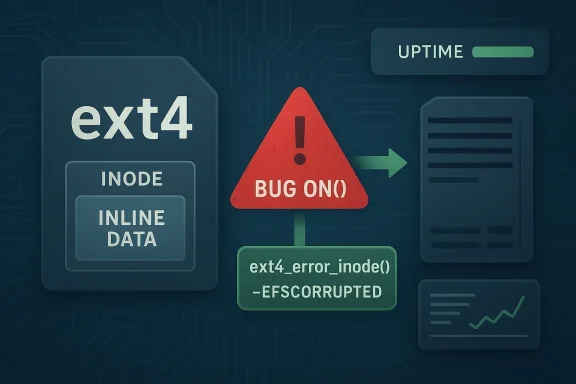

The newly published CVE-2026-31451 is a classic example of why kernel bug fixes matter even when the flaw is framed as a “proper error handling” change rather than a dramatic exploit primitive. In the Linux ext4 filesystem, an unchecked inline-data condition could trigger a BUG_ON in ext4_read_inline_folio, turning a filesystem corruption event into a full kernel panic. The upstream fix replaces that hard stop with structured recovery: log the corruption, release the buffer head, and return -EFSCORRUPTED so the system can keep running. NVD has already associated the issue with CWE-125 and, at the time of publication, had not yet assigned a finalized CVSS score on the CVE detail page.

Overview

The important detail here is not merely that ext4 had a bug. It is that the bug lived in a code path intended to read inline data, a feature that stores very small file payloads directly inside the inode rather than in external data blocks. That optimization is attractive because it can reduce metadata overhead and improve small-file efficiency, but it also means the filesystem must be exact about size limits and boundaries. A mistaken assumption in that area can be the difference between a clean error return and a kernel-wide crash.

The record published by NVD shows that the problem was reported upstream by kernel.org and linked to stable kernel commits, which is the normal route for a Linux kernel fix moving from mainline into supported branches. The NVD entry also shows that the vulnerable configuration range includes Linux kernel versions from 6.14 up to but not including 6.18.6, along with the release candidates listed on the page. That immediately tells administrators this is not a theoretical issue trapped in a lab branch; it affects live, modern kernel lines.
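As a rough first-pass triage aid, the version range quoted from NVD can be turned into a small check. This is a minimal sketch, not an authoritative test: the function names are made up for illustration, release candidates are treated as their base version, and distribution kernels often backport fixes without changing the upstream version number, so a vendor advisory should always take precedence over this comparison.

```python
import platform
import re

# Range as quoted from the NVD configuration: 6.14 <= version < 6.18.6.
FIRST_AFFECTED = (6, 14, 0)
FIRST_FIXED = (6, 18, 6)


def parse_kernel_release(release: str) -> tuple[int, int, int]:
    """Extract (major, minor, patch) from a string such as '6.17.3-generic'."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", release)
    if match is None:
        raise ValueError(f"unrecognised kernel release: {release!r}")
    major, minor, patch = match.groups()
    # A missing patch level (for example '6.14') is treated as .0.
    return int(major), int(minor), int(patch or 0)


def in_nvd_range(release: str) -> bool:
    """Return True if the upstream version number falls inside the quoted range."""
    return FIRST_AFFECTED <= parse_kernel_release(release) < FIRST_FIXED


if __name__ == "__main__":
    release = platform.release()  # same string as `uname -r` on Linux
    try:
        verdict = "inside" if in_nvd_range(release) else "outside"
        print(f"{release}: {verdict} the NVD version range")
    except ValueError as exc:
        print(f"could not evaluate this host: {exc}")
```

A result of "inside" only means the host deserves a closer look against the distribution's own advisory; "outside" does not prove a vendor kernel is unaffected.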

There is also a broader pattern worth noting. Kernel developers have been systematically replacing BUG_ON usage with recoverable error handling in filesystems and storage paths for years, because a kernel panic is often the least graceful outcome possible when the underlying condition is corrupted data rather than a code execution issue. The ext4 patch follows that philosophy: treat impossible-on-healthy-data states as corruption, not as a reason to take the whole machine down. The practical outcome is better availability and narrower blast radius.

For WindowsForum readers, the story is relevant even though the vulnerable component is Linux. Linux filesystems are everywhere: on cloud instances, appliance stacks, containers, NAS devices, and hybrid deployments where a crash can take down storage services or orchestration nodes. Microsoft’s own security and Linux documentation ecosystem continues to emphasize Linux supportability, CVE tracking, and filesystem choices in production deployments, which underlines how mainstream ext4 remains across enterprise workloads.

What CVE-2026-31451 Actually Changes

At its core, this CVE is about a panic-to-error conversion. The old behavior used BUG_ON when inline data exceeded PAGE_SIZE, which is effectively an assertion that says, “this should never happen, so stop everything if it does.” The fix removes that assumption and replaces it with proper failure handling inside ext4_read_inline_folio. That is a major reliability improvement because the filesystem can now report corruption without collapsing the kernel.

The technical flow

The patch description says the error is logged through ext4_error_inode, the buffer head is released to avoid a memory leak, and -EFSCORRUPTED is returned. Each of those steps matters. Logging tells operators what went wrong, freeing the buffer head keeps the system from leaking resources, and returning an explicit corruption code allows upper layers to react appropriately.

That sequence also improves diagnosability. A panic usually leaves you with a crash trace and a reboot cycle, but not necessarily a clean indication of which inode or file triggered the condition. A logged filesystem error is far more useful for root cause analysis, especially on systems where the actual corruption may be caused by storage failure, abrupt power loss, or an application bug rather than a kernel defect.

The broader significance is that ext4 is not “safer” because the corruption disappeared. It is safer because the kernel now responds proportionately. That is good engineering hygiene, and it aligns with how modern Linux storage code increasingly handles bad on-disk state.
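The log, release, return sequence can be modeled in a few lines of userspace code. The sketch below is not the kernel patch and does not reproduce its source; `BufferHead`, `read_inline`, the log list, and the 4096-byte page size are all stand-ins invented for illustration. The only detail borrowed from Linux is that EFSCORRUPTED is defined as EUCLEAN (errno 117).

```python
import errno

PAGE_SIZE = 4096  # assumed page size for this illustration
# Linux defines EFSCORRUPTED as EUCLEAN; fall back to 117 where errno lacks it.
EFSCORRUPTED = getattr(errno, "EUCLEAN", 117)


class BufferHead:
    """Hypothetical stand-in for a held resource that every exit path must release."""

    def __init__(self) -> None:
        self.released = False

    def release(self) -> None:
        self.released = True


def read_inline(inode_no: int, inline_size: int, bh: BufferHead, log: list[str]) -> int:
    """Model of the recoverable path: log, release, return a negative errno."""
    if inline_size > PAGE_SIZE:
        # 1. Record which inode is damaged so operators can find it later.
        log.append(f"inode #{inode_no}: inline size {inline_size} exceeds {PAGE_SIZE}")
        # 2. Release the held resource so the error path does not leak it.
        bh.release()
        # 3. Report corruption to the caller instead of halting everything.
        return -EFSCORRUPTED
    # Normal path: the data would be copied here, then the resource released.
    bh.release()
    return 0


if __name__ == "__main__":
    messages: list[str] = []
    print(read_inline(131, 5000, BufferHead(), messages), messages)
```

The point of the model is the ordering: the resource is released on both paths, and the caller receives a specific error code rather than losing the whole process.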

Why inline data is sensitive

Inline data is a space-saving optimization, but it also changes the shape of failure. Rather than reading from a block map, the filesystem reads content from metadata structures embedded in the inode path. That makes boundary validation especially important, because the metadata itself is trusted to describe where data lives and how large it is.

If inline content grows beyond what fits in a page, the old code path apparently treated that condition as a fatal invariant violation. The new code recognizes that even if the filesystem should not contain such a state, the safer course is to quarantine the damage, not crash the machine.

- Before the fix: a corruption condition could trigger a kernel panic.

- After the fix: ext4 reports corruption and returns an error.

- Operational result: higher uptime and less collateral damage.

- Security result: the bug becomes less useful as a denial-of-service lever.

Why a Kernel Panic Matters to Administrators

A lot of vulnerability writeups focus on confidentiality or privilege escalation, but stability bugs can be just as painful in practice. A filesystem-triggered kernel panic can turn a localized corrupt file into a host outage, and on a busy server that outage can cascade into application failure, service retries, and data consistency issues. Even without remote code execution, that is a serious operational risk.

For enterprise teams, the fact that this is an ext4 read path matters because read paths are exercised constantly. You do not need a rare administrative operation to hit the affected logic; any workload that touches the damaged inode can potentially surface the issue. That makes the flaw especially nasty in environments where storage corruption may remain latent until a routine read finally trips it.

The NVD page currently classifies the issue with CWE-125, which is a somewhat broad bucket for out-of-bounds read conditions. In practical terms, though, the important part is not the taxonomy label but the behavior: a malformed or corrupted inline-data state should not be allowed to transform into a machine-wide fail-stop event.

Availability is the real exposure

The published NVD vector for comparable Linux kernel filesystem flaws often leans toward local impact because an attacker generally needs filesystem access or the ability to induce a vulnerable state. But the availability impact is still significant, particularly on systems that host shared volumes, CI runners, embedded appliances, or cloud nodes with noisy neighbors.

This is the kind of issue that is easy to underestimate until it strikes a production environment. The machine may not be “hacked” in the classic sense, yet it still becomes unavailable at exactly the wrong time. That is why availability bugs belong in serious vulnerability management programs.

- Host reboots can interrupt clustered services.

- Panic recovery may trigger journal replay and consistency checks.

- Repeated crashes can amplify storage wear and operational churn.

- Failover systems may mask the issue until multiple nodes hit the same corruption.

Consumers vs. enterprise

For consumer systems, the likely impact is a crash, data loss, or a frustrating boot loop if a damaged filesystem is accessed during startup. For enterprises, the stakes are broader: one crash can take down a database node, a container host, or a storage appliance that multiple teams depend on. That difference explains why even “just a BUG_ON fix” deserves attention.

ext4 Inline Data and the History Behind the Fix

ext4 has long supported a range of performance and space-efficiency features, and inline data is one of the more specialized ones. The Linux kernel documentation explains ext4 as a mature filesystem with advanced data structures and algorithms, and it remains a widely used default or near-default choice in many distributions and deployments. The security significance of that ubiquity is simple: if ext4 has a bug, a huge installed base can be exposed.

Historically, filesystems have often used assertions in places where developers believed the on-disk state should be impossible if all prior code worked correctly. That works well until corruption, unexpected hardware behavior, or edge-case tooling produces an input the developers did not anticipate. Then the assertion becomes the problem. In kernel land, that frequently translates into BUG_ON being replaced with explicit validation and error propagation.

Why this pattern keeps repeating

The recurring theme across ext4 fixes is that robust software must handle bad data gracefully. NVD’s Linux-kernel CVE pages show multiple ext4 issues in recent years where bug fixes focused on preventing kernel crashes or infinite loops caused by malformed filesystem conditions. That is not a sign that ext4 is uniquely fragile; it is a sign that storage code is a constant negotiation between performance assumptions and safety guarantees.

The shift away from fatal assertions is especially important as Linux storage increasingly serves mixed workloads: local disks, virtualized volumes, container storage, and network-backed filesystem layers. A bug that once might have been rare now sits in a much denser ecosystem of I/O paths and failure modes.

The role of corruption handling

The fixed behavior uses -EFSCORRUPTED, which is more than just a return code. It tells calling code and higher-level tools that the filesystem state is invalid, not merely that a transient read failed. That distinction matters because recovery decisions differ: a corruption signal may prompt fsck, a read-only remount, or a controlled restart rather than a blind retry.

- Transient I/O failures suggest a storage or transport problem.

- Corruption errors suggest metadata integrity has already been compromised.

- Assertion failures suggest the kernel gave up entirely.
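From userspace, the first two cases in the list above arrive as different errno values on a failed read, which is what lets tooling choose a different response. The sketch below assumes the Linux convention that EFSCORRUPTED is an alias for EUCLEAN (errno 117, reported as "Structure needs cleaning"); the function name and the response strings are purely illustrative policy, not a recommendation from the patch.

```python
import errno

# Linux defines EFSCORRUPTED as EUCLEAN; fall back to 117 where errno lacks it.
EFSCORRUPTED = getattr(errno, "EUCLEAN", 117)


def suggested_response(err: OSError) -> str:
    """Map a failed read's errno to an illustrative operational response."""
    if err.errno == EFSCORRUPTED:
        # Metadata is already damaged: retrying the read will not help.
        return "corruption: stop retrying, flag the filesystem for fsck"
    if err.errno == errno.EIO:
        # Likely a device or transport problem: a bounded retry is reasonable.
        return "io-error: check storage path, retry with a limit"
    return "other: handle according to application policy"


if __name__ == "__main__":
    for code in (EFSCORRUPTED, errno.EIO, errno.ENOENT):
        print(code, "->", suggested_response(OSError(code, "simulated failure")))
```

An assertion failure has no entry here because a panicked kernel returns nothing at all, which is exactly the case the fix removes.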

Patch Behavior and Code-Path Consequences

The change in ext4_read_inline_folio is small in lines of code but large in consequence. Kernel fixes often look trivial from the outside because they replace a single macro or branch, yet that one line can sit at the boundary between graceful degradation and system-wide outage. The buffer-head release is particularly important because kernel memory leaks in error paths can become a second problem on top of the first.

Why buffer-head cleanup matters

Error handling in low-level filesystem code has to be exact. If a path emits an error but forgets to free an allocated or referenced structure, the fix can solve the visible failure while quietly introducing a resource leak. The record’s mention that the buffer head is released indicates the patch author explicitly cleaned up that edge case rather than just swapping out the panic.

That is a good sign of defensive engineering. It means the fix is not cosmetic; it addresses both the control-flow hazard and the resource-management hazard. In kernel code, those two problems often travel together.

How callers are expected to react

Returning -EFSCORRUPTED gives the rest of the stack a chance to decide what to do next. Depending on the caller, that may surface as a file read error, a journaled filesystem warning, or an administrative alert. The important thing is that the failure is now contained and observable.

A few practical outcomes follow from that design:

- The system stays alive.

- The filesystem can record the corruption.

- Monitoring tools have a better chance of catching the issue.

- Operators can schedule remediation instead of chasing crash loops.
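Catching the issue in monitoring mostly comes down to matching ext4 error lines in the kernel log. The sketch below is one way that might look. The regular expression reflects an assumption about the general prefix ext4 uses for inode errors (device, reporting function and line, inode number), and the sample log lines, including the message text and line number, are invented for illustration; the wording actually emitted by the fixed kernel should be checked on a real system before relying on a pattern like this.

```python
import re

# Assumed general shape of an ext4 inode error line in the kernel log.
EXT4_ERROR = re.compile(
    r"EXT4-fs error \(device (?P<device>[^)]+)\): "
    r"(?P<function>\w+):(?P<line>\d+): inode #(?P<inode>\d+):"
)

# Invented sample lines for illustration; real message text will differ.
SAMPLE_LOG = """\
[ 1042.118] EXT4-fs (sdb1): mounted filesystem with ordered data mode
[ 1310.554] EXT4-fs error (device sdb1): ext4_read_inline_folio:512: inode #131: comm cat: hypothetical corruption message
[ 1311.002] usb 1-1: new high-speed USB device number 4
"""


def find_ext4_errors(log_text: str) -> list[dict]:
    """Return one record per ext4 inode error line found in the log text."""
    events = []
    for line in log_text.splitlines():
        match = EXT4_ERROR.search(line)
        if match:
            events.append(
                {
                    "device": match["device"],
                    "function": match["function"],
                    "inode": int(match["inode"]),
                }
            )
    return events


if __name__ == "__main__":
    for event in find_ext4_errors(SAMPLE_LOG):
        print(event)
```

Feeding the device and inode number into an alert gives operators something a panic trace rarely does: a specific object to inspect or restore.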

Why this is a better failure mode

A kernel panic may seem “safe” in the sense that it stops potentially bad behavior, but it is operationally expensive and often unnecessary when the underlying problem is on-disk corruption. An explicit error is more surgical. It preserves the rest of the machine and keeps the incident scope closer to the actual damage.

How Serious Is CVE-2026-31451?

The NVD page did not yet provide a finalized score at the time of the record’s publication, so any severity judgment has to be made from the behavior itself rather than a complete scoring matrix. Even so, it is reasonable to treat the issue as significant because it can take down a system through a storage read path. That makes it a high-value reliability fix, even if the exploitability is bounded by local filesystem conditions.

The published NVD record shows the initial analysis linked the issue to modern Linux kernel versions and flagged the vulnerability class as a filesystem memory-safety problem. That suggests NVD considered the issue real enough to track promptly, but not necessarily one that has a mature public exploitation narrative. In other words, the risk is operational certainty rather than headline-grabbing attacker sophistication.

Likely threat model

The most plausible threat model is someone who can cause or leverage malformed filesystem state, intentionally or accidentally. That could include a malicious local user with access to a writable mount, a compromised container with access to a vulnerable volume, or simply corrupted storage causing a bad state that later gets read.

The impact is strongest where uptime matters more than secrecy. On desktops, the visible effect may be a crash and reboot. On servers, it may mean availability loss, alert storms, or cascading service failures.

Comparison with higher-profile kernel bugs

Compared with remote code execution flaws, this is a narrower issue. Compared with a plain data corruption bug, it is worse because it can escalate into a panic. That middle ground is important: the vulnerability is not necessarily “internet worm” material, but it absolutely belongs in patch queues for anyone running affected kernels.

- Not likely to be remote by default.

- Still dangerous in production.

- Especially relevant on shared storage nodes.

- Worth fixing even when exploitability is constrained.

Enterprise and Cloud Implications

Enterprise Linux deployments often run in environments where a kernel panic is not just a reboot, but a service incident with ticketing, paging, and perhaps automated failover. If a filesystem corruption event can be converted into a clean error instead of a panic, that is an immediate win for operational resilience. It is also the kind of fix that cloud teams appreciate because it reduces noise in ephemeral and scale-out systems.

Microsoft’s documentation around Azure Linux and SQL Server on Linux underscores how much enterprise infrastructure now depends on Linux kernel and filesystem behavior. The company explicitly tracks CVEs in Azure Linux package workflows and documents ext4 as a supported filesystem in several Linux deployment scenarios. That context makes ext4 stability a shared concern, not just a niche upstream kernel matter.

Cloud nodes and orchestrators

In cloud environments, a kernel panic often triggers node replacement, container rescheduling, or volume reattachment. If the panic is caused by a corrupted file read, those recovery flows may keep the platform afloat, but they still waste cycles and reduce predictability. A clean filesystem error is much easier to absorb.

The broader cloud lesson is that graceful failure scales better than fatal failure. When dozens or hundreds of nodes are involved, the difference between an error and a panic becomes a fleet-wide stability issue.

Storage and virtualization layers

Virtual machines, hypervisors, and storage appliances can all encounter ext4 volumes under conditions that make corruption more likely to go unnoticed until a read path hits it. That means administrators should not assume “we don’t run bare metal Linux” equals “we are safe.” The filesystem still exists somewhere in the stack, and this bug can still be relevant there.

- VM guests can hit the issue on corrupted virtual disks.

- Container hosts may expose it through mounted persistent volumes.

- Appliance firmware can inherit ext4 behavior indirectly.

- Backup and restore workflows can encounter latent corruption during validation.

Why Microsoft Is Tracking a Linux ext4 CVE

It may seem odd at first glance to see Microsoft-hosted vulnerability guidance reference a Linux kernel ext4 issue, but the explanation is straightforward: Microsoft operates across Linux-adjacent infrastructure, cloud services, and managed platforms that need CVE awareness. The Microsoft ecosystem documents Linux support considerations for SQL Server and Azure Linux, and it also participates in the broader CVE ecosystem through security guidance and package management processes.

That means a Linux filesystem fix like this can matter to Microsoft customers even if they never boot a Windows kernel on the affected machine. In a mixed environment, vulnerability management is not OS-pure; it is service-pure. If the storage layer is Linux, the issue is still part of the Microsoft-adjacent operational picture.

The practical takeaway

Administrators should read this CVE as a reminder to treat filesystem bugs with the same seriousness as application flaws. The stack does not care whether your control plane runs on one vendor’s software and the node OS runs on another’s. If a kernel panic can take down the workload, the vulnerability is operationally relevant.

This is also a good example of why cross-platform security awareness matters. The best runbooks now assume heterogeneous systems and mixed support boundaries, not neat one-vendor estates.

Strengths and Opportunities

This fix has several clear strengths, and it also creates an opportunity for operators to improve how they handle filesystem corruption events more broadly. The best outcome is not just patching the kernel, but using the incident to harden monitoring, recovery, and backup discipline.

- Better uptime because corruption now yields an error instead of a panic.

- Cleaner diagnostics through ext4_error_inode logging.

- Less memory-risk exposure thanks to buffer-head cleanup.

- More predictable recovery with -EFSCORRUPTED signaling.

- Reduced blast radius when a single inode is malformed.

- Operational clarity for incident response and postmortems.

- Alignment with modern kernel practice that favors recoverable errors over assertions.

A small fix with a large reliability dividend

The strength of this patch is that it changes the system’s behavior at the exact point where operators feel the pain most: downtime. A corruption event is still a problem, but it becomes a manageable one. That is often the difference between a maintenance ticket and a major incident.

Risks and Concerns

The fix improves resilience, but it does not eliminate the underlying corruption or the conditions that created it. Administrators still need to treat filesystem health as a first-class concern, because a graceful error path is only valuable if the organization knows how to respond to it.

- Latent corruption can remain undiscovered until the affected inode is accessed.

- Monitoring gaps may let repeated errors go unnoticed.

- Fleet inconsistency is possible if some nodes are patched and others are not.

- Backup assumptions may fail if corrupted data is restored without validation.

- Operational complacency can grow if teams assume the error handling itself solves the root cause.

- Version sprawl may leave older kernels exposed longer than expected.

- False confidence is a risk if teams equate “no panic” with “no incident.”

The hidden hazard of “safe” failures

One subtle concern is that graceful error handling can mask the severity of filesystem damage if teams are not alert enough. A panic gets attention immediately. An error log can be missed, especially in large fleets. That makes observability and alerting critical companions to the patch.

What to Watch Next

The next few days and weeks will likely clarify where this CVE lands in patch pipelines, whether downstream vendors backport the fix promptly, and whether related ext4 issues surface in adjacent code paths. Since the NVD page ties the problem to kernel.org patches and lists impacted kernel ranges, administrators should expect vendor guidance to follow as distributions absorb the change.

It will also be worth watching whether this fix becomes part of a larger trend of ext4 hardening. When one assertion is replaced with a recoverable error, it often prompts reviewers to hunt for neighboring assumptions that should be treated the same way. That is how one bug fix can quietly improve an entire subsystem.

Key things to monitor

- Distribution backports for affected kernel streams.

- Vendor advisories that map the CVE to supported builds.

- Any follow-on ext4 hardening patches in the same area.

- Monitoring updates for filesystem corruption alerting.

- Fleet consistency checks to confirm patch coverage.

- Reports of related panic-to-error conversions in other storage paths.

Practical action for admins

The immediate action is straightforward: identify affected kernel versions, prioritize systems that rely on ext4 for critical workloads, and ensure your logging and alerting can surface filesystem corruption quickly. If you operate cloud or container hosts, do not assume another layer will make this issue irrelevant. The filesystem is still part of your reliability chain.

The deeper lesson is that kernel safety is not just about preventing exploits. It is also about preventing a bad state from turning into a cascading outage. That is exactly what this ext4 fix does.

CVE-2026-31451 is not the kind of vulnerability that dominates headlines, but it is the kind that determines whether a production system stays up when the storage layer misbehaves. By replacing BUG_ON with proper error handling, the Linux kernel moves from catastrophic termination to measured recovery, which is exactly the sort of change that makes infrastructure more durable over time. For administrators, that means patching matters—not because the bug is flashy, but because the difference between a panic and an error is often the difference between a bad day and a major incident.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center