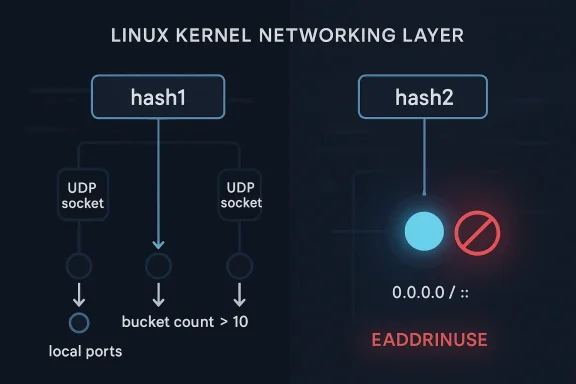

Linux systems picked up another networking CVE this week, and CVE-2026-31503 is a good reminder that some of the most consequential kernel bugs are not dramatic memory corruption flaws but logic failures in trusted packet paths. The issue lives in UDP bind conflict checking, where the kernel can switch from a port-only hash to an address-plus-port hash once a bucket grows large enough, and in that transition it can miss wildcard conflicts that should have blocked a bind. The result is a subtle but serious correctness problem: a socket that should have been rejected with -EADDRINUSE may be allowed to bind anyway once the hash2 path is in play. The vulnerability was recently published by the Linux kernel and surfaced in Microsoft’s vulnerability tracking as a new advisory entry, with the upstream fix already identified in stable references.

Binding looks deceptively simple from the outside. A process asks the kernel for a local address and port, and the kernel either reserves that tuple or rejects it if something else is already using the same space. Under the hood, however, Linux has to balance performance and correctness across many sockets, multiple address families, and wildcard semantics that vary depending on whether the request came from IPv4, IPv6, or an IPv4-mapped form. That is where the bug begins: the kernel uses two different hash tables for collision detection, and the threshold that decides which one to consult can change the outcome of the bind check.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

According to the newly published description, the switch to hash2 happens once `hslot->count > 10`. In the ordinary hash path, the check is keyed by local port only, which is broad enough to notice wildcard conflicts in many common cases. In the hash2 path, the key includes both local address and local port, which is more precise for heavily populated buckets but can accidentally narrow the scope of the conflict test. That is the heart of the flaw: precision is good, but not if it causes the kernel to forget that a wildcard bind must still collide with sockets already using the same port on other local addresses.

This is not an abstract theoretical issue. The description lays out a concrete sequence: bind ten sockets to different addresses on the same port, then attempt a wildcard bind to that port. With the ordinary hash path, the kernel correctly returns EADDRINUSE. Add one more socket so the bucket count crosses the threshold, and the kernel switches to hash2; at that point, the wildcard bind can succeed even though it still conflicts logically with the existing listeners. That kind of boundary bug is exactly the sort that survives testing because the system behaves correctly most of the time, then breaks only at scale or under specific address-mix conditions.
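The threshold effect can be illustrated with a small toy model. This is a sketch under stated assumptions, not kernel code: the class `ToyUdpTable`, its two dictionaries, and the helper `wildcard_accepted` are hypothetical names, only IPv4 is modeled, and the only detail taken from the description is the "more than 10 sockets" switch between a port-only index and an address-plus-port index.

```python
from collections import defaultdict

WILDCARD = "0.0.0.0"
THRESHOLD = 10  # mirrors the `hslot->count > 10` condition from the description


class ToyUdpTable:
    """Toy model of the two lookup structures (hypothetical, IPv4 only)."""

    def __init__(self):
        self.by_port = defaultdict(list)       # "hash": port -> bound addresses
        self.by_addr_port = defaultdict(list)  # "hash2": (addr, port) -> bound addresses

    @staticmethod
    def _conflicts(a, b):
        # Two binds on one port conflict if either is a wildcard or both match.
        return a == WILDCARD or b == WILDCARD or a == b

    def _candidates(self, addr, port):
        bucket = self.by_port.get(port, [])
        if len(bucket) <= THRESHOLD:
            return bucket  # broad path: every socket on the port is checked
        # Narrow path as described for the unfixed code: only the exact
        # address and the wildcard entry are consulted.
        exact = self.by_addr_port.get((addr, port), [])
        wild = self.by_addr_port.get((WILDCARD, port), [])
        return exact if addr == WILDCARD else exact + wild

    def bind(self, addr, port):
        if any(self._conflicts(addr, other) for other in self._candidates(addr, port)):
            return False  # stands in for EADDRINUSE
        self.by_port[port].append(addr)
        self.by_addr_port[(addr, port)].append(addr)
        return True


def wildcard_accepted(specific_count, port=8888):
    """Bind N address-specific sockets, then report whether 0.0.0.0 is accepted."""
    table = ToyUdpTable()
    for i in range(1, specific_count + 1):
        table.bind(f"192.168.1.{i}", port)
    return table.bind(WILDCARD, port)
```

In this model, ten address-specific binds leave the wildcard request on the broad path, while the eleventh pushes it onto the narrow path where no exact match exists. The real kernel tracks far more state (address families, reuse options, namespaces), so the sketch only conveys the shape of the problem.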

There is also a broader lesson here about kernel networking architecture. Linux often keeps multiple lookup structures around because no single index is optimal for every access pattern. The trade-off works well only if the code consistently applies the same policy across all paths that make binding decisions. If one path checks port occupancy broadly and another checks address-specific occupancy more narrowly, the system can drift into inconsistent results. In a socket API, inconsistency is not just confusing; it changes who gets to own a port and whether later traffic reaches the intended listener.

Background

UDP is connectionless, but it is not consequence-free. A bind still follows rules, and those rules are essential for daemons, service meshes, DNS servers, load balancers, and any application that expects to receive traffic on a stable port. Wildcard binds are especially important because they tell the kernel to accept packets for all local interfaces, which is often how services expose themselves broadly. If wildcard conflict detection is wrong, the system can end up with overlapping listeners that behave unpredictably depending on packet path, address family, or bind order.

The published description specifically calls out IPv6 addresses, IPv4 wildcard binds, and IPv4-mapped wildcard binds such as `::ffff:0.0.0.0`. That matters because Linux networking does not treat these forms as mere syntactic variants. They map to different family rules, different lookup semantics, and different user expectations. A bug that appears only when the kernel crosses family boundaries is therefore more dangerous than it looks, because operators may test one family and assume the others inherit the same behavior.

The fix also reveals something about code reuse between TCP and UDP. The advisory notes that TCP’s `inet_csk_get_port()` already has the correct check and that the helper should be renamed to `inet_use_hash2_on_bind()` and moved into `inet_hashtables.h` so UDP can share it. That is classic kernel maintenance logic: when two subsystems face the same policy question, one well-defined helper is usually safer than two slightly different implementations. Reuse is not just about saving code; it is about avoiding divergent interpretations of the same rule.

Why wildcard binds are harder than they sound

A wildcard bind is not just “bind to everything.” It is a promise that the socket should receive traffic sent to any local interface, so the kernel must ensure that no more specific bind has already occupied that port in a way that should block the wildcard. The bug here is that the decision to consult hash2 can shift the lookup so far toward specificity that the wildcard nature of the request no longer gets its full protection. That turns a policy question into a hashing accident.

This is especially relevant for high-density systems. Once multiple services, containers, or network namespaces stack up on the same host, port-space collisions become common enough that the kernel’s internal shortcuts matter. A defect that only emerges after ten collisions may sound niche, but infrastructure often reaches those thresholds naturally in modern deployments. In other words, the bug is not exotic; it is just waiting for a busy enough machine.

How the Bug Manifests

The advisory’s example is straightforward and useful because it shows the failure in terms administrators already understand. Bind ten UDP sockets to separate addresses on one port, then try to bind `::` on the same port. With the smaller bucket still using the original hash, the wildcard bind is rejected correctly. Add one more address-specific socket, and the same wildcard bind can succeed because the check path has switched to hash2. That means the result depends not on the logical conflict but on the bucket’s internal population.

That kind of behavior is particularly troublesome for troubleshooting. If a bind fails one minute and succeeds the next after unrelated sockets are added, engineers may assume a race or an orchestration bug. The real culprit is more subtle: the kernel changed lookup strategy. Because the symptom depends on how crowded a bucket becomes, the problem can be intermittent under lab conditions and obvious in production only after the service estate grows.
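The bind sequence above can be probed on a test machine with ordinary sockets. The following is a minimal sketch, assuming a Linux host where every address in 127.0.0.0/8 is bindable on the loopback interface and where port 8888 is free; the function names `loopback_addrs` and `probe_wildcard_bind` are hypothetical, and IPv4 loopback addresses stand in for the addresses used in the advisory.

```python
import errno
import socket


def loopback_addrs(count):
    """Return `count` distinct IPv4 loopback addresses: 127.0.0.1, 127.0.0.2, ..."""
    return [f"127.0.0.{i}" for i in range(1, count + 1)]


def probe_wildcard_bind(specific_count, port=8888):
    """Bind address-specific UDP sockets, then attempt a wildcard bind.

    Returns "rejected" if 0.0.0.0 fails with EADDRINUSE and "accepted" if
    the bind goes through. Any other error is re-raised unchanged.
    """
    held = []  # keep sockets open so their binds stay in place
    try:
        for addr in loopback_addrs(specific_count):
            sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
            held.append(sock)
            sock.bind((addr, port))
        wildcard = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
        held.append(wildcard)
        try:
            wildcard.bind(("0.0.0.0", port))
        except OSError as exc:
            if exc.errno == errno.EADDRINUSE:
                return "rejected"
            raise
        return "accepted"
    finally:
        for sock in held:
            sock.close()
```

Comparing `probe_wildcard_bind(10)` with `probe_wildcard_bind(11)` is the point of the exercise: going by the description, an affected kernel would be expected to answer differently for the two calls, while a fixed kernel should reject both. Other UDP sockets that happen to share the same hash bucket can shift the threshold, so a single run on a busy host is a hint rather than proof.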

The description also makes clear that the same logic applies to IPv4 wildcard binds. If existing sockets are bound to `192.168.1.[1-11]:8888`, then binding `0.0.0.0:8888` or `::ffff:0.0.0.0:8888` can miss the conflict once the threshold is crossed. That is a notable detail because it means the issue is not restricted to IPv6-native deployments. IPv4-heavy environments can hit the same flaw, which widens the practical impact considerably.

The threshold problem

Threshold-based fast paths are common in kernel code because they improve performance on busy systems. The risk is that threshold logic tends to assume the underlying semantic test remains equivalent across paths. Here, the switch is not merely a performance optimization; it changes what “conflict” means in practice. That makes the bug less like a pure optimization error and more like a policy regression introduced by scaling logic.

The most important point for defenders is that the failure is deterministic once the bucket is crowded enough. This is not a probabilistic memory bug or a timing-sensitive race. It is a predictable misclassification of a bind request after a specific occupancy threshold. That makes it easier to reason about, but also easier to underestimate if you only test with a handful of sockets.

Why This Matters for Networking Semantics

Kernel networking code lives and dies by invariants. If two bind requests are allowed to overlap when they should not, every assumption above the socket layer becomes shaky. Daemons may think they own a port exclusively when they do not, load balancers may deliver traffic on the wrong listener, and diagnostic tools can report misleading results because the kernel accepted an invalid state in the first place.

In practice, this kind of bug is less about packet crafting and more about service identity. Many applications do not care which interface delivered a datagram; they care that the kernel enforces the same exclusivity rules every time. A wildcard bind that slips through can create a shadow listener, and shadow listeners are the sort of problem that causes weird, expensive outages later. The bug may not hand an attacker code execution, but it can absolutely erode trust in the socket layer.

Wildcards, exclusivity, and operator expectations

Administrators generally expect `0.0.0.0:port` and `:::port` to mean “nobody else on that host should be able to occupy the same service port in a conflicting way.” The whole point of wildcarding is to make listening broader, not to loosen port ownership rules. When a kernel silently accepts a wildcard bind despite preexisting per-address binds, it violates that expectation and can produce puzzling service overlap.

That is why this CVE belongs in the same mental category as other correctness-oriented networking flaws. It does not need a dramatic exploit narrative to be operationally significant. In busy Linux estates, correct socket ownership is a control-plane guarantee. Once that guarantee slips, the consequences can show up as failed failovers, misrouted traffic, or listeners that are hard to trace back to the root cause.

Hash1 Versus Hash2

The vulnerability description is unusually clear about the two lookup structures. hash is keyed by local port only, while hash2 is keyed by local address and local port. That distinction makes sense from a performance perspective: a busy system with many sockets on the same port needs a more selective index to avoid checking everything in one bucket. But the policy check for wildcard binds cannot be narrowed that far without considering the broader exclusivity rule.

What makes this interesting is that the issue is not “hash2 is wrong” but “hash2 is incomplete for this use case.” That is a crucial distinction. The more selective structure is probably the right tool for many collision checks, yet wildcard binds need a wider view because they conflict with more than just exact-address matches. The fix therefore has to preserve the benefits of hash2 while restoring the conflict semantics that wildcard requests rely on.

Why TCP already had the answer

The record notes that TCP’s bind logic already contains the correct check in a helper named `inet_use_bhash2_on_bind()`. That is a useful clue because it shows the kernel community already solved a related problem elsewhere in the stack. Moving that logic into a shared header and renaming it for generic reuse is a sign that the bug fix is meant to align UDP with established TCP semantics rather than invent a new policy.

That kind of convergence matters downstream. When TCP and UDP agree on the meaning of hash2-based bind selection, distributions and vendors have a much easier time backporting and validating the change. It also reduces the chance that one protocol family quietly behaves differently from the other in edge cases that should have been covered by the same rule. In kernel terms, that is a good cleanup, not just a one-off patch.
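The rule such a helper encodes can be sketched in a few lines. The function below borrows the renamed helper's name for readability, but its body is an assumption based on the address forms the description lists (`::`, `0.0.0.0`, and `::ffff:0.0.0.0`), not a transcription of kernel source: wildcard requests stay on the broad, port-only check, and everything else may use the address-plus-port index.

```python
import ipaddress


def use_hash2_on_bind(addr: str) -> bool:
    """Return True when an address-plus-port lookup is enough for this bind.

    Illustrative Python rendering of the rule, not the kernel helper itself.
    """
    ip = ipaddress.ip_address(addr)
    if ip.version == 6:
        if ip.is_unspecified:        # "::" is the IPv6 wildcard
            return False
        if ip.ipv4_mapped is None:   # ordinary IPv6 address
            return True
        ip = ip.ipv4_mapped          # "::ffff:a.b.c.d": judge the IPv4 part
    return not ip.is_unspecified     # "0.0.0.0" is the IPv4 wildcard
```

Keeping the decision in one predicate is what lets both protocols answer the question the same way; a caller only needs to consult it before choosing which index to scan.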

Enterprise and Consumer Impact

The enterprise angle is the stronger one here. Servers that host many services on the same node, especially container hosts and network appliances, are the most likely to accumulate enough binds to cross the threshold that activates hash2. In those environments, port exclusivity is not academic; it is part of the service map. A missed conflict can create unpredictable exposure or service collisions that take time to diagnose.

Consumer systems are less likely to run into the bug in daily use, but they are not immune. Home labs, virtualization workstations, developer laptops with container stacks, and small router appliances can all create the kind of address diversity that fills the hash bucket. If a machine is running local services for development, testing, or packet forwarding, the issue becomes much more plausible. The safe assumption is that the bug matters anywhere Linux is used as a real network node.

Practical exposure profile

The systems most likely to care about this CVE are not bare desktops. They are nodes with active service registration, orchestration, NAT, or address-heavy UDP workloads. DNS, telemetry collectors, service meshes, embedded appliances, and multi-tenant hosts are all plausible candidates. The more sockets share the same port across different addresses, the more likely the bug is to surface.

That means patch prioritization should be based on topology, not just version numbers. A branch-office server that listens broadly may be at higher practical risk than a more powerful workstation with very few sockets open. In other words, exposure is about address diversity and port reuse, not raw CPU count or machine class.
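One rough way to gauge that exposure is to count UDP sockets per local port from `/proc/net/udp`. The sketch below assumes the usual Linux layout of that file (a header line, then rows whose second column is a hex `address:port` pair); the function names are hypothetical, and a per-port count is only a proxy, since the kernel's threshold applies to a hash bucket that may also hold sockets from other ports.

```python
from collections import Counter


def sockets_per_port(proc_text):
    """Count sockets per local port in /proc/net/udp-style text."""
    counts = Counter()
    for line in proc_text.splitlines()[1:]:  # first line is the column header
        fields = line.split()
        if len(fields) < 2:
            continue
        # Second column looks like "0100007F:22B8" (hex address, hex port).
        _addr_hex, port_hex = fields[1].rsplit(":", 1)
        counts[int(port_hex, 16)] += 1
    return counts


def crowded_ports(proc_text, threshold=10):
    """Return ports with more than `threshold` sockets, in ascending order."""
    return sorted(p for p, n in sockets_per_port(proc_text).items() if n > threshold)
```

On a live host, the input would come from something like `open("/proc/net/udp").read()`, with `/proc/net/udp6` covering IPv6 sockets. The file reflects only the current network namespace, so container hosts would need the check repeated per namespace.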

The Fix and What It Likely Changes

The fix, as described, is conceptually simple: reuse TCP’s existing hash2-on-bind helper, rename it to a more generic form, and let UDP call the same logic. That suggests the bug was not in the existence of hash2 itself, but in the code path that decided whether hash2 could safely be used for a wildcard bind conflict test. If the shared helper is applied correctly, the kernel should again reject wildcard binds that overlap with already occupied local ports regardless of how crowded the hash bucket is.

This is exactly the kind of repair the kernel tends to like. It is narrow, it is semantically clear, and it does not require redesigning UDP port allocation. Instead, it restores a policy check that had become inconsistent under one internal optimization path. That keeps the patch small enough for stable backports while also fixing the core logic rather than masking the symptom.

Why shared helpers are safer here

Shared helpers reduce drift. If TCP already carries the right answer for when hash2 is appropriate, UDP should not maintain its own slightly different version unless there is a protocol-specific reason to do so. Moving the helper to `inet_hashtables.h` is therefore more than a code organization change; it is an attempt to codify a shared binding rule in one place.

That matters for long-term maintenance because bugs often reappear when two nearly identical implementations slowly drift apart. Kernel networking code is especially vulnerable to this due to its layered history. A single common helper reduces that risk and makes future audits easier, which is exactly what stable-tree maintainers want from a security fix.
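How a shared predicate restores the broad check can be shown with another toy sketch. Everything here is illustrative: `port_in_use` and `conflicts` are hypothetical names, address-family rules are flattened into a single set of wildcard strings, and the only behavior borrowed from the description is that a crowded port takes the narrow path solely when the helper allows it.

```python
THRESHOLD = 10
WILDCARDS = {"0.0.0.0", "::", "::ffff:0.0.0.0"}


def use_hash2_on_bind(addr):
    """Shared rule in toy form: wildcard binds must not take the narrow path."""
    return addr not in WILDCARDS


def conflicts(a, b):
    # Simplified: a wildcard on either side conflicts with anything on the port.
    return a in WILDCARDS or b in WILDCARDS or a == b


def port_in_use(bound, addr, port):
    """`bound` is a list of (address, port) pairs that were already accepted."""
    same_port = [a for a, p in bound if p == port]
    if len(same_port) > THRESHOLD and use_hash2_on_bind(addr):
        # Narrow path: only exact-address and wildcard entries are examined.
        candidates = [a for a in same_port if a == addr or a in WILDCARDS]
    else:
        # Broad path: every socket on the port is examined.
        candidates = same_port
    return any(conflicts(addr, other) for other in candidates)
```

With eleven address-specific entries on port 8888, a wildcard request in this sketch still falls back to the broad path and is reported as in use, while a new specific address keeps the benefit of the narrow lookup.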

Strengths and Opportunities

This CVE has a few positive qualities from a remediation standpoint. It is well-defined, the failure mode is easy to reproduce conceptually, and the fix appears to be a small semantic correction rather than a sweeping change. That makes it a good candidate for rapid backporting across supported kernel lines.

- The bug is clearly described with a reproducible bind sequence.

- The fix is narrow and likely low-risk to backport.

- TCP already contains a semantically similar helper.

- The issue affects both IPv6 and IPv4-style wildcard binds.

- The patch can improve consistency across TCP and UDP.

- Administrators can reason about exposure using port density.

- The defect is operationally meaningful even without memory corruption.

Risks and Concerns

The main concern is underestimation. Because the issue is a bind-conflict miss rather than a crash or exploit primitive, some teams may treat it as a low-priority nuisance. That would be a mistake, because incorrect socket ownership can lead to service overlap, hidden listeners, and hard-to-debug operational problems.

- The bug may be dismissed as “just” a logic error.

- Crowded UDP environments can hit the threshold naturally.

- Vendor backports may lag behind upstream stable fixes.

- Mixed IPv4/IPv6 deployments are more exposed than expected.

- Symptoms may look like orchestration or network flakiness.

- Shadow listeners can complicate incident response.

- […] common enough to matter in production.

Looking Ahead

The first thing to watch is how quickly downstream vendors fold in the fix. Because the record already references upstream stable commits, the meaningful question for administrators is not whether a patch exists, but whether their specific kernel build contains the backport. That is especially important in enterprise distributions, where fixes often arrive without dramatic version changes.

The second thing to watch is whether additional bind-path cleanups follow. Once the kernel community identifies a mismatch between hash selection and wildcard conflict semantics, it is reasonable to ask whether adjacent address-family code paths have similar edge cases. Networking bugs in Linux often arrive in small clusters, not in isolation.

What administrators should verify

- Confirm whether the running kernel includes the backported UDP bind fix.

- Review workloads that bind many sockets to the same port across different addresses.

- Test wildcard binds on busy nodes rather than only on quiet lab systems.

- Validate IPv4, IPv6, and IPv4-mapped behavior separately.

- Treat any unexpected bind success as a potential conflict bug, not a configuration quirk.

In the longer term, this CVE is another reminder that the Linux kernel’s security surface is increasingly defined by correctness under scale. Many modern flaws are not about obvious corruption, but about a system taking the wrong branch when one more socket, one more container, or one more address tips a threshold. CVE-2026-31503 fits that pattern neatly, and that is why it deserves attention even without a dramatic exploit story. The kernel did not simply miscount; it briefly forgot what a wildcard bind is supposed to mean.
