CVE-2026-43053 is a Linux kernel XFS filesystem vulnerability published on May 1, 2026, and later analyzed by NIST on May 7, involving a crash-recovery flaw during extended-attribute tree cleanup that can leave XFS metadata unreplayable after a local, privileged failure sequence. The bug is not a remote-code-execution barnburner, and it will not make the average Windows desktop user drop everything. But it is exactly the sort of filesystem defect that matters in the places where Windows and Linux now overlap: WSL-heavy developer machines, Hyper-V labs, Azure estates, storage appliances, backup servers, and mixed-platform enterprise fleets. The lesson is not that XFS is fragile; it is that modern infrastructure has made “Linux kernel CVE” part of the Windows administrator’s patch vocabulary.

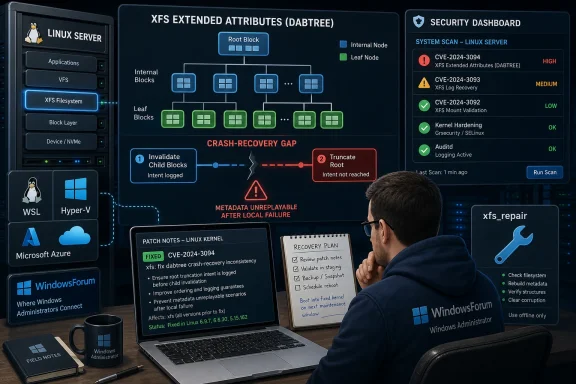

That means scanners may overstate the number of affected systems. They may also understate operational risk by presenting the CVE as a medium local issue when the exposed asset is a storage-critical production host. The database does not know which machine holds the only copy of last quarter’s archive or which container node backs a customer-facing service.

A good triage workflow should therefore enrich scanner output with local facts. Which systems run XFS? Which kernels include the relevant commits? Which hosts are multi-user or multi-tenant? Which ones have a realistic path to untrusted local workloads? Which ones have maintenance windows soon enough to make this a normal patch rather than an exception?
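One way to picture that enrichment step is a small rule that combines scanner output with local facts. The sketch below is only an illustration: the `Host` fields, the `triage` function, and the three priority labels are hypothetical names chosen for this example, and the policy itself is an assumption that each team would tune to its own risk appetite.

```python
from dataclasses import dataclass

@dataclass
class Host:
    """Hypothetical inventory record; field names are assumptions for this sketch."""
    name: str
    uses_xfs: bool          # any XFS filesystem mounted?
    kernel_has_fix: bool    # vendor advisory or changelog confirms the fix
    multi_tenant: bool      # untrusted local workloads plausible
    storage_critical: bool  # outage or repair would breach recovery objectives

def triage(host: Host) -> str:
    """Map local facts to an illustrative patch priority."""
    if not host.uses_xfs or host.kernel_has_fix:
        return "routine"       # code path unreachable or already fixed
    if host.multi_tenant or host.storage_critical:
        return "prompt"        # medium score, high operational stakes
    return "next-window"       # exposed, but low consequence

fleet = [
    Host("lab-vm", uses_xfs=False, kernel_has_fix=False,
         multi_tenant=False, storage_critical=False),
    Host("backup-01", uses_xfs=True, kernel_has_fix=False,
         multi_tenant=False, storage_critical=True),
]
for h in fleet:
    print(h.name, "->", triage(h))
```

The point of the sketch is the ordering of the questions: XFS use and fix status come first, and only then do tenancy and criticality decide urgency.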

This is also a reminder that “known affected software configurations” sections in CVE databases are not asset inventories. They are vulnerability metadata. Treating them as a substitute for environment knowledge is how teams either waste emergency cycles or miss the one server that actually matters.

The best administrators will turn this CVE into a small test of their Linux visibility from a Windows-centered console. If the answer to “where do we use XFS?” takes an afternoon of Slack archaeology, the CVE has already found a process bug.

That is not good news, but it is not the same as a confirmed silent-data-corruption bug. The verifier is doing its job by refusing to proceed with metadata that fails structural checks. The impact is availability because the filesystem may not mount cleanly until repaired, and repair always carries operational risk depending on what state the disk is in and how current the backups are.

The public description does not claim confidentiality loss or integrity modification by an attacker. NVD’s vector reflects that: confidentiality and integrity are scored as none, availability as high. That distinction is important for response prioritization, insurance reporting, customer communication, and internal risk acceptance.

Still, “not silent data theft” should not become “not important.” Filesystems are trust machines. When recovery stops because metadata no longer satisfies the rules, administrators face downtime and uncertainty. In many environments, that is enough to move a medium CVE onto the near-term maintenance board.

Yet these are the bugs that separate mature filesystems from toy projects. XFS has decades of engineering behind it, extensive verification, and a long history in demanding environments. The existence of CVE-2026-43053 does not contradict that reputation. If anything, the fix shows the standard expected of production filesystems: intermediate states must be safe, recovery must be deterministic, and verifiers must remain strict.

The broader industry lesson is that storage reliability and security have fused. A crash-consistency bug becomes a CVE because an unprivileged or low-privileged local actor may contribute to an availability failure. A filesystem repair event becomes a security incident if it affects service guarantees. A kernel patch becomes a Windows operations concern because the estate is hybrid whether or not the org chart admits it.

This is also why vendor metadata matters. The CVE arrived from kernel.org, was published by NVD on May 1, received NIST analysis on May 7, and is visible through Microsoft’s security ecosystem. The patch trail and advisory trail together tell administrators not just that a bug exists, but where to look for the fixed code path.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

A Medium CVE With a Very Uncomfortable Failure Mode

The headline score on CVE-2026-43053 is moderate: NVD lists a CVSS 3.1 base score of 4.7, with local attack vector, high attack complexity, low privileges required, no user interaction, unchanged scope, no confidentiality or integrity impact, and high availability impact. That is the score of a bug that is hard to trigger on purpose but ugly when it lands. Availability, not data theft, is the center of gravity.

The affected subsystem is XFS, the long-running high-performance Linux filesystem originally born at SGI and now common in enterprise Linux deployments. The vulnerable path sits deep in extended-attribute handling, specifically during inode inactivation when XFS is cleaning up a node-format attribute tree. This is not the code path one thinks about when clicking “update” or copying files, but it is precisely the kind of code storage administrators depend on never to get subtly wrong.
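The 4.7 base score quoted in this section can be reproduced from the listed metrics with the published CVSS 3.1 formula. The sketch below is a minimal calculator that handles only the unchanged-scope case; the vector string is assembled from the metrics named above rather than copied from NVD, and the function names are illustrative.

```python
import math

# CVSS 3.1 metric weights (Privileges Required values are for Scope: Unchanged).
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},
    "AC": {"L": 0.77, "H": 0.44},
    "PR": {"N": 0.85, "L": 0.62, "H": 0.27},
    "UI": {"N": 0.85, "R": 0.62},
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},
}

def roundup(value: float) -> float:
    """CVSS 3.1 Roundup: smallest one-decimal number >= value."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0

def base_score(vector: str) -> float:
    """Base score for a CVSS 3.1 vector; this sketch supports Scope: Unchanged only."""
    metrics = dict(part.split(":") for part in vector.split("/")[1:])
    if metrics["S"] != "U":
        raise ValueError("this sketch only handles Scope: Unchanged")
    w = {name: WEIGHTS[name][metrics[name]] for name in WEIGHTS}
    iss = 1 - (1 - w["C"]) * (1 - w["I"]) * (1 - w["A"])
    impact = 6.42 * iss
    exploitability = 8.22 * w["AV"] * w["AC"] * w["PR"] * w["UI"]
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))

# Vector assembled from the metrics listed in the article.
VECTOR = "CVSS:3.1/AV:L/AC:H/PR:L/UI:N/S:U/C:N/I:N/A:H"
print(base_score(VECTOR))  # 4.7
```

The impact term carries most of the weight (about 3.6 of the 4.64 raw total), and all of it comes from availability, which is the arithmetic behind "availability is the center of gravity."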

The failure described in the CVE is a crash window. XFS invalidates child leaf and node blocks in an attribute tree but, before the fix, could leave parent nodes containing references to those now-cancelled blocks until a later truncation step removed the whole attribute fork. If the system shut down in the gap between those operations, log recovery could come back with enough of the old map intact to follow stale pointers into blocks whose replay had been suppressed.

That is why the sample failure reads like a storage engineer’s bad morning: metadata corruption detected, unmount and run `xfs_repair`, metadata I/O error. The practical impact is not an attacker stealing secrets; it is a filesystem that refuses to trust its own recovered metadata and forces intervention. In production, that can be the difference between a routine reboot and a service outage.

The Bug Lives in the Space Between Two Correct Ideas

What makes CVE-2026-43053 interesting is that the old behavior was not obviously reckless. The code assumed that after child blocks in the attribute tree were invalidated, a following operation would truncate the entire attribute fork to zero extents. If that later truncation was guaranteed to commit before recovery ever had a chance to inspect the stale tree, there would be no lasting pointer problem.

Filesystems, however, live and die by their crash-consistency contracts. Operations that are logically adjacent to a programmer are not necessarily atomic to the journal. A log shutdown between “the children are gone” and “the map no longer points at the tree” is rare, but filesystems are judged by rare events: power loss, kernel panic, storage timeout, controller failure, virtual disk hiccup, host reset.

The patch therefore does not merely paper over an error message. It changes the transaction choreography. After invalidating a child block, XFS now removes the parent entry that referenced that child in the same transaction. The parent is no longer allowed to contain a durable pointer to a block that recovery may later suppress.

The fix also converts an emptied root node into a leaf block before the later truncation phase. That detail sounds almost absurdly niche until you follow the recovery path. An empty node block is not valid under XFS verification rules, while a leaf block can represent the transient state safely. The patch is therefore not just “delete stale pointers”; it is “make every intermediate on-disk state something recovery can legally read.”

That is the deeper filesystem lesson. Crash safety is not achieved only at the beginning and end of an operation. It has to hold at every point where the machine might stop.
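The "every point where the machine might stop" idea can be made concrete with a toy model. The sketch below is not XFS code: the `Disk` class, the step helpers, and the two transaction orderings are hypothetical simplifications that only mimic the shape of the problem described above. It replays every possible crash point and asks whether recovery would find a parent entry pointing at a cancelled block.

```python
from dataclasses import dataclass, field

@dataclass
class Disk:
    """Toy durable state: one parent node with entries for child blocks A and B."""
    parent_entries: set = field(default_factory=lambda: {"A", "B"})
    cancelled: set = field(default_factory=set)  # blocks recovery will not replay
    fork_mapped: bool = True                     # attr fork still reachable

def cancel(child):
    return lambda d: d.cancelled.add(child)

def drop_entry(child):
    return lambda d: d.parent_entries.discard(child)

def truncate_fork(d):
    d.parent_entries.clear()
    d.fork_mapped = False

# Each inner list is one transaction: its steps become durable together or not at all.
OLD_ORDER = [[cancel("A")], [cancel("B")], [truncate_fork]]
NEW_ORDER = [[cancel("A"), drop_entry("A")],
             [cancel("B"), drop_entry("B")],
             [truncate_fork]]

def recovery_is_safe(d: Disk) -> bool:
    """Recovery walks the parent while the fork is mapped; a stale pointer is fatal."""
    return not d.fork_mapped or not (d.parent_entries & d.cancelled)

def unsafe_crash_points(transactions):
    """List how many transactions had committed at each unsafe crash point."""
    unsafe = []
    for committed in range(len(transactions) + 1):
        disk = Disk()
        for tx in transactions[:committed]:
            for step in tx:
                step(disk)
        if not recovery_is_safe(disk):
            unsafe.append(committed)
    return unsafe

print("old ordering:", unsafe_crash_points(OLD_ORDER))  # [1, 2]
print("new ordering:", unsafe_crash_points(NEW_ORDER))  # []
```

In the toy model the old ordering is safe at the start and at the end but not in between, which is exactly the pattern the patch eliminates by pairing each cancellation with removal of the parent entry.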

Microsoft’s Name on a Linux Kernel CVE Is the Modern Normal

The source advisory for this article is Microsoft’s Security Update Guide, which may look odd at first glance. CVE-2026-43053 is not a Windows kernel bug, and the vulnerable component is not NTFS, ReFS, SMB, or Hyper-V itself. It is a Linux kernel XFS flaw, sourced from kernel.org and reflected through vulnerability databases and vendor advisories.

That oddness is the point. Microsoft is no longer merely the steward of Windows desktops and Windows Server. It ships and supports Linux-adjacent experiences through WSL, hosts enormous Linux estates on Azure, builds tooling for mixed fleets, and maintains security metadata that enterprise vulnerability scanners consume. For many administrators, the MSRC page is not saying “your Windows workstation is directly vulnerable.” It is saying “this CVE may appear in your Microsoft-centered security workflow.”

There is a difference, and it matters. A Windows 11 laptop using only NTFS volumes does not suddenly inherit XFS metadata semantics because MSRC lists a Linux kernel CVE. But a Windows developer workstation running Linux distributions under WSL, a Hyper-V host full of Linux guests, or an Azure-backed environment with XFS-formatted volumes may absolutely have systems in scope. The affected asset is the Linux kernel and its XFS code, not the Windows storage stack.

This is the daily reality of 2026 infrastructure. Security teams do not patch operating systems; they patch estates. Those estates contain Windows, Linux, container hosts, cloud images, appliances, kernels supplied by vendors, and kernels carried inside products that do not advertise themselves as Linux until someone opens a shell.

For WindowsForum readers, that is the most useful framing. This CVE is not a reason to panic about Windows. It is a reason to check whether your Windows-centric environment quietly depends on Linux filesystems in places you have stopped thinking of as separate.

XFS Extended Attributes Are Not an Exotic Corner Anymore

Extended attributes sound like optional filesystem trivia, but they are now part of ordinary Linux life. They store security labels, metadata used by backup and sync tools, namespace-specific information, and application-managed file properties. On systems using SELinux, containers, overlay filesystems, backup software, or specialized storage workflows, extended attributes are often not ornamental.

XFS stores larger or more complex extended-attribute sets in tree form. The CVE text refers to a dabtree, the directory-and-attribute B-tree structure XFS uses to organize entries. When an inode is being inactivated (effectively, when the filesystem is tearing down the inode after deletion or cleanup), XFS must remove not only the file data mappings but also the attribute fork and its tree of metadata blocks.

That teardown is where the bug sits. Parent nodes could remain logged in a state that still pointed to children whose replay had been cancelled. If the subsequent truncation committed, the state was cleaned up and no one cared. If the system died at the wrong instant, recovery could rebuild enough structure to chase a pointer into unreplayed or stale content.

This is why the CVE is classified under CWE-367, a time-of-check/time-of-use race condition. In application security, TOCTOU often conjures images of swapping a symlink between a permissions check and a file open. In filesystem internals, the same class of problem can be more mechanical: one transaction makes an assumption about the state another transaction will soon establish, and a crash exploits the gap.

The word “exploit” should be used carefully here. The public description does not describe a practical privilege-escalation chain or remote trigger. It describes a local, high-complexity availability problem whose most credible danger is induced or accidental filesystem unavailability. But for systems where uptime is the product, that is not a minor outcome.

The CPE Range Looks Broad Because Kernel CVEs Often Are

The NVD change record lists Linux kernel versions from early 2.6.12-era entries up to versions before 6.19.12, with release-candidate entries also appearing. That sort of broad CPE range routinely causes confusion, and sometimes false positives, in vulnerability management tools. It does not necessarily mean every deployed Linux system in that span is equally exploitable in a practical environment.

Kernel CVEs are messy because distribution kernels are not pure upstream version numbers. Red Hat, Ubuntu, Debian, SUSE, Oracle, Amazon, Microsoft-related images, appliance vendors, and cloud providers all backport fixes aggressively. A system that claims an older kernel version may already carry the relevant patch. Conversely, a custom kernel built from an apparently safe branch may lack a downstream fix if the maintainer did not pick it up.

That is why CPE data is a starting point, not a verdict. The two kernel.org patch references matter more than the scary-looking version span, because they identify the code-level remediation. Enterprise triage should answer whether the running kernel package includes the XFS fix, not merely whether the marketing version string falls inside a broad range.
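To see why a version string is only a starting point, consider what a version-only check actually does. The sketch below is a hypothetical illustration: `upstream_triplet` and `scanner_view` are names invented here, and the `6.19.12` bound is simply the upper end of the range quoted in this article, not a complete list of fixed stable branches.

```python
import re

# Upper bound of the NVD range as quoted in the article; other fixed
# stable branches, if any, are deliberately not modeled here.
FIXED_UPSTREAM = (6, 19, 12)

def upstream_triplet(release: str):
    """Extract (major, minor, patch) from a string like '6.8.0-60-generic'."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", release)
    if not match:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return tuple(int(group or 0) for group in match.groups())

def scanner_view(release: str) -> str:
    """What a version-only scanner would conclude; never a final verdict."""
    if upstream_triplet(release) < FIXED_UPSTREAM:
        return "in range: confirm against vendor advisory or changelog"
    return "outside range: still confirm for custom or vendor-patched builds"

for release in ("5.14.0-427.el9.x86_64", "6.8.0-60-generic", "6.19.12"):
    print(release, "->", scanner_view(release))
```

Both outcomes end in "confirm" on purpose: a distribution kernel that parses as old may carry the backport, and the comparison knows nothing about what a vendor or a custom build actually contains.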

There is also the question of filesystem exposure. A vulnerable kernel with no XFS mounts has a different risk profile from a vulnerable kernel running XFS on a busy container host, backup target, or database volume. Security scanners are good at finding version strings; they are less good at understanding whether the relevant code path is reachable during real operations.

This is where administrators earn their keep. The correct response is not to dismiss the CVE because it is medium, nor to treat every Linux VM as equally urgent. The correct response is to map the bug to actual XFS use.

The Attack Story Is Less Persuasive Than the Outage Story

A vulnerability with local access, high complexity, and low privileges required sits in an awkward zone. It is not nothing: local users or workloads may be able to create conditions that exercise filesystem behavior. But the chain described in the public record depends on a shutdown at a very particular point in filesystem cleanup. That makes it harder to weaponize cleanly than a simple null-pointer dereference or permission bypass.

The more compelling scenario is operational rather than adversarial. A system is under load, deleting files with extended attributes on XFS, and then suffers a log shutdown due to an I/O problem, kernel fault, storage issue, or forced reset. On the next mount, log recovery encounters metadata it cannot verify and the machine lands in repair territory. No human attacker has to exist for that story to hurt.

Persistent memory in the sample log device name, `pmem0`, is a useful reminder but not a narrow limit. The device name in a reproducer or bug report does not mean only persistent-memory users should care. XFS is used across ordinary block devices, SAN-backed volumes, cloud disks, and virtualized storage. The important variables are XFS, extended-attribute tree state, inode inactivation, and crash timing.

For attackers, availability bugs still have value in multi-user systems and shared hosting environments. A local denial-of-service path against storage can be meaningful when tenants share a kernel or when an untrusted workload can influence filesystem activity. But this one’s high complexity and crash-window requirement make it a lower-priority security emergency than its “metadata corruption” language might imply.

For operators, however, metadata corruption warnings are never background noise. Even when the kernel is doing the right thing by refusing unsafe metadata, the result is still downtime, repair work, and possibly a difficult conversation about backups. The kernel’s verifier protects integrity by stopping the mount path; your service-level objective experiences that as an outage.

Why the Fix Is a Transaction Design Lesson

The fix described for CVE-2026-43053 has two halves, and both are instructive. First, XFS removes parent references to child blocks immediately after invalidating those children, within the same transaction. That collapses the dangerous gap between “this child is no longer replayable” and “nothing points to it.”

Second, the attribute-fork truncation is split into two explicit phases. The filesystem first truncates extents beyond the root block, after the child references have already been removed. Then it invalidates the root block and truncates the attribute bmap to zero in a single transaction. The key phrase is single transaction: as long as the bmap has non-zero length, recovery can find the root, so root invalidation and bmap removal must become atomic from the log’s point of view.

That may sound like a tiny implementation detail, but it is the whole story. Journaled filesystems are not magic; they are sets of promises about what state can be reconstructed after interruption. If a pointer can survive without its target, recovery must either never follow it or the transaction design must prevent the mismatch from becoming durable.

The empty-root-node conversion is the elegant part. XFS verification rules reject a node block with a count of zero, which is sensible for normal metadata. But in this cleanup path, the system needs a crash-safe representation of “the tree has been emptied but the final truncation has not yet landed.” A leaf block can carry that transient state. So the fix does not relax verification; it changes the intermediate shape so verification remains meaningful.
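The verifier argument can be shown in a few lines. The sketch below is a deliberately tiny stand-in, not the kernel's verifier: `Block`, `verify`, and `remove_last_child` are hypothetical names, and the only rules modeled are the two stated above (an empty node is rejected, an empty leaf is tolerated as a transient state).

```python
from dataclasses import dataclass

@dataclass
class Block:
    kind: str   # "node" or "leaf"
    count: int  # number of entries the block holds

def verify(block: Block) -> bool:
    """Toy verifier: a node must hold entries; an empty leaf is tolerated."""
    if block.kind == "node":
        return block.count > 0
    return block.kind == "leaf" and block.count >= 0

def remove_last_child(root: Block, convert_empty_root: bool) -> Block:
    """Drop one entry from the root; optionally reshape an emptied node into a leaf."""
    remaining = root.count - 1
    if remaining == 0 and convert_empty_root:
        return Block(kind="leaf", count=0)
    return Block(kind=root.kind, count=remaining)

root = Block(kind="node", count=1)
print(verify(remove_last_child(root, convert_empty_root=False)))  # False: empty node
print(verify(remove_last_child(root, convert_empty_root=True)))   # True: empty leaf
```

Note that `verify` is identical in both calls. The rule stays strict; only the intermediate shape changes, which mirrors the design choice the paragraph describes.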

This is the kind of patch that rarely becomes famous because, when it works, nothing happens. The server reboots, recovery runs, metadata verifies, and no one learns the names of the functions involved. Filesystem maintainers spend a great deal of their careers ensuring users never have to read code paths like `xfs_attr3_node_inactive()`.

The Windows Angle Is WSL, Virtualization, and Inventory

For a pure Windows desktop user, CVE-2026-43053 is mostly ambient security noise. NTFS and ReFS are not affected because they are different filesystems in a different kernel. Installing Windows cumulative updates will not repair a vulnerable Linux kernel running inside a VM or supplied by a Linux distribution.

But Windows power users are rarely pure anymore. WSL distributions run Linux userlands with Microsoft-supplied integration. Hyper-V labs host Linux guests. Docker Desktop, Kubernetes tooling, dev containers, and cloud build agents routinely blur the boundary between “my Windows machine” and “the Linux kernel over there.” Even when the Windows host is not vulnerable, the work being done on it may depend on vulnerable Linux components.

WSL deserves careful wording. Many WSL use cases store the Linux distribution’s root filesystem in a virtual disk, and filesystem choices depend on the distribution, configuration, and kernel environment. Administrators should not assume XFS is present simply because Linux is present. They should check actual mounts and kernel package provenance.

The same applies to Azure and cloud-hosted Linux images managed from Windows shops. XFS is common in enterprise Linux, especially where administrators selected it for performance, scalability, or vendor defaults. A Windows-heavy operations team may still own the patching outcome if the affected systems sit behind Active Directory integration, Microsoft Defender for Cloud reporting, Azure Update Manager processes, or a central vulnerability dashboard.

In short: do not ask whether your Windows machines are vulnerable. Ask whether your Windows-administered environment contains Linux kernels using XFS in roles where a crash-recovery failure would matter.

Patch Triage Starts With Mounts, Not Panic

The first practical step is boring and decisive: identify where XFS is mounted. On Linux systems, administrators can inspect mount output, filesystem types, /etc/fstab, cloud-init configuration, storage automation, and fleet inventory. The vulnerable code path is in XFS; systems using ext4, btrfs, ZFS, NTFS passthrough, or other filesystems are not exposed to this particular bug in the same way.

The second step is to identify kernel provenance. A distribution kernel with vendor support should be evaluated through that vendor’s advisory and package changelog. A custom-built kernel should be checked against the upstream stable commits referenced in the CVE record. Appliances and embedded products should be treated as vendor-managed until proven otherwise, because the kernel version string alone can mislead.
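The mount check in the first step can be sketched in a few lines. This is a minimal example that assumes the standard /proc/mounts layout (device, mount point, filesystem type, options, and two numeric fields); the function name `xfs_mounts` is made up for illustration, and a real fleet would feed the same logic from its inventory tooling.

```python
from typing import List, Tuple


def xfs_mounts(mounts_text: str) -> List[Tuple[str, str]]:
    """Return (device, mount_point) pairs for XFS entries in /proc/mounts-style text."""
    found = []
    for line in mounts_text.splitlines():
        fields = line.split()
        # Expected fields: device, mount_point, fs_type, options, dump, pass
        if len(fields) >= 3 and fields[2] == "xfs":
            found.append((fields[0], fields[1]))
    return found


if __name__ == "__main__":
    try:
        with open("/proc/mounts", encoding="utf-8") as handle:
            for device, mount_point in xfs_mounts(handle.read()):
                print(f"{device} mounted at {mount_point}")
    except OSError:
        # Not a Linux host, or /proc is unavailable in this environment.
        print("/proc/mounts is not readable on this system")
```

An empty result on a given host answers the exposure question for currently mounted filesystems only; /etc/fstab and storage automation may still define XFS volumes that are not mounted at the moment.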

The third step is to think about reboot sequencing. Kernel filesystem fixes generally require booting into a fixed kernel, not merely installing a package and waiting. That matters for storage servers, virtualization hosts, CI runners, container nodes, and any system where maintenance windows are negotiated rather than assumed.

The fourth step is to protect the boring basics. Backups should be current, restores should be tested, and xfs_repair should not be a plan invented under pressure. A filesystem availability CVE is a good excuse to verify that rescue media, out-of-band management, and snapshot policies still work the way the runbook claims.

Security teams should resist overfitting to the CVSS number. A 4.7 medium can still justify prompt patching on a storage-dense host with many tenants and strict recovery objectives. Conversely, it may be a routine-cycle update on a lab VM with no XFS mounts and disposable data. Context beats score.

Vulnerability Scanners Will Overstate and Understate This at the Same Time

CVE-2026-43053 is tailor-made for noisy vulnerability reporting. Broad CPE ranges can flag fleets that have already received backported fixes. Kernel version comparisons can miss custom or vendor-specific patch states. Asset systems may know that a machine runs Linux but not whether it mounts XFS, uses extended attributes heavily, or hosts workloads that can trigger inode inactivation under crash conditions.

That means scanners may overstate the number of affected systems. They may also understate operational risk by presenting the CVE as a medium local issue when the exposed asset is a storage-critical production host. The database does not know which machine holds the only copy of last quarter’s archive or which container node backs a customer-facing service.

A good triage workflow should therefore enrich scanner output with local facts. Which systems run XFS? Which kernels include the relevant commits? Which hosts are multi-user or multi-tenant? Which ones have a realistic path to untrusted local workloads? Which ones have maintenance windows soon enough to make this a normal patch rather than an exception?
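One way to picture that enrichment is a small decision function over per-host facts. Everything here is an illustrative assumption: the `HostFacts` fields, the bucket names, and the 14-day threshold are placeholders for whatever a team's own inventory and patch policy define.

```python
from dataclasses import dataclass


@dataclass
class HostFacts:
    name: str
    mounts_xfs: bool                 # from mount inventory, not from the scanner
    kernel_has_fix: bool             # from vendor advisory or commit check
    multi_tenant: bool
    untrusted_local_workloads: bool
    days_to_next_window: int         # days until the next planned maintenance window


def triage(host: HostFacts, max_wait_days: int = 14) -> str:
    """Map local facts to a hypothetical triage bucket."""
    if not host.mounts_xfs:
        return "not-exposed"
    if host.kernel_has_fix:
        return "fixed"
    exposed = host.multi_tenant or host.untrusted_local_workloads
    if exposed and host.days_to_next_window > max_wait_days:
        return "exception-patch"
    return "routine-patch"
```

The ordering mirrors the questions above: filesystem exposure first, then patch state, then who can run local workloads, and only then the calendar.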

This is also a reminder that “known affected software configurations” sections in CVE databases are not asset inventories. They are vulnerability metadata. Treating them as a substitute for environment knowledge is how teams either waste emergency cycles or miss the one server that actually matters.

The best administrators will turn this CVE into a small test of their Linux visibility from a Windows-centered console. If the answer to “where do we use XFS?” takes an afternoon of Slack archaeology, the CVE has already found a process bug.

The Repair Message Is Not the Same as Data Loss

The scary string in the report is “metadata corruption detected.” That phrase tends to flatten nuance because users hear corruption and think destroyed files. In this case, the kernel is reporting metadata it cannot verify during recovery, and the recommended response is to unmount and run xfs_repair.

That is not good news, but it is not the same as a confirmed silent-data-corruption bug. The verifier is doing its job by refusing to proceed with metadata that fails structural checks. The impact is availability because the filesystem may not mount cleanly until repaired, and repair always carries operational risk depending on what state the disk is in and how current the backups are.

The public description does not claim confidentiality loss or integrity modification by an attacker. NVD’s vector reflects that: confidentiality and integrity are scored as none, availability as high. That distinction is important for response prioritization, insurance reporting, customer communication, and internal risk acceptance.
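For readers who want to see how those metric values produce the 4.7 figure, here is a sketch of the CVSS 3.1 base-score arithmetic for the unchanged-scope case. The vector string in the usage note is spelled out from the metric values NVD lists; the function names are illustrative, and changed-scope vectors are deliberately not handled.

```python
import math

# Metric weights from the CVSS v3.1 specification (PR values are for unchanged scope).
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},
    "AC": {"L": 0.77, "H": 0.44},
    "PR": {"N": 0.85, "L": 0.62, "H": 0.27},
    "UI": {"N": 0.85, "R": 0.62},
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},
}


def roundup(value: float) -> float:
    """Round up to one decimal place using the integer method from the v3.1 spec."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score_unchanged_scope(vector: str) -> float:
    """Compute the CVSS 3.1 base score for a vector with S:U."""
    metrics = dict(part.split(":", 1) for part in vector.split("/"))
    if metrics.get("S") != "U":
        raise ValueError("this sketch only handles unchanged scope (S:U)")
    w = {name: WEIGHTS[name][metrics[name]] for name in WEIGHTS}
    iss = 1 - (1 - w["C"]) * (1 - w["I"]) * (1 - w["A"])
    impact = 6.42 * iss
    exploitability = 8.22 * w["AV"] * w["AC"] * w["PR"] * w["UI"]
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))
```

Calling `base_score_unchanged_scope("AV:L/AC:H/PR:L/UI:N/S:U/C:N/I:N/A:H")` returns 4.7. The impact term comes entirely from availability, while the high attack complexity and local vector hold the exploitability term near 1.0, which is why the bug scores medium even though the availability impact is rated high.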

Still, “not silent data theft” should not become “not important.” Filesystems are trust machines. When recovery stops because metadata no longer satisfies the rules, administrators face downtime and uncertainty. In many environments, that is enough to move a medium CVE onto the near-term maintenance board.

The Kernel Community Fixed a Narrow Bug With Broad Implications

One reason Linux filesystem CVEs can appear so arcane is that they expose the machinery most users never see. The bug is not in a web parser, authentication layer, or network daemon. It is in how a filesystem tears down a particular form of extended-attribute tree while preserving crash consistency across journal replay.

Yet these are the bugs that separate mature filesystems from toy projects. XFS has decades of engineering behind it, extensive verification, and a long history in demanding environments. The existence of CVE-2026-43053 does not contradict that reputation. If anything, the fix shows the standard expected of production filesystems: intermediate states must be safe, recovery must be deterministic, and verifiers must remain strict.

The broader industry lesson is that storage reliability and security have fused. A crash-consistency bug becomes a CVE because an unprivileged or low-privileged local actor may contribute to an availability failure. A filesystem repair event becomes a security incident if it affects service guarantees. A kernel patch becomes a Windows operations concern because the estate is hybrid whether or not the org chart admits it.

This is also why vendor metadata matters. The CVE arrived from kernel.org, was published by NVD on May 1, received NIST analysis on May 7, and is visible through Microsoft’s security ecosystem. The patch trail and advisory trail together tell administrators not just that a bug exists, but where to look for the fixed code path.

The Real Checklist Fits on One Screen

For all the internals, the administrator’s response should be compact. CVE-2026-43053 is a storage-availability issue in Linux XFS, not a Windows desktop panic, and the right response is to identify real exposure before assigning urgency.

- Systems that do not run Linux kernels with XFS mounts are not the practical center of this CVE.

- Systems that run XFS on production, multi-user, multi-tenant, backup, database, or container hosts deserve faster review than the CVSS score alone may suggest.

- Vendor kernels should be checked through distribution advisories and package changelogs because backports can make upstream version numbers misleading.

- Custom kernels should be checked directly for the two upstream stable fixes referenced by the CVE record.

- Maintenance plans should include a reboot into the fixed kernel and a quick validation that XFS volumes mount cleanly afterward.

- Backup and repair runbooks should be verified before anyone discovers they are stale during an actual metadata recovery event.
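The vendor-kernel item in the checklist can be partly automated by searching package changelog text for the CVE identifier. The sketch below assumes the changelog has already been captured as text (for example from `rpm -q --changelog kernel` on RPM-based systems); the helper name is hypothetical. Vendors do not always cite CVE IDs in changelogs, so a miss is a prompt to read the advisory, not proof of exposure.

```python
import re


def changelog_mentions_cve(changelog_text: str, cve_id: str) -> bool:
    """Return True if the exact CVE identifier appears in the changelog text."""
    # Guard both ends so CVE-2026-43053 does not match CVE-2026-430530 or similar.
    pattern = re.compile(
        r"(?<![\w-])" + re.escape(cve_id) + r"(?!\d)",
        re.IGNORECASE,
    )
    return bool(pattern.search(changelog_text))
```

A hit tells an administrator which package build to look at; confirming that the running kernel is that build still requires comparing it with the booted version after the reboot.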

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center