Microsoft 365 users across North America endured a prolonged, high-impact disruption on January 22–23, 2026, as core services including Outlook, Exchange Online, OneDrive, Microsoft Defender, and Microsoft Purview were intermittently unavailable or sluggish for nearly ten hours while engineers worked to restore affected infrastructure and rebalance traffic across it.

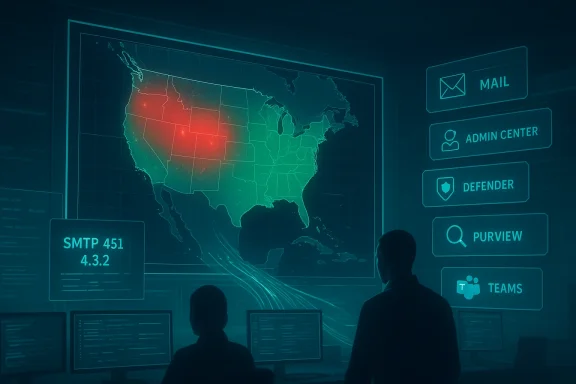

Background

The incident began in mid-afternoon Eastern Time on January 22, 2026, when system telemetry and user reports simultaneously spiked. Microsoft logged an active incident under identifier MO1221364 and acknowledged that “a portion of dependent service infrastructure in the North America region isn’t processing traffic as expected.” That failure cascaded into visible customer symptoms: external mail deliveries returned SMTP 4xx temporary errors (commonly seen as 451 4.3.2 temporary server error responses), tenant administrators reported trouble accessing the Microsoft 365 admin console, and security and governance portals such as Microsoft Defender and Microsoft Purview became intermittent or inaccessible for many customers.

Public outage-trackers showed a rapid escalation of complaints during the afternoon and evening, with reported peaks varying by snapshot and source: figures ranged from several thousand reports to mid‑teens of thousands at the incident’s peak. Over the following hours Microsoft implemented targeted remediation actions, chiefly traffic rebalancing to route load away from the degraded infrastructure. The company reported that access was restored and mail flow stabilized early on January 23 UTC, although a minority of tenants reported lingering delivery delays and portal access problems for a period after the official update.

What failed and why it mattered

Services affected

- Exchange Online / Outlook: Primary symptoms were delayed or blocked receipt of external email, SMTP 4xx errors during delivery attempts, and timeouts when users tried to send or receive messages.

- Microsoft 365 Admin Center: Administrators reported difficulty viewing the service health dashboard and managing tenant-level settings during the incident window.

- Microsoft Defender & Microsoft Purview: Security and compliance portals showed degraded responsiveness or were intermittently inaccessible, reducing visibility into threats and retention/labeling controls.

- OneDrive / SharePoint: Search and file-access operations degraded for some tenants, hampering collaboration and access to important documents.

- Microsoft Teams (partial): Some tenants reported inability to create chats, add members, or see presence information in affected sections of the environment.

The technical root described by Microsoft

Microsoft’s public statements during the incident focused on traffic-processing abnormalities inside a subset of North American infrastructure. The immediate remediation consisted of:

- Restoring degraded infrastructure components to a healthy state.

- Implementing traffic rebalancing to shift requests away from affected sections and distribute load more broadly.

- Incremental verification of telemetry to ensure the environment entered a balanced, stable state.
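The rebalancing step above can be pictured with a toy model. The sketch below is not Microsoft's mechanism, which has not been published in detail; it assumes a hypothetical pool of equally sized nodes and simply moves the load of a degraded node onto the healthy ones in proportion to what they already carry. The node names, load figures, and the `capacity` threshold are all illustrative assumptions.

```python
def rebalance(load, degraded):
    """Shift load from degraded nodes onto healthy ones, proportionally.

    load:     mapping of node name -> current load (arbitrary units)
    degraded: set of node names that should stop receiving traffic
    """
    healthy = {node: units for node, units in load.items() if node not in degraded}
    if not healthy:
        raise ValueError("no healthy nodes left to absorb traffic")

    displaced = sum(units for node, units in load.items() if node in degraded)
    healthy_total = sum(healthy.values())

    result = {}
    for node, units in healthy.items():
        # Fall back to an even split if the healthy nodes were idle.
        share = units / healthy_total if healthy_total else 1 / len(healthy)
        result[node] = units + displaced * share
    for node in load:
        if node in degraded:
            result[node] = 0.0
    return result


def overloaded(load, capacity):
    """Return the sorted names of nodes whose load exceeds the capacity."""
    return sorted(node for node, units in load.items() if units > capacity)


# Hypothetical pool: four nodes at 100 units each, one of them degraded.
before = {"a": 100.0, "b": 100.0, "c": 100.0, "d": 100.0}
after = rebalance(before, degraded={"d"})
print(after)
print(overloaded(after, capacity=120.0))
```

In this toy pool each surviving node ends up carrying about a third more traffic than before, which is why a rebalancing step is usually followed by the kind of incremental telemetry checks described in the last bullet.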

Timeline (concise, with absolute times)

- Early-to-mid afternoon ET (around 14:33 ET / 19:33 UTC): First official incident entry and public acknowledgement under MO1221364; users began seeing 451 4.3.2 SMTP errors and admin portal timeouts.

- Afternoon through evening ET: Spike in user reports on outage-tracking services; troubleshooting and incremental mitigation (traffic rerouting/load balancing) in progress.

- Overnight (around 05:33 UTC on Jan 23): Microsoft reported access restored and mail flow stabilized; engineers continued load-balancing and monitoring.

- Shortly thereafter: Microsoft confirmed the incident impact had been resolved, although some tenants reported lingering issues.
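As a quick check on the timeline arithmetic, the two approximate timestamps quoted above can be converted and subtracted directly. The sketch assumes Eastern Standard Time (UTC-5), which applies in January, and treats the "around" times as exact for the sake of the calculation.

```python
from datetime import datetime, timedelta, timezone

# Eastern Standard Time is UTC-5 in January (no daylight saving).
EST = timezone(timedelta(hours=-5), "EST")

acknowledged = datetime(2026, 1, 22, 14, 33, tzinfo=EST)
restored = datetime(2026, 1, 23, 5, 33, tzinfo=timezone.utc)

acknowledged_utc = acknowledged.astimezone(timezone.utc)
duration = restored - acknowledged  # aware datetimes subtract correctly across zones

print(acknowledged_utc.isoformat())  # 2026-01-22T19:33:00+00:00
print(duration)                      # 10:00:00
```

The result of ten hours matches the "nearly ten hours" figure, given that both timestamps are approximate.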

The customer impact: real-world consequences

The outage illustrated how heavily many businesses depend on a single vendor’s cloud tenancy. Observed and reported consequences included:

- Blocked or delayed client communications for customer‑facing businesses, including time-sensitive financial and legal correspondence.

- Reduced visibility into security telemetry and compliance controls while Defender and Purview portals were degraded.

- Lost productivity as workers could not access files in OneDrive/SharePoint or coordinate via Teams.

- Increased load on help desks and support queues; admins scrambled to triage incidents with limited visibility because tenant admin consoles were also affected.

- Secondary impacts where third-party systems integrated with Microsoft 365 experienced failures (e.g., vendors that rely on Exchange for notifications).

Why the outage escalated: technical analysis

Large cloud platforms are engineered with resilience in mind: redundancy, regional failover, and automated traffic distribution are core design principles. Still, several factors make outages at hyperscale providers particularly disruptive:

- Shared infrastructure dependencies: Many SaaS features rely on a small set of routing and processing components. When those components falter, multiple services appear to fail at once.

- Stateful routing and session affinity: Some mail and portal flows depend on stateful connections. If sessions cannot be migrated smoothly away from degraded nodes, customers see transient failures.

- Telemetry and control-plane coupling: If tenant admin portals or status dashboards are hosted on the same systems or rely on the same routing fabric as production traffic, administrators lose both the service and the means to observe it — complicating triage.

- Load rebalancing complexity: While rerouting traffic is a logical mitigation, achieving a balanced state across thousands of physical nodes and network paths is non-trivial. Rebalancing itself can generate transient capacity pressure elsewhere.

- External DNS and MX interactions: SMTP is an inherently distributed protocol. When Mail Exchange (MX) delivery attempts receive repeated 4xx responses, sending systems queue and retry messages — producing delayed delivery and confusing administrators who see queued mail reports from external providers.
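The queue-and-retry behaviour described in the last bullet can be sketched in a few lines. SMTP reply codes in the 4xx range signal a transient failure that the sender should retry, while 5xx codes signal a permanent failure. The retry schedule below (first retry after 30 minutes, doubling up to a 4-hour cap) is an illustrative assumption; real mail servers use their own schedules.

```python
def classify_reply(code):
    """Map an SMTP reply code to how a sending server should treat it."""
    if 200 <= code < 400:
        return "ok"          # positive completion or intermediate reply
    if 400 <= code < 500:
        return "transient"   # e.g. 451: keep the message queued and retry later
    if 500 <= code < 600:
        return "permanent"   # give up and return a bounce to the sender
    raise ValueError(f"not an SMTP reply code: {code}")


def retry_times(window_min, first_delay_min=30, factor=2, cap_min=240):
    """Minutes after the first failure at which retries fall within a window.

    All defaults are hypothetical values chosen for illustration.
    """
    times = []
    elapsed, delay = 0, first_delay_min
    while elapsed + delay <= window_min:
        elapsed += delay
        times.append(elapsed)
        delay = min(delay * factor, cap_min)
    return times


print(classify_reply(451))
print(retry_times(600))  # retries that would land inside a 10-hour window
```

Under these assumed settings a message first rejected at the start of a ten-hour outage would be retried only four times before service returned, with the last retry at the 450-minute mark. That gap between retries is one reason external senders reported delays that outlasted the official resolution.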

What Microsoft did and what it said

During the outage Microsoft posted incremental updates to its public incident channel and the Microsoft 365 admin center. The core messages described:

- An initial investigation and active monitoring of customer-impacted scenarios.

- Identification of the affected portion of North American infrastructure that was not processing traffic as expected.

- Execution of a recovery plan that involved restoring the infrastructure to a healthy state and rerouting traffic.

- Reassurances that mail flow was stable after remediation and confirmation that impact had been resolved.

Cross-checkable facts and where reporting varies

- The incident was tracked under the identifier MO1221364 and began in mid-afternoon Eastern Time on Jan 22, 2026. Multiple independent monitoring snapshots and Microsoft’s own service health entries confirm this.

- Error messages commonly reported by customers during the outage included SMTP 4xx / 451 4.3.2 temporary server errors when attempting to send or receive mail; this symptom was widely reported by administrators.

- Public outage trackers showed a range for peak complaint counts: snapshots vary widely (approximately 8,000–16,000 reports at different times). Differences stem from timing, geographic coverage, and the sampling method used by those services.

- Microsoft’s remediation centered on traffic rebalancing and restoring affected infrastructure; it declared the impact resolved roughly ten hours after the initial acknowledgement, although a minority of customers reported residual edge cases afterwards.

The broader context and pattern risk

This outage did not occur in isolation. Over the past months large-scale providers have experienced several multi-hour incidents that emphasized shared-risk constraints in massive cloud platforms and the global economy’s dependence on a small number of vendor-operated infrastructures.

Key points in context:

- Outages tend to draw outsized attention because mainstream productivity relies on always-on email and collaboration.

- When the administrative and status consoles are impacted alongside production services, customers lose both service and visibility, increasing frustration and operational risk.

- Platform-wide incidents reinforce the importance of cross-checks, diversification, and robust incident-response playbooks for critical businesses.

Strengths shown by Microsoft during this incident

- Rapid detection and public acknowledgement: Microsoft posted an incident entry quickly and maintained a public incident identifier that tenants could reference.

- Clear remediation steps: The mitigation (restore affected nodes, rebalance traffic) was standard, sensible, and ultimately effective.

- Scale of containment: Recovery actions appeared to limit the outage to a defined region rather than trigger a wider global disruption.

- Post-incident stability verification: Microsoft explicitly monitored mail flow and telemetry before declaring impact resolved, reducing the chance of a premature resolution statement.

Risks and shortcomings exposed

- Single-vendor dependency: Organizations that trust a single cloud provider for email, identity, and security lose multiple capabilities when that provider’s infrastructure degrades.

- Visibility blind spots: When admin consoles are impacted, tenant administrators lack the tools to monitor or partially remediate tenant-specific issues.

- Recovery time and scale: Nearly ten hours to reach widespread restoration is a long window for many businesses; the iterative nature of traffic rebalancing can stretch recovery time.

- Inconsistent public metrics: Variable counts on public outage trackers complicate the assessment of actual impact and may amplify uncertainty among customers.

- Communications granularity: High-level remediation statements are useful, but enterprise customers will expect precise root-cause analyses and concrete corrective actions in the post-incident report.
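To put the "Recovery time and scale" point in numbers, the sketch below converts an outage length into monthly availability and compares it with a target. The 99.9% target is an illustrative assumption, not a figure from this report, and the calculation treats the whole ten hours as downtime even though many tenants saw only intermittent degradation.

```python
def monthly_availability(outage_hours, days_in_month=31):
    """Availability for the month, as a percentage, given total outage hours."""
    total_hours = days_in_month * 24
    return 100.0 * (1 - outage_hours / total_hours)


def allowed_downtime_hours(target_pct, days_in_month=31):
    """Hours of downtime a month can absorb while still meeting the target."""
    return days_in_month * 24 * (1 - target_pct / 100)


print(round(monthly_availability(10), 2))        # 98.66
print(round(allowed_downtime_hours(99.9), 3))    # 0.744
```

On these assumptions a single ten-hour outage in a 31-day month leaves availability at about 98.66%, while a 99.9% target allows roughly 45 minutes of downtime for the whole month.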

Practical guidance for IT teams and administrators

- Prioritise resilient email flows

  - Maintain an external monitoring pipeline for SMTP delivery (testing from outside the tenancy).

  - Use secondary MX routing or mail relays for critical inbound flows where compliance and continuity require near‑zero acceptance gaps.

- Plan for admin-console loss

  - Ensure at least two forms of out-of-band administrative access (for example, mobile admin apps, secondary emergency accounts, or alternative management pathways).

  - Pre-publish incident runbooks with vendor-agnostic steps that do not rely on the provider console.

- Harden communications and incident response

  - Predefine escalation contacts with your cloud provider and keep up-to-date incident-contact paths.

  - Run tabletop exercises for vendor-wide outages that simulate loss of both admin and production portals.

- Diversify critical controls

  - Consider third-party monitoring, email continuity services, or hybrid deployment models to maintain essential inbound/outbound communications during vendor outages.

  - Implement multi-layered data protection (e.g., third-party backups, exportable journaling) for compliance-critical mailflows.

- Measure and negotiate SLAs

  - Understand the provider’s SLA scope and what remediation or credits apply in prolonged incidents.

  - Negotiate contractual transparency and post-incident reporting commitments if email and security services are business-critical.
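The external SMTP monitoring suggested in the first item could start from something as small as the probe below, run from a host outside the tenancy. It is a minimal sketch using Python's standard `smtplib`: it connects to a mail host, sends `EHLO`, and reports whether the reply was healthy, transient (4xx), permanent (5xx), or whether the host was unreachable. The `ProbeResult` type, the `probe_smtp` function, the `smtp_factory` parameter (there to make the probe testable without a network), and the host name `mx.example.com` are all hypothetical names introduced for illustration.

```python
import smtplib
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProbeResult:
    host: str
    status: str            # "ok", "transient", "permanent", or "unreachable"
    code: Optional[int]    # SMTP reply code, if one was received
    detail: str


def _status_for(code):
    if 200 <= code < 400:
        return "ok"
    return "transient" if 400 <= code < 500 else "permanent"


def _text(value):
    return value.decode(errors="replace") if isinstance(value, bytes) else str(value)


def probe_smtp(host, port=25, timeout=10.0, smtp_factory=smtplib.SMTP):
    """Connect to an SMTP host, send EHLO, and summarise the reply."""
    try:
        client = smtp_factory(host, port, timeout=timeout)
    except smtplib.SMTPResponseException as exc:
        # The server answered, but not with a 220 greeting (for example a 4xx).
        return ProbeResult(host, _status_for(exc.smtp_code), exc.smtp_code,
                           _text(exc.smtp_error))
    except OSError as exc:
        # DNS failure, refused connection, timeout, or dropped connection.
        return ProbeResult(host, "unreachable", None, str(exc))

    try:
        code, message = client.ehlo()
        return ProbeResult(host, _status_for(code), code, _text(message))
    except (smtplib.SMTPException, OSError) as exc:
        return ProbeResult(host, "unreachable", None, str(exc))
    finally:
        try:
            client.quit()
        except (smtplib.SMTPException, OSError):
            pass


# Example call (placeholder host name; replace with your own MX host):
# print(probe_smtp("mx.example.com"))
```

A greeting-level probe like this only shows that the front door answers. Errors such as the 451 responses seen in this incident may appear later in the SMTP conversation, so a fuller monitor would also submit a test message to a dedicated mailbox and confirm that it arrives.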

What enterprises should demand in post-incident reporting

Companies that rely on hosted services should expect vendor reports to include:

- A clear, technical root-cause summary (not just high-level phrasing).

- A timeline of detection, remediation steps, and verification checkpoints.

- Concrete corrective actions and changes to prevent recurrence.

- Service‑specific impact detail (which functions and tenants were affected and why).

- An assessment of customer-visible residual risks and recommended mitigations.

The strategic takeaway for CIOs and IT decision-makers

This outage reinforces several strategic priorities:

- Resilience over convenience: Convenience of an all-in-one cloud stack must be balanced with contingency planning that tolerates partial or complete outages.

- Operational transparency matters: Vendors that provide granular, timely operational telemetry and clear remediation commitments help customers recover faster and make better business decisions during incidents.

- Prepare for imperfect restoration: Even after a vendor declares an incident resolved, residual effects sometimes linger; plan for staged resumption of operations rather than an immediate binary return to normal.

Conclusion

The January 22–23 Microsoft 365 disruption was a textbook example of how a localized infrastructure fault can cascade through a complex, interconnected cloud ecosystem and create widespread business impact. Microsoft’s remediation, focused on restoring affected nodes and rebalancing traffic, ultimately succeeded, but the incident exposed persistent enterprise risks: concentrated vendor dependency, loss of admin visibility during outages, and the prolonged time-to-stability that load-rebalancing mitigations can entail.

For IT leaders, the immediate lesson is practical: assume that any single cloud provider, however reliable in normal operation, can suffer extended outages; plan accordingly. Implement layered defenses for inbound communications and administrative access, rehearse outage scenarios, and insist on post-incident transparency that provides the technical detail needed to assess risk and prevent recurrence.

The incident also carries a broader industry message: resilience in an era of hyperscale cloud requires both provider-level engineering rigor and customer-side architectural diversity. Until architectures and commercial relationships evolve to reduce single‑vendor systemic risk, extended outages like this will remain consequential events for businesses and their customers.

Source: theregister.com Microsoft 365 outage drags on for nearly 10 hours