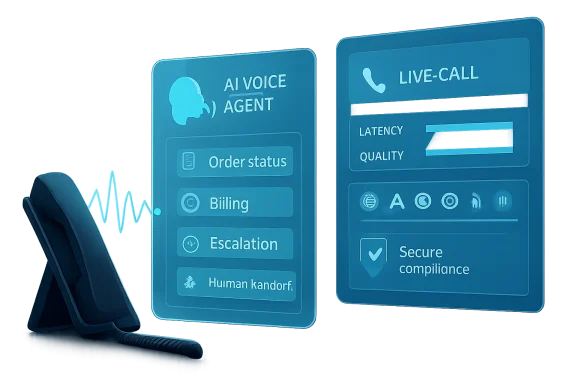

Microsoft is moving its customer-service AI strategy from scripted chat and menu-driven phone trees into real-time voice agents that can listen, reason, respond, and act during live calls. The company says the capability is now generally available in Microsoft Copilot Studio for Dynamics 365 Contact Center customers in North America, positioning voice as the next major frontier for enterprise AI automation. The announcement matters because phone support remains one of the most expensive, emotional, and operationally fragile parts of customer experience, and Microsoft is betting that low-latency speech-to-speech agents can modernize it without forcing businesses to abandon existing contact center controls.

Overview

For years, organizations have tried to reduce call center pressure with interactive voice response systems, scripted bots, call deflection, and web self-service portals. Those systems can handle predictable tasks, but they often fail when customers interrupt, change direction, express frustration, or combine several requests in one conversation. Microsoft’s new real-time voice agents are designed to address that gap by letting callers speak more naturally while the agent keeps track of context and takes action through connected systems.

The launch also reflects a broader shift in Microsoft’s business applications strategy. Copilot Studio began as a low-code environment for building conversational agents, but Microsoft increasingly treats it as the control plane for enterprise AI workers that can appear across chat, apps, websites, and now live voice channels. The company says more than 80% of Fortune 500 companies now have active agents built using its low-code and no-code tools, giving it a large installed base for voice expansion.

Historically, Microsoft’s contact center ambitions leaned on several foundations: Dynamics 365 Customer Service, the acquisition of Nuance, Azure Communication Services, Teams Phone, and the broader Copilot ecosystem. The new real-time voice layer pulls those threads together around a more ambitious goal: not just routing calls, but resolving issues while the conversation is still unfolding. That is a meaningful distinction, because a caller’s tolerance for delay is far lower on the phone than in a chat window.

The initial general availability is deliberately bounded. Real-time voice agents are available through Dynamics 365 Contact Center in North America, while Microsoft says language support, additional regions, and broader channels will expand over time. That staged rollout is prudent, because real-time speech AI introduces new requirements around latency, data movement, compliance, escalation, and user trust.

Why Voice Support Is Changing

Voice never disappeared from customer service, even as companies pushed customers toward apps, portals, and messaging. People still call when the issue is urgent, confusing, financially sensitive, or emotionally charged. That makes voice both a cost center and a trust channel, which is why bad automation can damage a brand faster over the phone than almost anywhere else.

From IVR Menus to Adaptive Conversations

Traditional IVR systems were built for predictability. They ask callers to choose options, confirm inputs, and move through predesigned paths. That architecture works well for balance checks, office hours, simple routing, and other high-volume interactions where the caller’s goal is narrow.

The problem is that many real conversations are messy. A customer may begin by asking about a bill, then mention a payment failure, then ask why a promotional credit disappeared. In a rigid system, every new detail risks sending the caller back to the beginning.

Real-time voice agents are meant to reduce that reset problem. Instead of forcing customers to speak in menu-friendly phrases, they can interpret intent, maintain state, and use tools during the call. The important change is not simply voice recognition; it is combining voice recognition with reasoning, workflow execution, and context retention.

Key forces pushing this transition include:

- Rising call volumes across service-heavy industries.

- Higher customer expectations shaped by instant digital experiences.

- Labor pressure in contact centers with high turnover and training costs.

- Fragmented customer data spread across CRM, billing, order, and scheduling systems.

- Growing demand for multilingual support without duplicating every call flow.

- Executive pressure to prove AI return on investment beyond internal productivity demos.

What Microsoft Is Actually Launching

Microsoft describes the new capability as a premium mode within voice agents, optimized for low-latency, interruptible, speech-to-speech conversations. In practical terms, that means the agent does not simply transcribe a caller, send text to a model, generate text, and then read it aloud as a disconnected sequence. The system is designed around streaming audio and responsive turn-taking.

A New Mode Inside Copilot Studio

The key product move is that real-time voice agents are authored in Copilot Studio and deployed through Dynamics 365 Contact Center. That allows business users and IT teams to build agents with familiar Copilot Studio concepts such as instructions, topics, tools, and knowledge sources. It also lets service leaders connect the voice experience to contact center routing, escalation, and operational oversight.

This is not a total replacement for existing voice automation. Microsoft is positioning real-time voice as an option for scenarios where the caller needs a more fluid exchange. Structured flows still matter for compliance, authentication, payment capture, eligibility checks, and other deterministic steps.

The distinction between basic voice and real-time voice will matter for deployment planning. Basic voice agents can still serve traditional IVR-like interactions, while real-time voice agents target conversations where interruption handling, natural pauses, and context switching are essential. The best implementations will probably blend both modes rather than treat one as universally superior.

Capabilities Microsoft is emphasizing include:

- Natural language understanding without requiring exact menu phrases.

- Speech-to-speech responsiveness for more fluid call handling.

- Context awareness across previous turns in the conversation.

- Tool and workflow invocation for retrieving or updating business data.

- Deterministic topics for governed steps such as escalation or business rules.

- Multilingual support with formally validated languages expanding over time.

- Human handoff through Dynamics 365 Contact Center when judgment is required.

The Technical Architecture Behind the Call

Real-time voice support is technically unforgiving because callers evaluate it at human speed. A chatbot can pause for a few seconds and still feel acceptable; a phone agent that hesitates too long feels broken. Microsoft’s architecture reflects that by using a real-time multimodal model, Copilot Studio configuration, and Dynamics 365 Contact Center telephony orchestration.

Speech-to-Speech, Not Just Text Read Aloud

The real-time model processes streaming caller audio and produces spoken responses in real time. That approach is intended to reduce the latency introduced by older pipeline designs that separately perform speech recognition, text reasoning, and text-to-speech generation. The difference may sound subtle, but in a live call it affects whether the agent feels responsive or mechanical.

Copilot Studio remains the authoring layer. Makers define instructions, attach knowledge, configure tools, and create deterministic topics. Dynamics 365 Contact Center provides telephony integration, routing, and the environment where the voice agent participates in customer service workflows.

A useful way to understand the architecture is as a layered system:

- Telephony layer handles the inbound call and routing.

- Real-time audio layer streams speech input and output.

- Model layer interprets intent and generates spoken responses.

- Copilot Studio layer governs instructions, topics, tools, and knowledge.

- Business systems layer connects CRM, billing, scheduling, order, or case data.

- Contact center layer manages escalation, reporting, and human representative handoff.

Microsoft’s documentation also highlights voice activity detection, or VAD, as a configurable element. Server-based VAD responds based on silence and sound patterns, while semantic VAD tries to judge whether the caller has completed a thought. That matters because premature interruption is one of the fastest ways for an AI voice agent to feel rude or incompetent.
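To make the silence-based style concrete, here is a minimal sketch of end-of-turn detection driven only by per-frame audio energy. It is not Microsoft's implementation: the 20 ms frame length, the energy threshold, the 500 ms silence window, and the `detect_end_of_turn` name are all illustrative assumptions. A semantic VAD would additionally need a model to judge whether the utterance is complete, which this sketch does not attempt.

```python
from typing import Optional, Sequence

FRAME_MS = 20  # assumed duration of one audio frame (hypothetical value)


def detect_end_of_turn(
    frame_energies: Sequence[float],
    energy_threshold: float = 0.1,
    silence_ms: int = 500,
) -> Optional[int]:
    """Return the index of the frame where the caller's turn is judged over.

    The turn ends once speech has been heard and is followed by at least
    `silence_ms` of consecutive frames below `energy_threshold`.
    Returns None if no such pause has occurred yet.
    """
    frames_needed = silence_ms // FRAME_MS
    heard_speech = False
    silent_run = 0
    for i, energy in enumerate(frame_energies):
        if energy >= energy_threshold:
            # Speech frame: remember we heard the caller and reset the pause.
            heard_speech = True
            silent_run = 0
        elif heard_speech:
            # Quiet frame after speech: extend the current pause.
            silent_run += 1
            if silent_run >= frames_needed:
                return i
    return None
```

The trade-off the article describes shows up directly in `silence_ms`: a shorter window makes the agent answer sooner but raises the chance of cutting off a caller who is only pausing to think.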

High-Volume Use Cases Microsoft Is Targeting

Microsoft is aiming these agents at everyday business-to-consumer calls rather than rare edge cases. The strategic logic is clear: if AI voice can safely handle repetitive, high-volume interactions, it can produce measurable savings while freeing human agents for complex work. The risk is that these interactions often involve money, identity, eligibility, or customer frustration.

Where Real-Time Voice Makes Sense First

The strongest early use cases are those with clear business data, repeatable outcomes, and well-defined escalation paths. For example, an order-status call can start with identity confirmation, retrieve live shipping information, and then branch into a return, delivery change, or escalation if the customer reports a missing package. That is a better fit than an open-ended legal, medical, or emotionally sensitive conversation.

Microsoft’s examples point to billing, payments, order support, reservations, healthcare eligibility, telecom verification, appointment scheduling, and membership management. These are not glamorous scenarios, but they are operationally important. They also generate huge volumes of calls where small efficiency gains can matter.

Likely early deployment areas include:

- Billing explanations where customers need clarification, not just a balance.

- Payment assistance with structured confirmation and compliance controls.

- Order and reservation changes that require live system lookups.

- Eligibility checks in sectors such as healthcare, insurance, telecom, and government.

- Appointment scheduling where callers interrupt, revise preferences, or ask follow-up questions.

- Membership and subscription management involving updates, benefits, or cancellations.

- Routine case creation where the agent gathers details before escalation.

Enterprises should also consider which calls are emotionally safe for automation. A flight delay, denied claim, surprise bill, or medical eligibility dispute can become sensitive quickly. In those cases, the agent’s ability to detect escalation signals may matter more than its ability to answer the first question.

Governance, Compliance, and Trust

Microsoft is framing the release around enterprise-grade control, which is necessary because voice automation can create serious compliance exposure. A written chatbot mistake can be reviewed after the fact, but a spoken commitment during a live call may be interpreted by the customer as an official decision. That makes guardrails, auditability, and escalation design central to any rollout.

The Enterprise Control Problem

The company’s responsible AI materials acknowledge that real-time voice agents can produce incorrect confirmations, disclose too much information, misunderstand age or context, and behave inconsistently if prompts and knowledge boundaries are poorly designed. That transparency is welcome, but it also underscores that organizations cannot treat this as a plug-and-play replacement for trained representatives. The hard part is operational design.

Data residency is another major issue. As of April 2026, the real-time voice AI model is hosted in North America only. Customers outside North America must allow cross-geo processing, while EU Data Boundary customers cannot currently use real-time voice because audio would need to be processed through US-hosted models.

That limitation will shape adoption. North American companies can move faster, but multinational enterprises will need to map call flows by region, customer type, and regulatory exposure. A global airline, bank, or healthcare provider may need different automation strategies depending on where the caller, data, and service operation reside.
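For teams mapping call flows by region, the hosting rules described above can be written down as a small decision function. The sketch below only encodes the article's description of the situation as of April 2026; the `Tenant` fields and the `real_time_voice_availability` name are hypothetical and do not correspond to any Microsoft admin API.

```python
from dataclasses import dataclass
from enum import Enum


class Availability(Enum):
    AVAILABLE = "available"
    NEEDS_CROSS_GEO_CONSENT = "needs_cross_geo_consent"
    BLOCKED = "blocked"


@dataclass(frozen=True)
class Tenant:
    # Hypothetical flags describing where a tenant sits; not a real schema.
    in_north_america: bool
    in_eu_data_boundary: bool
    cross_geo_allowed: bool


def real_time_voice_availability(tenant: Tenant) -> Availability:
    """Apply the regional rules as the article describes them."""
    if tenant.in_eu_data_boundary:
        # Audio would have to leave the boundary, so the feature is unavailable.
        return Availability.BLOCKED
    if tenant.in_north_america or tenant.cross_geo_allowed:
        return Availability.AVAILABLE
    # Outside North America without cross-geo processing enabled.
    return Availability.NEEDS_CROSS_GEO_CONSENT
```

A multinational could run a check like this per service region to decide which call flows stay on basic voice or human handling for now.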

Governance priorities should include:

- Clear scope boundaries for what the agent may and may not answer.

- Deterministic controls for payments, commitments, refunds, and eligibility decisions.

- Human escalation triggers for disputes, safety issues, uncertainty, or emotional distress.

- Knowledge scoping to prevent over-disclosure from internal documents.

- Testing across accents, noise conditions, and call quality variations before launch.

- Monitoring of latency, tool failures, containment rates, and customer sentiment after deployment.

- Review of regional data movement and consent requirements before enabling voice AI.
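Several of these priorities (scope boundaries, deterministic controls, escalation triggers) amount to a policy check that runs before the agent acts. The sketch below is one possible shape for such a check, written as plain Python rather than Copilot Studio configuration; the `Policy` fields, action names, and signal names are illustrative assumptions, not product features.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Policy:
    allowed_actions: FrozenSet[str]        # everything the agent may do at all
    deterministic_actions: FrozenSet[str]  # subset that must follow a scripted flow
    escalation_signals: FrozenSet[str]     # signals that force a human handoff


def decide(policy: Policy, action: str, signals: FrozenSet[str]) -> str:
    """Return how a requested action should be handled.

    One of: "escalate", "refuse", "deterministic_topic", "generative".
    """
    if signals & policy.escalation_signals:
        # Disputes, distress, and similar signals override everything else.
        return "escalate"
    if action not in policy.allowed_actions:
        # Out of scope: decline instead of improvising an answer.
        return "refuse"
    if action in policy.deterministic_actions:
        # Payments, refunds, and eligibility go through a governed flow.
        return "deterministic_topic"
    return "generative"


# Example policy with made-up action and signal names.
EXAMPLE_POLICY = Policy(
    allowed_actions=frozenset({"explain_bill", "take_payment", "issue_refund"}),
    deterministic_actions=frozenset({"take_payment", "issue_refund"}),
    escalation_signals=frozenset({"dispute", "distress", "safety"}),
)
```

Checking escalation signals first is a deliberate choice here: a frustrated caller asking an in-scope question is still routed to a person.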

Deployment Considerations for IT Teams

For IT and service operations teams, the new capability is not just another Copilot toggle. It touches telephony, identity, CRM integration, knowledge management, compliance review, analytics, and agent training. That makes deployment a cross-functional project rather than a single administrator task.

Building the First Real-Time Voice Agent

Microsoft’s configuration model requires Dynamics 365 Contact Center with voice channel and call routing, appropriate administrator permissions, Copilot Studio access, and maker permissions for agent creation. Once an agent is configured for real-time voice, switching it back to basic voice requires creating a new agent. That one-way choice makes planning and environment separation important.

A sensible rollout should start with a narrow call type. The goal is to validate call quality, tool reliability, customer behavior, and escalation patterns before expanding to more complex journeys. Teams should avoid launching with the most politically visible or emotionally charged support line.

A practical deployment sequence might look like this:

- Select one high-volume, low-risk call flow with clear success criteria.

- Map the existing IVR and human handoff path to identify failure points.

- Define approved actions, forbidden actions, and escalation triggers before authoring prompts.

- Connect only the minimum knowledge and tools required for the first use case.

- Test with realistic audio, including accents, background noise, interruptions, and incomplete information.

- Run a limited production pilot with close monitoring and fast rollback options.

- Expand gradually only after measuring containment, satisfaction, latency, and error patterns.

IT teams should also design for observability from day one. A voice agent failure may appear as a bad answer, a hang-up, a repeated prompt, a failed tool call, or an unnecessary transfer. Without granular telemetry, teams will struggle to distinguish model issues from integration issues.

Competitive Implications for the Contact Center Market

Microsoft is entering a crowded and fast-moving market where Genesys, Amazon Connect, Google Contact Center AI, Cisco, Talkdesk, Five9, and specialized voice AI vendors are all pushing deeper automation. What makes Microsoft’s move notable is not just the voice model; it is the combination of Copilot Studio, Dynamics 365, Teams, Azure, and Microsoft 365 identity. That ecosystem can be a powerful adoption engine.

Microsoft’s Platform Advantage

Enterprises already standardized on Microsoft often prefer fewer vendors and tighter governance. If they use Dynamics 365 Customer Service, Teams Phone, Power Platform, Entra ID, and Microsoft 365, Copilot Studio voice agents may feel like a natural extension rather than a separate AI platform. That is the core competitive advantage.

Amazon Connect has a strong cloud-native contact center story, especially for companies already invested in AWS. Genesys remains deeply entrenched in enterprise customer experience and has mature routing, workforce, and omnichannel capabilities. Google has long emphasized conversational AI, Dialogflow, and contact center intelligence.

Microsoft’s challenge is to prove that its integrated stack can match or exceed best-of-breed contact center depth. Buyers will compare not only model quality, but also uptime, reporting, workforce integration, call routing sophistication, pricing transparency, and global availability. In voice, good enough is not enough if a rival platform delivers more predictable operations.

Competitive differentiators to watch include:

- Depth of Dynamics 365 integration compared with third-party CRM connectors.

- Teams Phone expansion as Microsoft brings voice agents to more channels.

- Power Platform tooling for business-user extensibility.

- Azure AI governance and security posture for regulated industries.

- Global language and regional availability relative to rivals.

- Pricing and consumption models for high-volume call environments.

- Partner ecosystem readiness for migration, testing, and managed operations.

Consumer Impact: Better Calls or Better Deflection?

For consumers, the promise is obvious: fewer menus, less repetition, faster resolution, and more natural support. If real-time voice agents can answer questions, update records, and escalate with context, phone support could become less frustrating. The key word is if.

The Customer Experience Test

Customers do not care whether the agent uses a frontier model, semantic VAD, or tool invocation. They care whether it understands them, solves the problem, and gets out of the way when it cannot. A polite synthetic voice that confidently gives the wrong answer is still a bad experience.

The best consumer impact will come when businesses use voice agents to remove friction rather than hide humans. For example, a customer calling to reschedule an appointment should not have to repeat the date three times or navigate a five-step menu. A well-designed real-time voice agent can ask clarifying questions, offer options, and confirm the final change.

However, there is a risk that companies overuse automation to reduce costs while making it harder to reach people. That would undermine trust quickly. The more humanlike these agents sound, the more important it becomes to be clear about what they are and when a caller can transfer.

Customer experience improvements could include:

- Shorter time to resolution for routine issues.

- Less repetition when calls transfer to human representatives.

- More natural language instead of rigid menu commands.

- Better after-hours service for simple requests.

- More consistent answers across common policy questions.

- Improved accessibility for users who prefer speaking over typing.

Potential downsides could include:

- Overconfident wrong answers delivered in a persuasive voice.

- Difficulty reaching a human when the AI misclassifies urgency.

- Poor performance with accents, background noise, or speech impairments.

- Privacy concerns around live audio processing.

- Frustration when agents sound natural but lack authority to resolve the issue.

Enterprise Impact: Cost, Scale, and Workforce Design

For enterprises, real-time voice agents are attractive because contact centers are expensive to staff, train, and manage. Every minute shaved from average handle time, every routine call contained, and every successful self-service interaction can affect margins. But the bigger transformation may be how companies redesign the relationship between AI agents and human representatives.

Human Agents Move Up the Complexity Stack

If AI handles simple verification, status checks, scheduling, and routine data updates, human agents can focus on exceptions, empathy, negotiation, and judgment. That is the optimistic version. The less comfortable version is that companies may try to reduce headcount too quickly and leave remaining staff with a heavier concentration of difficult calls.

This shift will require new workforce planning. Human representatives may receive fewer easy calls and more escalations where the customer is already frustrated. That changes training needs, performance metrics, and mental workload.

Supervisors will also need new analytics. Traditional metrics such as average handle time and abandonment rate will remain useful, but they will not be enough. Teams will need to track AI containment quality, transfer reasons, tool failure rates, escalation timing, and whether the human handoff included usable context.
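As a rough illustration of what those analytics could look like, the sketch below aggregates a few of the named measures from per-call records. The `CallRecord` schema and the `summarize` function are hypothetical, and containment rate is used as a simple proxy for the richer "containment quality" idea; none of this reflects an actual Dynamics 365 reporting schema.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional


@dataclass(frozen=True)
class CallRecord:
    # Hypothetical per-call fields; a real export would differ.
    resolved_by_ai: bool
    transfer_reason: Optional[str]       # None when the call was not transferred
    tool_calls: int
    tool_failures: int
    handoff_had_context: Optional[bool]  # None when the call was not transferred


def summarize(calls: Iterable[CallRecord]) -> Dict[str, Any]:
    """Aggregate AI-specific contact center measures from call records."""
    records = list(calls)
    transfers = [c for c in records if c.transfer_reason is not None]
    total_tool_calls = sum(c.tool_calls for c in records)
    total_tool_failures = sum(c.tool_failures for c in records)
    with_context = sum(1 for c in transfers if c.handoff_had_context)
    return {
        # Share of calls resolved without a human.
        "containment_rate": (
            sum(1 for c in records if c.resolved_by_ai) / len(records)
            if records else 0.0
        ),
        # Share of tool invocations that failed.
        "tool_failure_rate": (
            total_tool_failures / total_tool_calls if total_tool_calls else 0.0
        ),
        # Share of transfers where the human received usable context.
        "handoff_context_rate": (
            with_context / len(transfers) if transfers else 0.0
        ),
        # Why calls were handed to humans, counted by reason.
        "transfer_reasons": Counter(c.transfer_reason for c in transfers),
    }
```

Reading these measures alongside average handle time and abandonment rate helps separate calls the agent genuinely resolved from calls it merely kept away from people.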

Enterprise benefits may include:

- Lower cost per contact for repeatable service interactions.

- Improved scalability during seasonal peaks or outages.

- More consistent policy delivery across distributed teams.

- Reduced onboarding burden for routine call handling.

- Better data capture from structured AI-led conversations.

- Faster experimentation through low-code agent updates.

Strengths and Opportunities

Microsoft’s strongest opportunity is that it can bring real-time voice automation into the same enterprise ecosystem many organizations already use for CRM, collaboration, identity, analytics, and low-code workflow design. That gives it a credible path to mainstream adoption, especially among companies that want AI modernization without stitching together a stack of niche vendors.

- Copilot Studio integration lowers the barrier for teams already building agents.

- Dynamics 365 Contact Center deployment provides a natural operational home for voice workflows.

- Context carryover to human representatives addresses a major customer pain point.

- Hybrid deterministic and generative design helps balance flexibility with control.

- Power Platform extensibility could speed up workflow integration for common business processes.

- North American GA status gives early adopters a production-ready starting point.

- Future Teams Phone expansion could make voice agents relevant beyond classic contact centers.

Risks and Concerns

The risks are not theoretical, because voice AI operates in real time, under emotional pressure, and often around sensitive information. Microsoft’s own transparency materials point to concerns around hallucinations, over-disclosure, latency, tool reliability, regional hosting, and inconsistent behavior across languages or user populations. Enterprises should treat those warnings as implementation requirements, not legal fine print.

- Regional limitations currently constrain global deployment, especially for EU Data Boundary customers.

- Latency sensitivity means even small delays can make the agent feel unnatural.

- Tool failures can derail live conversations unless fallback paths are carefully designed.

- Over-disclosure risks increase when knowledge sources are too broad or poorly scoped.

- Hallucinated commitments could create financial, legal, or reputational exposure.

- Accessibility gaps may appear for callers with accents, impairments, noisy environments, or older devices.

- Over-automation incentives may tempt companies to make human escalation too difficult.

Looking Ahead

The next phase will be about expansion, evidence, and trust. Microsoft says broader regions, more language support, and additional touchpoints are coming over time, with Teams Phone specifically named as part of the roadmap. That could eventually move real-time voice agents beyond contact centers into departmental hotlines, appointment desks, internal help desks, field service operations, and Microsoft 365-connected business workflows.

What to Watch Next

The most important milestones will not be flashy demos. They will be production evidence: lower repeat-call rates, smoother escalations, measurable customer satisfaction gains, and transparent handling of compliance requirements. Enterprises should demand proof that real-time voice agents improve resolution, not just that they reduce human talk time.

Watch these areas closely:

- Regional model hosting expansion beyond North America.

- Validated language coverage for multinational service operations.

- Teams Phone integration and how it changes internal and external voice workflows.

- Pricing clarity for high-volume contact centers with unpredictable call spikes.

- Independent customer case studies showing containment, satisfaction, and escalation quality.

Real-time voice agents in Copilot Studio mark a significant step in Microsoft’s effort to make AI agents useful in high-stakes, customer-facing work. The technology will not eliminate the need for human representatives, and organizations that deploy it as a blunt cost-cutting tool may damage customer trust. But if Microsoft and its customers can combine low-latency speech, governed workflows, reliable escalation, and careful regional compliance, the phone call may finally evolve from a scripted bottleneck into a more adaptive front door for digital service.

Source: Microsoft Extend AI voice support with real‑time voice agents in Copilot Studio | Microsoft Copilot Blog